This blog post is Part II of the WWTSD blog series from Esri Support Services. Click here to view the first part in the series: WWTSD (What Would Tech Support Do?) Part I.

Have you ever run a geoprocessing tool, only to have it fail with a generic error message? Perhaps your data fails to publish to ArcGIS Online or draws incorrectly on your map. You are using the same workflow you use every day, with the same settings and configuration, and you can't seem to find a cause for the problem.

You may be dealing with a data-specific issue. A few basic troubleshooting steps may resolve it, but it all starts with determining whether the issue is truly data-specific.

Determining if an Issue Is Data-specific

A quick test to determine if an issue is data-specific is to bring your dataset into a blank map document, map frame, or web map (depending on the environment in which you are working). If the issue does not persist in a new map, then the issue may be specific to the map document. If you experience the same issue in a new map document, the source of the problem may be the data.

Another way to determine if an issue is data-specific is to run the same process with a different dataset similar to the one that you are using. For instance, if using a point shapefile that fails to import into your file geodatabase, run the process on a different point shapefile of a similar size. If the tool or process succeeds on the new dataset, then the issue may be data-specific. Luckily, there are tools available that can help to resolve some of these data-specific issues.

If you find that the problem or error is reproducible with multiple datasets, you may want to investigate some of our additional resources to determine the source of the issue. Feel free to check out more resources from the first post in the WWTSD series (linked above).
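
The two quick tests above amount to a small decision procedure. The sketch below is only an illustration of that reasoning; the function name `classify_issue` and its return labels are hypothetical and not part of any Esri product.

```python
def classify_issue(fails_in_blank_map: bool, fails_with_similar_data: bool) -> str:
    """Suggest where to look next, based on the two quick tests described above."""
    if not fails_in_blank_map:
        # The data behaves in a fresh map, so the original map document is suspect.
        return "map-specific"
    if fails_with_similar_data:
        # A comparable dataset fails too, so the data itself is probably not the cause.
        return "not data-specific"
    # The problem follows the dataset into a blank map, and a comparable dataset works.
    return "data-specific"


print(classify_issue(fails_in_blank_map=True, fails_with_similar_data=False))
```

A result of "data-specific" points toward the tools described in the rest of this post; "not data-specific" points back to the broader resources from Part I.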

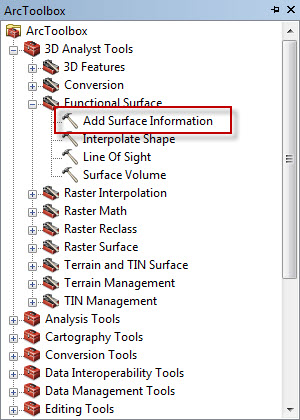

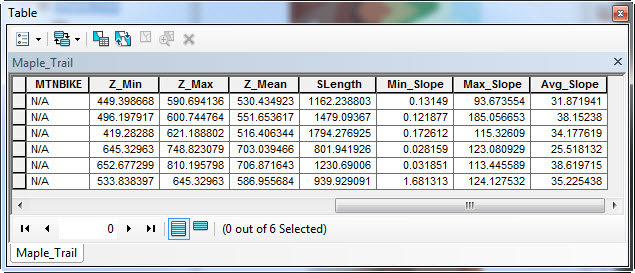

Possible Data-specific Issues and Their Solutions

A geometry error can be one potential source of a data-specific issue that has a quick fix. ArcGIS applications require that a feature's geometry meets certain standards. Issues can occur if any features have null or incorrect geometry. In ArcMap and ArcGIS Pro, you can determine if your dataset has any geometry errors by running the Check Geometry tool, which generates a table that lists the geometry errors found in the data. If there are errors present in the resulting table, run the Repair Geometry tool to fix the geometry errors present in the data. It is recommended to make a copy of your data prior to running this tool, as the tool may delete records with geometry errors.
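
If you prefer to script this check, the same sequence can be run from Python. The following is a minimal sketch, assuming an ArcGIS Python environment where `arcpy` is available; the function name `check_and_repair` and the paths you pass to it are hypothetical placeholders.

```python
def check_and_repair(in_features, backup_copy, error_table):
    """Run Check Geometry, and repair only if problems are reported.

    Returns the number of problems listed before any repair.
    """
    import arcpy  # imported here so the function can be defined without ArcGIS installed

    # Check Geometry writes a table of problems and does not modify the input.
    arcpy.management.CheckGeometry(in_features, error_table)
    problem_count = int(arcpy.management.GetCount(error_table)[0])

    if problem_count > 0:
        # Repair Geometry edits the input in place and may delete records,
        # so keep an untouched copy first.
        arcpy.management.CopyFeatures(in_features, backup_copy)
        arcpy.management.RepairGeometry(in_features, "DELETE_NULL")

    return problem_count
```

A call might look like `check_and_repair(r"C:\data\parcels.shp", r"C:\data\parcels_backup.shp", r"C:\data\parcels_errors.dbf")`, where all three paths are made up for illustration.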

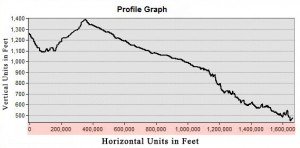

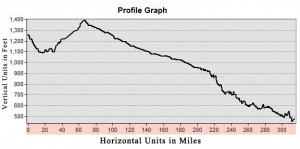

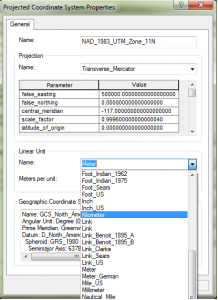

If your features appear in a different location on the globe than you would expect, there may be an issue with your data's projection. You can view the coordinate system of your data by navigating to the properties of the layer. If the data does not have a defined projection, you may need to use the Define Projection tool to assign the correct projection (see the tool documentation here for more information). If your data has been assigned a different projection than the other layers in your map, you may need to use the Project tool (here) to reproject your data into a matching coordinate system. For more information about when to use the Define Projection tool versus the Project tool, take a look at the blog post found here. If you do not know what projection your data should be in, please see the technical article here for more information.
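
The difference between the two tools can be easier to see in a script. This is a rough sketch, assuming `arcpy` is available and that you already know the correct coordinate system for the data; the function name `match_coordinate_system` and the WKID arguments are hypothetical choices for this example.

```python
def match_coordinate_system(in_dataset, out_dataset, known_wkid, target_wkid):
    """Define a missing coordinate system, then reproject if it differs from the map.

    known_wkid:  the coordinate system the coordinates are actually stored in
    target_wkid: the coordinate system used by the other layers in the map
    Returns the path of the dataset to add to the map.
    """
    import arcpy  # imported here so the function can be defined without ArcGIS installed

    current = arcpy.Describe(in_dataset).spatialReference
    if current.name == "Unknown":
        # Define Projection only records the coordinate system; coordinates are unchanged.
        arcpy.management.DefineProjection(in_dataset, arcpy.SpatialReference(known_wkid))
        current = arcpy.Describe(in_dataset).spatialReference

    if current.factoryCode != target_wkid:
        # Project writes a new dataset whose coordinates are transformed.
        arcpy.management.Project(in_dataset, out_dataset, arcpy.SpatialReference(target_wkid))
        return out_dataset
    return in_dataset
```

Note that this sketch does not pass a geographic transformation to Project, which may be needed when the two coordinate systems use different datums.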

Data can become corrupt for various reasons, including incorrectly copying data or experiencing connection issues to a network drive. These issues sometimes can be resolved by exporting the data into a different format or location, such as to a different feature class or to a .tif rather than to a .png raster file. If you are working in a file geodatabase, run the Recover File Geodatabase tool, which creates a new file geodatabase with repaired versions of feature classes that the tool identifies as potentially corrupt.
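
The recovery step can also be scripted. The sketch below assumes `arcpy` is available; the function name `recover_geodatabase` is hypothetical, and the output name (for example `recovered.gdb`) is an assumption for illustration.

```python
import os


def recover_geodatabase(damaged_gdb, output_folder, recovered_name):
    """Run Recover File Geodatabase and return the path of the new geodatabase."""
    import arcpy  # imported here so the function can be defined without ArcGIS installed

    # The tool writes a new geodatabase; the input geodatabase is left as it was.
    arcpy.management.RecoverFileGDB(damaged_gdb, output_folder, recovered_name)
    return os.path.join(output_folder, recovered_name)
```

Because the output is a separate geodatabase, you can compare it against the original before deciding which one to keep using.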

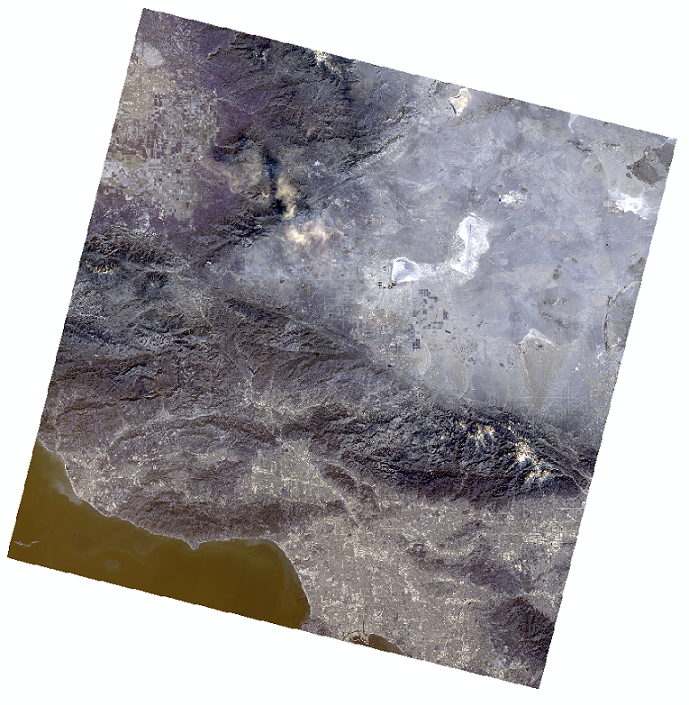

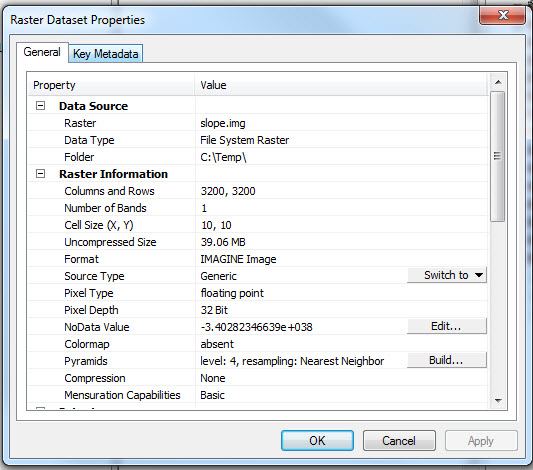

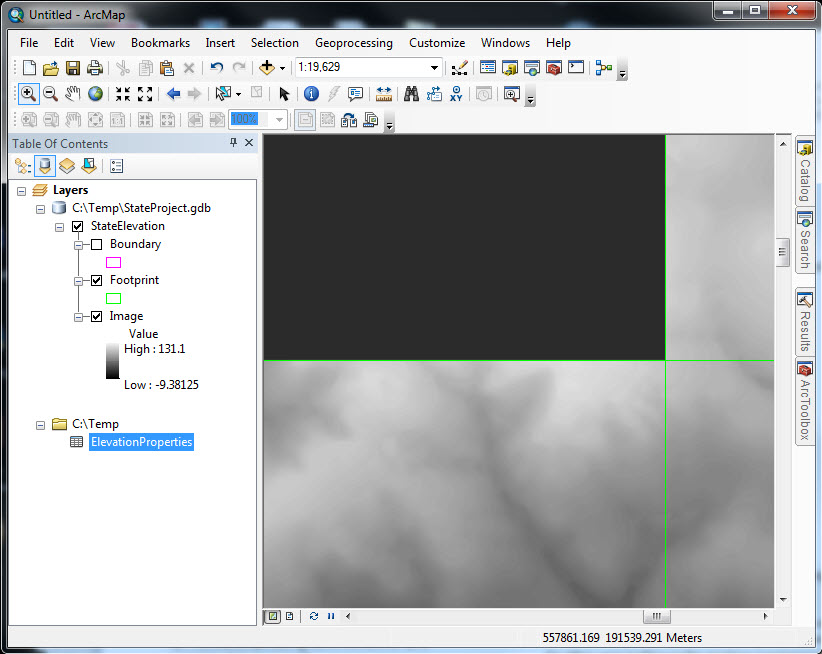

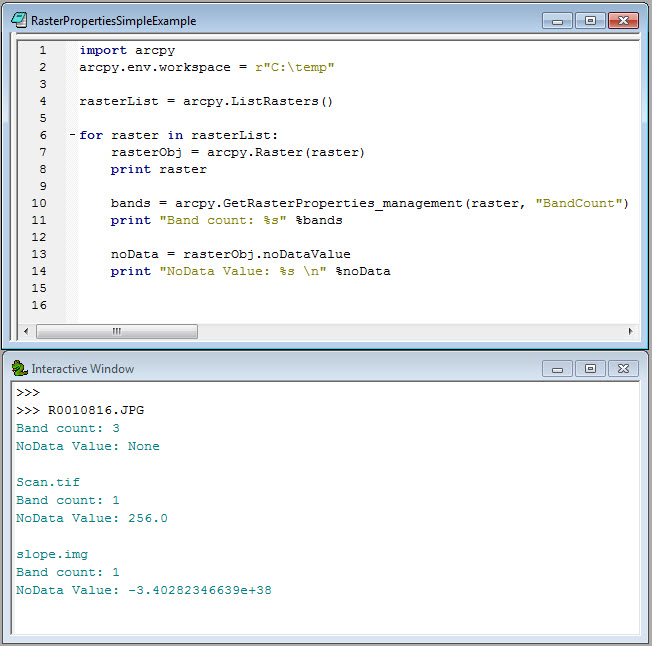

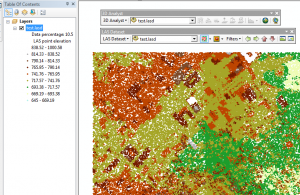

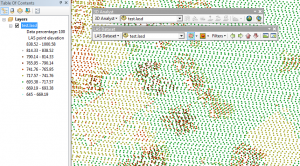

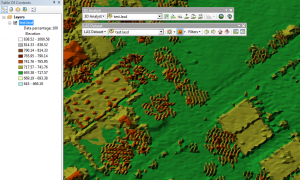

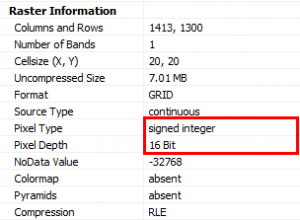

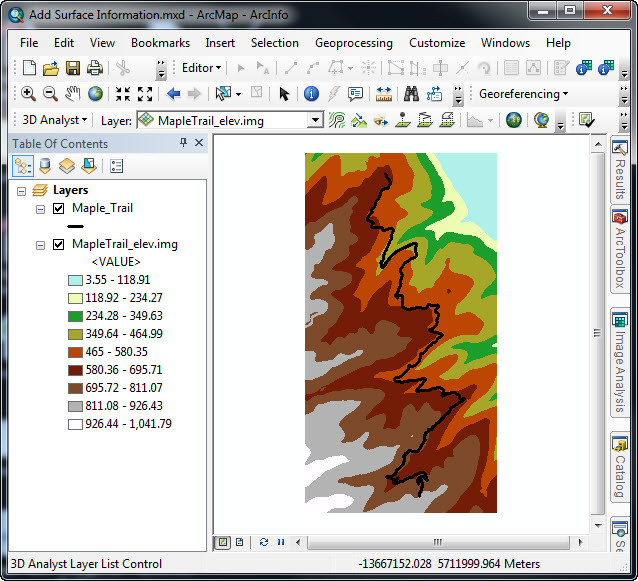

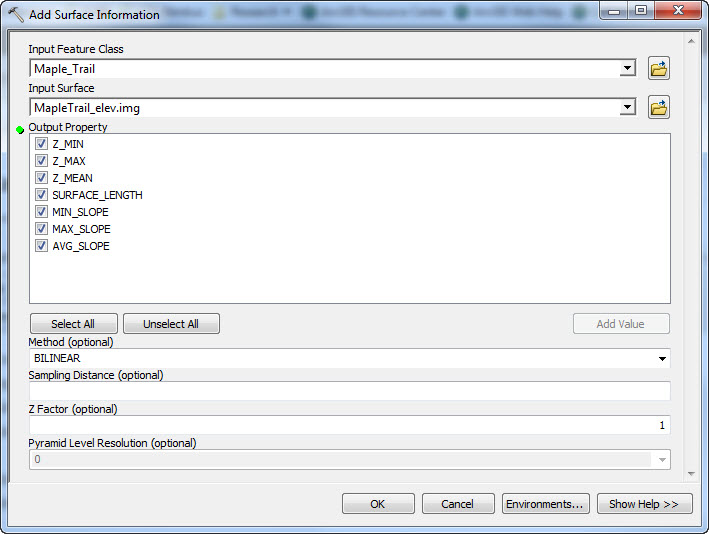

Considerations for Raster Datasets

Raster datasets have many parameters and properties, and therefore many potential sources of data-specific issues. The following by no means addresses every potential issue with raster datasets, but it does cover a couple of common sources of data-specific issues for rasters, along with troubleshooting steps to address them.

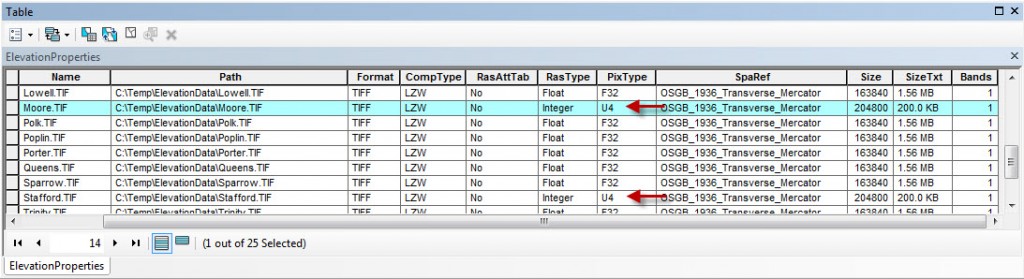

Bit-depth is a characteristic of a raster that defines the possible cell values allowed for the dataset (for more information, click here). If the bit-depths of two or more rasters used in the same geoprocessing operation do not match, you may run into errors or issues. For instance, if you create a mosaic dataset containing rasters from multiple sources, you may want to confirm that the bit-depths of the rasters are the same. You can determine the bit-depth of a raster by navigating to the raster properties. If you must change the bit-depth of your raster, you can use the Copy Raster tool to manually set the necessary bit-depth and create a new output raster with those parameters.
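
To make the idea of bit-depth concrete, the short sketch below computes the range of integer cell values a given bit-depth can hold and flags rasters whose bit-depth differs from the rest. It is plain Python with no ArcGIS dependency; the function names and the sample raster names are hypothetical, and you would read the actual bit-depths from each raster's properties.

```python
from collections import Counter


def value_range(bits, signed=False):
    """Return the (min, max) integer cell values a raster of this bit-depth can store."""
    if signed:
        return -(2 ** (bits - 1)), 2 ** (bits - 1) - 1
    return 0, 2 ** bits - 1


def mismatched_rasters(bit_depths):
    """Given {raster name: bit-depth}, return names that differ from the most common depth."""
    if not bit_depths:
        return []
    most_common, _ = Counter(bit_depths.values()).most_common(1)[0]
    return sorted(name for name, depth in bit_depths.items() if depth != most_common)


print(value_range(8))                # (0, 255)
print(value_range(16, signed=True))  # (-32768, 32767)
print(mismatched_rasters({"tile_a.tif": 8, "tile_b.tif": 8, "tile_c.tif": 16}))  # ['tile_c.tif']
```

A raster flagged this way is a candidate for Copy Raster before it goes into a mosaic dataset with the others. Keep in mind that converting to a lower bit-depth narrows the range of values the output can hold.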

When adding a raster dataset to a map document or creating a new one, you are given the option to build pyramids that control how the dataset is viewed at different scale levels. If your raster dataset displays at some scale levels but not at others, the raster pyramids may have become corrupt. Exporting the raster into a different format or deleting and rebuilding pyramids may help resolve this issue. If you would like more information about deleting and rebuilding pyramids, click here.
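
Deleting and rebuilding pyramids can be scripted as well. The sketch below assumes `arcpy` is available and relies on the Build Pyramids tool treating a pyramid level of 0 as "no pyramids" and -1 as "all levels"; confirm that behavior in the tool documentation for your version. The function name `rebuild_pyramids` is hypothetical.

```python
def rebuild_pyramids(raster_path):
    """Remove a raster's existing pyramids, then build a full set again."""
    import arcpy  # imported here so the function can be defined without ArcGIS installed

    # Assumption: a pyramid level of 0 removes the existing pyramids.
    arcpy.management.BuildPyramids(raster_path, "0")
    # A pyramid level of -1 builds all levels.
    arcpy.management.BuildPyramids(raster_path, "-1")
    return raster_path
```

If the raster still fails to draw at some scales after rebuilding, exporting it to a different format, as described above, is the next thing to try.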

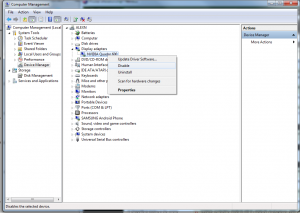

Contact Esri Support

These steps can help you begin narrowing down the potential causes of an issue, but they may not resolve every problem. If you need additional assistance with diagnosing or resolving an issue, feel free to contact Esri Support. We are happy to help our customers resolve any technical issue they encounter. When contacting Esri Support, please be prepared to provide the following information so that an analyst can assist you as efficiently as possible.

- Software version and license level

- Operating system

- Device, if using a mobile application

- Synopsis of the issue

- Detailed workflow

- Error message

- Test data

Krista M. - Desktop Support Analyst