Esri Technical Support Blog

Showing articles with label ArcGIS Pro.

Latest Activity

(383 Posts)

- Esri Contributor | 10-17-2018 10:45 AM | 3 | 0 | 4,939

- Occasional Contributor | 07-23-2018 11:43 AM | 0 | 0 | 1,896

- Esri Contributor | 06-23-2017 02:50 AM | 2 | 0 | 5,250

- Esri Regular Contributor | 11-22-2016 03:21 AM | 2 | 0 | 3,305

- Occasional Contributor III | 09-21-2016 03:47 AM | 0 | 0 | 2,414

- Esri Contributor | 07-13-2016 11:58 AM | 2 | 0 | 6,277

- Esri Contributor | 06-09-2016 03:23 AM | 0 | 0 | 1,284

Labels

- Announcements (70)

- ArcGIS Desktop (87)

- ArcGIS Enterprise (43)

- ArcGIS Mobile (7)

- ArcGIS Online (22)

- ArcGIS Pro (14)

- ArcPad (4)

- ArcSDE (16)

- CityEngine (9)

- Geodatabase (25)

- High Priority (9)

- Location Analytics (4)

- People (3)

- Raster (17)

- SDK (29)

- Support (3)

- Support.Esri.com (60)

Popular Articles

- Understanding Software Issues: Hangry software, by TinaMorgan1 (Occasional Contributor II): 24 Kudos, 9 Comments

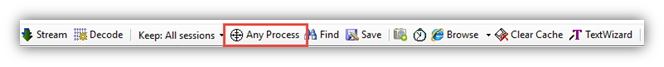

- Monitoring web service requests using Fiddler, by AlanRex1 (Esri Contributor): 23 Kudos, 11 Comments

- Thank You for Contacting Esri Support, How Can We Help You?, by TinaMorgan1 (Occasional Contributor II): 22 Kudos, 10 Comments

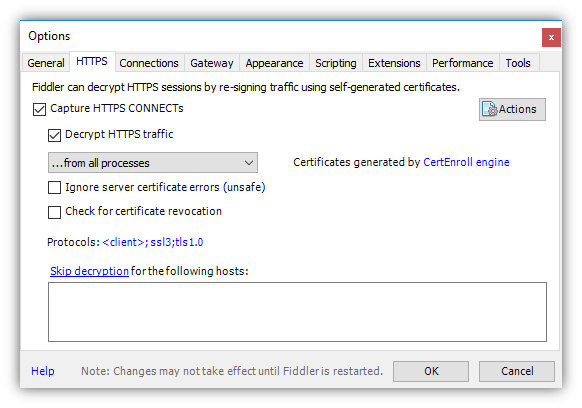

occur is to enable it to capture traffic over HTTPS.

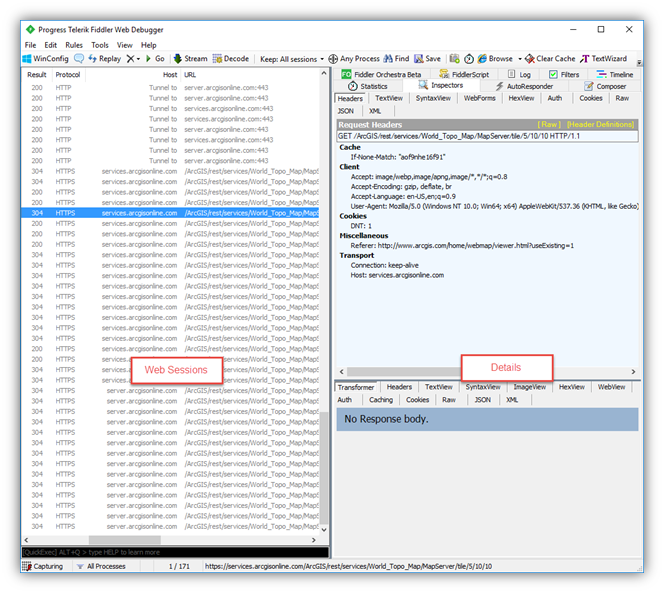

s list and the…other pane (I couldn’t find an official name so for the purposes of this blog we’ll refer to it as the Details pane).

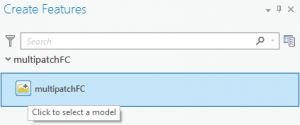

Note: There is a known issue with the Import 3D Files tool. The placement points parameter is not honored, so as of ArcGIS 10.4.1 and ArcGIS Pro 1.3 this tool is not a viable option. The issue is planned to be fixed in a future release.

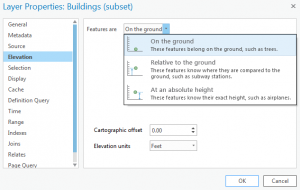

set the multipatch data "on the ground" and use the