Configure a Replicated ArcGIS Enterprise Deployment in AWS using the WebGIS DR Tool

Many organizations run ArcGIS Enterprise in the cloud and need a disaster recovery plan that keeps downtime to a minimum in the event of a failure or catastrophe. There are several ways to plan for a disaster recovery scenario. In this blog post, I will walk through the steps for replicating a primary ArcGIS Enterprise deployment in AWS to a warm, geographically redundant standby site using the WebGIS DR tool.

Learn more on disaster recovery and replication in ArcGIS Enterprise

Much of this workflow has been covered extensively by my colleagues for on-premises deployments in another blog post: Migrate to a new machine in ArcGIS Enterprise using the WebGIS DR tool. The general workflow for our scenario is very similar to the on-premises one, with some added steps that use AWS services* (Application Load Balancer (ALB), Route 53, and S3 buckets) and without the etc\hosts file entries on the EC2 instances. Please read the blog post above to understand the overall workflow and prerequisites before proceeding, as it contains information not covered in this post.

* I will assume readers have some prior experience with AWS and the services mentioned above.

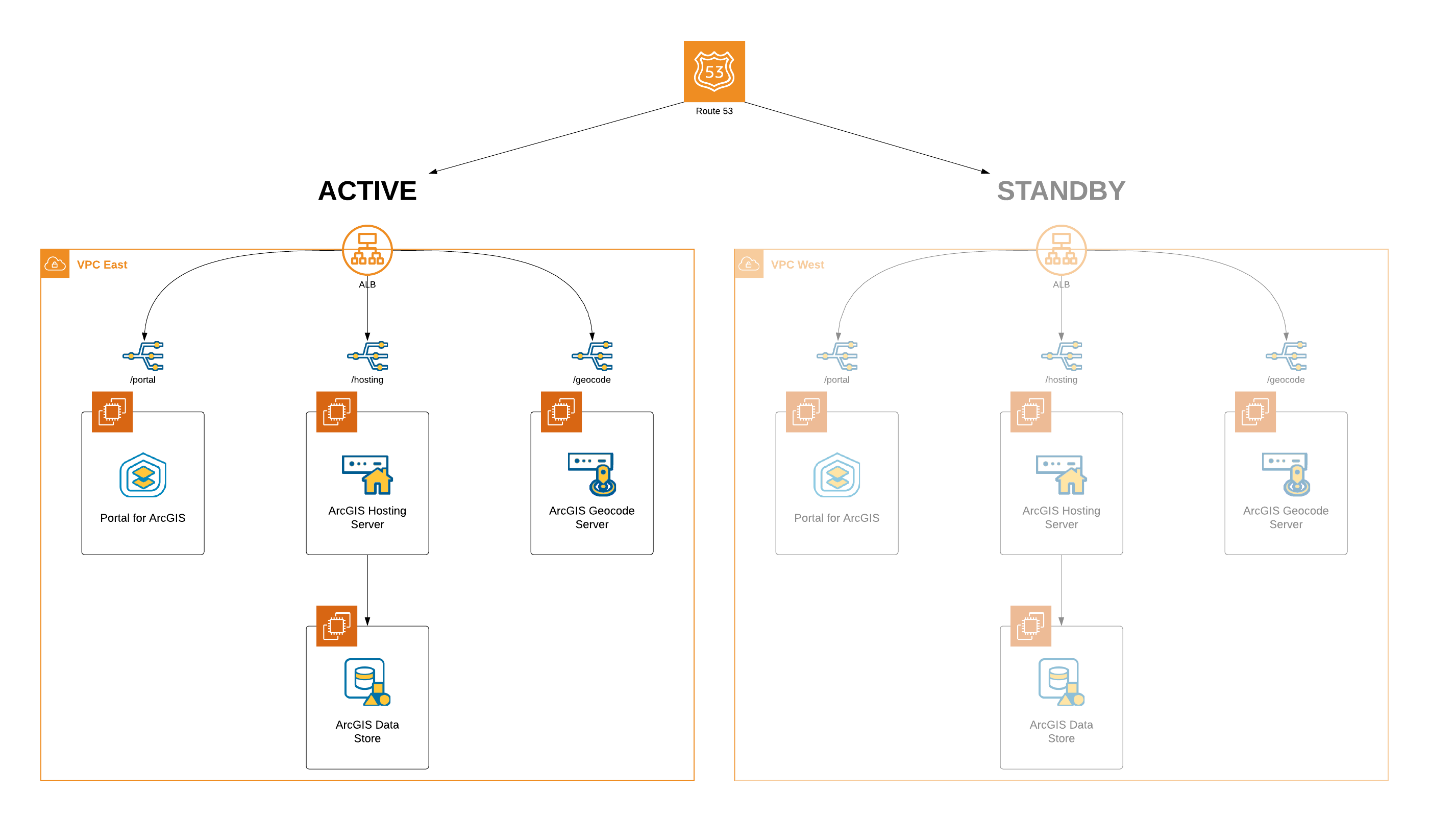

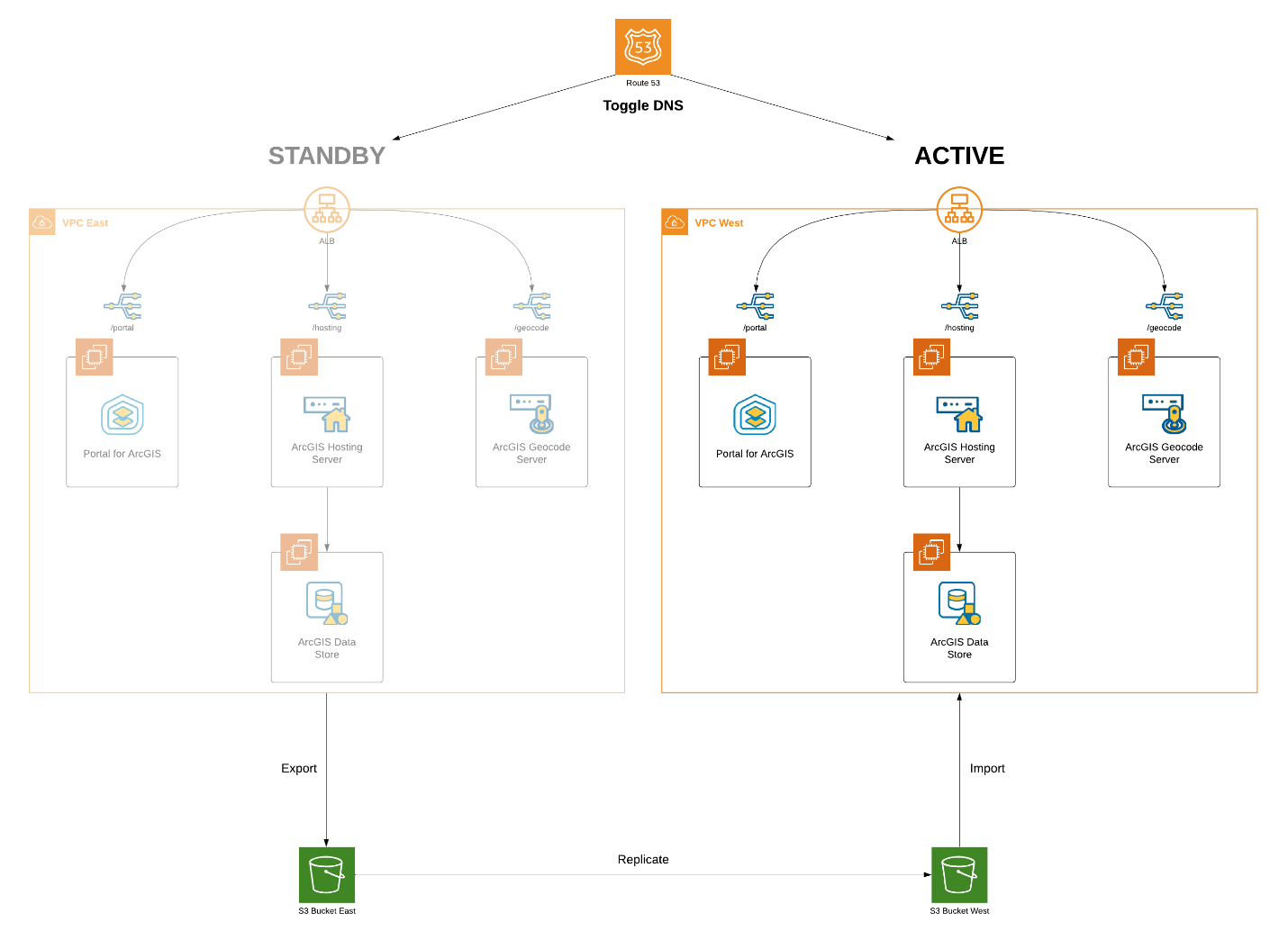

Scenario

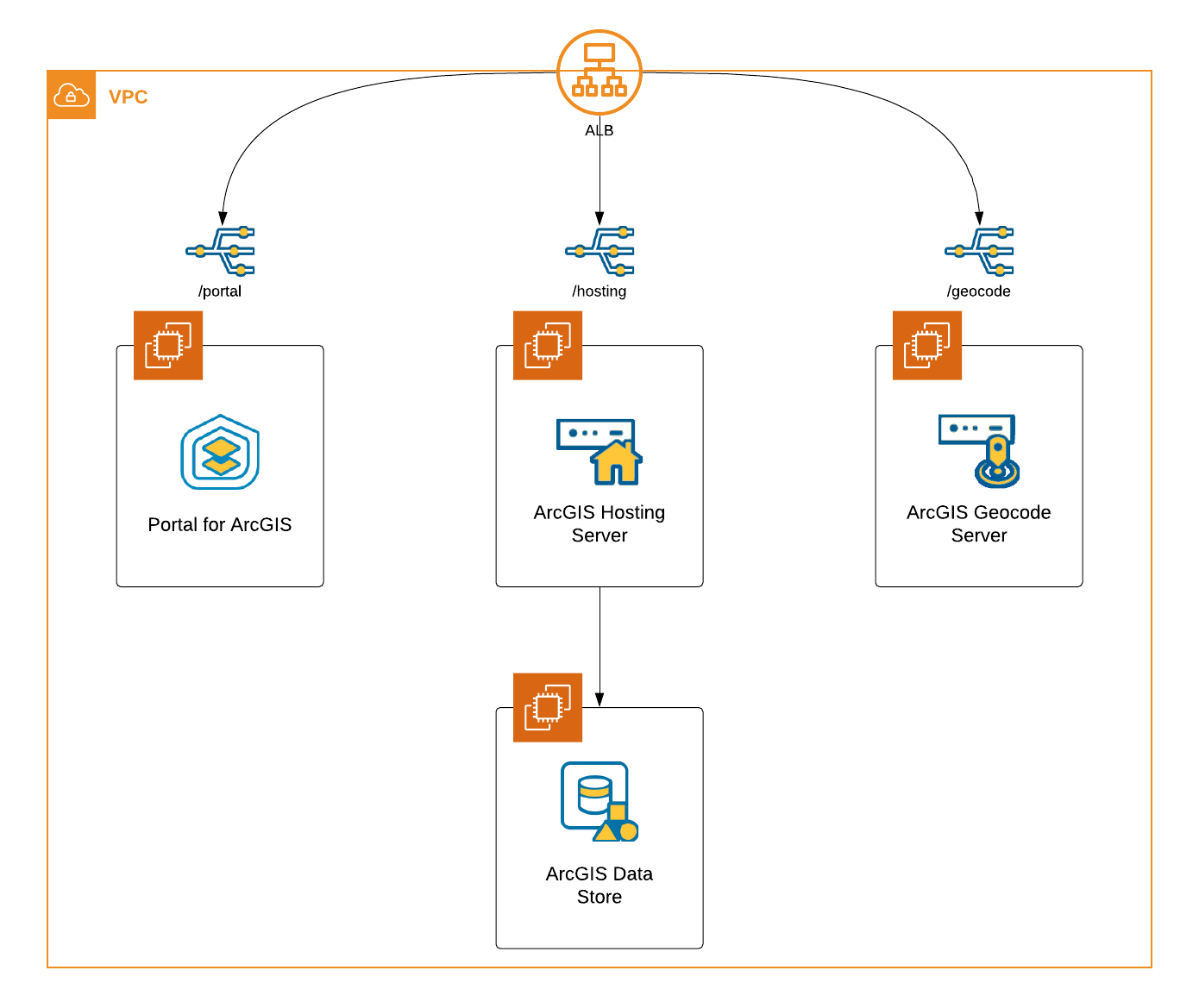

Let’s say we want a replicated and geographically redundant multi-machine deployment with the following components set up in AWS in the US East and US West regions:

- Portal for ArcGIS

- ArcGIS Hosting Server

- ArcGIS Data Store

- ArcGIS Geocode Server

Route 53 Hosted Zones

Route 53 is a scalable Domain Name System (DNS) service that uses public and private hosted zones, which are containers for records about how you want to route traffic for a specific domain. Public hosted zones are used to route traffic on the internet, while private hosted zones are used to route traffic within an Amazon VPC. The following workflow uses both types of hosted zones with overlapping namespaces. As a result, when we are logged in to an EC2 instance in a VPC that is associated with a private hosted zone, name resolution works as follows:

- The Resolver will evaluate whether the name of the private hosted zone matches the domain name in the request. If there is no match, the Resolver will forward the request to a public DNS resolver.

- If there is a match in the domain name of the request, the hosted zone is searched for a record that matches the domain name. If there is no record in the matching private hosted zone, the Resolver does not forward the request to a public DNS resolver, but will return a non-existent domain (NXDOMAIN) to the client.

That last bullet is very important! If you have other applications in the same VPC and you set up a private hosted zone, be sure to add records for those applications so that they remain fully operational.
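The resolution rules above can be sketched as a small simulation. This is only an illustration of the decision logic, not Route 53 itself: the function name, the zone dictionary layout, and the `example.com` names are hypothetical, and details such as wildcard records and delegation are ignored.

```python
from typing import Dict, Optional, Tuple

# Hypothetical layout: zone name -> {record name -> record value}
PrivateZones = Dict[str, Dict[str, str]]


def _norm(name: str) -> str:
    """Lower-case a DNS name and drop any trailing dot."""
    return name.rstrip(".").lower()


def resolve_in_vpc(query: str, private_zones: PrivateZones) -> Tuple[str, Optional[str]]:
    """Mimic how a query from inside the VPC is answered."""
    q = _norm(query)
    # A zone matches if the query is the zone apex or a name beneath it.
    matches = [z for z in private_zones
               if q == _norm(z) or q.endswith("." + _norm(z))]
    if not matches:
        # No private hosted zone matches: hand off to public DNS.
        return ("forward-to-public", None)
    # Use the most specific (longest) matching zone.
    zone = max(matches, key=lambda z: len(_norm(z)))
    records = {_norm(k): v for k, v in private_zones[zone].items()}
    if q in records:
        return ("private-answer", records[q])
    # Zone matched but holds no such record: no fallback to public DNS.
    return ("nxdomain", None)
```

With a private zone `example.com` that only holds `gis.example.com`, a lookup for `app.example.com` from inside the VPC returns `nxdomain` even if that name exists publicly, which is why records for other applications in the VPC have to be added to the private zone.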

Before Deployment

Before proceeding with deploying ArcGIS Enterprise, we need to complete the following tasks using the AWS services mentioned above in order to maintain a consistent DNS across the primary and standby sites:

- Create an ALB in both regions that will manage traffic for the ArcGIS Enterprise deployments. A few things to note:

- Be sure to attach the appropriate certificate for the public domain registered in Route 53.

- A target group must be created when configuring a new load balancer. Create a target group for Portal at this time. You do not need to register any targets with this target group yet.

- In Route 53 under your Public Hosted Zone:

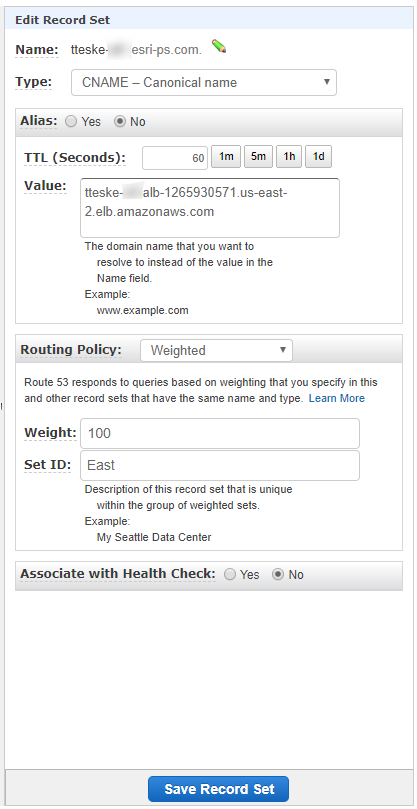

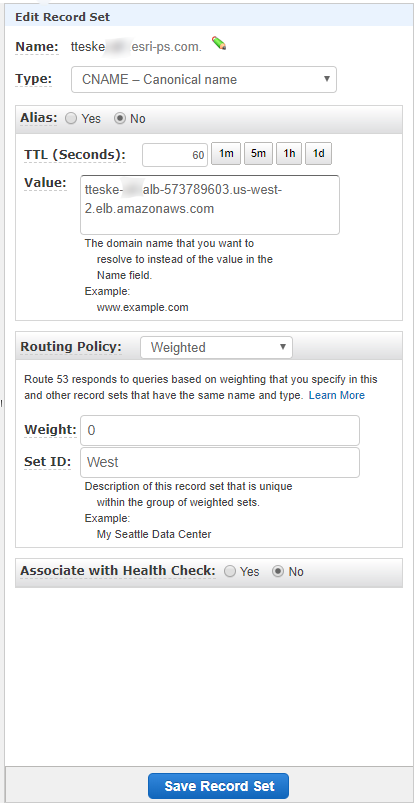

- Create two Record Sets with the same name, one for each load balancer, using the CNAME type. One thing to note here:

- Having multiple Record Sets using the Weighted Routing Policy is not a requirement; it is more a matter of preference. It is possible to have just one Record Set and replace the ALB value when the switch is needed.

- Set the time to live (TTL) to the recommended 60 seconds, which will "minimize the amount of time it takes for traffic to stop being routed to your failed endpoint."

- Set the Routing Policy to Weighted:

- Assign a weight of 100 to the primary site.

- Assign a weight of 0 to the standby site.

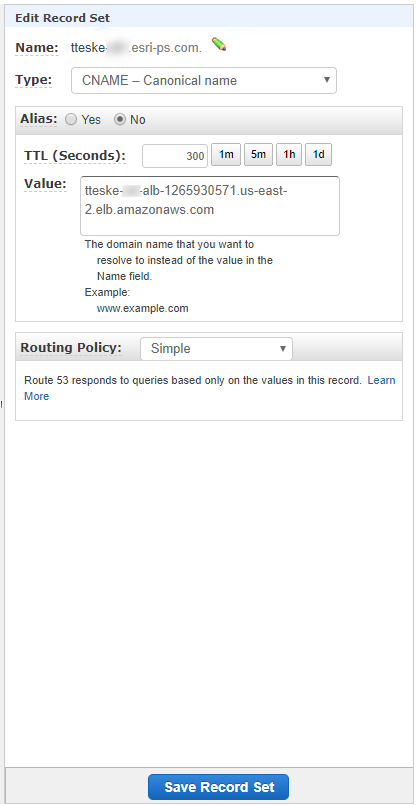

- Create a Private Hosted Zone for each region using the same Domain Name as the Public Hosted Zone in Route 53.

- Attach the private hosted zone to the VPC assigned to your ALB during time of creation.

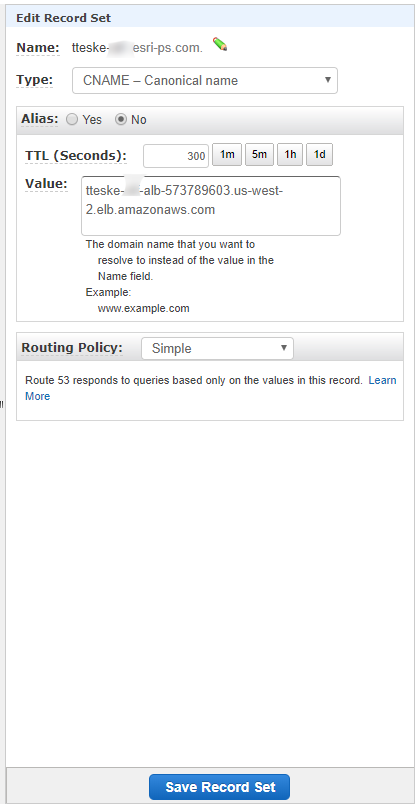

- In Route 53 under each of the Private Hosted Zones:

- Create an identical Record Set to match the one created in the Public Hosted Zone.

- TTL can remain as the default value of 300 seconds.

- The Routing Policy can remain the default value of Simple.

The last two steps of this pre-deployment process are vital to the success of the WebGIS DR utility. Having the two Private Hosted Zones with identical Domain Names and Record Sets that match the Record Sets in the Public Hosted Zone ensures the ability to configure identical ArcGIS Enterprise deployments. Additionally, the deployments will remain in an operational state to perform consistent backups and restores on the appropriate systems without issue due to being on separate regions and VPCs.
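For readers who prefer to script the record sets described above, here is a minimal sketch that builds the `ChangeBatch` payloads accepted by the Route 53 `ChangeResourceRecordSets` API. The function names, the `primary`/`standby` set identifiers, and the host names are hypothetical. I am also assuming that each private hosted zone's record points at the ALB in its own region; adjust if your setup differs.

```python
from typing import Any, Dict


def weighted_cname_change(name: str, alb_dns_name: str,
                          set_identifier: str, weight: int,
                          ttl: int = 60) -> Dict[str, Any]:
    """One UPSERT for a weighted CNAME record in the public hosted zone."""
    return {
        "Action": "UPSERT",
        "ResourceRecordSet": {
            "Name": name,
            "Type": "CNAME",
            "SetIdentifier": set_identifier,  # required for weighted records
            "Weight": weight,
            "TTL": ttl,
            "ResourceRecords": [{"Value": alb_dns_name}],
        },
    }


def public_zone_change_batch(name: str, primary_alb: str,
                             standby_alb: str) -> Dict[str, Any]:
    """Primary gets weight 100, standby gets weight 0, both with TTL 60."""
    return {
        "Comment": "Weighted records for primary and standby ALBs",
        "Changes": [
            weighted_cname_change(name, primary_alb, "primary", 100),
            weighted_cname_change(name, standby_alb, "standby", 0),
        ],
    }


def private_zone_change_batch(name: str, local_alb: str,
                              ttl: int = 300) -> Dict[str, Any]:
    """Simple-routing CNAME for one region's private hosted zone."""
    return {
        "Comment": "Simple record for the regional private hosted zone",
        "Changes": [{
            "Action": "UPSERT",
            "ResourceRecordSet": {
                "Name": name,
                "Type": "CNAME",
                "TTL": ttl,
                "ResourceRecords": [{"Value": local_alb}],
            },
        }],
    }
```

Each batch could then be submitted with boto3, for example `boto3.client("route53").change_resource_record_sets(HostedZoneId=zone_id, ChangeBatch=batch)`, where `zone_id` is the ID of the hosted zone being edited.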

ArcGIS Enterprise Deployment

It is finally time to deploy the components of our architecture to EC2 instances (repeat for each region):

- To set up the base deployment (Portal for ArcGIS, ArcGIS Hosting Server, and ArcGIS Data Store), follow steps 1-20 from our documentation on deploying Portal for ArcGIS on AWS, which utilizes Esri’s Amazon Machine Images (AMIs).

- Make sure to assign the EC2 instances to the same VPC and Security Group as the ALB.

- Repeat steps 11-19 in the linked documentation from the previous step to set up the additional ArcGIS Geocode Server.

- Create the two remaining target groups for the ArcGIS Hosting Server and Geocode Server (the Portal target group was created during the ALB creation in the pre-deployment steps).

- Register each instance with its appropriate target group.

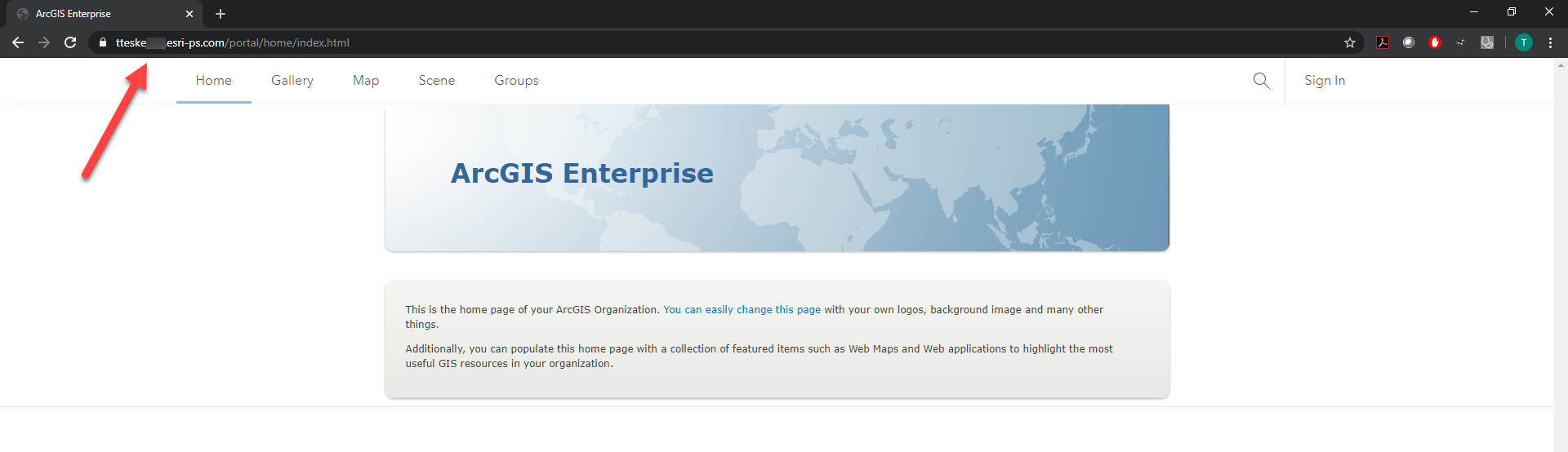

- Configure the appropriate forwarding rules in the ALB to match the target groups. If everything is set up correctly, we should be able to access the portal through the DNS alias (Name property) created in the Record Sets.

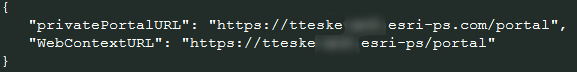

- Follow steps 21-23 in the linked documentation above using the DNS alias from the Record Sets. For example, my portal’s system properties would have the following entries:

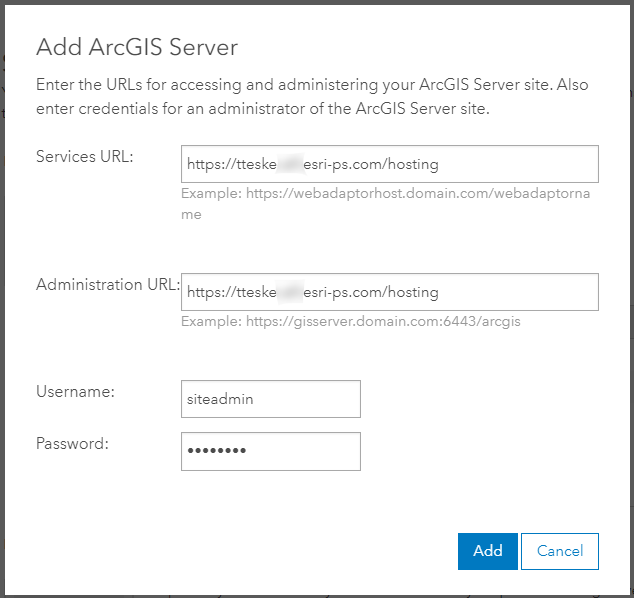

- And federating my hosting server (or any others) would look like so (the admin URL may vary depending on the level of administrative access setting placed on the Web Adaptor):

- Repeat step 22 for the ArcGIS Geocode Server.
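The forwarding rules from the list above could be scripted along these lines. This is a sketch under assumptions: the path contexts (`portal`, `server`, `geocode`) are hypothetical Web Adaptor names, the ARNs are placeholders, and path-based routing is only one way to map requests to target groups. Each returned dictionary holds keyword arguments in the shape expected by the Elastic Load Balancing v2 `CreateRule` API.

```python
from typing import Any, Dict, List


def forwarding_rules(listener_arn: str,
                     target_groups: Dict[str, str]) -> List[Dict[str, Any]]:
    """Build one path-based forward rule per target group.

    target_groups maps a URL context (hypothetical, e.g. "portal")
    to the ARN of the target group that should receive that traffic.
    """
    rules = []
    # Sort by context so the assigned priorities are deterministic.
    for priority, (context, tg_arn) in enumerate(sorted(target_groups.items()),
                                                 start=1):
        rules.append({
            "ListenerArn": listener_arn,
            "Priority": priority,
            "Conditions": [{
                "Field": "path-pattern",
                "Values": [f"/{context}", f"/{context}/*"],
            }],
            "Actions": [{"Type": "forward", "TargetGroupArn": tg_arn}],
        })
    return rules
```

Each rule could be created with `boto3.client("elbv2").create_rule(**rule)` against the HTTPS listener of the ALB in each region.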

We now have two identical and fully functional ArcGIS Enterprise deployments, one in each region. The deployment with a weight of 100 in the Public Hosted Zone is accessible over the internet and acts as the primary site, while the other deployment (with a weight of 0) is accessible only from within its own VPC and acts as the standby site.

Replication

Now that we have our primary and standby sites, we have to set up two replicating S3 buckets in the appropriate regions to have a fully replicated and geographically redundant deployment prepared for a disaster recovery scenario.

In Amazon S3, create two buckets with easily recognizable naming conventions, like the following:

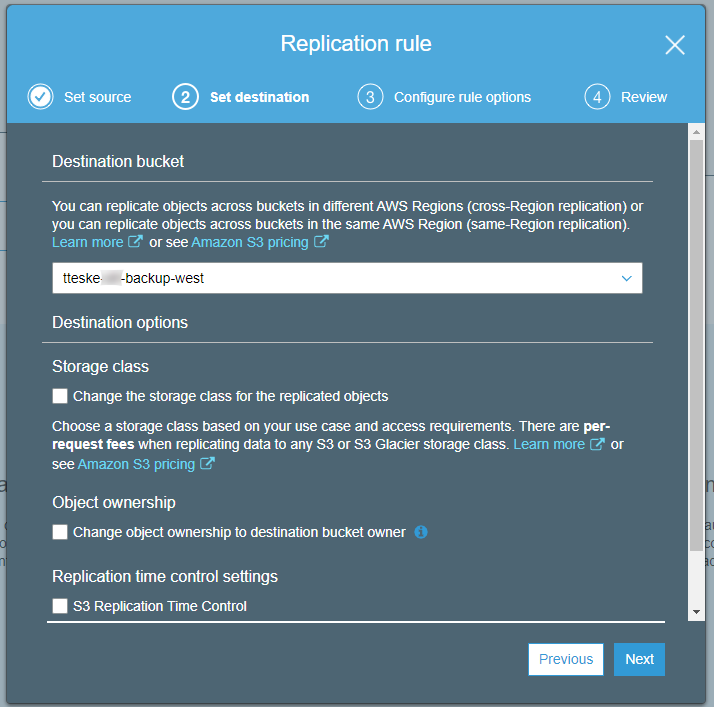

In the second step of the creation process for each bucket under Configure Options, check the box under Versioning. This is a requirement to enable Cross-Region Replication. Once the buckets have been created, select the bucket that will be utilized by the primary site and navigate to Replication under the Management tab and select Get Started. We can leave the defaults selected for all settings throughout this process. We just need to ensure we select our designated standby site bucket for the destination.

Amazon S3 provides a selectable option (seen in the screenshot above) called S3 Replication Time Control, which will replicate 99.99% of new objects within 15 minutes. This may be a valuable option for organizations with large-scale ArcGIS Enterprise deployments with lots of content that need minimal downtime. In my tests, I have found replication to be fast without that option selected, granted my backups ran around 5-6 GB, which amounted to ~300 hosted services and a Geocode service (and its data) copied to ArcGIS Server.
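The same bucket settings can be expressed as API payloads. The sketch below builds the configurations for the S3 `PutBucketVersioning` and `PutBucketReplication` calls; the function names, the rule ID, the role ARN, and the bucket names are all hypothetical, and the IAM role that S3 assumes for replication has to exist already (the console creates one for you when you accept the defaults).

```python
from typing import Any, Dict


def versioning_configuration() -> Dict[str, str]:
    """Versioning must be enabled on both source and destination buckets."""
    return {"Status": "Enabled"}


def replication_configuration(role_arn: str, destination_bucket: str,
                              time_control: bool = False) -> Dict[str, Any]:
    """Replicate every object in the source bucket to destination_bucket."""
    destination: Dict[str, Any] = {
        "Bucket": f"arn:aws:s3:::{destination_bucket}",
    }
    if time_control:
        # Optional S3 Replication Time Control (15-minute target).
        destination["ReplicationTime"] = {"Status": "Enabled",
                                          "Time": {"Minutes": 15}}
        destination["Metrics"] = {"Status": "Enabled",
                                  "EventThreshold": {"Minutes": 15}}
    return {
        "Role": role_arn,
        "Rules": [{
            "ID": "webgisdr-backups",   # hypothetical rule name
            "Priority": 1,
            "Status": "Enabled",
            "Filter": {"Prefix": ""},    # empty prefix = whole bucket
            "DeleteMarkerReplication": {"Status": "Disabled"},
            "Destination": destination,
        }],
    }
```

With boto3 these would be applied as `s3.put_bucket_versioning(Bucket=name, VersioningConfiguration=versioning_configuration())` on both buckets, followed by `s3.put_bucket_replication(Bucket=primary_bucket, ReplicationConfiguration=...)` on the primary site's bucket.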

Disaster Recovery

We are now able to create backups of the primary site and restore the standby site from said backups.

- Create a backup with the WebGIS DR tool of your primary site on the instance where Portal is installed. Make sure to point to the appropriate S3 bucket (should be the one in the same region as the primary site) in the properties file. The backup will be replicated to the S3 bucket in the other region.

- Restore the replicated backup with the DR tool on the standby instance where Portal is installed. Be mindful of the appropriate S3 bucket in the properties file.
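To make the two steps above concrete, here is a small sketch that prepares the S3-related properties and the command line for each site. The property names (`BACKUP_STORE_PROVIDER`, `S3_BUCKET`, `S3_REGION`) and the `--export`, `--import`, and `--file` options are written as I recall them from the tool's template and documentation, so please verify them against the `webgisdr.properties` file shipped with your version. The bucket names, regions, and file paths are hypothetical, and a real properties file needs further entries (portal admin URL, credentials, shared location, and so on).

```python
from typing import Dict, List


def s3_properties(bucket: str, region: str) -> Dict[str, str]:
    """S3-related entries only; names should be checked against your template."""
    return {
        "BACKUP_STORE_PROVIDER": "AmazonS3",
        "S3_BUCKET": bucket,
        "S3_REGION": region,
    }


def render_properties(props: Dict[str, str]) -> str:
    """Render key/value pairs in Java properties style."""
    return "\n".join(f"{key} = {value}" for key, value in props.items()) + "\n"


def webgisdr_command(mode: str, properties_file: str,
                     tool: str = "webgisdr") -> List[str]:
    """Argument list for subprocess.run; mode is 'export' or 'import'."""
    if mode not in ("export", "import"):
        raise ValueError("mode must be 'export' or 'import'")
    return [tool, f"--{mode}", "--file", properties_file]
```

On the primary Portal machine you would run the `export` command with properties pointing at the primary region's bucket; on the standby Portal machine you would run the `import` command with properties pointing at the standby region's bucket, for example via `subprocess.run(webgisdr_command("import", path), check=True)`.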

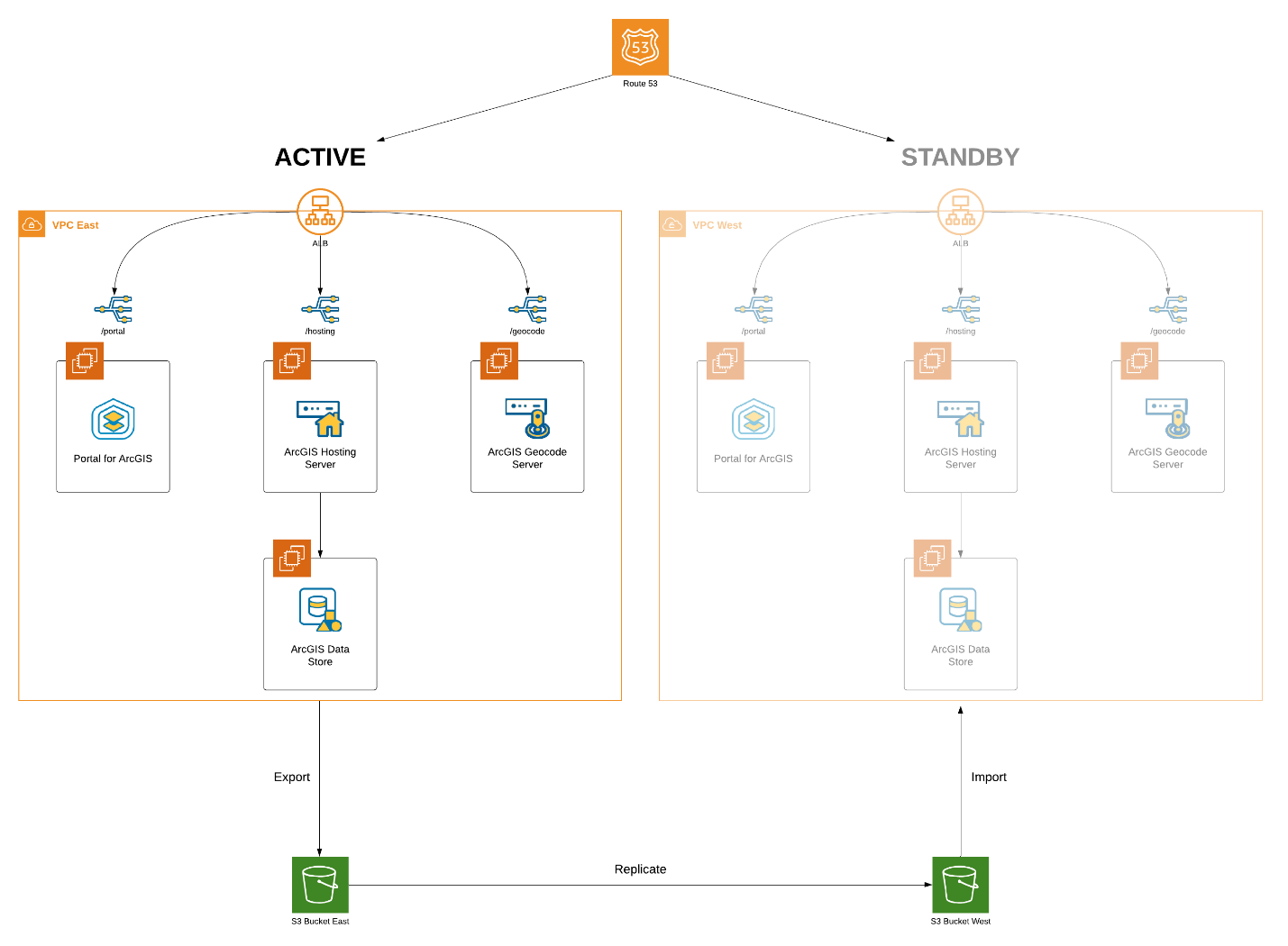

This is the final architecture and workflow:

In the event of a disaster, we can now fail over to our warm standby deployment just by swapping the weights of the Record Sets in our Public Hosted Zone. The resulting downtime depends on the TTL of the Route 53 record set.* Additionally, depending on how long the original hot site is down, you may need to modify how your backups and restores are handled on the two environments, so that any new items created in the new hot site are preserved when your original data center is restored.

* It should be heavily emphasized that this workflow with the WebGIS DR tool is not considered a highly available ArcGIS Enterprise deployment. The “failover” may be quick in this scenario, but the standby deployment will only have content available from the last WebGIS DR backup/restore. Please see our documentation on configuring a highly available ArcGIS Enterprise.

Since both deployments are also identical, there should be no issues with services integrated into other business systems. Most importantly, this entire workflow – running WebGIS DR backups and restores, DNS toggling, as well as detecting who is acting as the primary site at any given moment – can be completely scripted and automated, ensuring that mission critical systems remain operational.
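As a starting point for that kind of automation, the DNS toggle and the "who is primary" check can be sketched as follows. The input is assumed to be the two weighted record sets for the site's DNS alias in the shape returned by the Route 53 `ListResourceRecordSets` API; the function names are hypothetical.

```python
from typing import Any, Dict, List

RecordSet = Dict[str, Any]


def _weighted(record_sets: List[RecordSet]) -> List[RecordSet]:
    """Keep only weighted records and insist on exactly two of them."""
    weighted = [r for r in record_sets if "Weight" in r]
    if len(weighted) != 2:
        raise ValueError("expected exactly two weighted record sets")
    return weighted


def current_primary(record_sets: List[RecordSet]) -> str:
    """SetIdentifier of the record that currently carries the higher weight."""
    return max(_weighted(record_sets), key=lambda r: r["Weight"])["SetIdentifier"]


def swap_weights(record_sets: List[RecordSet]) -> Dict[str, Any]:
    """Build a ChangeBatch that exchanges the two weights (e.g. 100 and 0)."""
    first, second = _weighted(record_sets)
    changes = []
    for record, new_weight in ((first, second["Weight"]),
                               (second, first["Weight"])):
        updated = dict(record, Weight=new_weight)  # copy, do not mutate input
        changes.append({"Action": "UPSERT", "ResourceRecordSet": updated})
    return {"Comment": "Fail over: swap primary and standby weights",
            "Changes": changes}
```

The returned batch can be submitted with `change_resource_record_sets` on the Public Hosted Zone. Keep in mind that the standby only contains content up to the last WebGIS DR restore, so a script like this would normally run after confirming that restore completed.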

Learn more about preventing data loss and downtime with ArcGIS Enterprise.
