
Voice Controlled Data Collection


It's been proven over and over that when technology becomes more accessible, it gets better for everyone. Voice Control is a new accessibility feature in iOS 13 that lets you speak commands to your iPhone or iPad. This is huge for people with limited dexterity or mobility, among many other conditions, but it's also a fantastic new way to interact with your device hands-free. Paired with Esri software, it becomes even more powerful!

We at Esri are actively working on bringing this functionality into QuickCapture itself, without the need for third-party software or iOS configuration. The expected advantages of built-in support include, but are not limited to:

- It will come pre-configured.

- You only need to enable it with the flip of a switch in the app.

- It will work across all supported platforms.

- It will also work offline.

So, would you like to collect data hands-free now?

Here is how to use ArcGIS QuickCapture and Voice Control in Apple iOS 13 to collect data hands-free!

1.) - Download and install QuickCapture, create a project, and share it accordingly.

2.) - Within the QuickCapture project, under Settings, set Sound to a beep rather than Text to speech.

(This makes QuickCapture beep instead of repeating the label of the button.)

3.) - Enable Voice Control in iOS: Settings > Accessibility > Voice Control > On.

Also within the Voice Control settings on your device, you can review the full list of commands, turn specific commands on or off, and even create custom commands.

So ultimately, if you want voice control that works seamlessly with QuickCapture on your Apple device, all you need to do is configure QuickCapture to use a beep instead of repeating the button label, then enable Voice Control in iOS Settings and, under Basic Navigation, enable <item name>. Familiarize yourself with the pre-configured Voice Control phrases, and off you go: hands-free data collection enabled.

Below is a video showing how to set it up from start to finish, with a beep configured instead of text to speech.

Here are some things you should know:

Limitation: You have to know exactly where to tap on the screen without seeing the screen. You will be programming what Apple calls a Custom Gesture, which essentially commands iOS to tap on the screen where you tell it to. (Scrolling and then tapping is possible to program, but would be difficult.)

I suggest tracing the screen and buttons onto a blank sheet of printer paper to be used as a template when configuring the custom gesture.

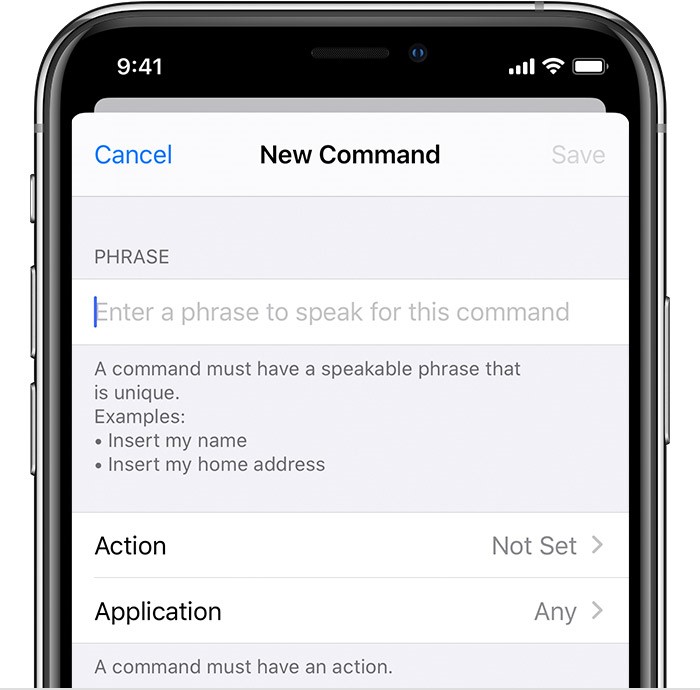

How to create a custom voice command on your device:

Go to Settings and select Accessibility.

Select Voice Control, then Customize Commands.

Select Create New Command, then enter a phrase for your command.

(In the video I used the custom phrase “Collect Pothole”; this is where I entered it.)

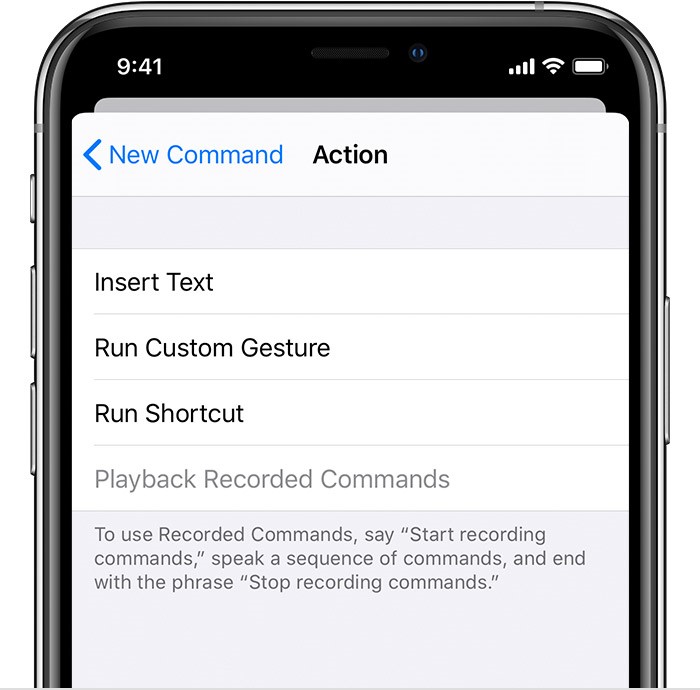

Give your command an action by choosing Action and selecting Run Custom Gesture:

This is where you can use the template you traced on the printer paper to determine where the gesture needs to be placed so that it selects the correct QuickCapture button. Place the template over your phone and tap the button. Your custom gesture will be recorded through the paper. Repeat the process for each custom voice command you want to program.

FAQ

Q - Does this work offline?

A - Yes. As long as you have GPS to collect the location information, voice control will work offline.

Q - Can I somehow transfer custom gestures across phones?

A - No, at this time there is no easy way to transfer custom gestures across phones.

Here is a video of voice commands being used with Google on an Android device.

Posted By: Esri Solution Engineer - Jason Schroeder & Esri Account Manager - Robert Hathcock

Limitation: With the default voice commands, you must say words that are written on the screen and that the operating system can identify, e.g. “Pothole”, “Sign Repair”, etc.

*Notice*: If you toggle the option <item name> under Basic Navigation on, you only need to say the word on the screen, e.g. “Pothole”. However, this can create an issue. By default, QuickCapture reads the button label aloud when a button is pressed, to let the user know the data was collected. So you say “Pothole”, iOS taps Pothole on the screen, QuickCapture says “Pothole”, Voice Control hears it and taps Pothole again, QuickCapture says “Pothole” again, and so on. You can see where this is going: this configuration throws the process into an endless loop.

Solution: In the QuickCapture mobile app settings, set the response to a beep instead of repeating the title of the QuickCapture button, then enable <item name> under the Basic Navigation configuration of Voice Control in iOS 13. This allows you to capture data just by saying the title of each button.
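To make the feedback loop easier to picture, here is a toy Python simulation. It does not call any iOS or QuickCapture API; the function name `simulate`, the `feedback` values, and the `max_events` safety cap are all hypothetical and exist only for illustration. It assumes that spoken feedback is picked up by the microphone as a new command, while a beep is not.

```python
def simulate(feedback, spoken_command, button_labels, max_events=10):
    """Toy model: count the records created by ONE spoken command.

    feedback: "speech" (app reads the button label aloud) or "beep".
    max_events: hypothetical cap so the endless loop stops in this demo.
    """
    records = 0
    heard = spoken_command  # what the microphone picks up
    while heard in button_labels and records < max_events:
        records += 1  # Voice Control taps the matching button
        # The app confirms the capture. Spoken feedback is heard as a new
        # command; a beep matches no button label, so the loop ends.
        heard = heard if feedback == "speech" else None
    return records


buttons = {"Pothole", "Sign Repair"}
print(simulate("speech", "Pothole", buttons))  # 10 (stopped only by the cap)
print(simulate("beep", "Pothole", buttons))    # 1
```

With spoken feedback the count runs up to the cap, which stands in for the endless loop; with a beep, one spoken command yields exactly one record.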

**Notice and Update**

According to Apple's website, Voice Control is currently available only in the United States.

However, I contacted Apple Accessibility to verify the above statement, and I was informed that:

"Voice Control on iOS is currently language-specific, but not region-specific."

"While we cannot comment on any future plans for Voice Control, we can let you know that you can currently use Voice Control in your region by switching your system language to U.S. English. This should allow you to download the necessary files for Voice Control, and begin using the feature."

Here is a link to Apple's support page with full information about Voice Control and its capabilities: https://support.apple.com/en-us/HT210417

Disclaimer: "The opinions expressed or postings on this site are my own, and don't necessarily represent Esri's position, strategies, or opinions."
