Java Maps SDK Memory Leak?

. . .

    // map here is an Esri ArcGISMap object that holds some data and must be fully loaded
    map.addDoneLoadingListener(() -> {
        if (map.getLoadStatus() == LoadStatus.LOADED) {
            String mapJson = map.toJson();
            FileWriter jsonFileWriter = null;
            try {
                // file here is a valid File already created
                jsonFileWriter = new FileWriter(file);
                jsonFileWriter.write(mapJson);
                mapDoneWriting = true;
            } catch (IOException e) {
                e.printStackTrace();
            } finally {
                try {
                    if (jsonFileWriter != null) {
                        jsonFileWriter.flush();
                        jsonFileWriter.close();
                    }
                } catch (IOException e) {
                    e.printStackTrace();
                }
            }
        } else {
            throw new IllegalArgumentException("Error writing json map file");
        }
    });

. . .
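
For comparison, here is a minimal sketch of the same file write using try-with-resources. The snippet above already closes its `FileWriter` in the `finally` block, so this is only a more compact equivalent and is not presented as a fix for the memory growth. `MapJsonWriter` and `write` are hypothetical names, not part of the ArcGIS SDK or the poster's project, and the sketch assumes the JSON string has already been obtained (for example from `map.toJson()`).

```java
import java.io.BufferedWriter;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

/** Hypothetical helper; not part of the ArcGIS SDK or the original project. */
final class MapJsonWriter {

    private MapJsonWriter() {}

    /**
     * Writes the given JSON text to the file, replacing any existing content.
     * The writer is closed (and therefore flushed) when the try block exits,
     * whether normally or because of an exception.
     */
    static void write(Path file, String json) throws IOException {
        try (BufferedWriter writer = Files.newBufferedWriter(file, StandardCharsets.UTF_8)) {
            writer.write(json);
        }
    }

    public static void main(String[] args) throws IOException {
        Path file = Files.createTempFile("map", ".json");
        // In the original snippet this string would come from map.toJson().
        write(file, "{\"example\": true}");
        System.out.println("Wrote " + Files.size(file) + " bytes to " + file);
    }
}
```

Passing the charset explicitly avoids depending on the platform default encoding, which `new FileWriter(file)` uses.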

Hi,

Are you able to provide some reproducer code?

Thanks.

Yes I will send once done. I am working on creating the minimal reproducible code right now.

I have created a Maven project on GitHub that you should be able to fork. I have tested this simple code on our server and see memory usage shoot up with each consecutive run, which further makes me believe there is a memory leak on the Esri SDK side (or I didn't close something that is supposed to be closed, but I do not see any explicit documentation about closing anything in the methods I am using). Thanks

Here is the link: https://github.com/jmfaulkFPI/MemoryLeakTest

Let me know if there are any issues.

Hi Jared, I've tried the reproducer, but I find it fails to build because I don't have the apjibe dependency. Could you remove that dependency, or just tell me what value to use for ApiFieldTags.GEOID?

Sorry about that, I have removed the dependency and pushed. I will be online all day if you need help.

Thanks. I can run the reproducer now. What do you suggest I do with/to it to get it to crash?

Hey Alan,

So it looks like with my minimal code I cannot get it to crash, but I simplified our system quite heavily so I am going back through to add ALL references and operations we use with ArcGIS to be sure. If I cannot get it to crash once I add all references in, then it is clearly on our side. I will let you know my findings.

Hi Alan,

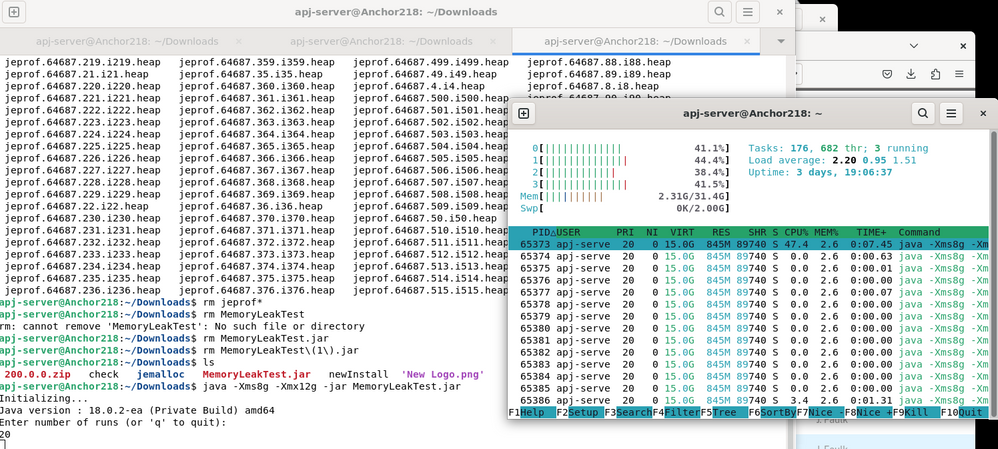

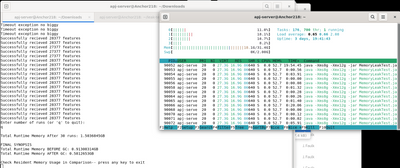

I was able to reproduce our issue exactly as seen in our production code once I added all remaining references to the ArcGIS SDK and the same kind of parallelization our system uses. I have pushed my code to GitHub. Just add an API key; depending on the size of your system, it will take more or fewer runs to get it to crash. For me, about 30 runs brought resident memory usage to nearly 17 GB, so just keep running the program until it crashes. I have added prompts to guide you through, as well as print statements reporting how much JVM heap memory is used at the end of each calculation.
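
The heap print statements themselves are not shown in this thread. As a rough sketch of what such logging could look like, the following uses only `java.lang.Runtime`; `HeapReport`, `usedHeapBytes` and `describe` are hypothetical names and are not taken from the linked repository. These figures cover only the JVM heap, whereas the resident memory shown by `htop` also includes memory allocated outside the heap, so the two numbers can differ.

```java
/** Hypothetical helper for logging JVM heap usage between runs. */
final class HeapReport {

    private static final long MIB = 1024L * 1024L;

    private HeapReport() {}

    /** Bytes of heap currently in use: committed heap minus its free portion. */
    static long usedHeapBytes() {
        Runtime rt = Runtime.getRuntime();
        return rt.totalMemory() - rt.freeMemory();
    }

    /** Formats used, committed and maximum heap sizes in MiB as one log line. */
    static String describe(String label) {
        Runtime rt = Runtime.getRuntime();
        return String.format("%s: used=%d MiB, committed=%d MiB, max=%d MiB",
                label, usedHeapBytes() / MIB, rt.totalMemory() / MIB, rt.maxMemory() / MIB);
    }

    public static void main(String[] args) {
        System.out.println(describe("before"));
        byte[] block = new byte[16 * 1024 * 1024]; // stand-in for one calculation run
        System.out.println(describe("after allocating " + block.length / MIB + " MiB"));
    }
}
```

If the heap figures logged this way stay roughly flat while resident memory keeps climbing, that would be consistent with memory being held outside the Java heap, although it does not by itself show where.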

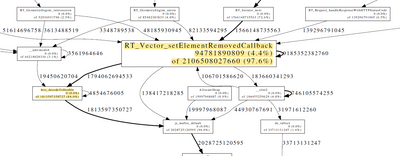

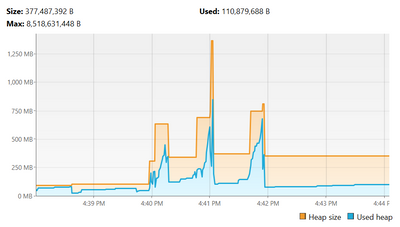

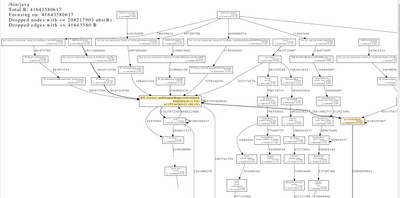

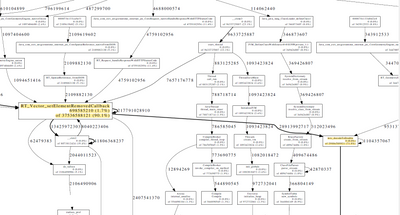

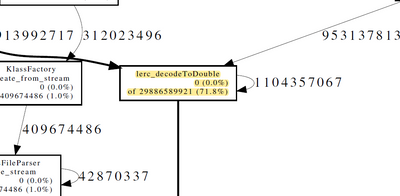

Here are the graphs produced from the minimally reproduced code (I see the same call to these two methods):

Here is a screenshot of the initial memory usage (I am using `htop` on Linux to check memory usage, filtered on my program):

Here's the final memory usage:

Check the README for more exact instructions on running the program.