Imagery and Remote Sensing Blog

Showing articles with label Motion imagery.

Latest Activity

(65 Posts)

- Esri Contributor, 11-09-2023 12:11 PM (0, 0, 301)

- Esri Contributor, 02-07-2020 02:07 PM (0, 0, 978)

- by Anonymous User (Not applicable), 04-04-2019 12:37 PM (2, 0, 783)

- Esri Regular Contributor, 09-09-2018 07:27 PM (6, 23, 14K)

- Esri Regular Contributor, 07-05-2017 04:01 PM (4, 3, 3,355)

- Esri Regular Contributor, 01-27-2017 04:04 PM (2, 7, 4,127)

176 Subscribers

Labels

-

Analysis

10 -

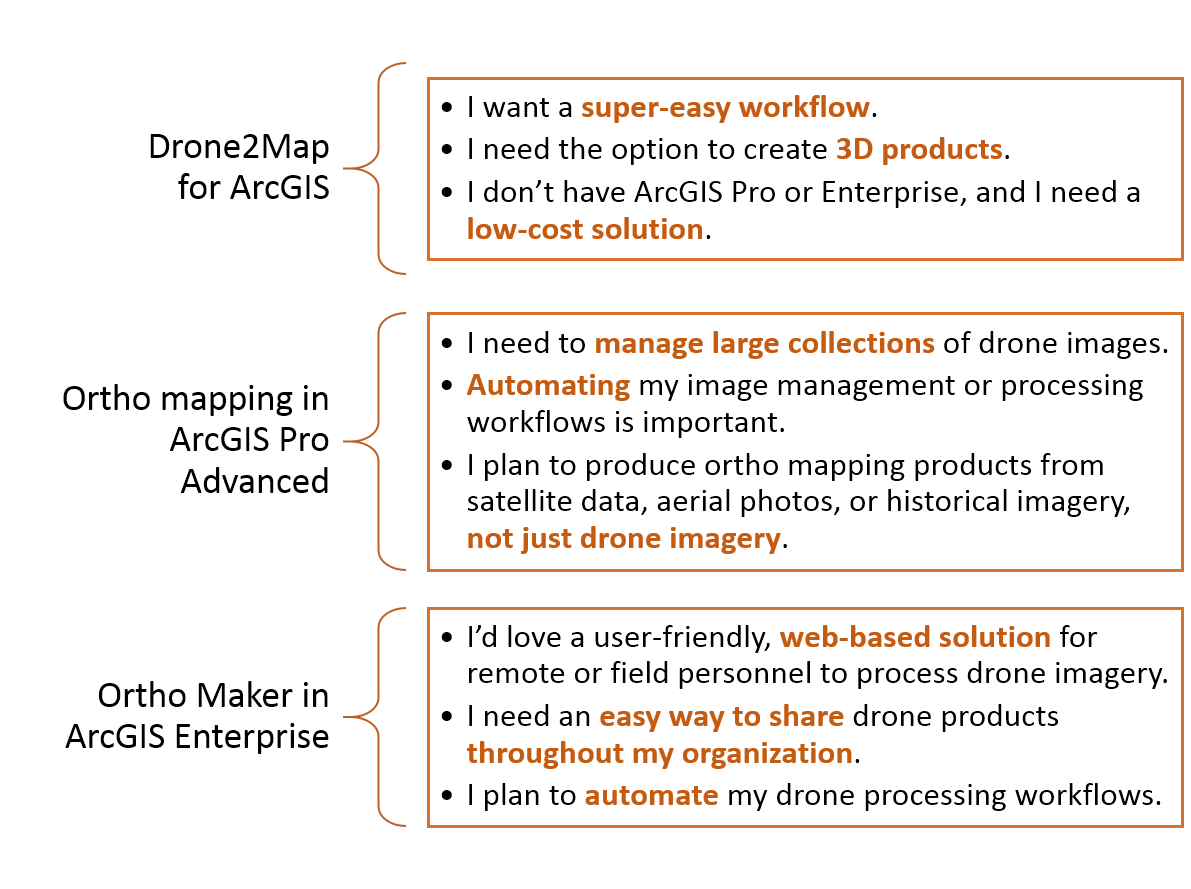

ArcGIS Drone2Map

3 -

ArcGIS Excalibur

1 -

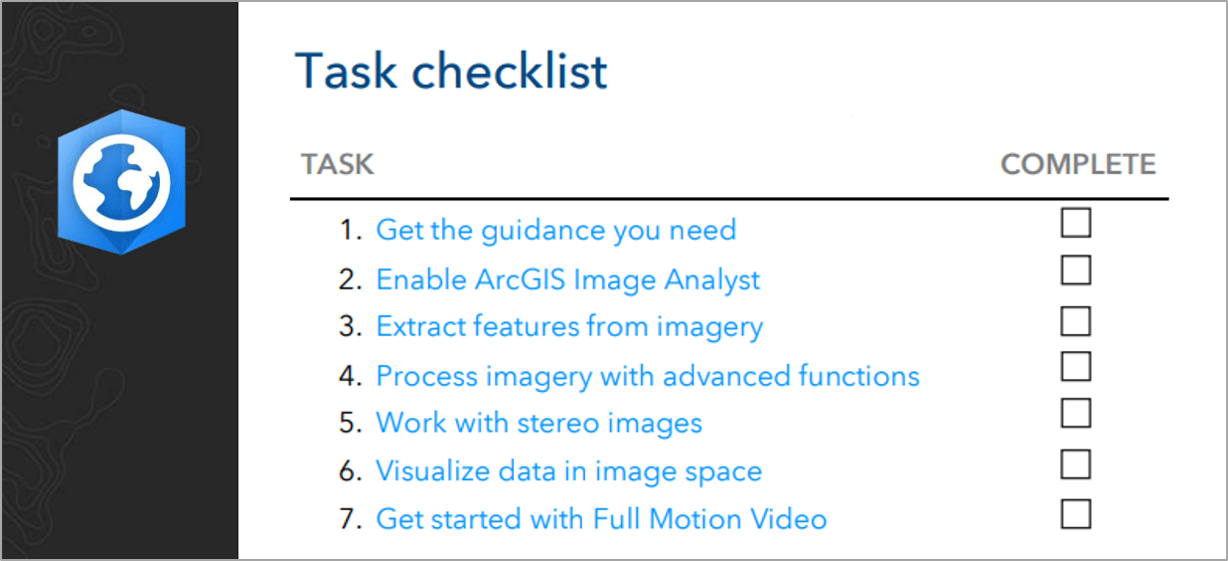

ArcGIS Image Analyst

1 -

ArcGIS Image for ArcGIS Online

1 -

ArcGIS Pro

1 -

Change detection

1 -

Deep learning

4 -

Elevation and lidar

8 -

Image classification

1 -

Image management

2 -

Image Mapping

8 -

Image Services

3 -

Mosaic datasets

4 -

Motion imagery

10 -

Multidimensional

2 -

Oriented Imagery

2 -

Raster functions

4 -

Site Scan for ArcGIS

1 -

Visualization

9

Popular Articles

- Arthur's Feature Extraction from LiDAR, DEMs and Imagery, by ArthurCrawford (Esri Contributor): 24 Kudos, 47 Comments

- New Imagery and Remote Sensing Story Maps, by ReneeBrandt (Occasional Contributor): 13 Kudos, 0 Comments

- Use Sentinel 2 Imagery with ArcGIS, by ReneeBrandt (Occasional Contributor): 11 Kudos, 29 Comments