This blog is a work in progress and will grow over time:

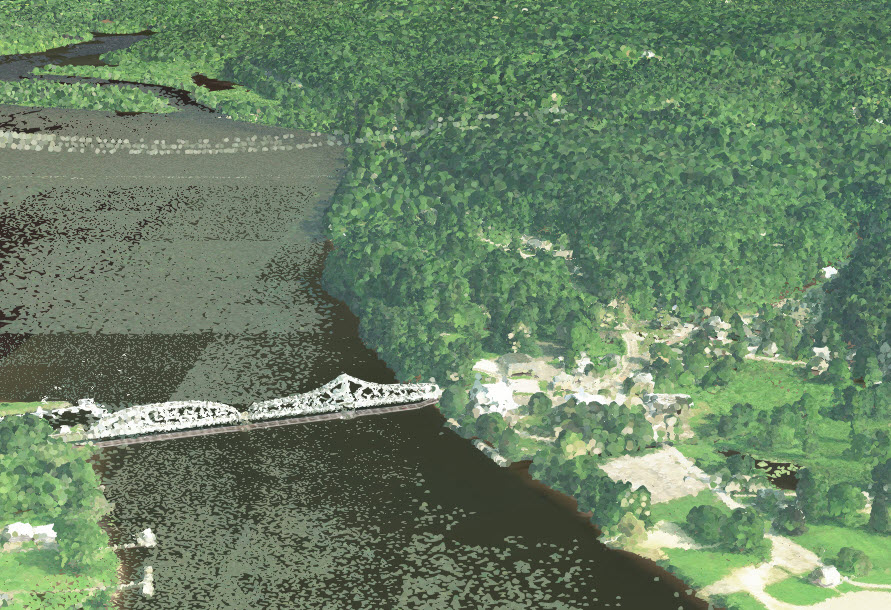

Over the last few years, I have been publishing colorized lidar point clouds as supplements to the 3D cities (like the St. Louis area) that I have created with the 3D Basemap solution and by extracting buildings from lidar. Sean Morrish wrote a wonderful blog on the mechanics of publishing lidar. Lately, I have been looking into colorized lidar point clouds as an effective and relatively inexpensive way to create 3D basemaps. The approach has advantages: most states, counties, and cities already have lidar available, most have high-resolution imagery, and all have the NAIP imagery needed to create these scenes.

Manitowoc County lidar scenes:

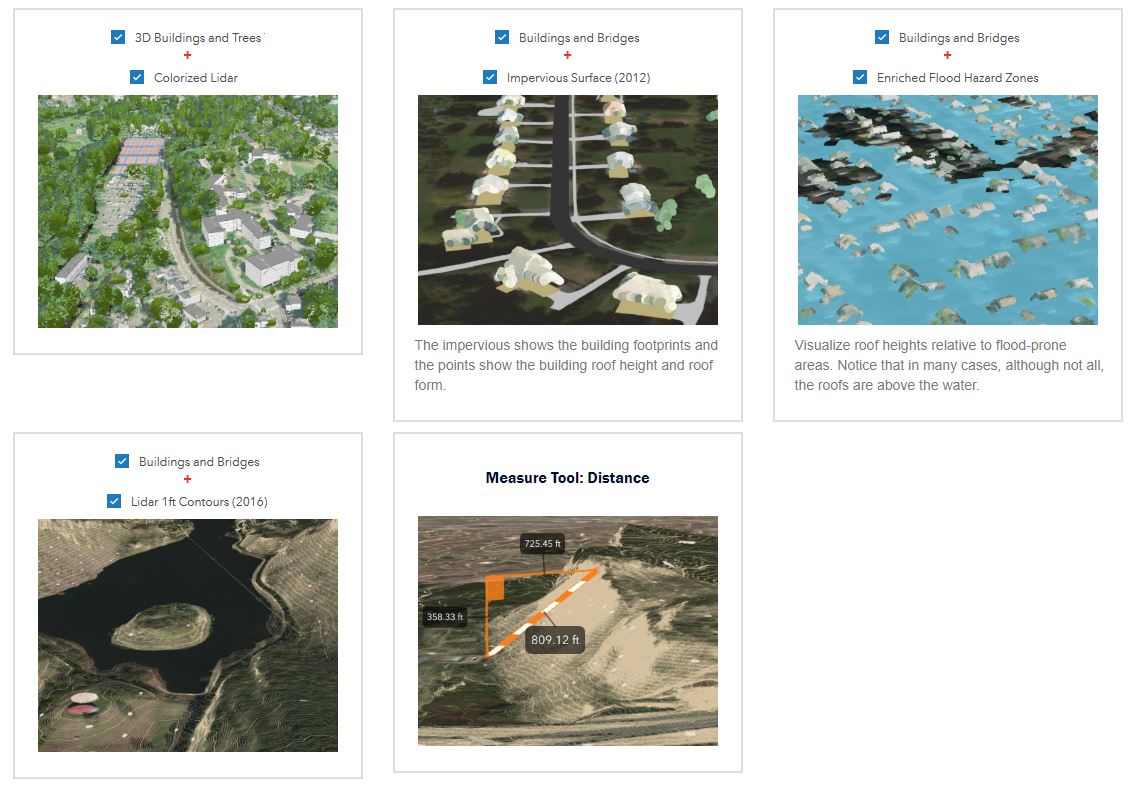

Some counties, cities, and states are doing this already. Manitowoc County, WI, has a 3D LiDAR Point Cloud and is also creating scenes with the data. Manitowoc County also made a great story map showing how their lidar is used as a colorized lidar point cloud here, and I highly recommend taking a look at it.

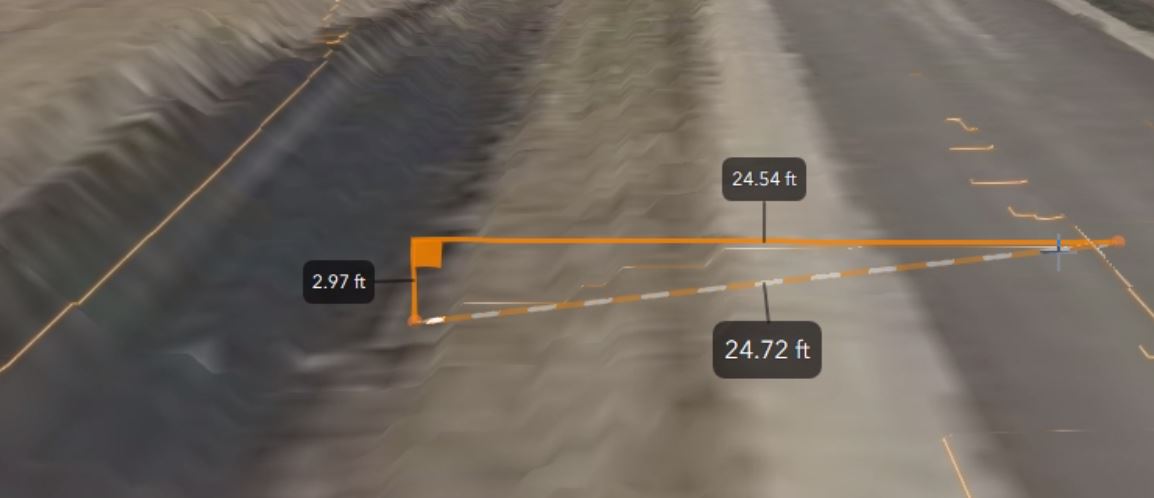

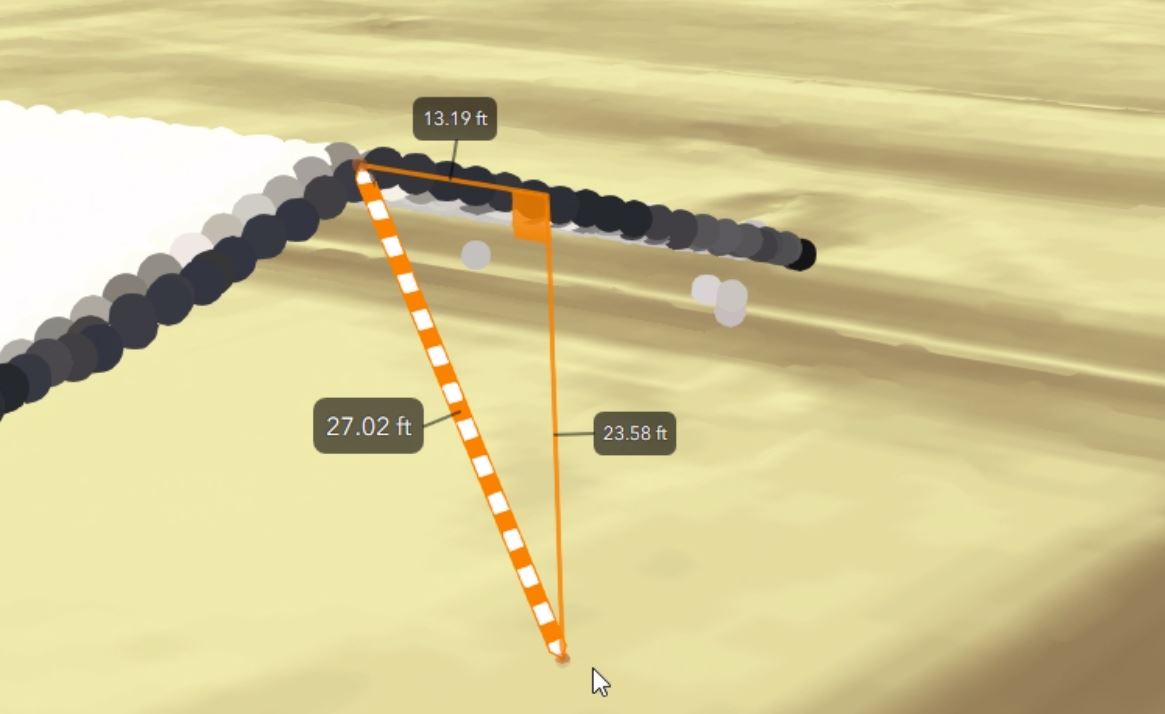

The StoryMap uses a video to show how to capture vertical, horizontal, and direct distances of LiDAR ground surfaces at any location countywide, and how to obtain detailed measurements of small LiDAR surface features, such as the depth of ditches along the road.

It also shows how to measure LiDAR point cloud distances relative to LiDAR ground surfaces, using house roofs as an example to find the height of a building.
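The three distance types in the StoryMap come down to simple geometry between two picked points. The sketch below is an illustration, not the viewer's measurement code: it assumes both points share one projected coordinate system with z in the same linear units as x and y, and the `Point3D` type and function names are hypothetical.

```python
import math
from typing import NamedTuple


class Point3D(NamedTuple):
    x: float
    y: float
    z: float


def horizontal_distance(a: Point3D, b: Point3D) -> float:
    """Map (planimetric) distance, ignoring elevation."""
    return math.hypot(b.x - a.x, b.y - a.y)


def vertical_distance(a: Point3D, b: Point3D) -> float:
    """Signed elevation change from a to b; positive when b is higher."""
    return b.z - a.z


def direct_distance(a: Point3D, b: Point3D) -> float:
    """Straight-line (slope) distance through 3D space."""
    return math.dist(a, b)


# Hypothetical picks: a LiDAR ground point beside a house and a roof point (feet).
ground = Point3D(1000.0, 2000.0, 650.0)
roof = Point3D(1003.0, 2004.0, 674.0)

print(horizontal_distance(ground, roof))  # 5.0
print(vertical_distance(ground, roof))    # 24.0, roughly the building height
print(direct_distance(ground, roof))
```

The vertical distance from a ground point to a roof point is what gives the building height in the roof example.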

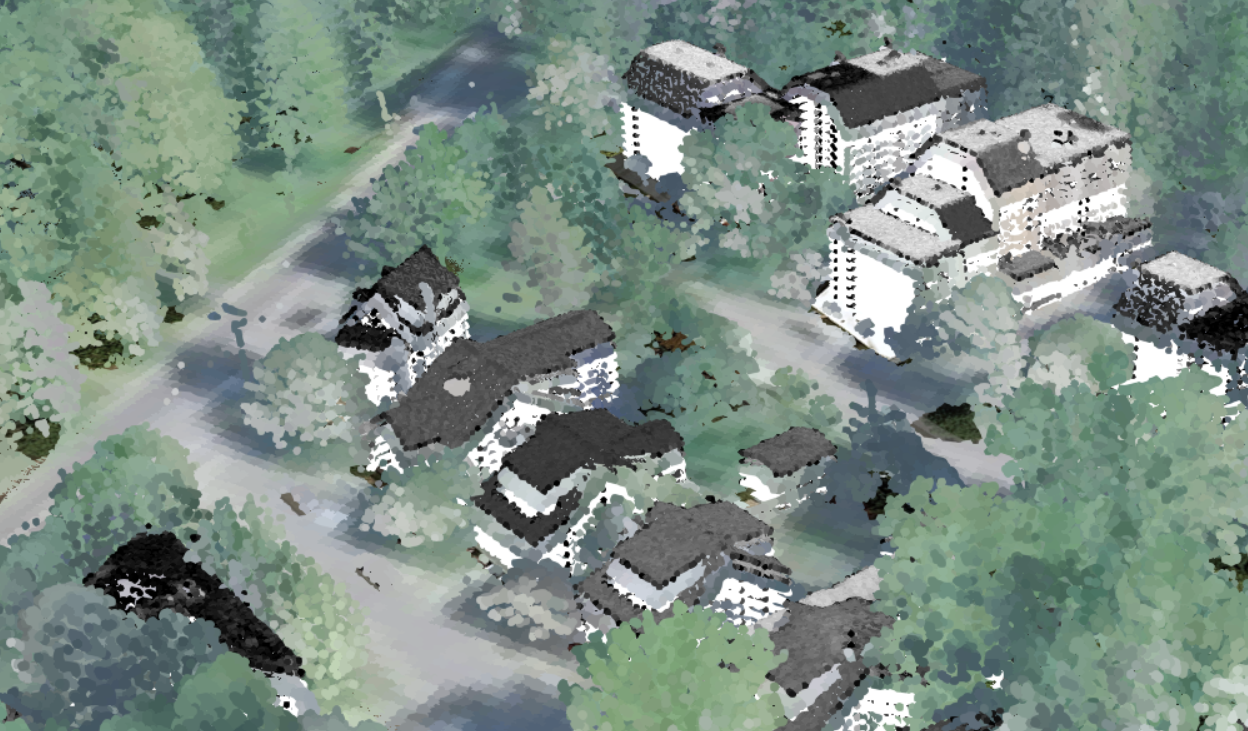

Here's one of Manitowoc County's building scene layers, where they had the creative idea of using the same colorized lidar to fake building sides: the points classified as buildings are shown several times over, each at a slightly lower elevation set by changing the offset in the symbology. Further down in the blog, I show how to do this.

Hats off to Bruce Riesterer of Manitowoc County, who put all of this together before retiring, including coming up with the idea of using the building points multiple times with different colors to show the sides of buildings. He now works in private industry; see his new work at RiestererB_AyresAGO.


State of Connecticut 3D Viewer:

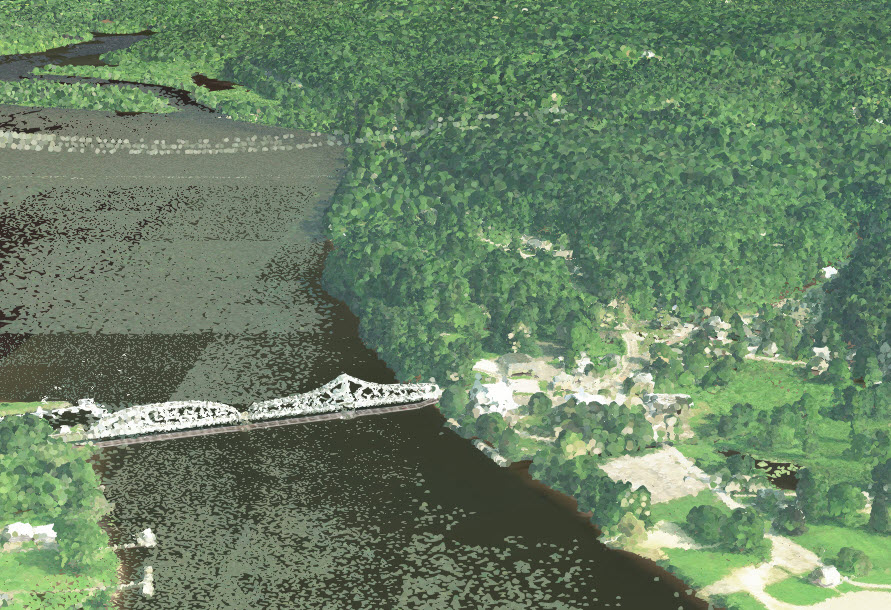

I helped the State of Connecticut CLEAR colorize their lidar using NAIP imagery, making it the first statewide lidar point cloud published to ArcGIS Online. It turned out to be about 650 GB of lidar, split into two scene layer packages. Most of the time went into processing and loading. CLEAR sent me the newest NAIP imagery they had, and with all the data on one computer, I simply let the colorization run. With that layer and other layers CLEAR had online, a web scene was created. A feature class was added with links to their LAZ files, the imagery, the DEM, and other layers. This lets users preview the lidar in a 3D viewer, and even take line and area measurements in 3D, before downloading.

Here are several views of the published lidar point cloud for Connecticut, using different symbology and filters.

Colorized with NAIP:

Class Code modulated with intensity: modulating with intensity makes features such as roads, sidewalks, rooftop details, and trees stand out.

Elevation modulated with intensity:

Color filtered to show buildings:
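Modulating a color by intensity darkens weak returns and keeps strong returns bright, which is why roads, sidewalks, and roof edges stand out in the views above. The exact formula the Esri renderer uses is not given here, so this sketch assumes a simple linear stretch: each RGB channel is scaled by intensity normalized between a chosen minimum and maximum. The function name and the example values are hypothetical.

```python
def modulate(rgb, intensity, i_min, i_max):
    """Darken an (r, g, b) color in proportion to normalized intensity.

    Assumption: a linear stretch between i_min and i_max, clamped to [0, 1].
    This illustrates the idea; it is not Esri's documented renderer formula.
    """
    if i_max <= i_min:
        raise ValueError("i_max must be greater than i_min")
    t = (intensity - i_min) / (i_max - i_min)
    t = max(0.0, min(1.0, t))
    return tuple(round(channel * t) for channel in rgb)


road_gray = (200, 200, 200)                # hypothetical class-code color for roads
asphalt = modulate(road_gray, 40, 0, 255)  # weak return, so a dark color
paint = modulate(road_gray, 230, 0, 255)   # strong return, so a bright color
print(asphalt, paint)
```

Because every point in a class starts from the same class color, intensity becomes the only source of variation, so reflective paint and dark asphalt separate even though both are classified as ground.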

Here are some display examples from their blog:

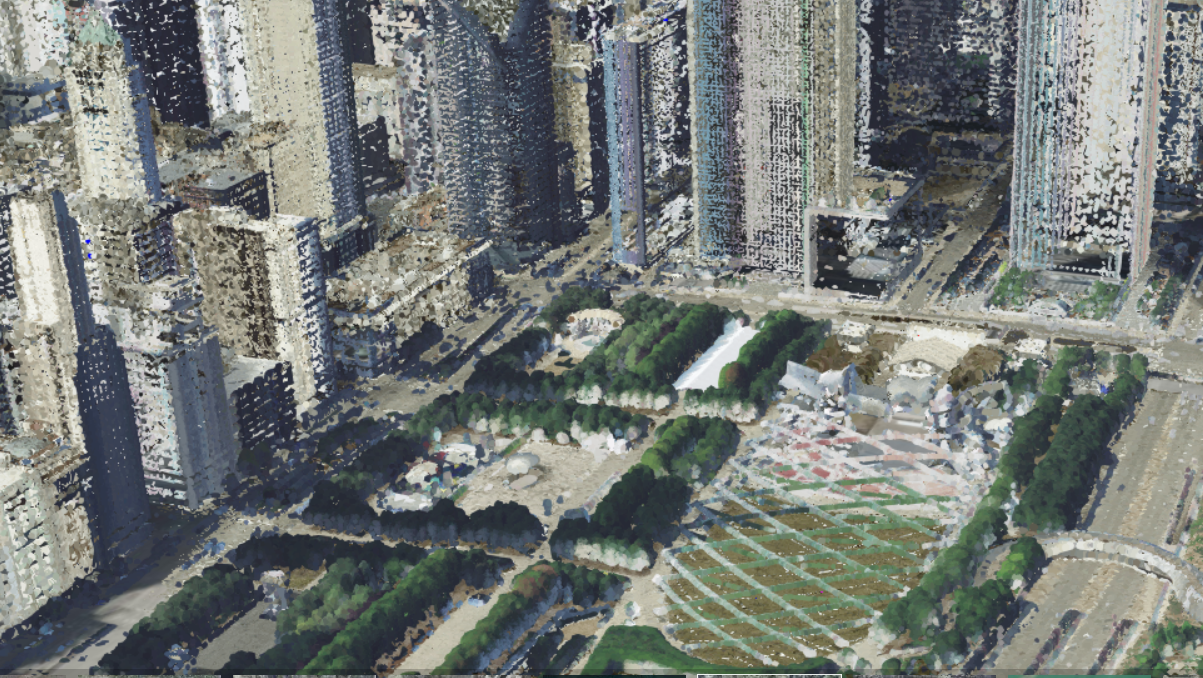

Chicago 3D:

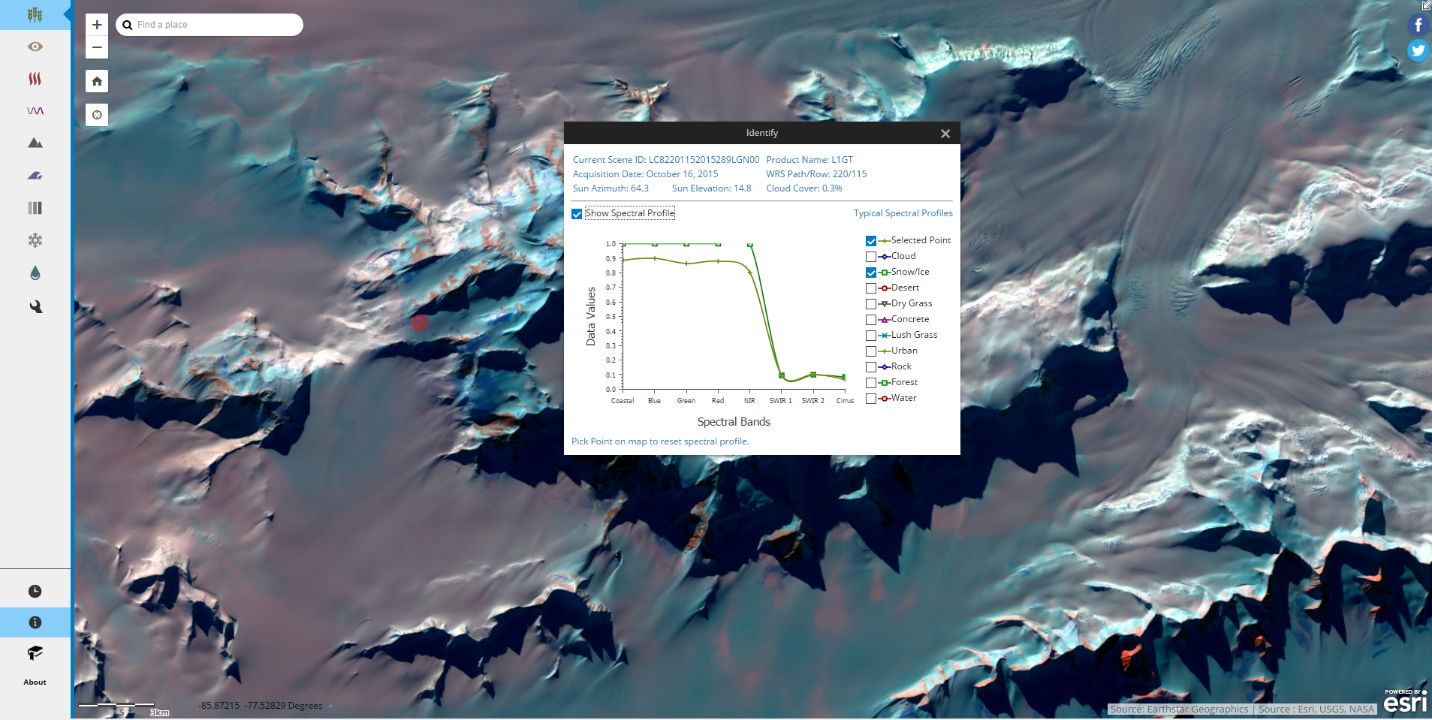

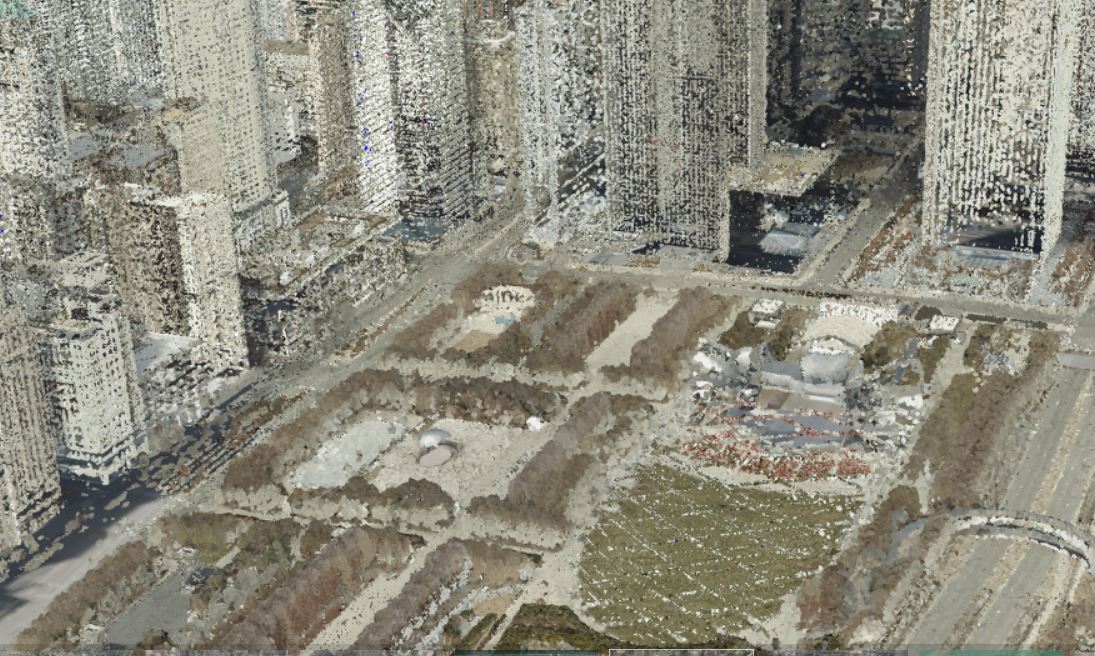

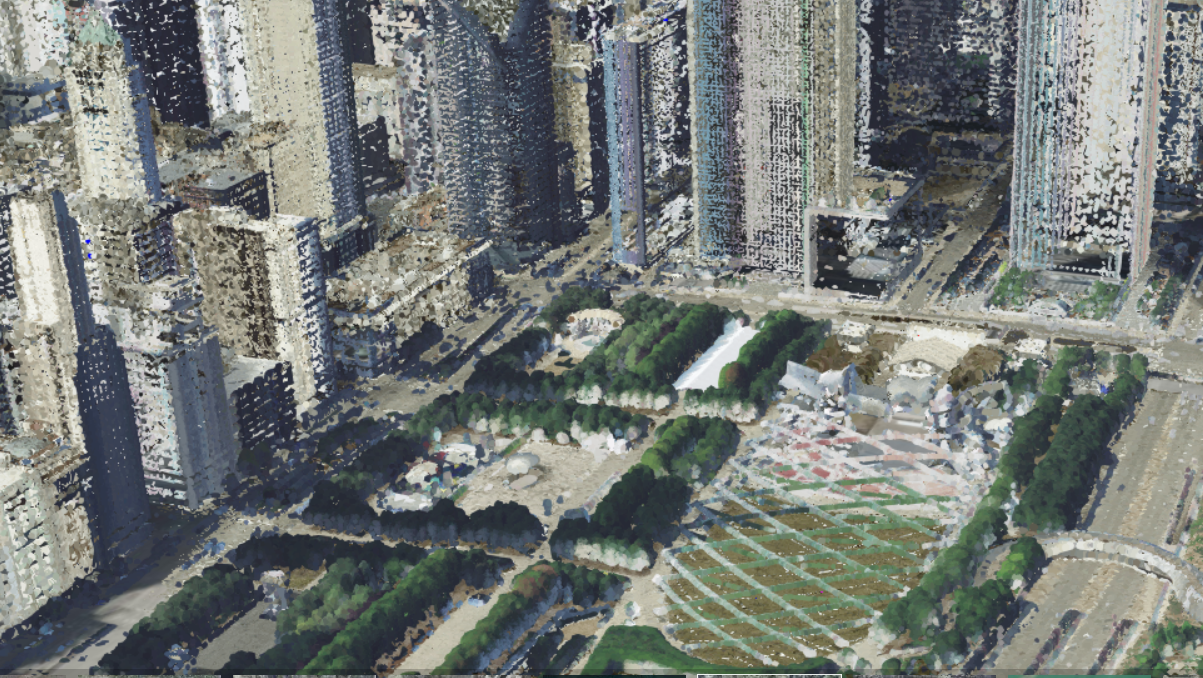

Recently, the Urban team asked me to help with Chicago, and I created basic 3D buildings from the Chicago Data Portal building footprints. I used the 8ppm Geiger lidar to create the DSM, nDSM, and DTM for the 3D Basemap Solution. I then colorized the lidar twice: once with NAIP imagery and again with the high-resolution leaf-off 2018 imagery. Finally, I extracted the NAIP-colorized points classified as vegetation and used them in place of the leaf-off-colorized vegetation in the scene, so the trees show as green while the rooftops keep the high-resolution colors.
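The per-point logic behind the Chicago combination can be sketched as below. In the actual scene this was done by extracting the vegetation points into a separate layer; this sketch instead assumes the two colorized copies are aligned lists of the same points and that vegetation uses ASPRS class codes 3, 4, and 5. The `ColoredPoint` record and `merge_colors` function are hypothetical.

```python
from dataclasses import dataclass

# ASPRS class codes for low, medium, and high vegetation.
VEGETATION_CLASSES = {3, 4, 5}


@dataclass(frozen=True)
class ColoredPoint:
    x: float
    y: float
    z: float
    class_code: int
    rgb: tuple  # (red, green, blue)


def merge_colors(high_res_points, naip_points):
    """Take vegetation colors from NAIP and all other colors from high-res.

    Assumes both lists hold the same points in the same order, each copy
    colorized from a different image source.
    """
    if len(high_res_points) != len(naip_points):
        raise ValueError("point lists must be aligned one-to-one")
    return [
        naip if high.class_code in VEGETATION_CLASSES else high
        for high, naip in zip(high_res_points, naip_points)
    ]


# Hypothetical example: a roof point (class 6) and a tree point (class 5).
high_res = [
    ColoredPoint(0.0, 0.0, 30.0, 6, (150, 140, 130)),
    ColoredPoint(5.0, 0.0, 12.0, 5, (120, 110, 100)),  # leaf-off: gray-brown
]
naip = [
    ColoredPoint(0.0, 0.0, 30.0, 6, (160, 150, 140)),
    ColoredPoint(5.0, 0.0, 12.0, 5, (60, 110, 50)),    # leaf-on: green
]
merged = merge_colors(high_res, naip)
print([p.rgb for p in merged])
```

Misclassified points follow their class code, which is why building sides that the vendor labeled as vegetation pick up NAIP colors.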

Above is the high-resolution leaf-off imagery used to colorize the lidar in the scene. Below is the same area with the lidar colorized with NAIP for the vegetation (in the 8ppm Geiger lidar delivered to the USGS, the vendor classified some building sides as vegetation). You can see how much more identifiable the trees are in the lidar colorized with NAIP.

This could be used as a 3D Basemap. The 3D buildings do not have segmented roofs (divided by height), but the lidar shows the detail of the buildings. Below is the John Hancock Building identified in blue with a basic multipatch polygon in a scene layer with transparency applied.

Here's a view of the Navy Pier with both the NAIP colorized trees and High Resolution colorized lidar on at the same time.

Picking the Imagery to use:

Using the Chicago lidar, I also built a scene to compare the different types of imagery used to colorize the lidar. Together with the video below, it serves as a guide comparing high-resolution leaf-on imagery, high-resolution leaf-off imagery, and 1m NAIP leaf-on imagery. You can see below how leaf-on imagery is great for showing trees. Your imagery does not have to match the year of the lidar exactly, but the closer the better. Making the scene visually appealing is important in 3D cartography with colorized point clouds.

Chicago Lidar Colorized Comparison (High Res Leaf On vs. High Res Leaf Off vs. NAIP Leaf On).

Leaf On High Resolution:

Leaf On NAIP:

Leaf Off High Resolution:

Sometimes colorful fall imagery, taken before the leaves drop, can be great for scenes, but it all depends on what you want to show.

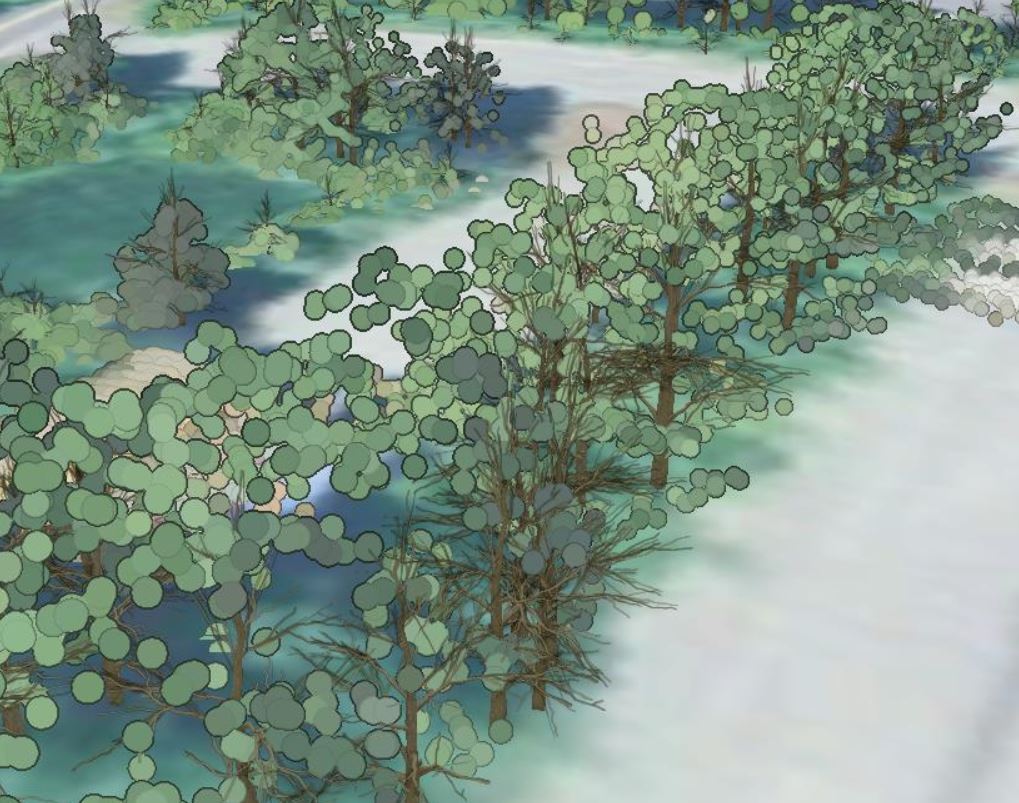

A way to add trees with limbs, using points as leaves:

Here's a test I did using the Dead Tree symbology to represent the trunk and branches, while the leaves come from the colorized lidar. My Trees from Lidar tool or the 3D Basemap Solution tree tools can create the trees from lidar, and the resulting points can then be symbolized with the Dead Tree symbol. This image was made in ArcGIS Pro, not in a web scene.

City of Klaipėda 3D viewer (Lithuania):

The City of Klaipėda in Lithuania has used colorized point clouds of trees with cylinders to represent the trunks. The mesh here is very detailed. Because the view is zoomed in so far, the points appear fairly large.

Here's another view zoomed out:

And another further zoomed out:

Some of the scenes above use NAIP imagery instead of higher-resolution imagery. Why, when higher resolution is available? High-resolution imagery is often somewhat oblique, while NAIP is usually collected from a higher altitude. With the higher altitude, buildings lean less in the imagery, and the spacing of the lidar often does not support the higher-resolution imagery anyway. In these cases NAIP is often preferred, but colorize a couple of LAS files with each to see the differences for yourself.
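A quick way to see why point spacing can limit what high-resolution imagery adds is to compare the ground area each lidar point represents with the pixel size. This sketch uses the common approximation that nominal point spacing is 1 / sqrt(density), which assumes evenly spread points; real collections only approximate that. The 8 points per square meter figure follows the Chicago Geiger lidar mentioned earlier, and the 0.15 m pixel size is a hypothetical high-resolution product.

```python
import math


def nominal_point_spacing(points_per_sq_m: float) -> float:
    """Approximate average point spacing (m), assuming evenly spread points."""
    return 1.0 / math.sqrt(points_per_sq_m)


def pixels_per_point(points_per_sq_m: float, pixel_size_m: float) -> float:
    """Image pixels falling in the ground area represented by one point."""
    area_per_point = 1.0 / points_per_sq_m
    return area_per_point / pixel_size_m ** 2


density = 8.0  # points per square meter (assumed units for "8ppm")
for label, pixel in [("NAIP 0.6 m", 0.6), ("hypothetical 0.15 m", 0.15)]:
    print(f"{label}: spacing {nominal_point_spacing(density):.2f} m, "
          f"{pixels_per_point(density, pixel):.1f} pixels per point")
```

At 8 points per square meter, the spacing is about 0.35 m, so each point takes its color from only one of roughly 5.6 pixels of 0.15 m imagery, and the detail in the other pixels never reaches the point cloud.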

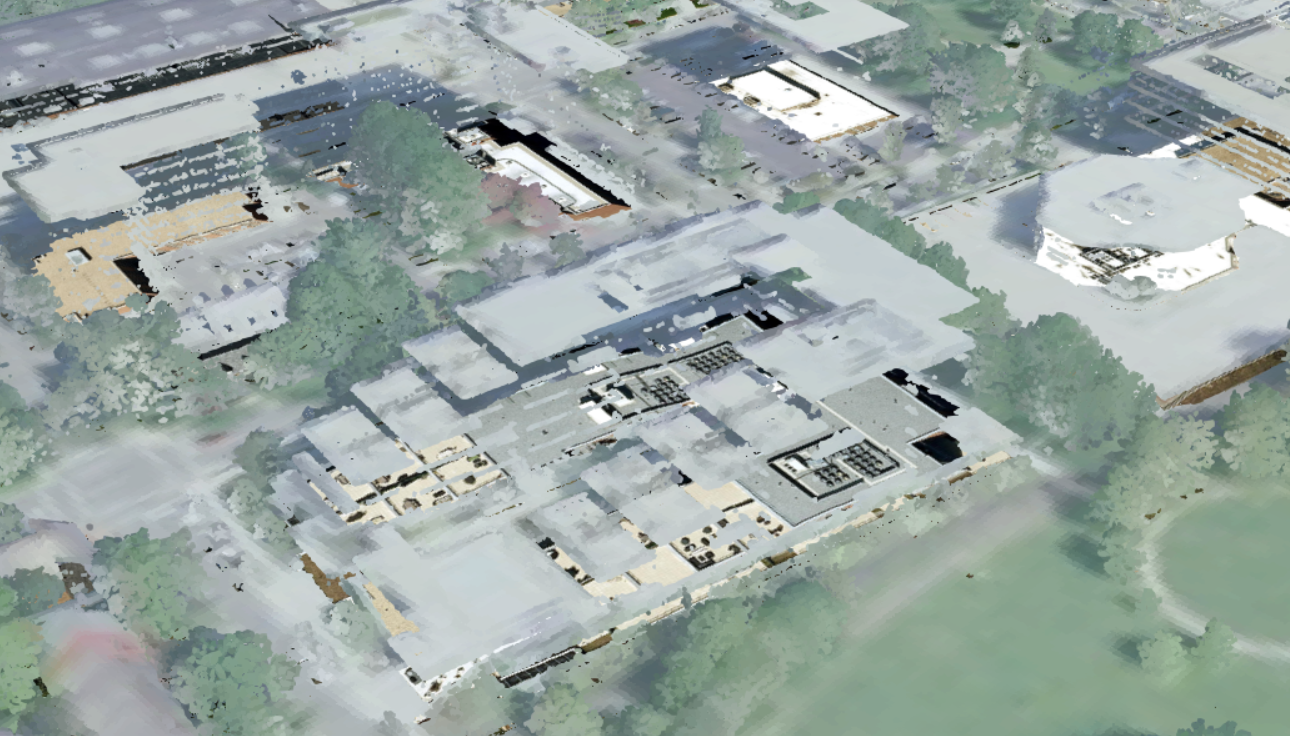

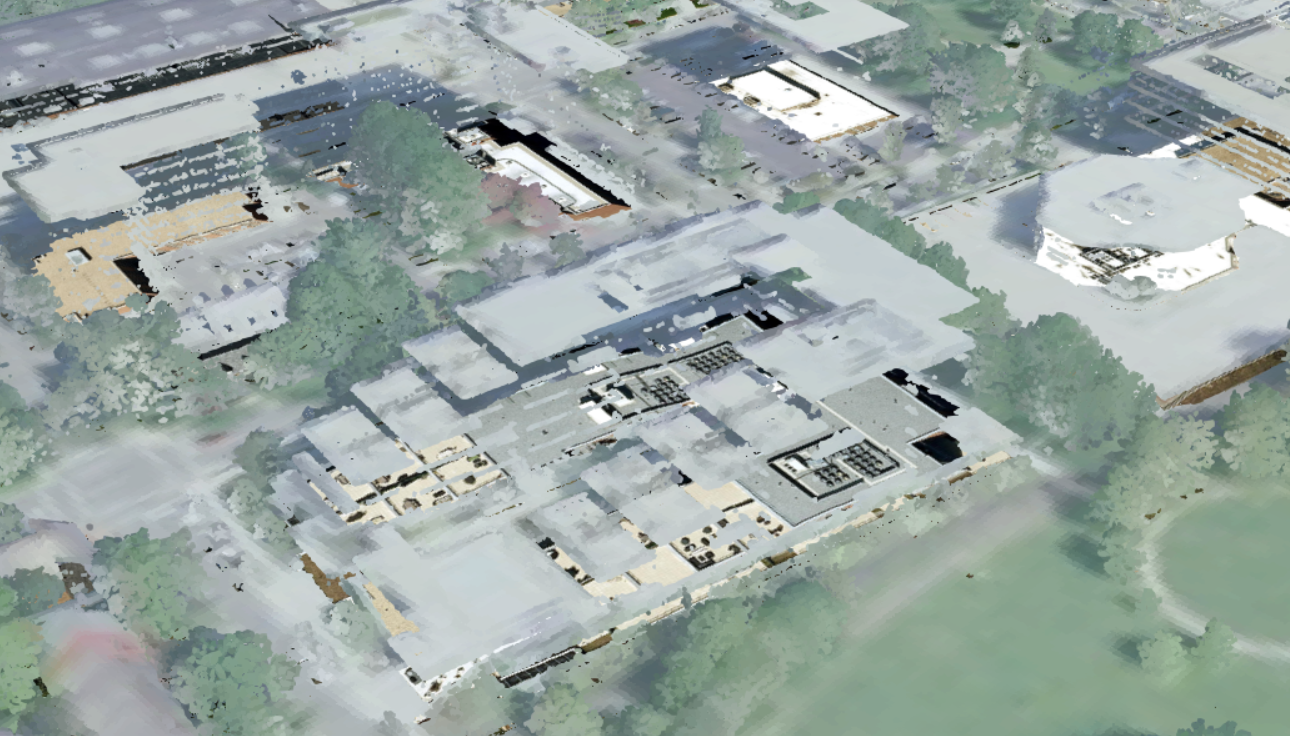

Here's one over Fairfax County, VA, using their published colorized lidar (from their 3D LiDAR Viewer) with intensity to show the roofs. With intensity, you can see the roof details better than with the NAIP imagery.

Scene Viewer

With large buildings, symbolizing the building sides and roofs with intensity really shows off the detail. The sides of the buildings are just representative; they do not show what the actual sides look like.

Scene Viewer

Here it is below using only NAIP-colorized points, without the building sides and without intensity symbology on the roofs.

The missing sides of the buildings make it hard to see where each building actually is, and much of the roof detail is lost. Colorizing with intensity shows more detail because intensity, collected for every point, is a measure of the return strength of the laser pulse that generated the point. It is based, in part, on the reflectivity of the object struck by the laser pulse. The imagery also often does not line up exactly with the lidar, because the two collections may be slightly offset and the orthorectified imagery retains a slight oblique angle.

Colorizing Redlands 2018 lidar:

Here's an example of colorizing the City of Redlands with the 2018 lidar, which I worked on to support one of the groups here at Esri. I highly recommend starting with just one LAS file and running it through the whole process, all the way to publishing, to make sure there are no issues. In the past I have spent hours downloading and colorizing, only to learn that a projection was wrong or something else was not correct (bad imagery, too dark or too light, etc.).

1. Downloaded the 2018 lidar from the USGS.

a. Downloaded the metadata file with the footprints, unzipped it, and added the Tile shapefile to ArcGIS Pro.

b. Got the full path and name of the laz files from the USGS site. Here's an example of the path for a file to download:

ftp://rockyftp.cr.usgs.gov/vdelivery/Datasets/Staged/Elevation/LPC/Projects/USGS_LPC_CA_SoCal_Wildfires_B1_2018_LAS_2019/laz/USGS_LPC_CA_SoCal_Wildfires_B1_2018_w1879n1438_LAS_2019.laz

c. Added a path field to the Tiles and calculated it by inserting each tile's Name into the path copied in b. This is the Python expression I used in Calculate Field:

"ftp://rockyftp.cr.usgs.gov/vdelivery/Datasets/Staged/Elevation/LPC/Projects/USGS_LPC_CA_SoCal_Wildfires_B1_2018_LAS_2019/laz/USGS_LPC_CA_SoCal_Wildfires_B1_2018_" + !Name! + "_LAS_2019.laz"

d. Exported the paths to a text file and used free FTP download software to mass download the USGS laz files for all the tiles that intersected the City of Redlands Limits feature class.

2. Used Convert LAS to convert the data from LAZ to LAS format; LAS is required to colorize the lidar. I got an error because the projection was not supported, so I turned off the rearrange option, and it then converted from LAZ to LAS.

3. Checked the projection in the reports in the downloaded metadata and found it was not supported because it used meters instead of feet. I modified a projection and then used a simple ModelBuilder tool to copy it as a projection file for each LAS file.

4. Evaluated the lidar by creating and populating a LAS dataset: ground, bridge decks, water, overlap, etc. were classified, but buildings and vegetation were not.

5. Used Classify LAS Building with the aggressive option to classify the buildings. Classifying the buildings lets you filter them in the scene later. It is also useful for extracting building footprints, another operation I commonly do.

6. Used Classify LAS by Height to classify the vegetation: (3) Low Vegetation set to 2m, (4) Medium Vegetation set to 5m, and (5) High Vegetation set to 50m. This classified non-ground points from 0 to 2m as Low Vegetation, >2m to 5m as Medium Vegetation, and >5m to 50m as High Vegetation. Classifying the vegetation lets you turn it off in a scene.

7. Added the NAIP imagery service in ArcGIS Pro with the Template set to None, then used the Split Raster tool to download the area I needed based on the Redlands City Limits.

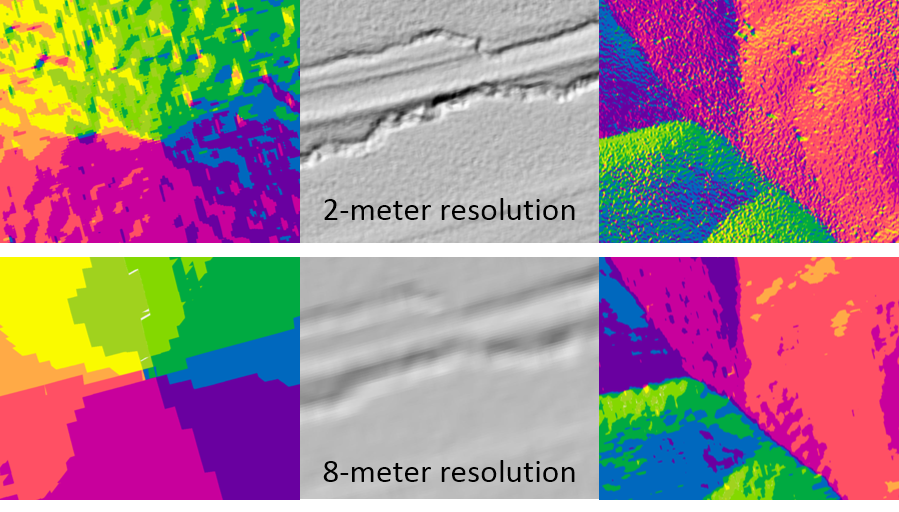

8. Created a mosaic dataset of the NAIP imagery. Applied a function to resample it from 0.6m to 0.2m, then applied a Statistics function with a circle of 2. This takes the coarse 0.6m (or 1m) NAIP imagery and smooths it for better colorization.

9. Colorized the lidar with the Colorize LAS tool, set to assign RGB and Infrared from bands 1, 2, 3, and 4, with an output folder. By default the tool appends "_colorized" to the output names; I usually leave that off and simply name the output folder colorlas. I set the output colorized LAS dataset (.lasd) to be one directory above, with the same name.

10. Added the colorized LAS dataset to Pro and reviewed it. It covered the area, the lidar was colorized properly, and it looked good. Set the symbology to RGB.

11. Ran the Create Point Cloud Scene Layer Package tool on the output lidar to create an .slpk file, then added it to ArcGIS Pro to make sure it was working correctly. Change the defaults as needed.

12. Uploaded the .slpk to my ArcGIS Online account (you can also use the Share Package tool).

13. Published the scene layer and viewed it online.

14. Added the lidar scene layer to the web scene multiple times, using symbology and layer properties to display each copy the way I wanted. You can see an example in the Chicago 3D scene. If you open it and save it as your own (while logged in), you can inspect the scene layers to see how their Symbology and Properties are set up.
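Steps c and d of the download can also be scripted. This sketch builds the same path as the Calculate Field expression in step c and writes one URL per line for a bulk FTP client. It assumes you already have the names of the tiles that intersect your area (for example, exported from the Tiles attribute table); the function names are hypothetical, and selecting tiles and downloading are left to your own tools.

```python
from pathlib import Path

# Everything except the tile name, copied from the USGS path in step b.
PREFIX = (
    "ftp://rockyftp.cr.usgs.gov/vdelivery/Datasets/Staged/Elevation/LPC/"
    "Projects/USGS_LPC_CA_SoCal_Wildfires_B1_2018_LAS_2019/laz/"
    "USGS_LPC_CA_SoCal_Wildfires_B1_2018_"
)
SUFFIX = "_LAS_2019.laz"


def tile_url(tile_name: str) -> str:
    """Same concatenation as the Calculate Field expression in step c."""
    return PREFIX + tile_name + SUFFIX


def write_download_list(tile_names, out_file):
    """Write one URL per line, ready for a bulk FTP download client."""
    urls = [tile_url(name) for name in tile_names]
    Path(out_file).write_text("\n".join(urls) + "\n")
    return urls


print(tile_url("w1879n1438"))
```

Calling `write_download_list(names, "redlands_laz.txt")` with your list of tile names produces the text file described in step d.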
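Step 3 relies on ArcGIS being able to read a LAS file's coordinate system from a .prj file with the same base name in the same folder. Outside ModelBuilder, a plain-Python sketch of the copy looks like this. The template file name and folder are hypothetical, the "PROJCS[...]" text is only a placeholder for the corrected projection, and the `*.las` pattern is case-sensitive on some systems.

```python
import shutil
import tempfile
from pathlib import Path


def copy_prj_to_las(template_prj, las_folder, overwrite=False):
    """Copy one template .prj next to every .las file, matching base names."""
    template = Path(template_prj)
    written = []
    for las in sorted(Path(las_folder).glob("*.las")):
        target = las.with_suffix(".prj")
        if target.exists() and not overwrite:
            continue  # keep any projection file that is already there
        shutil.copyfile(template, target)
        written.append(target)
    return written


# Demo in a throwaway folder with two hypothetical tiles.
demo = Path(tempfile.mkdtemp())
for name in ("tile_a.las", "tile_b.las"):
    (demo / name).write_bytes(b"")
template = demo / "fixed_projection.prj"
template.write_text("PROJCS[...]")  # placeholder, not a real projection
created = copy_prj_to_las(template, demo)
print([p.name for p in created])
```

Skipping existing .prj files by default makes the script safe to rerun after adding more tiles.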
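The height bands from step 6 can be written as a small lookup. This is an illustration of the thresholds only, not the Classify LAS by Height implementation; the tool's own parameters control which source classes are reclassified and how boundaries are handled. Here a point exactly at 2 m or 5 m falls into the lower band, following the ">2m to 5m" wording above, and the function name is hypothetical.

```python
# (upper height limit in meters above ground, ASPRS class code) from step 6.
HEIGHT_BANDS = [(2.0, 3), (5.0, 4), (50.0, 5)]


def vegetation_class(height_above_ground_m, current_class=1):
    """Class code a non-ground point would get from the step 6 settings.

    Assumption: 0-2 m is Low, >2-5 m Medium, >5-50 m High Vegetation;
    points below ground or above 50 m keep their current class.
    """
    if height_above_ground_m < 0:
        return current_class
    for upper_limit, class_code in HEIGHT_BANDS:
        if height_above_ground_m <= upper_limit:
            return class_code
    return current_class


for h in (1.0, 2.0, 3.5, 12.0, 75.0):
    print(h, vegetation_class(h))
```

Keeping the three vegetation classes separate is what lets you turn low, medium, or high vegetation off independently in a scene.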
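Step 8 does not say which resampling method or statistic was used, so this sketch assumes nearest-neighbor upsampling by a factor of 3 (0.6 m to 0.2 m) followed by a mean over a circular neighborhood with a radius of 2 cells. It works on one band stored as nested lists to show how the smoothing softens the blocky NAIP pixels; all names are hypothetical and this is not the raster function code.

```python
def upsample_nearest(grid, factor):
    """Nearest-neighbor resample: each cell becomes factor x factor cells."""
    out = []
    for row in grid:
        expanded = [v for v in row for _ in range(factor)]
        out.extend(list(expanded) for _ in range(factor))
    return out


def circular_offsets(radius):
    """(row, col) offsets whose centers lie within `radius` cells."""
    r = int(radius)
    return [(dy, dx) for dy in range(-r, r + 1) for dx in range(-r, r + 1)
            if dx * dx + dy * dy <= radius * radius]


def circular_mean(grid, radius=2):
    """Mean over a circular neighborhood; edge cells use the cells available."""
    rows, cols = len(grid), len(grid[0])
    offsets = circular_offsets(radius)
    out = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            vals = [grid[r + dy][c + dx] for dy, dx in offsets
                    if 0 <= r + dy < rows and 0 <= c + dx < cols]
            out[r][c] = sum(vals) / len(vals)
    return out


naip_band = [[10, 200], [10, 200]]  # tiny hypothetical 0.6 m band with a hard edge
factor = round(0.6 / 0.2)           # 3
smoothed = circular_mean(upsample_nearest(naip_band, factor), radius=2)
for row in smoothed:
    print([round(v) for v in row])
```

After smoothing, the hard edge between the two original pixels becomes a gradient, so neighboring lidar points no longer jump abruptly from one color to the next.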
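Conceptually, step 9 looks up the image pixel under each point and copies bands 1 to 3 into RGB and band 4 into near-infrared. The sketch below assumes a north-up raster described by its upper-left corner and cell size, uses a nearest-pixel lookup, and stores bands as nested lists. It illustrates the band mapping only and is not how the Colorize LAS tool works internally; the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class NorthUpRaster:
    origin_x: float  # x of the upper-left corner
    origin_y: float  # y of the upper-left corner
    cell_size: float
    bands: list      # bands[b][row][col], in band order 1, 2, 3, 4

    def pixel(self, x, y):
        """All band values for the pixel containing (x, y), or None if outside."""
        col = int((x - self.origin_x) // self.cell_size)
        row = int((self.origin_y - y) // self.cell_size)
        if not (0 <= row < len(self.bands[0]) and 0 <= col < len(self.bands[0][0])):
            return None
        return [band[row][col] for band in self.bands]


def colorize(points, raster, rgb_bands=(1, 2, 3), nir_band=4):
    """Attach (r, g, b) and nir to each (x, y, z) point; None when off-image."""
    out = []
    for x, y, z in points:
        values = raster.pixel(x, y)
        if values is None:
            out.append((x, y, z, None, None))
            continue
        rgb = tuple(values[b - 1] for b in rgb_bands)
        out.append((x, y, z, rgb, values[nir_band - 1]))
    return out


image = NorthUpRaster(
    origin_x=0.0, origin_y=2.0, cell_size=1.0,
    bands=[
        [[10, 20], [30, 40]],  # band 1 (red)
        [[11, 21], [31, 41]],  # band 2 (green)
        [[12, 22], [32, 42]],  # band 3 (blue)
        [[90, 91], [92, 93]],  # band 4 (near-infrared)
    ],
)
colored = colorize([(0.5, 1.5, 100.0), (1.5, 0.5, 101.0), (5.0, 5.0, 99.0)], image)
for point in colored:
    print(point)
```

Points outside the imagery get no color, which is one reason to confirm that the downloaded imagery fully covers the lidar before running the whole area.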

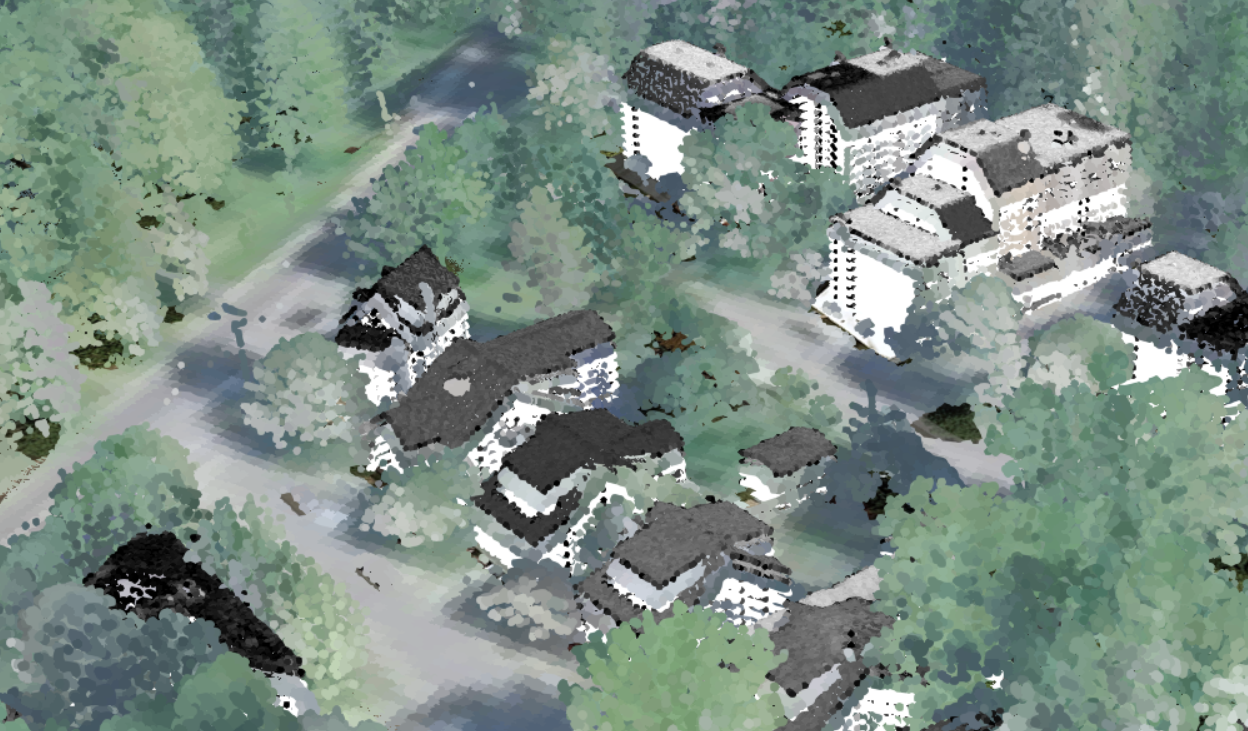

Kentucky lidar test:

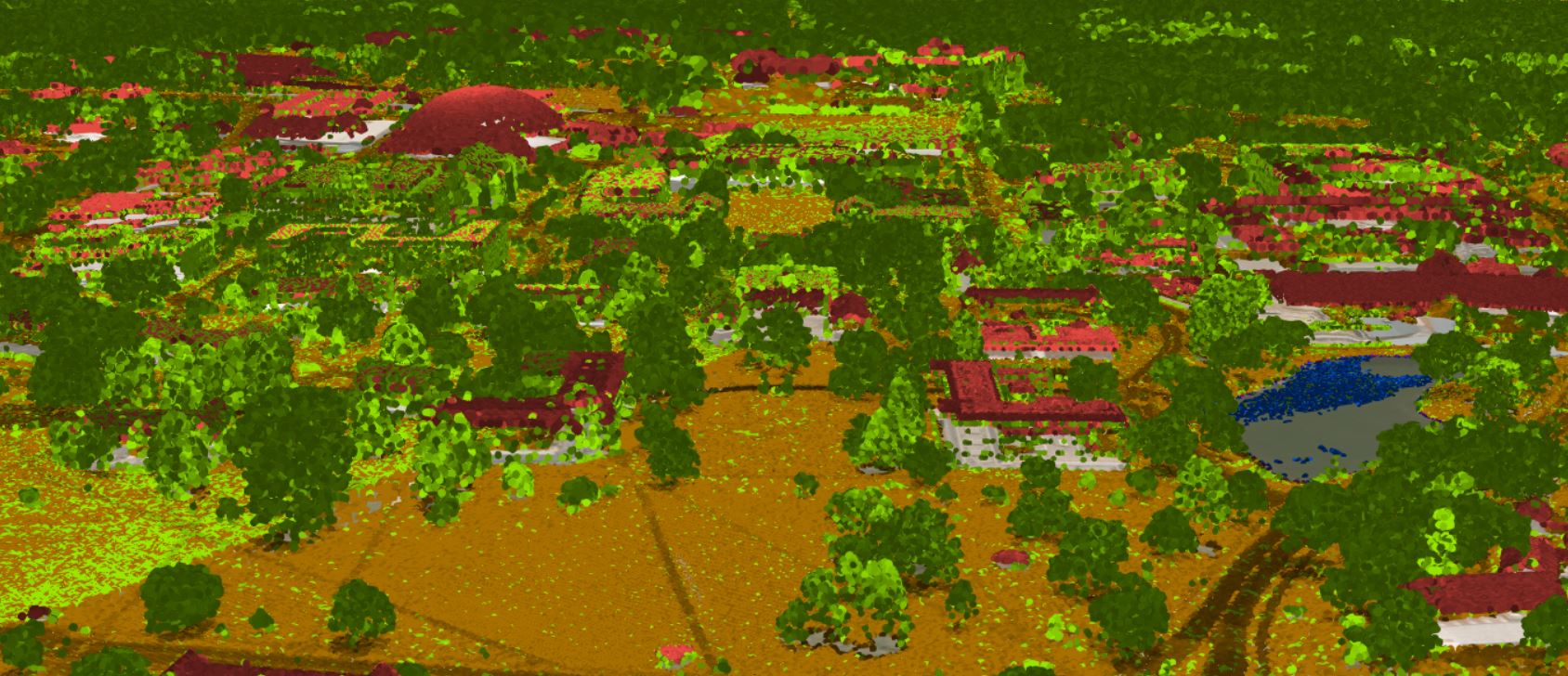

Below is some work I helped the State of Kentucky with. The result is similar in some ways to the work of the neo-Impressionists Georges Seurat and Paul Signac, who pioneered a painting technique dubbed Pointillism.

Kentucky lidar colorized:

Georges Seurat's Seascape at Port-en-Bessin, Normandy, below:

Adding Tree Trunks:

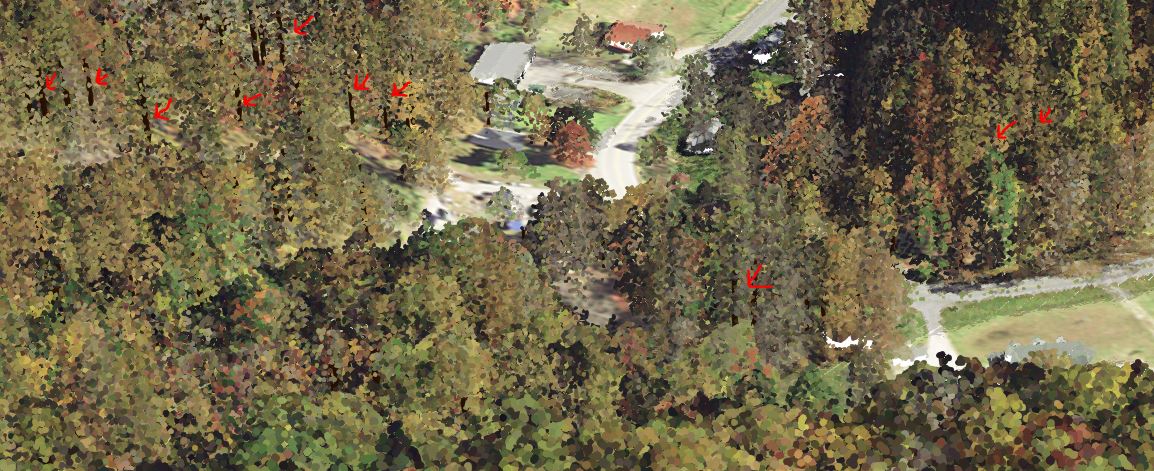

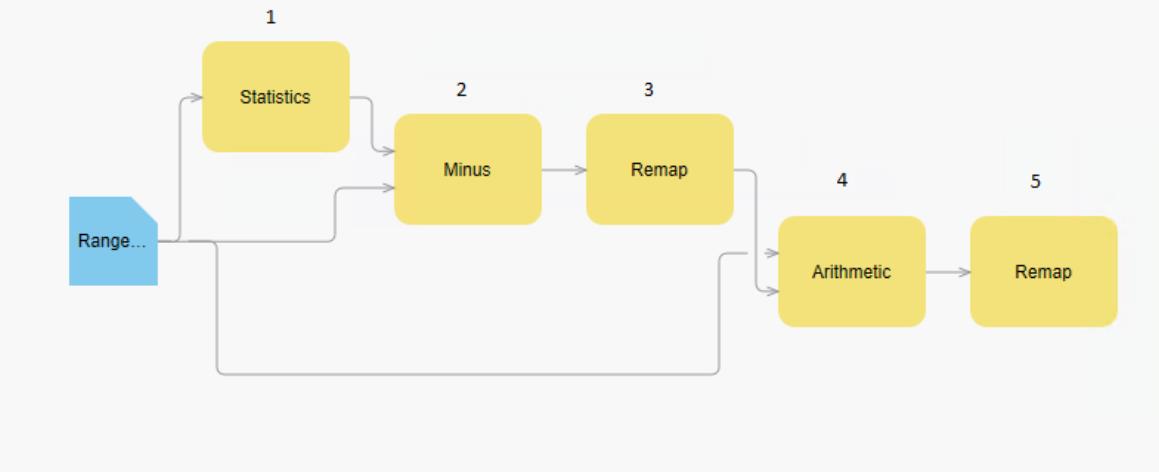

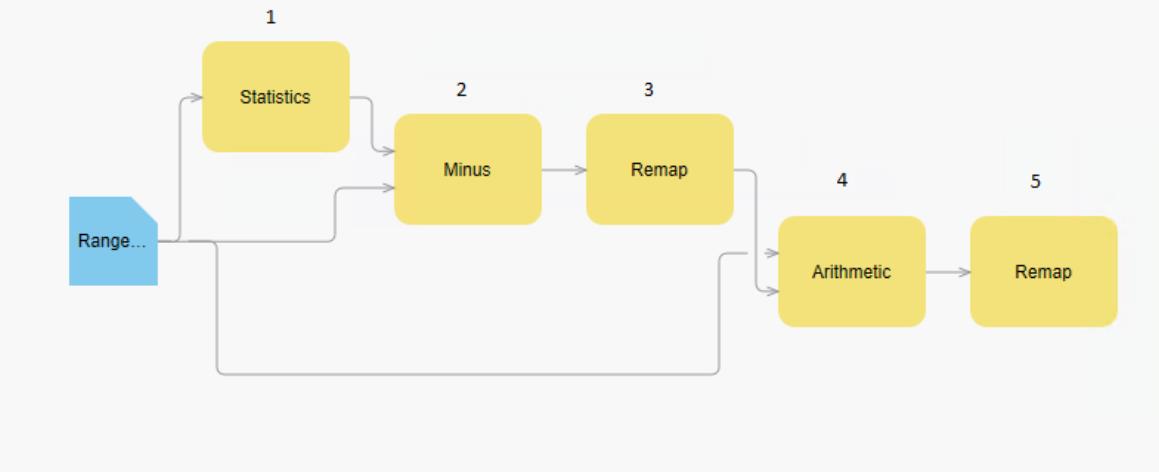

Adding tree trunks in the background makes point cloud scenes look a little more real and helps viewers recognize the trees. I recently worked on a park area in Kentucky, supporting the Division of Geographic Information (DGI), to try to create tree trunks like the ones in the City of Klaipėda scene in Lithuania, and then to add building sides as a test. I did not want the tree trunks to overwhelm the scene, just to be a background item that makes the scenery more realistic. In the image and the linked scene, the red arrows point to the tree trunks.

First, I created a Range of elevation values raster using a 5 ft sampling value with LAS Point Statistics As Raster. Then I applied raster functions to the range raster to create a raster showing the high points, as shown below:

(1) Statistics: 5x5 mean.

(2) Minus: Statistics minus Range, Max Of, Intersection Of.

(3) Remap: Minimum -0.1 to Maximum 0.1, Output 0, change missing values to NoData.

(4) Plus: Range plus the Remap output, Max Of, Intersection Of.

(5) Remap: 0 to 26 set to NoData.

I then used Raster To Point to create a point layer from the raster. I added a Height field calculated from gridcode, and a TreeTrunkRadius field calculated as (!Height!/60) + .5, which gave me a width to use later for the 3D polygons. I placed the points in the 3D Layers, set the Type to Base height with the Height field in US Feet, and used the Expression Builder expression $feature.Height * 0.66 for the height, because I wanted the tree trunks to go up only 2/3 of the height of the trees. I used 3D Layer To Feature Class to create a line feature class, then used it as input to Buffer 3D, with the Field option for distance and TreeTrunkRadius as the input field. I then used Create 3D Object Scene Layer Package to output TreeTrunks.slpk, added the item to my ArcGIS Online account, and published it.
Once online, I played with the colors to get them brown. This process could also have used the Trees from Lidar tool, but the NAIP imagery was from the fall and did not have the NDVI reflectivity that process needs.
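To make the raster function chain and the two field calculations concrete, here is a sketch of the same cell arithmetic on a small grid stored as nested lists, with `None` standing in for NoData. Assumptions, all labeled in the comments: the 5x5 mean uses whatever cells exist near the edges, step (5) keeps only heights above 26 ft, and the Max Of / Intersection Of settings (cell size and extent options) do not apply to a single in-memory grid. The function names are hypothetical.

```python
NODATA = None  # stand-in for raster NoData cells


def mean_5x5(grid):
    """(1) Statistics: 5x5 mean, using whatever cells exist near the edges."""
    rows, cols = len(grid), len(grid[0])
    out = [[NODATA] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] is NODATA:
                continue
            window = [
                grid[i][j]
                for i in range(max(0, r - 2), min(rows, r + 3))
                for j in range(max(0, c - 2), min(cols, c + 3))
                if grid[i][j] is not NODATA
            ]
            out[r][c] = sum(window) / len(window)
    return out


def trunk_candidates(range_grid, tolerance=0.1, min_height_ft=26.0):
    """Apply the cell arithmetic of steps (1)-(5) to a range-of-elevation grid."""
    mean = mean_5x5(range_grid)
    out = []
    for r, row in enumerate(range_grid):
        out_row = []
        for c, value in enumerate(row):
            if value is NODATA:
                out_row.append(NODATA)
                continue
            diff = mean[r][c] - value                # (2) Minus
            if not -tolerance <= diff <= tolerance:  # (3) Remap: outside -> NoData
                out_row.append(NODATA)
                continue
            height = value + 0.0                     # (4) Plus: Range + remapped 0
            # (5) Remap 0 to 26 -> NoData (assumed: keep only heights above 26 ft)
            out_row.append(height if height > min_height_ft else NODATA)
        out.append(out_row)
    return out


def trunk_dimensions(tree_height_ft):
    """Field calculations from the text: TreeTrunkRadius and the 0.66 height."""
    radius_ft = tree_height_ft / 60 + 0.5
    trunk_height_ft = tree_height_ft * 0.66
    return radius_ft, trunk_height_ft


canopy = [[30.0] * 5 for _ in range(5)]  # hypothetical uniform 30 ft range values
print(trunk_candidates(canopy)[2][2])
print(trunk_dimensions(60.0))
```

A 60 ft tree, for example, gets a 1.5 ft trunk radius and a trunk about 39.6 ft tall, which keeps the trunks thin and below the canopy so they stay in the background.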

Adding Building sides:

To fill in the building sides, I classified the buildings using Classify LAS Building. Then I used Extract LAS with a filter on the LAS dataset to extract only the points with the building class code. I published this and added it 9 times to a group in the scene. I offset the first three building layers by +0.5, 0, and -0.5 meters and left them colorized with the imagery; this makes the roof look slightly more solid, as there were gaps. Next, I set the remaining 6 layers to intensity color with offsets of -1m, -2m, -3m, -4m, -5m, and -6m. This created the walls of the buildings. For the -3m layer, I changed the color range to draw a slightly darker line across the walls. I grouped all the layers together so they would act as one layer. You can also filter for buildings and colorize with Class Code, then set the class code color to whatever color you wish for the building sides. If you also modulate with intensity, that changes the appearance too, often shading the sides of buildings or, at times, producing what looks like windows.
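Nine copies of the same building layer are easy to mix up, so it can help to write the plan down as data before configuring the scene. This is a checklist-style sketch of the offsets and color settings described above; the `BuildingCopy` record and labels are hypothetical, and nothing here talks to ArcGIS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildingCopy:
    label: str
    offset_m: float
    color_by: str             # "RGB" (imagery colors) or "Intensity"
    darker_band: bool = False  # the slightly darker line across the walls


def building_side_plan():
    """Nine copies of the extracted building points, as described above."""
    plan = [BuildingCopy(f"roof {o:+.1f} m", o, "RGB") for o in (0.5, 0.0, -0.5)]
    for depth in range(1, 7):
        offset = -float(depth)
        plan.append(
            BuildingCopy(f"wall {offset:+.0f} m", offset, "Intensity",
                         darker_band=(depth == 3))
        )
    return plan


for layer in building_side_plan():
    print(layer)
```

Writing the plan out also makes it easy to try other variations, such as more wall copies at smaller increments for taller buildings.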

Standard look before adding building sides and thickness to the roof:

With the illusion of walls added and roof thickened (Cumberland, KY):

Here's another view showing the building sides:

The building sides are an illusion: the colors do not match the real sides of the buildings, and the variations in lidar intensity do not show true differences in the walls, such as the perceived windows above. Like most illusions or representations, it tricks the eye into seeing the sides of buildings, but it does not work from every angle, as with this building, which had a low number of points:

Overall, I think the representative sides help you identify what is a building and what is not. The colors of the building sides will not match reality, nor will they show true windows or doors. Showing those is usually a very costly and time-consuming process that comes after generating 3D models of the buildings. You could still generate the 3D buildings with the Local Government 3D Basemap Solution for ArcGIS Pro, make them semi-transparent, and use them for selection and analysis without any coloring. You could also apply rule packages (.rpk) to the buildings to represent the 3D models more accurately, but this would again be a representation without very detailed data.

How to get lidar easily for a large area: Here's a video showing how to easily download high volumes of lidar tiles from the USGS for a project area, including how to get a list of the LAZ files to download. Once downloaded, use Convert LAS to convert the files from LAZ to LAS format. LAS format is needed to run classification, colorization, and manual editing of the point cloud.

Covering the basics of Colorized Lidar Point Clouds, creating scene layer packages and visualizing:

Here's a video (26 minutes long) where I go through the process of colorizing lidar and the things I look for, with timestamps below for jumping to specific topics:

Link: https://esri-imagery.s3.us-east-1.amazonaws.com/events/UC2020/videos/ColorizingLidar.mp4

0:30: Talking about the lidar input and some of the lidar classification.

1:05: Adding imagery services and reviewing the imagery alignment for colorizing the lidar.

1:49: Adding the NAIP imagery service.

2:20: High-resolution imagery services.

3:35: Colorizing trees with leaf-off vs. leaf-on imagery.

4:55: Comparing imagery: with leaf-off imagery, sidewalks color the trees.

6:00: Using Split Raster to download imagery from a service.

8:25: Mosaic To New Raster on the downloaded split images.

10:50: Checking the downloaded mosaic.

11:40: Colorize LAS tool.

13:52: Talking about lidar: how big it is, how long it takes to colorize, download times from services using Split Raster, and the size of the scene layer package relative to the uncolorized lidar.

15:40: Adding the colorized lidar and seeing it in 3D.

16:40: Raising the Display Limit so more points can be seen in the 3D viewer.

17:00: Adding imagery and reviewing the colorized lidar.

17:56: Increasing the point size to better see the roofs, trees, and sides of buildings in the colorized lidar; talking about how the color does not match for the sides of buildings.

19:00: Looking at trees and shadows.

19:25: Looking at roofs and down into the streets.

20:10: Running Create Point Cloud Scene Layer Package.

21:30: Adding the scene layer package to Pro and seeing the increased speed from tiling and formatting; reviewing it this way is faster.

22:30: Looking at different symbology settings: Elevation, Class, Intensity, Return (Geiger lidar does not have returns).

24:35: Looking at a single tree.

24:50: Share Package tool.

26:35: Showing the web scene.

There is also this video from a couple of years ago that covers the topic in a different way.

DEMs:

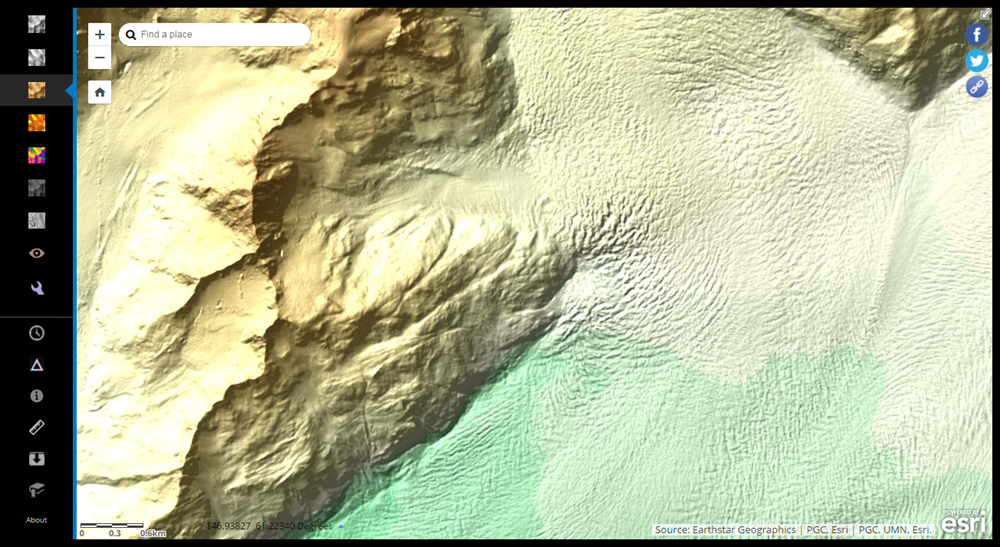

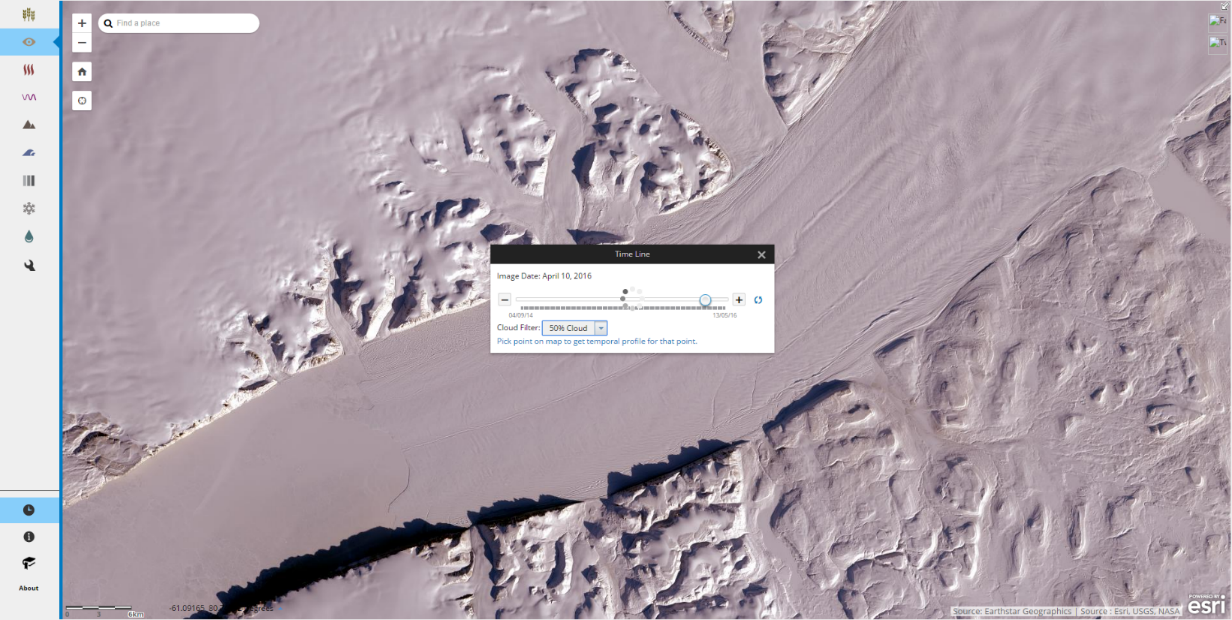

You often need to load a higher-resolution DTM (DEM) as the ground. In this link, you can turn off DTM1m_tif, which is the lidar ground DTM, to compare it with the World Terrain Service. In the US, NED is usually good enough that your colorized LAS will sit very close to the ground, but sometimes your colorized buildings will sink into the ground, and the lidar points near the ground will be under it or float above it. You can add your LAS files in ArcGIS Pro and check whether the ground points fall below or above the terrain. If the difference is too large, you need to publish your DTM. The 3D Basemap solution (currently called the Local Government 3D Basemap solution) can guide you through this process and might, in the future, guide you through the colorization and publication of lidar. Here's a blog that goes through how to do it, along with the help. The Local Government 3D Basemaps solution also has tasks that walk you through publishing an elevation layer.
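One way to judge whether the difference is "too large" is to sample the terrain at a set of lidar ground points and summarize the differences. This sketch assumes you already have matched pairs of (lidar ground z, terrain z) in the same vertical units and datum; the 1.0 tolerance is a hypothetical threshold you would pick for your scene, not an Esri guideline.

```python
import statistics


def ground_offset_report(pairs, tolerance=1.0):
    """Summarize (lidar ground z - terrain z) for matched sample locations.

    `tolerance` is a hypothetical threshold in the same units as z.
    """
    if not pairs:
        raise ValueError("need at least one sample")
    diffs = [lidar_z - terrain_z for lidar_z, terrain_z in pairs]
    max_abs = max(abs(d) for d in diffs)
    return {
        "mean": statistics.fmean(diffs),
        "max_abs": max_abs,
        "below_terrain": sum(d < 0 for d in diffs),  # lidar hidden under terrain
        "above_terrain": sum(d > 0 for d in diffs),  # lidar floating above it
        "publish_dtm": max_abs > tolerance,
    }


# Hypothetical samples: (lidar ground elevation, World Terrain elevation), meters.
samples = [(100.2, 100.0), (99.5, 100.0), (101.5, 100.0)]
print(ground_offset_report(samples))
```

A mean near zero with a large maximum points to local problems, while a consistent offset in one direction suggests a datum or units mismatch worth checking before publishing a DTM.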

Important tools for building colorized lidar packages:

Edit LAS file classification codes - Every lidar point can have a classification code assigned to it that defines the type of object that has reflected the laser pulse. Lidar points can be classified into a number of categories, including bare earth or ground, top of canopy, and water. The different classes are defined using numeric integer codes in the LAS files.

Convert LAS - Converts LAS files between different compression methods, file versions, and point record formats.

Extract LAS - Filters, clips, and reprojects the collection of lidar data referenced by a LAS dataset.

Colorize LAS - Applies colors and near-infrared values from orthographic imagery to LAS points.

Classify LAS Building - Classifies building rooftops and sides in LAS data.

Classify Ground - Classifies ground points in aerial lidar data.

Classify LAS by Height - Reclassifies lidar points based on their height from the ground surface. Primarily used for classifying vegetation.

Classify LAS Noise - Classifies LAS points with anomalous spatial characteristics as noise.

Classify LAS Overlap - Classifies LAS points from overlapping scans of aerial lidar surveys.

Change LAS Class Codes - Reassigns the classification codes and flags of LAS files.

Create Point Cloud Scene Layer Package - Creates a point cloud scene layer package (.slpk file) from LAS, zLAS, LAZ, or LAS dataset input.

Share Package - Shares a package by uploading to ArcGIS Online or ArcGIS Enterprise.

Share a web elevation layer
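The numeric class codes mentioned under Edit LAS file classification codes follow the ASPRS standard, and filtering by them is what isolates buildings or vegetation in the workflows above. This sketch lists the standard codes for LAS 1.4 point formats 6 to 10 and shows a class summary and a class filter on plain tuples; the helper names and sample points are hypothetical, and the filter only mimics the idea of a LAS dataset class filter.

```python
from collections import Counter

# ASPRS standard class codes for LAS 1.4 point formats 6-10.
# Codes 8 and 12 are reserved there (formats 0-5 use them for model
# key-points and overlap points; formats 6-10 use an overlap flag instead).
ASPRS_CLASSES = {
    0: "Created, never classified",
    1: "Unclassified",
    2: "Ground",
    3: "Low Vegetation",
    4: "Medium Vegetation",
    5: "High Vegetation",
    6: "Building",
    7: "Low Point (noise)",
    9: "Water",
    10: "Rail",
    11: "Road Surface",
    13: "Wire - Guard (Shield)",
    14: "Wire - Conductor (Phase)",
    15: "Transmission Tower",
    16: "Wire-Structure Connector (Insulator)",
    17: "Bridge Deck",
    18: "High Noise",
}


def class_name(code):
    return ASPRS_CLASSES.get(code, f"Reserved or user-defined ({code})")


def class_summary(codes):
    """Count points per class name."""
    return {class_name(code): n for code, n in Counter(codes).items()}


def keep_classes(points, wanted):
    """Keep (x, y, z, class_code) tuples whose class is in `wanted`."""
    return [p for p in points if p[3] in wanted]


points = [
    (0.0, 0.0, 100.0, 2),   # ground
    (1.0, 0.0, 118.0, 6),   # building roof
    (2.0, 0.0, 115.0, 5),   # tree
    (3.0, 0.0, 99.0, 12),   # overlap point in an older point format
]
print(class_summary(p[3] for p in points))
print(keep_classes(points, {6}))
```

Because the filter works on class codes, it is only as good as the classification, which is why running Classify LAS Building and Classify LAS by Height first matters.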

Here are some colorized lidar scenes to look at:

Helsinki point cloud (Finland) Scene Viewer

Barnegat Bay (New Jersey, US) Scene Viewer

City of Denver Point Cloud (Colorado, US) Scene Viewer

City of Redlands (California, US) Scene Viewer Has a comparison of high resolution vs. NAIP colorized lidar.

Kentucky Lidar Test (Cumberland, Kentucky, US) Scene Viewer Fall imagery applied to trees, building sides added, tree trunks added.

Lewisville, TX, US (near Dallas) Scene Viewer

I'll be adding more to this blog in the future; stay tuned.

Arthur Crawford - Living Atlas/Content Product Engineer
