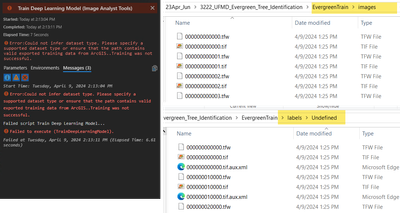

ERROR WHEN TRAINING DEEP MODEL

Hello everyone...

I'm new to ArcGIS Pro and am trying to create a deep learning model. I'm stuck at the training step and am getting this error:

Traceback (most recent call last):

File "c:\program files\arcgis\pro\Resources\ArcToolbox\toolboxes\Image Analyst Tools.tbx\TrainDeepLearningModel.tool\tool.script.execute.py", line 308, in execute

data_bunch = prepare_data(in_folders, working_dir=out_folder, **prepare_data_kwargs)

File "C:\Program Files\ArcGIS\Pro\bin\Python\envs\arcgispro-py3-clone-Athu_DL\Lib\site-packages\arcgis\learn\_data.py", line 1440, in prepare_data

raise Exception(

Exception: Could not infer dataset type. Please specify a supported dataset type or ensure that the path contains valid esri files

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "c:\program files\arcgis\pro\Resources\ArcToolbox\toolboxes\Image Analyst Tools.tbx\TrainDeepLearningModel.tool\tool.script.execute.py", line 390, in <module>

execute()

File "c:\program files\arcgis\pro\Resources\ArcToolbox\toolboxes\Image Analyst Tools.tbx\TrainDeepLearningModel.tool\tool.script.execute.py", line 384, in execute

del data_bunch

UnboundLocalError: local variable 'data_bunch' referenced before assignment

Failed script (null)...

Failed to execute (TrainDeepLearningModel).
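
The second traceback looks like a side effect of the first rather than a separate problem: `prepare_data` raised before `data_bunch` was ever assigned, so the later `del data_bunch` had nothing to delete. A minimal sketch of that pattern follows; the `prepare_data` and `execute` functions here are simplified stand-ins, not the actual tool script.

```python
def prepare_data(path):
    # Stand-in for the failing call; the message is shortened from the traceback.
    raise Exception("Could not infer dataset type.")


def execute(path):
    try:
        # The call raises, so the name data_bunch is never bound.
        data_bunch = prepare_data(path)
        return data_bunch
    except Exception:
        # Cleanup attempts to delete a local that was never assigned,
        # which raises UnboundLocalError while the first error is being handled.
        del data_bunch
        raise
```

In other words, the error to fix is the "Could not infer dataset type" exception; the `UnboundLocalError` should disappear once the input folder is recognized.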

My failure message:

Traceback (most recent call last):

File "c:\program files\arcgis\pro\Resources\ArcToolbox\toolboxes\Image Analyst Tools.tbx\TrainDeepLearningModel.tool\tool.script.execute.py", line 308, in execute

data_bunch = prepare_data(in_folders, working_dir=out_folder, **prepare_data_kwargs)

File "C:\Program Files\ArcGIS\Pro\bin\Python\envs\arcgispro-py3\lib\site-packages\arcgis\learn\_data.py", line 1440, in prepare_data

raise Exception(

Exception: Could not infer dataset type. Please specify a supported dataset type or ensure that the path contains valid esri files

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "c:\program files\arcgis\pro\Resources\ArcToolbox\toolboxes\Image Analyst Tools.tbx\TrainDeepLearningModel.tool\tool.script.execute.py", line 390, in <module>

execute()

File "c:\program files\arcgis\pro\Resources\ArcToolbox\toolboxes\Image Analyst Tools.tbx\TrainDeepLearningModel.tool\tool.script.execute.py", line 384, in execute

del data_bunch

UnboundLocalError: local variable 'data_bunch' referenced before assignment

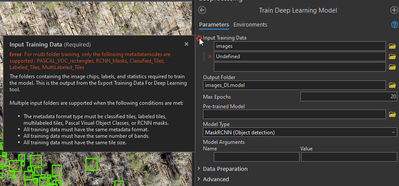

I'm having the same issue in Pro v3.2.2. There are tiffs in my \images folder and xml files in my \undefined\labels folder. I've tried running the tool with/without the labels folder. Without the labels folder, I get this error, making me think it needs the labels folder.

However, when I try to include my labels folder, I get the error below even though the exported training files used RCNN masks for the metadata format.

@PavanYadav I appreciate any assistance you can offer.

Hello @GBacon, sorry that I missed your message.

In the Train Deep Learning Model tool, you need to input the entire folder that is created by the Export Training Data For Deep Learning tool, not just the images or labels subfolder inside it.

Product Engineer at Esri

AI for Imagery
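
The advice above can be sketched as a small path check that runs before the tool is called. This is only an illustration: `find_export_root` is a hypothetical helper, and it assumes the export folder can be recognized by its `images` subfolder (the thread mentions one, but the exact folder contents are not listed here).

```python
from pathlib import Path


def find_export_root(path):
    """Return the nearest folder at or above `path` that contains an
    `images` subfolder (assumed here to mark the export root)."""
    start = Path(path).resolve()
    for candidate in (start, *start.parents):
        if (candidate / "images").is_dir():
            return candidate
    raise FileNotFoundError(f"No folder with an 'images' subfolder found above {start}")


# Hypothetical usage: pointing at the images or labels subfolder
# resolves to the parent export folder, which is what the tool expects.
# training_folder = find_export_root(r"C:\data\export\images")
```

The returned folder, rather than one of its subfolders, is what would be passed as the input training data.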

Thanks, Pavan. I'm all set now and I have a trained model.

@PavanYadav, can you suggest what I can do to get a more accurate model? I have been developing U-Net models since last week, and my F1, precision, and recall scores are very low. See the attached.

Hello @AthuleSali

I'm not sure whether you have already considered the following, but here are some general ways to improve a deep learning model:

1. Make the most of your training data by setting a high maximum number of epochs (e.g. 100 or 500) and using the STOP_TRAINING (early stopping) option. With this option, which is the default, model training stops when the model is no longer improving, regardless of the max_epochs value specified. For example, if you enter 500 epochs but the tool finds that the loss is no longer decreasing after the 75th epoch, it will stop there. If you are still not satisfied with the quality at that point, consider the following.

2. Improve the model by adding more training data, in terms of both variety and number of samples.

3. Consider changing the training/validation ratio. By default the tool uses 10% of the data for validation; you could raise this to 20% if the model is overfitting the training data.

4. Make sure each class has enough samples.

The Train Deep Learning Model tool also has additional functionality, such as data augmentation (random rotation and zooming), to create variety. There are other hyperparameters you can experiment with, such as the learning rate, but I would try the above first.
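
To make the early-stopping behaviour in point 1 concrete, here is a generic sketch of the idea. It is not the tool's actual implementation: the function name and the `patience` parameter (how many non-improving epochs to tolerate) are assumptions made for illustration, since the thread does not say what criterion the tool uses.

```python
def epochs_run_with_early_stopping(val_losses, max_epochs, patience=5):
    """Return how many epochs run before training stops.

    val_losses: validation loss per epoch, in order.
    patience:   hypothetical number of consecutive epochs without
                improvement that triggers the stop.
    """
    best = float("inf")
    stale = 0
    epochs_run = 0
    for epoch, loss in enumerate(val_losses[:max_epochs], start=1):
        epochs_run = epoch
        if loss < best:
            best = loss   # new best loss: reset the counter
            stale = 0
        else:
            stale += 1    # no improvement this epoch
            if stale >= patience:
                break     # stop early, well before max_epochs
    return epochs_run


# Loss improves for 70 epochs, then plateaus; 500 epochs were requested.
losses = [1.0 / e for e in range(1, 71)] + [1.0] * 430
stopped_at = epochs_run_with_early_stopping(losses, max_epochs=500, patience=5)
```

With these made-up numbers, training ends a few epochs after the plateau begins, which is why a generous epoch limit costs little when early stopping is on.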

Getting these errors left and right. It seems the deep learning tools in Pro weren't refined and debugged enough before being released. It's disappointing.

Thank you. I will try.

Have a nice day.
