
Why won't my Feature Service extract?


I have successfully published a Feature Service from a feature class containing several thousand records. The source feature class in the database is approximately 60 MB.

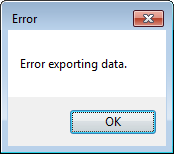

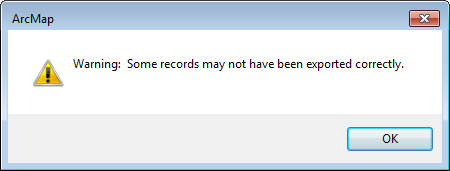

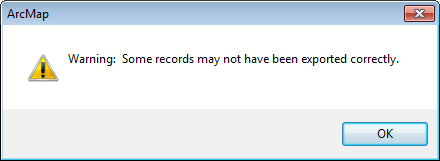

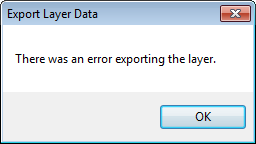

When I attempt to extract the entire Feature Service, either from ArcMap (right-click > export to feature class) or with the Analysis tools in Map Viewer, I get the following errors after approximately 60 seconds:

No output is generated.

Investigating the ArcGIS Server Manager logs reveals the following:

| Level | Message | Source |
|---|---|---|
| SEVERE | Error performing query operation Error handling service request :java.lang.OutOfMemoryError: Java heap space | Rest |
| SEVERE | Instance of service 'Folder/NameOfService.MapServer' failed to process a request | Folder/NameOfService.MapServer |

I suspected the issue was related to the heap size of the SOCs, since ~60 MB might be a heavy load to request from my server. Further investigation led me to a Technical Support article that suggests increasing the maximum SOC heap size from the default 64 MB. However, even after increasing the maximum SOC heap size, the same issues persist.

I am able to extract a smaller selection of features from the Feature Service, just not the entirety. What might be causing this problem I am experiencing?
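Since smaller selections extract successfully, one possible workaround is to request the layer in pages instead of in a single query. Below is a minimal Python sketch using only the standard library. It assumes the layer supports pagination through the standard `resultOffset`/`resultRecordCount` query parameters and that its ObjectID field is named `OBJECTID` (both assumptions; check the layer's REST page). The helper names are hypothetical.

```python
import json
import urllib.parse
import urllib.request


def plan_pages(total_records, page_size):
    """Split total_records into (offset, count) windows of at most page_size."""
    pages = []
    for offset in range(0, total_records, page_size):
        pages.append((offset, min(page_size, total_records - offset)))
    return pages


def build_query_url(layer_url, offset, count):
    """Build one paged /query request for a feature layer."""
    params = {
        "where": "1=1",
        "outFields": "*",
        "returnGeometry": "true",
        # Assumption: the ObjectID field is named OBJECTID; a stable sort keeps pages consistent
        "orderByFields": "OBJECTID",
        "resultOffset": offset,
        "resultRecordCount": count,
        "f": "json",
    }
    return layer_url + "/query?" + urllib.parse.urlencode(params)


def fetch_all(layer_url, total_records, page_size=1000):
    """Request every page in turn and collect the returned features."""
    features = []
    for offset, count in plan_pages(total_records, page_size):
        with urllib.request.urlopen(build_query_url(layer_url, offset, count)) as resp:
            body = json.load(resp)
        # Query failures are typically reported as JSON with an "error" key
        if "error" in body:
            raise RuntimeError(body["error"])
        features.extend(body.get("features", []))
    return features
```

For example, `fetch_all("https://example.com/arcgis/rest/services/Folder/NameOfService/FeatureServer/0", 25000)` (hypothetical URL) would issue 25 requests of 1,000 features each, so no single response has to hold the whole dataset.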


Justin,

How many records does the source feature class have?

How many records is the server returning upon invoking the extract function?
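Both counts can be checked without a successful extract, because a layer's `/query` endpoint can return just a feature count via the standard `returnCountOnly` parameter. Below is a minimal sketch; the function names are hypothetical and the layer URL passed in is a placeholder for the service in question.

```python
import json
import urllib.parse
import urllib.request


def count_url(layer_url, where="1=1"):
    # returnCountOnly asks the server for a count instead of the features themselves
    params = {"where": where, "returnCountOnly": "true", "f": "json"}
    return layer_url + "/query?" + urllib.parse.urlencode(params)


def parse_count(body):
    # Query failures are typically reported as JSON with an "error" key
    if "error" in body:
        raise RuntimeError(body["error"].get("message", "query failed"))
    return body["count"]


def get_count(layer_url, where="1=1"):
    with urllib.request.urlopen(count_url(layer_url, where)) as resp:
        return parse_count(json.load(resp))
```

Because a count-only request does not serialize any geometry, it should succeed even when a full query runs out of memory.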

~Shan


The source feature class has approximately 25,000 records.

Because no output is generated, I'm not able to determine how many records the server is returning.

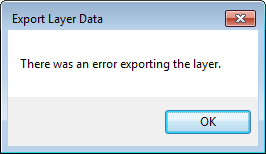

Today I even got a slightly different error message in ArcMap:

The message received in the Portal Map Viewer when attempting Analysis > Manage Data > Extract Data (extract entire contents to a FGDB) is:

"Error. Accessing URL http://....../FeatureServer/0/query failed with error ERROR: code: 500, Error performing query operation, Internal server error.. ExtractData failed."


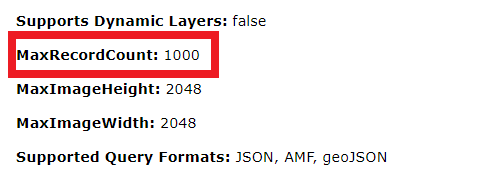

You can check the max record count on the service's REST page.

I am curious whether you are using the default value or have changed it.
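The same value can be read programmatically, because the layer's REST page returns its metadata as JSON when `f=json` is appended. Below is a minimal sketch; the helper names are hypothetical and the layer URL is a placeholder.

```python
import json
import urllib.request


def layer_info_url(layer_url):
    # Appending f=json to the layer's REST page returns its metadata as JSON
    return layer_url + "?f=json"


def read_max_record_count(layer_json):
    # maxRecordCount caps how many features one /query response may return;
    # returns None if the key is absent from the metadata
    return layer_json.get("maxRecordCount")


def fetch_max_record_count(layer_url):
    with urllib.request.urlopen(layer_info_url(layer_url)) as resp:
        return read_max_record_count(json.load(resp))
```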

~Shan


Shan,

Yes, I think I neglected to mention this in my initial post. MaxRecordCount and Maximum Sample Size were both increased to 100,000 to accommodate the existing ~25,000 records and the anticipated rate of growth. I understand the default for both parameters is 1,000.
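The arithmetic behind this setting is worth spelling out. With a cap of 100,000, all ~25,000 records fit under the limit, so one query asks the server to build every feature into a single response. With the default of 1,000, the same data would be returned across 25 smaller requests. This is consistent with, though it does not prove, the heap-space error in the log. A small sketch using the figures from this thread:

```python
import math


def requests_needed(total_records, max_record_count):
    """Number of /query calls needed to page through total_records."""
    return math.ceil(total_records / max_record_count)


def largest_response(total_records, max_record_count):
    """Most features any single response has to hold."""
    return min(total_records, max_record_count)


for cap in (1000, 100000):
    # Prints the cap, the number of requests, and the largest single response
    print(cap, requests_needed(25000, cap), largest_response(25000, cap))
```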

I tried adjusting the pooling parameters as well, but I still can't extract this dataset in its entirety, or run analysis on the entire dataset, without the process falling over.


Justin,

Have you also enabled Sync capabilities on this service? If so, can you add this service in ArcMap, create a local copy of it, and see whether that produces a file geodatabase with all the records?

~Shan


I hadn't initially enabled the 'Sync' operation because my users won't be able to 'Update' the feature service. I do want them to be able to extract the feature layer in its entirety in the event the performance of the service is preventing them from performing their analysis effectively. Does this mean the 'Sync' option must be enabled?

I ran through the process of republishing the service with 'Sync' checked on, but when I analyzed my MXD I had a high severity warning stating 'Layer is not configured for or cannot be use with the Sync capability', so I did not progress any further. I'm guessing this is because the feature class is not registered with my enterprise database. I'm reluctant to go through the process of registering the feature class just to test if enabling the 'Sync' operation allows me to do what I want to do.

Surely a user should be able to extract a feature service without the need to enable the 'Sync' operation?