
Upload against a Hosted Feature Service?


- I have a polygon feature class in a local file geodatabase.

- I want to append it, as an upsert, into an existing hosted feature service in ArcGIS Online (AGO) with the same schema.

- I know that you can upload the fGDB to the hosted feature service and then reference that upload in an append with appendUploadId.

- I might be missing it, but the ArcGIS API for Python seems to lack this capability: performing an upload against an existing hosted feature service. Is that right?

I know there are other options:

- Upload the fGDB to the portal as an item, and then use that item in an append (I'd prefer to avoid this approach).

- Convert the layer to a feature collection and pass it in directly with the edits parameter of append.

- I'm not entirely sure how to convert my feature class to a feature collection to do this, but I'm happy to try this approach, since it would let me skip uploading altogether.
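For the feature collection option, a minimal sketch of the wrapping step is below. It assumes the rows have already been read out of the feature class (for example with arcpy.da.SearchCursor and the SHAPE@JSON token) and that the edits parameter accepts the standard feature collection layout (a list of layers, each with a layerDefinition and a featureSet). Both that layout and the placeholder WKID are assumptions to check against the Append REST documentation for your service; rows_to_feature_collection is a hypothetical helper.

```python
import json


def rows_to_feature_collection(rows, geometry_type="esriGeometryPolygon", wkid=4326):
    """Wrap (attributes, geometry) pairs in feature collection JSON.

    Assumption: `rows` come from the local feature class, e.g. an
    arcpy.da.SearchCursor over [..fields.., "SHAPE@JSON"]. Each geometry may be
    an Esri JSON dict or the JSON string that SHAPE@JSON returns.
    The default wkid is a placeholder; use the feature class's spatial reference.
    """
    features = []
    for attributes, geometry in rows:
        if isinstance(geometry, str):
            geometry = json.loads(geometry)  # SHAPE@JSON yields a JSON string
        features.append({"attributes": dict(attributes), "geometry": geometry})
    # Assumed feature collection layout: layers -> layerDefinition + featureSet.
    return {
        "layers": [
            {
                "layerDefinition": {"geometryType": geometry_type},
                "featureSet": {
                    "geometryType": geometry_type,
                    "spatialReference": {"wkid": wkid},
                    "features": features,
                },
            }
        ]
    }
```

Depending on the API version, append(upload_format="featureCollection", edits=..., upsert=True) may expect the dict itself or json.dumps of it. The arcgis package can also produce Esri JSON from a feature class through a spatially enabled DataFrame (pd.DataFrame.spatial.from_featureclass, then spatial.to_featureset()), if that is available in your installation.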

Thanks for any pointers.

---

Hi Simon,

Is this what you are looking for?

arcgis.features module — arcgis 1.5.2 documentation

Or you can use edit_features(adds=None, updates=None, ...):

arcgis.features module — arcgis 1.5.2 documentation

There is an example of it here:

updating_features_in_a_feature_layer | ArcGIS for Developers
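If you go the edit_features route instead, an upsert has to be emulated on the client: features whose key already exists in the hosted layer become updates (carrying the existing object ID), and the rest become adds. A minimal sketch, assuming a unique key field (PID below is hypothetical) and Esri JSON feature dicts; split_upserts is an illustrative helper, not part of the API.

```python
def split_upserts(features, existing_oids, key_field, oid_field="OBJECTID"):
    """Split Esri JSON features into edit_features() adds and updates.

    existing_oids maps a unique key value to the object ID of the matching row
    already in the hosted layer (hypothetical input, e.g. built from a query).
    """
    adds, updates = [], []
    for feature in features:
        key = feature["attributes"][key_field]
        if key in existing_oids:
            # An update must carry the object ID of the row it replaces.
            attributes = {**feature["attributes"], oid_field: existing_oids[key]}
            updates.append({"attributes": attributes, "geometry": feature["geometry"]})
        else:
            adds.append(feature)
    return adds, updates
```

The lookup could be built from something like layer.query(out_fields="PID,OBJECTID", return_geometry=False), and the two lists passed as layer.edit_features(adds=adds, updates=updates). For a large feature class the edits would likely need to be sent in batches, and the object ID field name may differ on your layer.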

I hope this information is useful to you.

Cheers

---

Hey again Simo

No, it's not so much the appending itself. It's uploading an fGDB to the hosted feature service itself (as opposed to adding it as an item in the portal) that I can't spot an easy way of doing without using the requests library and working directly with the REST endpoint.

I will see if I can share some code. Here is a JavaScript equivalent.
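As a sketch of the REST route described here, the upload step might look like the following. It uses urllib from the standard library rather than requests so it has no dependencies, and it only builds the request. It assumes the hosted feature service exposes the Uploads resource at service_url/uploads/upload and that a successful response looks like {"success": true, "item": {"itemID": ...}}; both function names are hypothetical.

```python
import urllib.request
import uuid


def build_upload_request(service_url, filename, file_bytes, token, description=""):
    """Build, but do not send, a multipart POST to <service_url>/uploads/upload.

    Assumptions: the hosted feature service exposes the REST Uploads resource
    and `token` is a valid ArcGIS Online token.
    """
    boundary = uuid.uuid4().hex
    body = b""
    # Plain form fields: response format, auth token, optional description.
    for name, value in {"f": "json", "token": token, "description": description}.items():
        body += (
            f"--{boundary}\r\n"
            f'Content-Disposition: form-data; name="{name}"\r\n\r\n'
            f"{value}\r\n"
        ).encode()
    # The zipped file geodatabase itself, sent as the "file" part.
    body += (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="file"; filename="{filename}"\r\n'
        "Content-Type: application/zip\r\n\r\n"
    ).encode() + file_bytes + b"\r\n"
    body += f"--{boundary}--\r\n".encode()
    return urllib.request.Request(
        service_url.rstrip("/") + "/uploads/upload",
        data=body,
        headers={"Content-Type": f"multipart/form-data; boundary={boundary}"},
        method="POST",
    )


def upload_id_from_response(payload):
    """Extract the upload ID from the (assumed) JSON response of the upload call."""
    if not payload.get("success"):
        raise RuntimeError(f"Upload failed: {payload}")
    return payload["item"]["itemID"]
```

Sending it would be roughly json.loads(urllib.request.urlopen(req).read()), with the parsed result passed to upload_id_from_response; the file bytes could come from Path("parcels.gdb.zip").read_bytes() (a hypothetical file name).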

---

Hello Simon,

It seems we have to do it in two steps:

Step 1: update the File Geodatabase item

Step 2: use the File Geodatabase item to update the Feature Service via the append function, which supports upsert.

I did a quick test here, and it seems to work fine.

It would be good if we could update the feature service using a zipped FGDB directly, and I don't know why the API doesn't support that. I hope someone from the development team can comment on this.

But we can work around it by deleting the zipped FGDB item after the update. That is just a guess; it really depends on your own workflow.
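The two-step workflow could be sketched with the ArcGIS API for Python as follows. It assumes the File Geodatabase item already exists in the portal, that the zipped fGDB contains a feature class whose name is passed as source_table_name, and that your installed version's FeatureLayer.append accepts item_id, upload_format, source_table_name and upsert. The helper names are hypothetical.

```python
def fgdb_append_kwargs(fgdb_item_id, source_table_name):
    """Keyword arguments for FeatureLayer.append() with a File Geodatabase item as source."""
    return {
        "item_id": fgdb_item_id,                 # portal item holding the zipped fGDB
        "upload_format": "filegdb",
        "source_table_name": source_table_name,  # feature class name inside the fGDB
        "upsert": True,                          # update matching features, add the rest
    }


def two_step_upsert(gis, fgdb_item_id, zip_path, target_layer, source_table_name,
                    delete_after=False):
    """Step 1: replace the fGDB item's data. Step 2: append it to the hosted layer.

    `gis` is an arcgis.gis.GIS connection and `target_layer` an
    arcgis.features.FeatureLayer (e.g. gis.content.get(service_id).layers[0]).
    """
    fgdb_item = gis.content.get(fgdb_item_id)
    # Step 1: overwrite the item's data with the new zipped file geodatabase.
    if not fgdb_item.update(data=zip_path):
        raise RuntimeError(f"Could not update item {fgdb_item_id}")
    # Step 2: append (upsert) the item's contents into the hosted layer.
    result = target_layer.append(**fgdb_append_kwargs(fgdb_item.id, source_table_name))
    if delete_after:
        fgdb_item.delete()  # optional clean-up of the staging item
    return result
```

With delete_after=True, the next run would have to add a fresh item (for example with gis.content.add) instead of updating one. Depending on the service, upsert may also need a matching field or GlobalIDs to identify existing features, so the append documentation for your version is worth checking.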

Cheers

---

> ...it by delete the zipped FGDB item after the update

You can actually bypass the above approach of uploading to the portal as an item (still a very valid approach for appending) and upload your fGDB directly to the hosted feature service.

The benefit is that the uploaded data is cleaned up automatically by the back-end server, so you don't have to rely on deleting anything yourself.

Appending definitely supports this via the appendUploadId parameter on the REST endpoint.

But with the Python API:

- I can't spot anything that would let me do the upload in the first place.

- The append method has no equivalent of appendUploadId, just a portal item ID (item_id).

I am OK working around this with the requests module, but might this be worth including in a future release?
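Since the append method discussed here exposes only item_id, the second half of the requests-based workaround would call the Append REST operation directly. A minimal stdlib sketch that builds (but does not send) that call, assuming the upload ID came from a prior uploads/upload request and that layer_url points at a hosted feature layer such as .../FeatureServer/0; build_append_request is a hypothetical helper.

```python
import urllib.parse
import urllib.request


def build_append_request(layer_url, upload_id, source_table_name, token, upsert=True):
    """Build, but do not send, a POST to <layer_url>/append that consumes an upload ID.

    Parameter names follow the Append REST operation; verify them for your service.
    """
    params = {
        "appendUploadId": upload_id,           # ID returned by the uploads/upload call
        "appendUploadFormat": "filegdb",
        "sourceTableName": source_table_name,  # feature class inside the uploaded fGDB
        "upsert": "true" if upsert else "false",
        "f": "json",
        "token": token,
    }
    return urllib.request.Request(
        layer_url.rstrip("/") + "/append",
        data=urllib.parse.urlencode(params).encode(),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )
```

The response may be a job status URL to poll rather than the final edit results, and upsert may additionally need a matching field or GlobalIDs; both details are worth confirming against the Append REST documentation.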

---

I see.

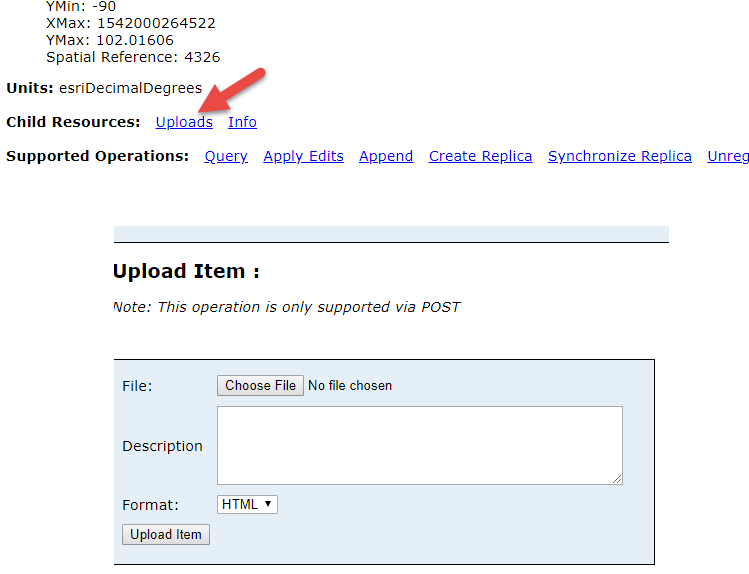

There is a method called upload for the FeatureLayerCollection class.

arcgis.features module — arcgis 1.5.2 documentation

But the append function for FeatureLayer does not have an upload_id parameter, and in the source code I can see that upload_id is explicitly set to None. So I assume upload_id was deliberately disabled for this function.