- Home

- :

- All Communities

- :

- Developers

- :

- ArcGIS API for Python

- :

- ArcGIS API for Python Questions

- :

- Re: arcpy.TableToTable_conversion wrong field name...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

arcpy.TableToTable_conversion wrong field name and data type conversion

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Hello,

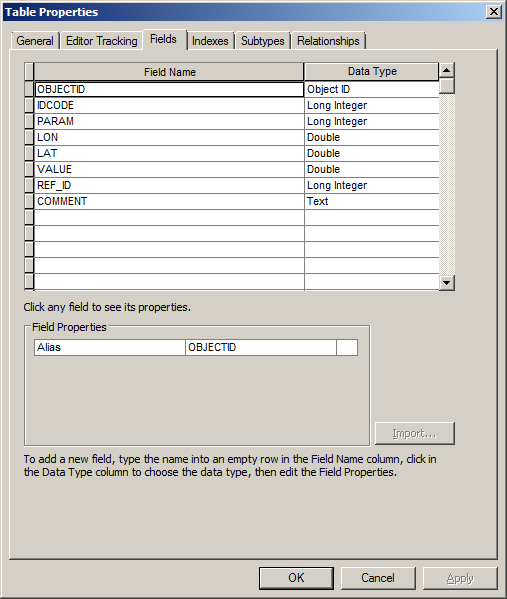

I created a script in Python to convert a txt tab-delimited table into a Geodatabase table using the ‘Table to Table’ tool. When I run the script it creates the table, but field names and data types are not following the script parameters. Fields in the output table are generally named ‘field1’ ‘field2’ etc… and the ‘Double’ data type are converted as ‘text’.

This is the script:

arcpy.TableToTable_conversion(TXT, GDB, 'Output_Table', '#', r'IDCODE "Field1" true true false 4 Long 0 0 ,First,#,TXT,Field1,-1,-1;PARAM "Field2" true true false 255 Double 5 10 ,First,#,TXT,Field2,-1,-1;LON "Field3" true true false 255 Double 5 10 ,First,#,TXT,Field3,-1,-1;LAT "Field4" true true false 255 Double 5 10 ,First,#,TXT,Field4,-1,-1;VALUE "Field5" true true false 255 Double 5 10 ,First,#,TXT,Field5,-1,-1;REF_ID "Field6" true true false 4 Long 0 0 ,First,#,TXT,Field6,-1,-1;COMMENT "Field7" true true false 255 Text 0 0 ,First,#,TXT.txt,Field7,-1,-1', '#')

...

TXT and GDB are two variables containing the paths for the .txt file and the output .gdb

The 7 fields should be named: IDCODE, PARAM, LON, LAT, VALUE, REF_ID, COMMENT (I also tried to replace every 'field1,2..etc' in the code above, but I got the same outcome)

Could someone help with this please? is there something wrong in the script os is it a bug in arcpy.TableToTable_conversion?

Thanks

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

There were a couple of things in the field mapping that I noticed.

First, the "255 Double" should probably be "8 Double" as the field size of a double is 8 bytes. In my tests, the 255 didn't seem to matter as Double may have overridden. But if the field names from the source table are not correct, this may be a problem.

Second, you are using "TXT.txt" in the comment row mapping. This should probably be the variable TXT which is referencing your source txt/csv file.

In the field mapping the first reference is to the field name in the new table, followed by (in double quotes) the alias for that field. The source table name is near the end of the mapping; in this case you are using the variable TXT. Following the source table name is the field name in the source table, in this case you appear to be using Field1...Field7 in your txt/csv table.

I would try:

arcpy.TableToTable_conversion(TXT, GDB, 'Output_Table', '#', r'IDCODE "Field1" true true false 4 Long 0 0 ,First,#,TXT,Field1,-1,-1;PARAM "Field2" true true false 8 Double 5 10 ,First,#,TXT,Field2,-1,-1;LON "Field3" true true false 8 Double 5 10 ,First,#,TXT,Field3,-1,-1;LAT "Field4" true true false 8 Double 5 10 ,First,#,TXT,Field4,-1,-1;VALUE "Field5" true true false 8 Double 5 10 ,First,#,TXT,Field5,-1,-1;REF_ID "Field6" true true false 4 Long 0 0 ,First,#,TXT,Field6,-1,-1;COMMENT "Field7" true true false 255 Text 0 0 ,First,#,TXT,Field7,-1,-1', '#')- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

I Randy,

many thanks for your support.

I tried your code, but I am still getting the same issue ![]()

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Can you share the first few rows of the table you are trying to convert? Also a few more lines of code showing how TXT and GDB are being set?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Hi,

the table looks like this

Some rows have values with 5 decimals in the 3rd and 4th columns.

Since my original code was created simply dropping the session results outcome of the stand-alond toolbox into the python shell, I fear there could be a bug when arcpy recalls the table to table tool.

I also noticed that the table to table tool sometime leaves the 3 central coloumn empty and sometimes populates them correctly even if the parameters are all identical, so I often need to repeat twice the same procedure to get a correct conversion.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Thanks for the image of the table. It was key to the answer. And, the tool acts differently outside ArcMap; it creates its own data map apparently ignoring the parameter (using version 10.2.1). *** EDIT: By inside ArcMap, I mean running the tool from ArcToolbox. By outside, I mean from a Python IDE with ArcMap closed, although running the results snippet in ArcMap's Python window showed similar issues. ***

So, regarding the table (a tab delimited text file): ArcMap sees a column with a 0 (integer) in the first row and 22.32 (double) in the second as "text". My first recommendation is to change the numbers in the first row from this:

100 0 0 0 0 200 !!

101 10 22.32 67.58 5.12 200 !!

102 20 50.55 84.12 3.65 200 !!To this (so ArcMap will see them as doubles):

100 0 0.0 0.0 0.0 200 !!

101 10 22.32 67.58 5.12 200 !!

102 20 50.55 84.12 3.65 200 !!

Since there is no header row with field names, ArcMap will default to "Field1", "Field2", etc. So my second suggestion is to add a tab delimited header row, such as:

IDCODE PARAM LON LAT VALUE REF_ID COMMENT

100 0 0.0 0.0 0.0 200 !!

101 10 22.32 67.58 5.12 200 !!

102 20 50.55 84.12 3.65 200 !!

This will name your fields with something meaningful.

When I ran the tool outside ArcMap, I first used the following code with a data file without a header:

import arcpy

TXT = r"C:/Path/To/data.txt"

GDB = r"C:/Path/To/Default.gdb"

TBL = "table2table"

arcpy.TableToTable_conversion(TXT,GDB,TBL,"#","""IDCODE "Field1" true true false 4 Long 0 0 ,First,#,TXT,Field1,-1,-1;PARAM "Field2" true true false 4 Long 0 0 ,First,#,TXT,Field2,-1,-1;LON "Field3" true true false 255 Double 0 0 ,First,#,TXT,Field3,-1,-1;LAT "Field4" true true false 255 Double 0 0 ,First,#,TXT,Field4,-1,-1;VALUE "Field5" true true false 255 Double 0 0 ,First,#,TXT,Field5,-1,-1;REF_ID "Field6" true true false 4 Long 0 0 ,First,#,TXT,Field6,-1,-1;COMMENT "Field7" true true false 255 Text 0 0 ,First,#,TXT,Field7,-1,-1""","#")Although the map contains the target field names ("IDCODE", etc.), when the table was created, "Field1"..."Field7" were used.

I then used the version of the data table with the header (field names) and removed the field mapping, and it created the expected result. NOTE: This was tested with ArcMap version 10.2.1.

import arcpy

TXT = r"C:/Path/To/data.txt"

GDB = r"C:/Path/To/Default.gdb"

TBL = "table2table"

# tested with ArcMap version 10.2.1

arcpy.TableToTable_conversion(TXT,GDB,TBL,"#","""#""","#")

Hope this helps.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Hi Randy,

that's super!!! Awesome ![]() It worked perfectly.

It worked perfectly.

Many thanks for you help

Today I solved the problem and learned something new!

Cheers ![]()

![]()

![]()

- « Previous

-

- 1

- 2

- Next »

- « Previous

-

- 1

- 2

- Next »