Each arcpy loop takes a bit longer to finish

The problem is that each loop of my arcpy script takes longer to finish than the one before. What I want to achieve with the script is to count how many polylines cross each polygon square (basically, create a density map). The polyline feature class has 215560 rows and the polygon feature class has 1878 rows. I have a standard license with 3D Analyst and Data Interoperability, so I cannot use the density map geoprocessing tool. I also tested the Intersect tool, but that seemed to perform worse.

PC specs:

CPU Xeon E5-1620

RAM 16 GB

GRAPH NVIDIA Quadro K2000

WINDOWS 8.1 Pro

PYTHON 3.6.6 64-bit

ArcGIS Pro 2.3.2

Script logic goes like this:

1. Loop through polygon fc using unique id.

2. Select polygon square based on unique id (SelectLayerByAttribute_management) and create in_memory polygon fc with selected square.

3. Select polylines that intersect with in_memory polygon square (SelectLayerByLocation_management) and create in_memory polyline fc with selected polylines.

4. Get count of how many polylines are in in_memory polyline fc.

5. Update the polygon square row (that was selected in step 2) with count value.

6. Move to next polygon square based on unique id.

import arcpy

arcpy.env.overwriteOutput=True

arcpy.env.workspace=r"C:\Users\user\TOO_FAILID\ArcMap\density_map_vaina.gdb"

arcpy.env.outputCoordinateSystem=arcpy.SpatialReference("Estonia 1997 Estonia National Grid")

arcpy.env.parallelProcessingFactor="100%"

arcpy.SetLogHistory(False)

grid_oid_max=int(arcpy.GetCount_management("density_map_grid_vaina").getOutput(0))

for grid_oid in range(1,grid_oid_max+1,1):

arcpy.CopyFeatures_management(arcpy.SelectLayerByAttribute_management("density_map_grid_vaina","NEW_SELECTION","New_oid="+str(grid_oid)),"temporary_grid_vaina")

arcpy.CopyFeatures_management(arcpy.SelectLayerByLocation_management(ais_2018_line180","INTERSECT","temporary_grid_vaina","","NEW_SELECTION",""),"temporary_vaina_ais_line_fc")

count_all=int(arcpy.GetCount_management("temporary_vaina_ais_line_fc").getOutput(0))

with arcpy.da.UpdateCursor("density_map_grid_vaina",["New_oid","All_count"]) as cursor:

for row in cursor:

if row[0]==grid_oid:

row[1]=count_all

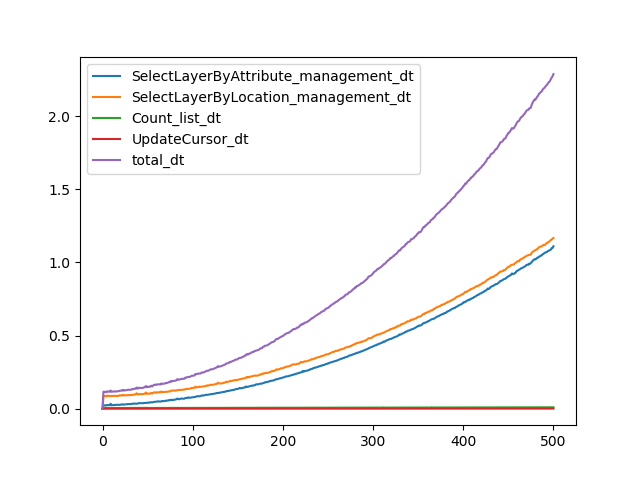

cursor.updateRow(row)Next plot shows the total time taken (y-axis in minutes) by each loop and time that each processes took in that loop. Loop was set to end at loop or New_oid 500 (x-axis). Time taken for each loop grows exponentially.
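Per-loop timings of the kind plotted here can be collected with a small harness like the one below. This is only a sketch: `time_steps` and `time_loop` are hypothetical helper names, and the two sleeping steps are stand-ins for the real arcpy calls, which are not reproduced here.

```python
import time


def time_steps(steps):
    """Run each (name, callable) step once and return {name: seconds}."""
    timings = {}
    for name, func in steps:
        start = time.perf_counter()
        func()
        timings[name] = time.perf_counter() - start
    return timings


def time_loop(iterations, make_steps):
    """Time every step of every iteration.

    make_steps(i) must return the list of (name, callable) steps for
    iteration i. Returns a list of (i, timings) pairs, where timings
    also carries a "total" entry summing the steps.
    """
    results = []
    for i in iterations:
        timings = time_steps(make_steps(i))
        timings["total"] = sum(timings.values())
        results.append((i, timings))
    return results


if __name__ == "__main__":
    # Stand-in steps; in the real script these would wrap the arcpy calls.
    def make_steps(grid_oid):
        return [
            ("select_grid", lambda: time.sleep(0.001)),
            ("select_lines", lambda: time.sleep(0.002)),
        ]

    for grid_oid, timings in time_loop(range(1, 4), make_steps):
        print(grid_oid, {name: round(sec, 4) for name, sec in timings.items()})
```

Recording each step separately makes it easier to see which call is responsible when the total per loop keeps growing.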

Does anyone have an idea of what I'm doing wrong, what might be causing this, or whether there is another way to achieve my goal? Could it be a Python issue? I've read that using arcpy.SetLogHistory(False) improves performance, but that had little to no effect. I've also read that ArcGIS has to keep track of things, so the increased time with every loop is inevitable. (https://community.esri.com/thread/206440-loop-processing-gradually-slow-down-with-arcpy-script)

Accepted Solutions

It appears you are passing a feature class (not a layer) to your Select Layer tools. This means a layer is created behind the scenes on every loop, which is most likely filling up memory and slowing things down.

I think you will have better luck if you create the layers at line 8, before the loop starts, and do your selections on those layers. Passing layers (instead of datasets) to tools is generally faster, as it speeds up the data validation required before the tool can run.

I also do not see the purpose of the time-consuming creation of temporary datasets. I believe layers with selections on them would do just as well, without having to create additional temporary datasets.

Hope this helps.
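The suggestion above could be sketched roughly as follows. This is a minimal sketch, not a tested drop-in script: the function names `oid_where_clause` and `count_lines_per_cell` and the layer names `grid_lyr` and `line_lyr` are made up for illustration, and it assumes `arcpy.env.workspace` is already set as in the question. It also collects all counts first and writes them in a single cursor pass, which is an extra change beyond what the reply proposes.

```python
def oid_where_clause(field, oid):
    """Build the attribute query for one grid square, e.g. 'New_oid = 7'."""
    return "{} = {}".format(field, int(oid))


def count_lines_per_cell(grid_fc, line_fc, oid_field="New_oid", count_field="All_count"):
    """Count the lines intersecting each grid square using two reusable layers."""
    import arcpy  # imported here so oid_where_clause works without an ArcGIS install

    # Create both layers once, before the loop. The second argument is just
    # a layer name (hypothetical names here), not a dataset path.
    grid_lyr = "grid_lyr"
    line_lyr = "line_lyr"
    arcpy.MakeFeatureLayer_management(grid_fc, grid_lyr)
    arcpy.MakeFeatureLayer_management(line_fc, line_lyr)

    # Read the ids that actually exist instead of assuming 1..N.
    with arcpy.da.SearchCursor(grid_fc, [oid_field]) as cursor:
        oids = [row[0] for row in cursor]

    counts = {}
    for oid in oids:
        # Select one grid square, then the lines that intersect it.
        arcpy.SelectLayerByAttribute_management(
            grid_lyr, "NEW_SELECTION", oid_where_clause(oid_field, oid))
        arcpy.SelectLayerByLocation_management(
            line_lyr, "INTERSECT", grid_lyr, "", "NEW_SELECTION")
        # GetCount on a layer counts the selected features, so no
        # temporary dataset is needed.
        counts[oid] = int(arcpy.GetCount_management(line_lyr).getOutput(0))

    # Write all counts back in a single pass over the grid.
    with arcpy.da.UpdateCursor(grid_fc, [oid_field, count_field]) as cursor:
        for row in cursor:
            row[1] = counts.get(row[0], 0)
            cursor.updateRow(row)

    # Remove the layers; the source feature classes are not affected.
    arcpy.Delete_management(grid_lyr)
    arcpy.Delete_management(line_lyr)
    return counts
```

With the workspace from the question, a call might look like `count_lines_per_cell("density_map_grid_vaina", "ais_2018_line180")`.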

Thank you for your ideas. Creating feature layers from the feature classes and then running the tools on those feature layers solved the problem, and it's always useful to use the in_memory workspace when possible. The final script looked like this:

import arcpy

arcpy.env.overwriteOutput=True

arcpy.env.workspace=r"C:\Users\user\TOO_FAILID\ArcMap\density_map_vaina.gdb"

arcpy.env.outputCoordinateSystem=arcpy.SpatialReference("Estonia 1997 Estonia National Grid")

arcpy.env.parallelProcessingFactor="100%"

arcpy.SetLogHistory(False)

arcpy.MakeFeatureLayer_management("density_map_grid_vaina",

r"in_memory\density_map_grid_vaina_layer")

arcpy.MakeFeatureLayer_management(arcgisfolder+projectfolder+databasename+"ais_2018_line180",

r"in_memory\ais_data_layer")

grid_oid_max=int(arcpy.GetCount_management("density_map_grid_vaina").getOutput(0))

for grid_oid in range(1,grid_oid_max+1,1):

arcpy.MakeFeatureLayer_management(arcpy.SelectLayerByLocation_management(r"in_memory\ais_data_layer",

"INTERSECT",

arcpy.SelectLayerByAttribute_management(r"in_memory\density_map_grid_vaina_layer",

"NEW_SELECTION",

"New_oid="+str(grid_oid)),

"",

"NEW_SELECTION",

""),

r"in_memory\temporary_vaina_ais_line_fc")

count_all=int(arcpy.GetCount_management(r"in_memory\temporary_vaina_ais_line_fc").getOutput(0))

with arcpy.da.UpdateCursor("density_map_grid_vaina",["New_oid","All_count"]) as cursor:

for row in cursor:

if row[0]==grid_oid:

row[1]=count_all

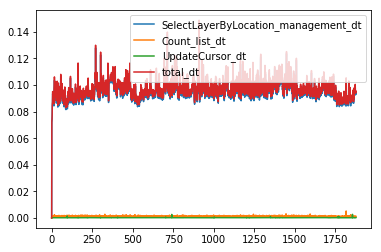

cursor.updateRow(row)And time taken by each loop was consistent throughout the iteration:

I’m really glad this helped you out!

However, I do not understand why you are passing a path as the output of the Make Feature Layer tool. The second parameter is a string used to name the layer, not a dataset in the in_memory workspace or anywhere else. For more details, read the tool help for MakeFeatureLayer. The help talks about “layers in memory”, but it is important to realize that layers aren’t data: a layer is an object in memory that points to a source dataset and carries some wrapper properties of its own (visible/hidden fields, aliases, selections, etc.).

I often use the following code pattern to work with layers in Python. The result object lyrFoo gets ‘str()’d when it is passed as a parameter to a tool, so I could just as well use “lyrFoo” as lyrFoo, but I think this way of doing it is very easy to read (and debug):

    import arcpy

    # create a layer "lyrFoo"
    lyrFoo = arcpy.MakeFeatureLayer_management("my_points", "lyrFoo")
    # select on the layer (NEW_SELECTION is the default selection type)
    arcpy.SelectLayerByAttribute_management(lyrFoo, "NEW_SELECTION", "MOJO > 50000")
    # create a new feature class in the current workspace
    arcpy.CopyFeatures_management(lyrFoo, "foo_copy")
    # delete the layer from memory; the dataset it points to is not affected!
    arcpy.Delete_management(lyrFoo)