Collector for ArcGIS Accuracy Discrepancy?

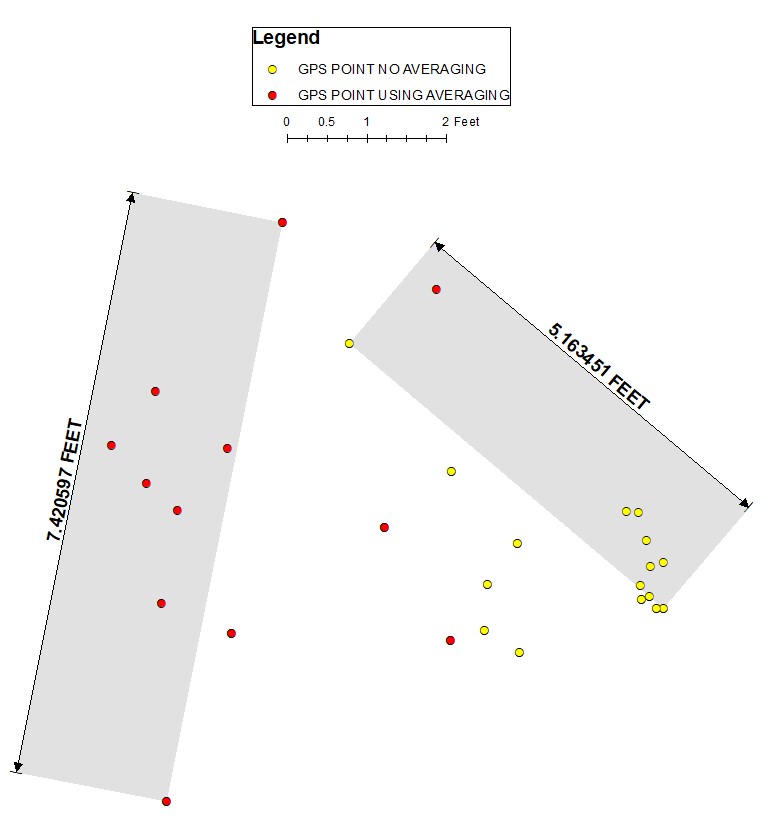

Can someone please explain the Collector for ArcGIS accuracy discrepancies below? I collected the points shown below using the Collector app and a Trimble R1 external GPS receiver. About half of the points were logged with GPS averaging and the other half without it. The R1 should provide sub-meter location accuracy using SBAS real-time corrections. I made sure the GPS source in Collector was set to the Trimble unit and that I was using the correct location profile. While I was logging the points, the GPS accuracy shown in the app read 1 to 2 feet and never rose above 3 feet. For the points logged without GPS averaging, the recorded horizontal accuracy never reached 0.664 meters. For the points logged with GPS averaging, the average horizontal accuracy never reached 0.600 meters. I have attached a table of GPS metadata that shows this.
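
To compare the accuracy values Collector reports with how tightly the points actually cluster, one option is to measure each cluster's spread directly from the logged coordinates. The sketch below is a minimal Python example, assuming the points are exported as WGS84 latitude/longitude in decimal degrees; `to_local_meters` and `cluster_spread` are hypothetical helpers, and the flat-Earth (equirectangular) projection is an approximation that is only reasonable over a few meters.

```python
import math

# Approximate meters per degree of latitude (spherical-Earth assumption).
METERS_PER_DEG_LAT = 111_320.0


def to_local_meters(points):
    """Project (lat, lon) pairs in decimal degrees to (east, north) meters
    relative to their mean position, using an equirectangular approximation
    that is adequate over a few meters."""
    lat0 = sum(p[0] for p in points) / len(points)
    lon0 = sum(p[1] for p in points) / len(points)
    m_per_deg_lon = METERS_PER_DEG_LAT * math.cos(math.radians(lat0))
    return [((lon - lon0) * m_per_deg_lon, (lat - lat0) * METERS_PER_DEG_LAT)
            for lat, lon in points]


def cluster_spread(points):
    """Return (rms, max) horizontal distance in meters from the cluster centroid."""
    local = to_local_meters(points)  # centroid is at (0, 0) by construction
    dists = [math.hypot(e, n) for e, n in local]
    rms = math.sqrt(sum(d * d for d in dists) / len(dists))
    return rms, max(dists)
```

Running `cluster_spread` separately on the averaged and non-averaged points gives one number per cluster that can be set beside the reported horizontal accuracy. Keep in mind that the reported value is the receiver's own error estimate, not a measurement of scatter around the true position.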

I have two main questions:

1) Shouldn’t logging with GPS averaging produce a tighter cluster of points? My averaged test points form a cluster that is more spread out than the cluster of points logged without GPS averaging. Why is this?

2) Since I am using an external GPS receiver that should log points with sub-meter (<1 meter) accuracy, why is the distance between the two farthest-apart points in each cluster greater than 1 meter (3.28 feet)? Shouldn’t all the points in each cluster be within 1 meter (3.28 feet) of each other?
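
One way to check question 2 against the data is to compute the largest pairwise distance within each cluster. By the triangle inequality, if every point lies within 1 m of the true position, two points can still be up to 2 m apart, so a sub-meter specification by itself does not guarantee that all points fall within 1 m of each other. Accuracy specifications are also usually statistical (an RMS or percentile figure), so they do not promise that every single fix falls inside the stated radius. A minimal Python sketch, assuming the points are already in local (east, north) coordinates in meters; `max_pairwise_distance` is a hypothetical helper:

```python
import math
from itertools import combinations


def max_pairwise_distance(local_points):
    """Largest distance (meters) between any two (east, north) points."""
    return max(math.dist(a, b) for a, b in combinations(local_points, 2))


# Hypothetical example: true position at (0, 0). Both of the first two points
# are within 1 m of the truth (0.9 m each), yet they are 1.8 m apart.
example = [(0.9, 0.0), (-0.9, 0.0), (0.0, 0.5)]
print(max_pairwise_distance(example))
```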

It is important to point out that I set the R1 on a rigid surface and did not move it at all while logging the points; the receiver stayed in exactly the same place throughout. Also, when using averaging, I set the number of averaging points to 30.
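
One possible explanation for question 1 is that averaging removes only the rapidly varying, independent part of the error. Errors that change slowly (for example residual atmospheric or multipath error) are shared by all 30 fixes in an averaging session, especially if those fixes are collected over a short span such as about 30 seconds at one fix per second, which is an assumption about the receiver rate. Each averaged point then keeps the bias present during its own session, so averaged points taken at different times can scatter according to how that bias drifted. The sketch below uses a hypothetical error model (constant bias plus Gaussian noise, true position at the origin) to illustrate this; `simulate_session` and `average_fixes` are illustrative helpers, not Collector functions.

```python
import math
import random


def average_fixes(fixes):
    """Average a list of (east, north) fixes in meters."""
    n = len(fixes)
    return (sum(f[0] for f in fixes) / n, sum(f[1] for f in fixes) / n)


def simulate_session(bias, noise_sigma, n_fixes, rng):
    """Hypothetical error model: every fix shares the same bias (slowly varying
    error) plus independent Gaussian noise. True position is (0, 0)."""
    return [(bias[0] + rng.gauss(0.0, noise_sigma),
             bias[1] + rng.gauss(0.0, noise_sigma)) for _ in range(n_fixes)]


rng = random.Random(42)
fixes = simulate_session(bias=(0.5, -0.3), noise_sigma=0.4, n_fixes=30, rng=rng)
avg = average_fixes(fixes)
# The noise in the average shrinks by roughly sqrt(30), but the shared bias
# (about 0.58 m here) remains in the averaged position.
print(f"averaged point error: {math.hypot(*avg):.2f} m")
```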

Please help me wrap my brain around this, because it’s making me question the accuracy of our data and the validity of the claims made by both Collector and the R1 as a high-accuracy GPS data collection alternative.

Collector Version: 18.1.0

iOS: 12.1.3

Thanks,