Script works on one PC but not 2nd PC

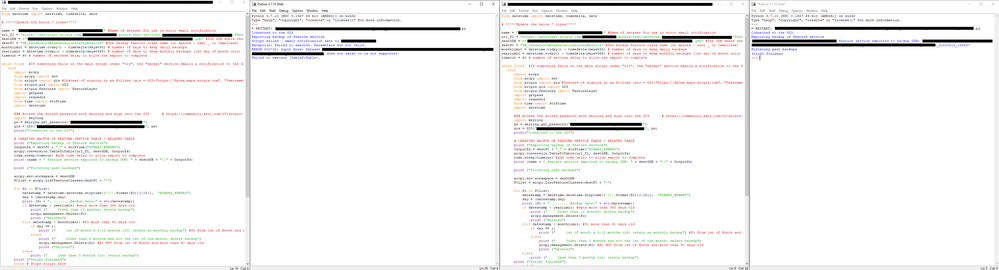

Hi brains trust. I need a bit of support here. I have a Python script that accesses an ArcGIS Online feature service and downloads the data, saving it as a feature class in a local (network) GDB. I know the script works because I have it running on one PC, but on another it fails, despite running in the same software (IDLE for ArcGIS Pro). The error says that the parameters are not valid and that the input dataset does not exist or is not supported, even though the same ArcGIS Online login credentials are being used. I'm at a loss as to why it won't work. The same approach works fine for all my other scripts, which use point/line/polygon feature services. The only difference is that this one is a related table for a line feature service. The image below shows the same script and the results (left screen is the failure on the remote PC, right screen is on my PC).

GIS Officer

Forest Products Commission WA

Using the keyring module seems to work fine on my end.

I typically leverage the Python API stored connection Profiles:

To create:

- gis = arcgis.GIS("<site>", "<username>", "<password>", profile="<profile name>")

To access thereafter, simply reference the profile:

- gis = arcgis.GIS( profile="<profile name>")

Once you've made your connection, try checking the user properties:

- gis.properties.user

This should display the details on the user you connected with. If there is no 'user' attribute, you are connected as anonymous.
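The connection check described above can be wrapped in a small helper. This is only a sketch: instead of `gis.properties.user` it relies on `gis.users.me`, which the ArcGIS API for Python documents as returning `None` for an anonymous connection. The function name `connected_username` and the profile name in the usage comment are made up for illustration.

```python
def connected_username(gis):
    """Return the signed-in username, or None for an anonymous connection.

    `gis` is expected to be an arcgis.gis.GIS object (assumption: its
    `users.me` property is None when no user is logged in).
    """
    me = gis.users.me
    return None if me is None else me.username


# Example usage (requires the arcgis package and an existing stored profile;
# "my_agol_profile" is a placeholder name):
#
#   from arcgis.gis import GIS
#   gis = GIS(profile="my_agol_profile")
#   user = connected_username(gis)
#   print("Connected as", user if user else "anonymous")
```

Running this on both PCs would show whether the failing machine is actually signed in or is silently falling back to an anonymous connection.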

Hi. Wouldn't doing this require storing the password in clear text in the script? We moved away from that to remove the vulnerability from our scripts, and now store our credentials in a password-protected environment instead.

GIS Officer

Forest Products Commission WA
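On the clear-text concern: the ArcGIS API for Python documentation describes stored profiles as keeping the password in the operating system's credential manager (through the keyring module), so only the one-time setup needs the password, and it does not have to be written into a script. A minimal sketch of such a setup step follows, prompting for the password with `getpass`. The helper `create_profile` and its parameters are illustrative, and the `GIS` class is passed in as an argument so the helper itself does not import arcgis.

```python
import getpass


def create_profile(gis_cls, site, username, profile_name, prompt=getpass.getpass):
    """Create a stored connection profile without writing the password down.

    gis_cls is expected to be arcgis.gis.GIS; the password is typed at the
    prompt and handed straight to the constructor.
    """
    password = prompt("Password for {}: ".format(username))
    return gis_cls(site, username, password, profile=profile_name)


# One-time setup, run interactively (placeholders in angle brackets):
#
#   from arcgis.gis import GIS
#   create_profile(GIS, "<site>", "<username>", "<profile name>")
#
# Scheduled scripts then connect with:  gis = GIS(profile="<profile name>")
```

Note that a profile is stored per Windows user and per machine, so it would need to be created on each PC that runs the script.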

Are you able to access r"\\a\folder\on\our\server\Data.gdb" from the failing computer while logged in as the same Windows user that runs the toolbox code? I expect access to the shared drive is controlled by Windows, so it has nothing to do with the ArcGIS credentials. I'd also try to recreate the error by running the TableToTable tool interactively, to rule out something in your toolbox code causing the problem.
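The share check suggested above can be scripted with the standard library alone, so it runs in the same IDLE session as the failing script. This is a rough sketch: `check_gdb_access` is a hypothetical helper, and `os.access` is only an approximate indicator on Windows network shares because it does not fully evaluate share or NTFS permissions.

```python
import os


def check_gdb_access(gdb_path):
    """Report whether the current Windows user can see and write to a file GDB folder."""
    exists = os.path.isdir(gdb_path)  # a file geodatabase is a folder on disk
    return {
        "exists": exists,
        "readable": exists and os.access(gdb_path, os.R_OK),
        "writable": exists and os.access(gdb_path, os.W_OK),
    }


# Example usage on the failing PC, run as the same Windows user as the script:
#
#   print(check_gdb_access(r"\\a\folder\on\our\server\Data.gdb"))
```

If `exists` comes back `False` on one PC only, the problem is with the Windows-level access to the share and not with the ArcGIS login.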

I've just tried running the tool directly through Pro on both machines...and it works fine - but with the below warning:

This has me even more confused now as both machines are running on a Basic License, yet the data was exported as expected. I suspect this might have something to do with the python error, but I still don't understand why one will complain when the other doesn't and both are supposedly accessing the same Basic license.

GIS Officer

Forest Products Commission WA

I've modified the tool to create a new table based on the feature service and then append the data instead. It loses the GlobalID info which is a bit annoying but not overly important (couldn't figure out how to preserve it) but overall, it works for what I need.

```python
import time
from time import strftime
import arcpy

# Create a backup of the feature service table / related table.
# destFC, destGDB, url_fl, timeout and name are set earlier in the script.
print("Exporting backup of feature service")
Outputfs = destFC + "_" + strftime("%Y%m%d_%H%M%S")
arcpy.management.CreateTable(destGDB, Outputfs, url_fl)  # service table is the schema template
arcpy.management.Append(url_fl, destGDB + "\\" + Outputfs, "NO_TEST")
time.sleep(timeout)  # add time delay to allow export to complete
print(name + " feature service exported to backup GDB: " + destGDB + "\\" + Outputfs)
```

GIS Officer

Forest Products Commission WA
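The timestamped naming used in the workaround can be pulled into a small helper so the table name and the full output path are built in one place. This is a sketch, not part of the original script: `backup_table_path` is a hypothetical name, and `ntpath.join` is used so the result always has Windows separators, matching the UNC paths discussed in this thread.

```python
import ntpath
from datetime import datetime


def backup_table_path(dest_gdb, dest_fc, when=None):
    """Return (table_name, full_path) for a timestamped backup table."""
    when = when or datetime.now()
    table_name = "{}_{}".format(dest_fc, when.strftime("%Y%m%d_%H%M%S"))
    # ntpath.join always uses Windows separators, whatever OS this runs on
    return table_name, ntpath.join(dest_gdb, table_name)


# Example usage with the variables from the script above:
#
#   Outputfs, out_path = backup_table_path(destGDB, destFC)
#   arcpy.management.CreateTable(destGDB, Outputfs, url_fl)
#   arcpy.management.Append(url_fl, out_path, "NO_TEST")
```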
