Arcpy -- Edit Spatial Reference Object Properties

Hello All,

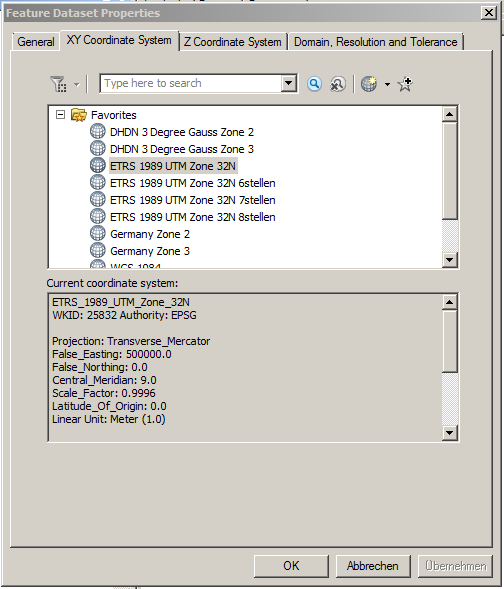

I'm trying to develop a Python script to edit the name property of several spatial reference objects found while iterating through a folder and its subdirectories. The projection of the data is identical to ETRS_1989_UTM_Zone_32N, but is named something else -- I'm just trying to reset the name to ETRS_1989_UTM_Zone_32N. According to the help, this property is both readable and writable in ArcGIS 10.2, which is what I'm running. I'm just not sure how to go about writing in the new projection name via the script... anybody have any ideas? Any feedback is greatly appreciated!

Here's my code so far:

    import os
    import arcpy

    workspace = r"C:\Scripts\Reproject"
    newProjection = "ETRS_1989_UTM_Zone_32N"

    def recursiveProjections(workspace):
        """
        Function iterates through a workspace and its subfolders using arcpy.da.Walk
        and returns a list of spatial reference objects of all geographic datasets
        (including those inside geodatabases). Parameter: target workspace
        """
        projections = []
        for dirpath, dirnames, filenames in arcpy.da.Walk(workspace):
            for filename in filenames:
                desc = arcpy.Describe(os.path.join(dirpath, filename))
                srObject = desc.spatialReference
                projections.append(srObject)
        return projections

    def editProjection(workspace):
        projections = recursiveProjections(workspace)
        for projection in projections:
            print projection.name

    editProjection(workspace)

Where is newProjection defined? It isn't passed to your function.

Oops, it didn't get pasted in -- it and the workspace are defined as global variables after the import statements:

    workspace = r"C:\Scripts\Reproject"
    newProjection = arcpy.SpatialReference(25832)  # wkid for 'ETRS_1989_UTM_Zone_32N'

Perhaps of note is that my directory contains a geodatabase with a feature dataset composed of feature classes. According to the help for the Define Projection tool, once a feature dataset contains feature classes, its projection cannot be changed. Even after removing the geodatabase from my test directory, however, the script still generates the error.

Sorry for the long delay, I've been away at the Esri Africa UC...

Yes, if you have a pile of FCs inside an FD, the SR is defined at the FD level. You can only change it by copying the FC out to another FD or by using the Project tool (and putting the output somewhere else).

Not sure what the error is, but instead of redefining each FC, did you try to redefine just the SR of the FD?
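
The idea of redefining at the feature dataset level can be sketched as a small path-collapsing step that runs before any call to Define Projection. This is only an illustration: it assumes every input path points into a file geodatabase whose folder name ends in `.gdb`, so a feature class whose parent is not the `.gdb` itself is taken to sit in a feature dataset. The function name `define_projection_targets` and the sample paths are hypothetical.

```python
import ntpath  # Windows path rules, regardless of the platform running the sketch


def define_projection_targets(feature_class_paths):
    """Return the paths to redefine: one per feature dataset, plus every
    feature class that sits directly in the geodatabase root.

    Assumption: all paths are inside a file geodatabase (*.gdb).
    """
    targets = set()
    for fc_path in feature_class_paths:
        parent = ntpath.dirname(fc_path)
        if parent.lower().endswith(".gdb"):
            # Stand-alone feature class: redefine it directly.
            targets.add(fc_path)
        else:
            # Parent is assumed to be a feature dataset: redefine it once.
            targets.add(parent)
    return sorted(targets)


# Hypothetical sample paths, for illustration only.
sample = [
    r"C:\data\My.gdb\Roads",
    r"C:\data\My.gdb\Transport\Rail",
    r"C:\data\My.gdb\Transport\Stops",
]
targets = define_projection_targets(sample)
```

Each entry in `targets` could then be handed to `arcpy.DefineProjection_management` together with the spatial reference object, so a feature dataset is processed once rather than once per feature class.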

Sorry for the delay, I was just now able to try Neil's solution.

According to the Help: All feature classes in a geodatabase feature dataset will be in the same coordinate system. The coordinate system for a geodatabase dataset should be determined when it is created. Once it contains feature classes, its coordinate system cannot be changed.

This is not the case, however -- a feature dataset can indeed be redefined if it already contains feature classes (which will also be redefined along with the FD). The redefine tool simply generates a warning, but it still works. ESRI needs to update their help.
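
To see the warning described here from a script rather than from the tool dialog, something like the following might help. It is a sketch, not a tested recipe: `define_and_report` is a hypothetical helper, and the `gp` argument is expected to be the `arcpy` module (it is passed in only so the function can be tried without ArcGIS installed). `DefineProjection_management` and `GetMessages` are the arcpy calls already used or available in this context; severity `1` asks for warning messages only.

```python
def define_and_report(dataset_path, spatial_ref, gp):
    """Redefine one dataset and return the warnings the tool produced.

    gp: the arcpy module (an assumption of this sketch; any object exposing
        DefineProjection_management and GetMessages would do).
    """
    gp.DefineProjection_management(dataset_path, spatial_ref)
    # Severity 1 limits the returned text to warnings from the last tool run.
    return gp.GetMessages(1)


# Possible usage inside ArcGIS (the feature dataset path is hypothetical):
#   import arcpy
#   sr = arcpy.SpatialReference(25832)
#   warnings = define_and_report(r"C:\data\My.gdb\Transport", sr, arcpy)
#   print(warnings)
```

If the returned text is non-empty while the dataset has still been redefined, that would match the behaviour reported above.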

So I have a workable solution to this based on everyone's feedback (see code below). The only nagging issue is that when I run recursiveDatasets in main() after redefining all feature classes and datasets and then print spatialReference.name, it still prints the old spatial reference. I checked the SR in ArcCatalog for each FC and FD, and they have indeed been redefined.

So why is it still printing the old SR? Is that output being drawn from a different parameter than that being displayed in the XY Coordinate System tab? I have also tried refreshing the geodatabase following the redefinition, but that makes no difference.

Code:

    # -*- coding: utf-8 -*-
    import os
    from os import path
    import arcpy
    from arcpy import env
    from sets import Set
    import time, datetime
    from datetime import date

    arcpy.env.overwriteOutput = True

    def recursiveDatasets(workspace):
        """
        Function iterates through a file geodatabase using arcpy.da.Walk and returns
        a tuple containing three lists: 1) uniqueFD, containing paths to each feature
        dataset in the geodatabase; 2) miscDatasets, containing all feature classes in
        a geodatabase not inside a feature dataset; 3) descriptions, containing
        description objects for every feature class and feature dataset in the target
        geodatabase. Parameter: workspace
        """
        miscDatasets = []
        featureDatasets = []
        descriptions = []
        for dirpath, dirnames, filenames in arcpy.da.Walk(workspace):
            for filename in filenames:
                desc = arcpy.Describe(os.path.join(dirpath, filename))
                if hasattr(desc, 'path'):
                    descPth = arcpy.Describe(desc.path)
                    descriptions.append(desc)
                    if hasattr(descPth, 'dataType'):
                        if descPth.dataType == 'FeatureDataset':
                            featureDatasets.append(dirpath)
                        else:
                            miscDatasets.append(os.path.join(dirpath, filename))
        uniqueFD = list(set(featureDatasets))
        return uniqueFD, miscDatasets, descriptions

    def defineProjection(workspace, newProjection):
        """
        Function redefines the existing projection for feature datasets and feature
        classes not residing in feature datasets in a geodatabase.
        Parameters: workspace, new spatial reference
        """
        uniqueFD, miscDatasets, descriptions = recursiveDatasets(workspace)
        for FC in miscDatasets:
            desc = arcpy.Describe(FC)
            if desc.datasetType == 'FeatureClass':
                arcpy.DefineProjection_management(FC, newProjection)
        for FD in uniqueFD:
            arcpy.DefineProjection_management(FD, newProjection)

    def main():
        workspace = arcpy.env.workspace = os.path.join(r'C:\Scripts\Redefine Projection\MyGeodatabase.gdb')
        newProjection = arcpy.SpatialReference(25832)  # wkid for 'ETRS_1989_UTM_Zone_32N'
        # newProjection is local to main(), so it is passed in explicitly
        defineProjection(workspace, newProjection)
        uniqueFD, miscDatasets, newDescriptions = recursiveDatasets(workspace)
        FCs = []
        newSRs = []
        for dataset in newDescriptions:
            if dataset.datasetType == 'FeatureClass':
                FCs.append(os.path.join(dataset.Path, dataset.Name))
                newSRs.append(dataset.spatialReference.name)
        print newSRs

    if __name__ == '__main__':
        main()

I have the same problem. The dataset and feature classes seem to be changed, but desc.spatialReference.name still returns the name of the old spatial reference.

After copying the dataset into a new geodatabase, desc.spatialReference.name is correct and consistent.

Is there a solution or workaround (without copying)?

Hi Marco,

Sorry for the late answer, here's what I ended up with that works, hope it's helpful -- does a bit of logging for you too:

    # -*- coding: utf-8 -*-
    import os
    from os import path
    import arcpy
    from arcpy import env
    from sets import Set
    import time, datetime
    from datetime import date

    arcpy.env.overwriteOutput = True
    newProjection = arcpy.SpatialReference(25832)  # wkid for 'ETRS_1989_UTM_Zone_32N'

    # ################### #
    #      FUNCTIONS      #
    # ################### #

    def recursiveDatasets(workspace):
        """
        Function iterates through a workspace and its subfolders using arcpy.da.Walk
        and returns a list of unique paths: one per feature dataset, plus one per
        feature class not inside a feature dataset. Parameter: target workspace
        """
        paths = []
        uniquePaths = []
        for dirpath, dirnames, filenames in arcpy.da.Walk(workspace):
            for filename in filenames:
                desc = arcpy.Describe(os.path.join(dirpath, filename))
                if hasattr(desc, 'path'):
                    descPth = arcpy.Describe(desc.path)
                    if hasattr(descPth, 'dataType'):
                        # return list of FD paths and FC paths not in FD's
                        if descPth.dataType == 'FeatureDataset':
                            paths.append(dirpath)
                        elif desc.datasetType == 'FeatureClass':
                            paths.append(os.path.join(dirpath, filename))
        # make list without duplicates
        uniquePaths = list(set(paths))
        return uniquePaths

    def defineProjection(workspace, toRedefine):
        """
        Function redefines datasets in a given projection in a geodatabase.
        Parameters: workspace, projection to consider
        """
        paths = recursiveDatasets(workspace)
        for path in paths:
            # describe each feature dataset or stand-alone feature class
            desc = arcpy.Describe(path)
            if desc.spatialReference.Name == toRedefine:
                # pass the full path so the call does not depend on arcpy.env.workspace
                arcpy.management.DefineProjection(path, newProjection)
                print desc.Name + ' was redefined.'
            else:
                print desc.Name + ' was not redefined.'

    def Logging(myLog, writeFormat, runDate, headers, list1, list2, colWdth):
        """
        Function generates a log file composed of two columns (list1 and list2) with
        defined headers and date of execution. Parameters: full log filepath, write
        format, date of execution, headers, list1, list2, column width
        """
        with open(myLog, writeFormat) as myLog:
            myLog.write(runDate)
            myLog.write(headers)
            for item in zip(list1, list2):
                myLog.write(colWdth.format(*item))

    # ################# #
    #       MAIN        #
    # ################# #

    def main():
        print 'Processing geodatabase...'
        workspace = arcpy.env.workspace = r'C:\Scripts\Redefine_Projection\Thomas\2016-01-25_GeoDB03_gd_Export.gdb'
        toRedefine = 'ETRS_1989_UTM_Zone_32N_6stellen'
        defineProjection(workspace, toRedefine)
        datasets = recursiveDatasets(workspace)

        print 'Logging results...'
        # list of projections
        projections = []
        for dataset in datasets:
            desc = arcpy.Describe(dataset)
            projections.append(desc.spatialReference.Name)

        # logging
        myLog = r"C:\Scripts\Redefine_Projection\Log\RedefineProjection.log"
        today = date.today()
        runDate = 'Date of execution: ' + today.strftime("%Y.%m.%d") + '\n'
        # first column header is 'Dataset path', left-justified in a 125-character
        # field, followed by the 'Spatial Reference' header
        headers = '{0: <125}'.format('Dataset path') + 'Spatial Reference' + '\n'
        colWdth = "{:<125}" * 2 + "\n"  # 2 columns with 125 character width
        Logging(myLog, 'a', runDate, headers, datasets, projections, colWdth)

    if __name__ == '__main__':
        main()
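
The two-column log layout used by the script can be tried on its own, without arcpy. The sketch below reuses the same format string as `colWdth`; the helper name `format_log_rows` and the sample paths are hypothetical and only illustrate how each row is built.

```python
# Same layout string as colWdth in the script: two left-justified fields of
# 125 characters each, followed by a newline.
COL_FORMAT = "{:<125}" * 2 + "\n"


def format_log_rows(paths, sr_names, col_format=COL_FORMAT):
    """Pair each dataset path with its spatial reference name, one row each.

    zip() stops at the shorter list, so unmatched entries are dropped.
    """
    return [col_format.format(p, s) for p, s in zip(paths, sr_names)]


# Hypothetical sample values, for illustration only.
rows = format_log_rows(
    [r"C:\data\My.gdb\Roads", r"C:\data\My.gdb\Transport"],
    ["ETRS_1989_UTM_Zone_32N", "ETRS_1989_UTM_Zone_32N"],
)
```

Each row is 251 characters long (two 125-character fields plus the newline), provided neither value exceeds 125 characters; longer paths are not truncated and would push the second column to the right.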
