Does the “orthophoto” term refer to both satellite images and aerial photos?

I’m not sure whether the term “orthophoto” covers processed images obtained from both satellites and aircraft.

In other words:

Can processed images obtained from satellites be called orthophotos?

Can processed images obtained from aircraft also be called orthophotos?

Thank you

Best

Jamal

Jamal Numan

Geomolg Geoportal for Spatial Information

Ramallah, West Bank, Palestine

Hi Jamal, at least as far as I have seen the terms used, orthophoto refers to photos obtained by aircraft. Satellite images are usually referred to as imagery. I think that aerial imagery may be the more general term though, and might refer to either.

I think “orthophoto” is a general term for both processed aerial photographs and processed high-resolution satellite imagery. Synonyms include orthophotograph and orthoimage. The two kinds can be distinguished as aerial orthophotos and satellite orthophotos.

Orthophotos are commonly generated from stereo images, which can be acquired by both aerial photography and satellites.

Just an opinion!!!

Think Location

First of all, I agree with the general definition of the term 'orthophoto'. Please refer to Orthophoto - Wikipedia, the free encyclopedia.

Secondly, there are many approaches to generating orthophotos in photogrammetry and remote sensing.

- The simplest and most common one is to use a high-resolution DTM/DEM with ground control points, combined with airphoto/image sensor models (like RPC), to remove perspective distortion and terrain distortion. The inputs can be high-resolution airphotos or satellite images.

- Lately, there has been a trend toward combining photogrammetry with computer vision to generate orthophotos. It is worth mentioning that high-resolution oblique airphotos/images can also be orthorectified in photogrammetry (or with computer vision), in particular to produce perspective views of photos, imagery and video from different platforms (including UAVs) in 3D imaging and 3D GIS ...

(See the snapshot and read the attachment)...
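
To make the DEM-based approach a little more concrete, here is a minimal Python sketch. It assumes a perfectly vertical frame photo with no tilt and no lens distortion, which is a big simplification of a real sensor model (no RPC, no ground control adjustment). The function names, the `sample` callback and the grid layout are made up for illustration and do not come from any particular software.

```python
def ground_to_image(X, Y, Z, cam_x, cam_y, cam_h, focal):
    """Project a ground point into an idealized vertical frame photo.

    Simplified collinearity equations (no tilt, no lens distortion).
    Returned image coordinates are in the units of `focal`,
    measured from the principal point.
    """
    scale = focal / (cam_h - Z)  # local photo scale depends on terrain height
    return (X - cam_x) * scale, (Y - cam_y) * scale


def orthorectify(sample, dem, x0, y0, cell, cam_x, cam_y, cam_h, focal):
    """Fill an ortho grid by looking up every ground cell in the source photo.

    sample(x, y)   -> pixel value at image coordinates (hypothetical callback)
    dem[row][col]  -> terrain height of that ground cell
    (x0, y0)       -> ground coordinates of cell [0][0]
    cell           -> ground size of one cell
    For simplicity, Y increases with the row index here.
    """
    ortho = []
    for r, dem_row in enumerate(dem):
        out_row = []
        for c, z in enumerate(dem_row):
            X = x0 + c * cell
            Y = y0 + r * cell
            # The terrain height z decides where this ground cell was imaged.
            x_img, y_img = ground_to_image(X, Y, z, cam_x, cam_y, cam_h, focal)
            out_row.append(sample(x_img, y_img))
        ortho.append(out_row)
    return ortho
```

The point of the sketch is the direction of the loop: it walks over the output ground grid and asks where each cell appears in the photo, so every output cell sits at its true ground position regardless of platform.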

Reminds me of the question 'what is a GIS?'

My answer to your question is YES and NO: when the images are first taken, they are regular images. Once they are processed, or orthorectified, they become orthophotos. So, in my experience, orthophotos are defined not by platform type, but by the process of "fixing" an image to get rid of the perspective effects of shooting through a lens. Both aerial cameras and satellite sensors use some form of lens, so both can produce orthophotos.

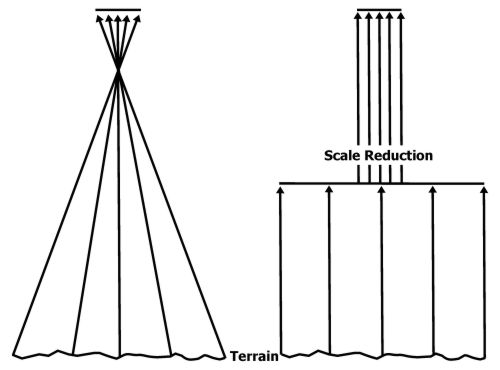

For example, see the following photo:

On the left, we see a traditional image being shot: the point where all the light rays cross is the focal point (perspective center) of the camera, and the flat surface at the top is the film or CCD of the imaging device. At this point, the only true representation of the terrain is along the light ray pointing straight down ("nadir"); everything else is displaced more and more the farther it lies from the center. This is why we see tall buildings "leaning" away from the center of the image. If a building were at the true center of the photo, we'd only see the top of it.
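
As a rough illustration of the "leaning" effect, the textbook relief displacement relation for a vertical photo is d = r * h / H, where r is the distance on the photo from the nadir point to the top of the object, h is the object's height and H is the flying height above the object's base. The small Python sketch below just evaluates that relation; the function name is my own, and it assumes a truly vertical photo.

```python
def relief_displacement(r, h, H):
    """Radial displacement of an object's top on a vertical photo.

    r : distance on the photo from the nadir point to the object's top
    h : object height above its base (same units as H)
    H : flying height above the object's base
    The result is in the units of r.
    """
    if H <= 0:
        raise ValueError("flying height must be positive")
    return r * h / H
```

For instance, a 50 m building imaged 80 mm from the nadir point from 1000 m up leans by 4 mm on the photo, while the same building exactly at nadir (r = 0) is not displaced at all.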

By orthorectifying imagery, we get what we see on the right, which is a mathematical transformation/interpolation of the imagery, using known ground control points and terrain elevation data, to create an image that (almost) eliminates the warp we get as we move away from center. It's almost as if every point in the image has now become nadir. Because of this, our scale remains constant throughout the entire image (e.g. 1 cm = 50 m), whereas in an unprocessed image, the scale varies from place to place with terrain relief and camera tilt.
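
One way to see why scale is not constant in an unprocessed frame photo: for a vertical photo, the local scale is f / (H - h), where f is the focal length, H the flying height above the datum and h the terrain elevation at that spot. The Python sketch below (the function name is my own, and camera tilt is ignored) returns the scale denominator, i.e. the N in 1:N.

```python
def scale_denominator(focal_m, flying_height_m, terrain_elev_m):
    """Return N for a local photo scale of 1:N on a vertical photo.

    focal_m         : camera focal length in metres
    flying_height_m : flying height above the datum in metres
    terrain_elev_m  : terrain elevation above the same datum in metres
    """
    height_above_ground = flying_height_m - terrain_elev_m
    if height_above_ground <= 0:
        raise ValueError("terrain must be below the camera")
    return height_above_ground / focal_m
```

With a 0.15 m lens flown at 3000 m, flat ground at the datum is imaged at roughly 1:20,000, but a hilltop at 600 m is imaged at roughly 1:16,000 in the same frame. After orthorectification, both end up at one common scale.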

Hope this helps!

Todd

I think of "orthophotos" as rectified photos that look more or less straight down on the earth, and which can be produced by multiple methods.

Thank you very much, guys, for the very useful input.

Based on the valuable answers, I can conclude that an orthophoto can be generated from either aerial photos (frame or digital) or satellite images. An orthophoto:

1. Is located at its correct position on the ground

2. Has the correct map scale for all of its elements
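
As a small illustration of those two properties, here is a Python sketch of how a pixel in a georeferenced orthophoto maps to ground coordinates through a six-parameter affine transform. It assumes the GDAL-style parameter order (origin x, pixel width, row rotation, origin y, column rotation, pixel height); the coordinate values and function names are invented for the example.

```python
import math


def pixel_to_ground(gt, col, row):
    """Map a pixel (col, row) to ground (X, Y) with a 6-parameter affine.

    gt follows the GDAL-style order:
    (origin_x, pixel_width, row_rotation, origin_y, col_rotation, pixel_height)
    For a north-up image the rotations are 0 and pixel_height is negative.
    """
    X = gt[0] + col * gt[1] + row * gt[2]
    Y = gt[3] + col * gt[4] + row * gt[5]
    return X, Y


def ground_distance(gt, pixel_a, pixel_b):
    """Ground distance between two pixels given as (col, row) tuples."""
    xa, ya = pixel_to_ground(gt, *pixel_a)
    xb, yb = pixel_to_ground(gt, *pixel_b)
    return math.hypot(xb - xa, yb - ya)


# Invented example: north-up orthophoto with 0.5 m pixels.
example_gt = (500000.0, 0.5, 0.0, 4100000.0, 0.0, -0.5)
```

Because one transform holds for the whole image, any pixel can be converted to a map position, and distances measured anywhere in the image use the same pixel size.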

Best

Jamal
