- Division in ArcMap Between Fields

Hello,

I am currently practicing with ArcMap, and I keep running into a problem when trying to divide one field by another. To give an idea of what I am working on: I created two short integer fields, plus a third field to hold the decimal result of dividing one by the other. I have tried making this third field both a float and a double, with the Precision set to "0" and "3" and the Scale set to "0" and "2". However, every time I try to divide the two short integer fields, I receive the message "There was a failure during processing." I don't understand why I keep getting this error; the only reason I can think of is that I'm dividing two short integer fields to get a decimal.

If someone could help me with this problem, it would be much appreciated.

Accepted Solutions

You are obviously working with a shapefile.

Working with fields in shapefiles by adding a field in ArcCatalog—Help | ArcGIS for Desktop

- Precision—The number of digits that can be stored in a number field. For example, the number 56.78 has a precision of 4.

- Scale—The number of digits to the right of the decimal point in a number in a field of type float or double. For example, the number 56.78 has a scale of 2. Scale is only used for float and double field types.
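
To make the two definitions concrete, here is a minimal Python sketch that counts the digits a value needs at a given scale and compares that with a precision. The helper names `digits_needed` and `fits` are made up for illustration, and the sketch only mirrors the definitions quoted above; it is not how ArcMap itself validates a field.

```python
from decimal import Decimal


def digits_needed(value, scale):
    """Count the digits needed to store value with `scale` decimal places."""
    # Round the value to the requested number of decimal places.
    step = Decimal(10) ** -scale  # e.g. scale=2 -> Decimal('0.01')
    rounded = Decimal(str(value)).quantize(step)
    # as_tuple().digits holds every stored digit, left and right of the point.
    return len(rounded.as_tuple().digits)


def fits(value, precision, scale):
    """True if value fits a field with the given precision and scale."""
    return digits_needed(value, scale) <= precision


print(digits_needed(56.78, 2))  # 4, matching the example in the help text
print(fits(12.5, 3, 2))         # False: 12.50 needs 4 digits
print(fits(12.5, 6, 2))         # True
```

By these definitions, a precision of 3 with a scale of 2 leaves room for only one digit to the left of the decimal point.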

Increase your precision to something like 6 and set your scale to 2 or 3.

Another good reason to work with FGDB feature classes instead.

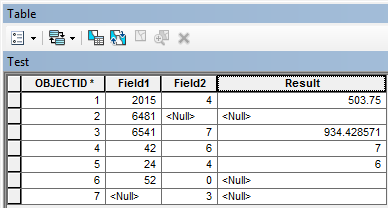

At first I thought this may be an issue with dividing by zero or null values in your input fields. However, after testing this in ArcMap 10.2 with a file geodatabase table the field calculator produces the following results without raising any errors:

What version of ArcMap are you using and what is your underlying data stored in?

Integer fields should be used for nominal or ordinal data (categories or ranks). Data of that kind should never be used in a calculation involving division or multiplication, and for categorical data no mathematical operation makes sense at all. If you intend to use a field in any basic mathematical operation (+, -, /, or *), you should be using double fields. The memory overhead is hardly an issue today unless you are working with large data sets, which you obviously are not.

A tip that avoids these issues in any programming environment: before performing the calculation, always query the fields involved for valid data only, or account for invalid values in the calculation itself. Once you have valid data, also make sure you upcast at least one of the operands to floating point (i.e., give it a decimal part, 0 or otherwise) before performing operations like division. Here is an example in Python showing how not knowing the rules can lead to error:

Python 2.7

    >>> 2/3  # the innocent calculation and its result
    0  # integer division by default

Python 3.4

    >>> 2/3  # numbers are upcast to floats
    0.6666666666666666
    >>> 2//3  # how to perform integer division in python 3.4
    0

Back to python 2.7

    >>> 2./3  # notice the decimal after 2
    0.6666666666666666
    >>> 2.0/3  # lets be extra clever
    0.6666666666666666
    >>> float(2)/3  # fancy-fancy
    0.6666666666666666
    >>> 2/float(3)  # you can be fancy anywhere
    0.6666666666666666
    >>> float(2/3)  # but just be clever and remember the rule about upcasting
    0.0
    >>>

So if you had

- checked for nulls,

- upcast one or both of your fields and

- checked which rules were being used

you wouldn't have any problems at all.
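
The three checks above can be sketched as one small function. This is a minimal sketch, not the poster's own code: the name `safe_divide` and its `fallback` parameter are hypothetical. It assumes the Field Calculator's Python parser, where a function like this could go in the pre-logic code block and be called with an expression such as safe_divide(!field1!, !field2!), with field1 and field2 standing in for your own field names.

```python
def safe_divide(numerator, denominator, fallback=None):
    """Divide two values as floats, returning `fallback` when undefined."""
    # Check for nulls: missing values arrive in Python as None.
    if numerator is None or denominator is None:
        return fallback
    # Division by zero is undefined, so skip it as well.
    if denominator == 0:
        return fallback
    # Upcast one operand so integer inputs never trigger integer division
    # (this matters in Python 2 and is harmless in Python 3).
    return float(numerator) / denominator


print(safe_divide(2, 3))      # about 0.667 in both Python 2 and 3
print(safe_divide(5, 0))      # None
print(safe_divide(5, 0, -1))  # -1, a sentinel chosen by the caller
```

Returning None suits a geodatabase table, which can hold nulls. Shapefile numeric fields cannot store nulls, so a sentinel passed as `fallback` is probably the safer choice there.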

Hey everyone,

First off, sorry for the late reply.

So I revisited my problem tonight from last week, and I used FC Basson's suggestion of changing my precision and scale, and I used Dan's suggestion of switching my fields to doubles. I tried this with a test run with data I merely made up on the spot and it worked, but then I went back to the data I wanted to evaluate and it didn't work.

Then I finally realized my problem - several of my denominator values were zeroes. In my test run with the made-up data my values all were greater than zero. So I finally changed all my "0" values to "1" and I got the data to work.

Owen - I am using ArcMap 10.3.1.

Thanks for the help everyone.

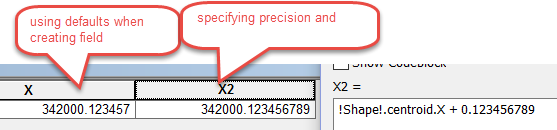

It might also be a good time to clear up any misconceptions about shapefile precision. If you leave precision and scale at their defaults of 0 and 0 when you select type Double, you get 6 decimal places. If you specify a precision and scale, you pretty well get what you want, within the limits of what a 64-bit floating-point number can record (about 53 bits of information).

So what does that mean?

Well, let's do a calculation. I added 2 fields, calculated the X coordinate of a rectangular polygon, and added 0.123456789 to the resulting value. The field on the left was created with a precision and scale of 0 and 0, and I got 6 decimal places; the one on the right was created with explicit precision and scale values in an attempt to capture the decimals given, and I got 9 decimal places. A millimeter? I would only need 3...we are well beyond that... this is precision NOT accuracy (totally different topic).
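
The "about 53 bits" limit can be checked in plain Python, whose float is a 64-bit double. This is a small sketch with a made-up coordinate value (the real X coordinate is not given above), suggesting that 9 decimal places survive comfortably at that magnitude.

```python
import sys

# A Python float is a 64-bit double: 53 bits of mantissa,
# good for about 15 significant decimal digits.
print(sys.float_info.mant_dig)  # 53
print(sys.float_info.dig)       # 15

# Hypothetical X coordinate in metres, plus the same decimal offset.
x = 300000.0 + 0.123456789

as_default = "%.6f" % x   # 6 decimal places, mimicking the default field
as_explicit = "%.9f" % x  # 9 decimal places, mimicking the explicit field
print(as_default)   # 300000.123457
print(as_explicit)  # 300000.123456789
```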

Now what does this mean again?

Well, these are real-world coordinates in a Modified Transverse Mercator projection (MTM zone 9 in Canada). The scale factor for this projection is 0.9999, the zone width is 3 degrees of longitude, and the units are meters (metres... in Canadian and world spelling). Soooo my coordinates, regardless of which field I choose, can be represented with far more precision than I need. In fact, if I wanted to limit which values would be considered equal, I would use a file geodatabase and not a shapefile (see XY resolution, environment settings).

These discussions have a long history, so you need not worry about whether one storage system is more precise than the other, as is often implied. Shapefiles will be around for a long time; they are a well-recognized and widely used storage format even given their shortcomings. So when you choose a file format to show your data, make sure you have all the facts, understand the pros, cons and limitations of each and ... more importantly ... know the needs of the people that you want to distribute the data to... not everyone in the world uses Arc* products.

I had this issue and thought it was the zero-denominator problem, but it still didn't work even after I set the correct precision and scale. My problem was that I was dividing two integers and expecting a decimal result. In the Python code guide for the ArcMap Field Calculator, there's a note saying that to get a decimal from dividing integers, one of the fields needs to be in decimal form, which can be done by converting it to a float. So float(!field1!)/!field2! *100 worked, but !field1!/!field2! would not.

Olmsted County GIS

GIS Analyst - GIS Solutions
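
Outside the Field Calculator dialog, the expression from the last post could also be run from a script. This is a rough sketch, assuming an ArcMap Python install where arcpy is available; the function names, the table path and the field names are placeholders, and it does not guard against zero denominators.

```python
def percent_expression(numerator_field, denominator_field):
    """Build a Field Calculator expression that upcasts the numerator."""
    # !name! is the Python-parser syntax for a field value.
    return "float(!{0}!) / !{1}! * 100".format(numerator_field,
                                               denominator_field)


def calculate_percent(table, out_field, numerator_field, denominator_field):
    """Write the percentage into out_field, which must already exist."""
    import arcpy  # imported here so the helper above works without ArcGIS
    expression = percent_expression(numerator_field, denominator_field)
    arcpy.CalculateField_management(table, out_field, expression,
                                    "PYTHON_9.3")


print(percent_expression("field1", "field2"))
# float(!field1!) / !field2! * 100
```

Keeping the expression builder separate from the arcpy call means the string can be checked on any machine before it is run against real data.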