- Home

- :

- All Communities

- :

- Products

- :

- Data Management

- :

- Geodatabase Questions

- :

- Fundamental flaw when opening attribute tables in ...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Fundamental flaw when opening attribute tables in ArcGIS Pro?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Can someone from ESRI please have a good look at this. @Kory Kramer, @George Thompson, @Vince Angelo

For some background:

- ArcGIS Pro 2.1.3 on Windows 10

- PostgreSQL 9.6 on Ubuntu 18.04 running on a Virtualbox VM executed on the Windows host

Situation:

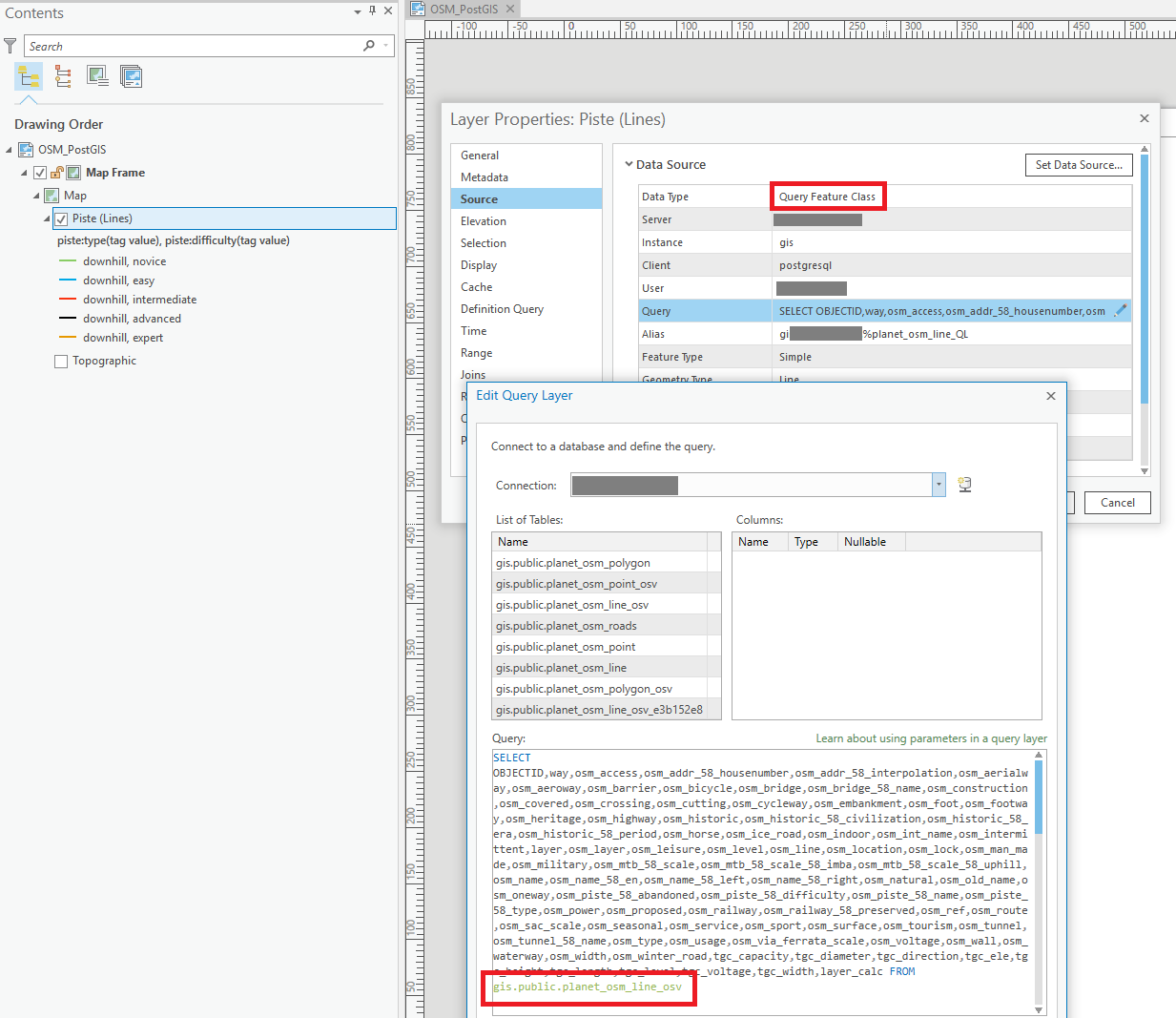

A line layer with a valid Definition Query set present in the TOC. The layer is an ArcGIS Query Layer (so a spatial database but not an enterprise geodatabase) and references a huge Europe OpenStreetMap extract containing +/- 65M records in the line table. This line table has both valid spatial index (GIST) and attribute indexes. The display performance of the layers in Pro's Map window is adequate, and actually surprisingly fast given the huge data source.

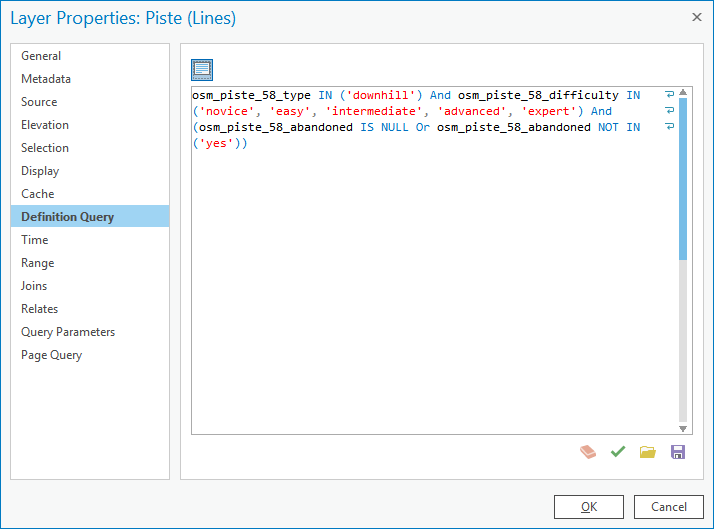

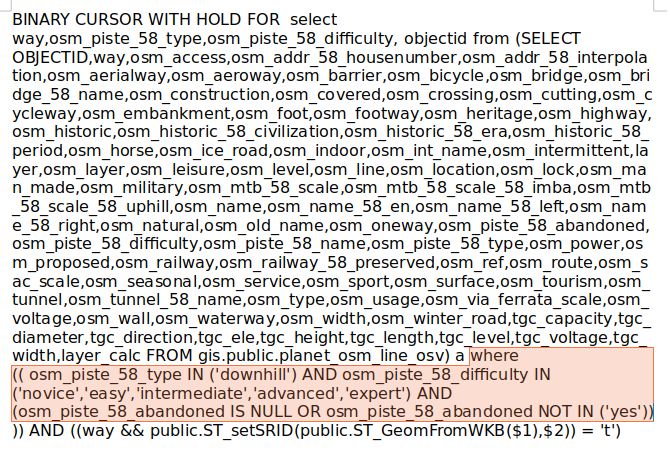

The Definition Query set on the layer, see image, references an indexed field, osm_piste_58_type:

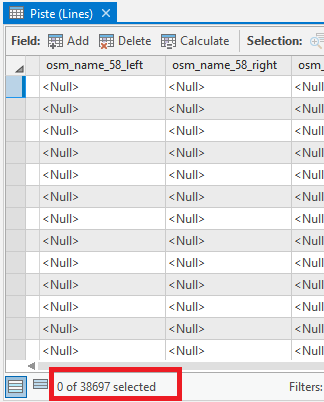

If I insert the Definition Query in DBeaver IDE, and prepend it with the necessary SELECT...FROM... that is missing in the Definition Query itself, and run an execution plan, the query runs in less than 3 seconds (2163ms) and returns 38697 rows, so just under 40k records. The execution plan shows the database correctly using the available index ("Index Scan"):

The problem:

When I attempt to open the Attribute Table in Pro by right clicking the layer and choosing "Attribute Table", Pro, instead of showing the records near instantly as it should based on the 3 seconds execution plan, instead takes > half an hour to open the Attribute Table. This is the time needed for a full table scan. When it is finished, it does show the proper 40k records, so the final selection was made.

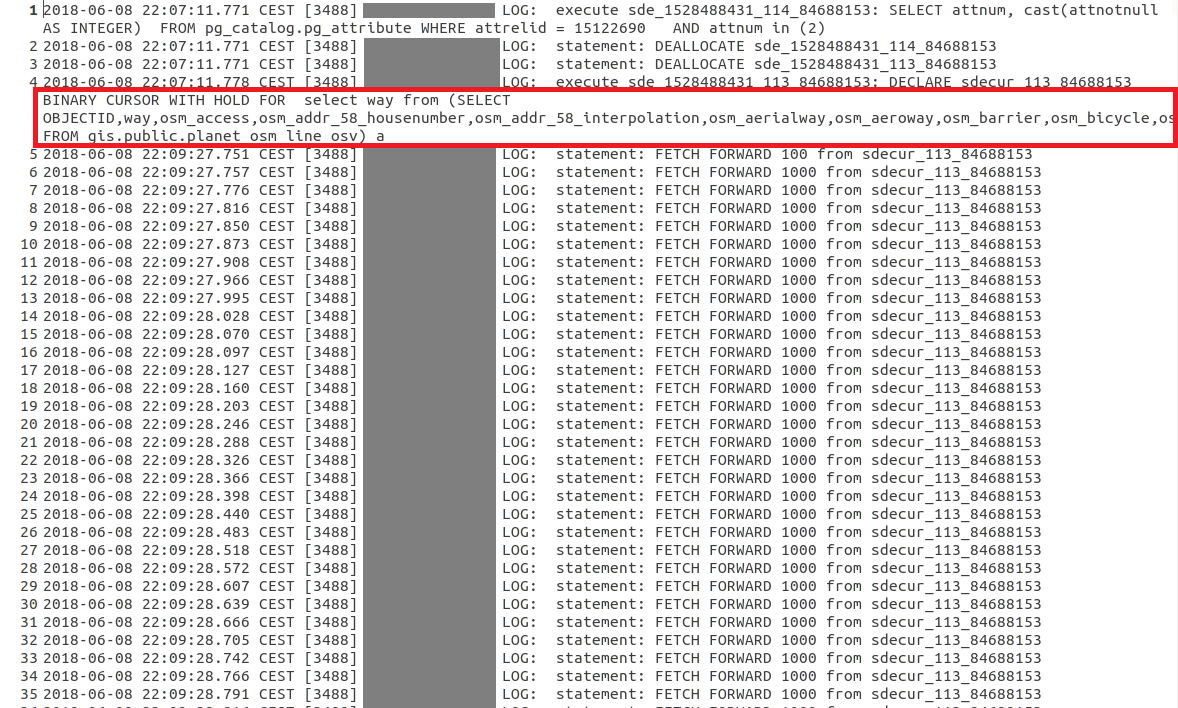

When I enable logging in PostgreSQL and check the created log, I can see the SQL generated the moment I open the attribute table. Much to my surprise, even though the Query Layer had a Definition Query set, this Definition Query is not set on the SQL send to the database to restrict the binary cursor being created, as a consequence, the entire table seems subsequently to start being scanned in chunks of 1000 records, see the FETCH FORWARD 1000 statements in the log. This seems to go on the entire half hour, until a full table scan of all the millions of records is done, and the final table is created. As I wrote above, the final result in the Attribute Table, does correspond to the Definition Query, and is only the 38697 rows.

To inspect this better, I copied both the cursor statement that is initially used to create the layer (which is fast!), and compared it with the above statement representing the opening of the Attribute Table in an OpenOffice document, see the below screenshots. Notice how the first screenshot, which represent the moment the layer is created when the Pro Map document is opened and the TOC layer generated, does contain the Definition Query in the WHERE statement. This WHERE statement is omitted though, in the second screenshot that represents the opening of the Attribute Table:

- Creation of Query Layer:

- Opening Attribute Table:

Am I missing something here, or fundamentally misunderstanding what is going on and misinterpreting this data? Why is there no WHERE statement submitted to the database when opening the Attribute Table of a (Query) Layer?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

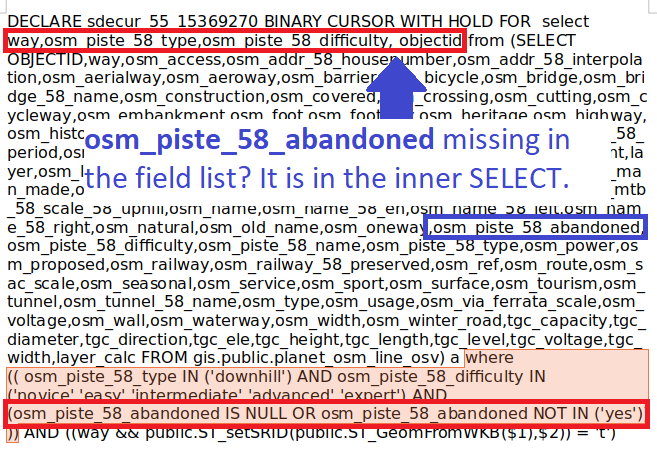

I am now even more confused about what is going on. If you look closely at the first SQL statement that represents the generation of the TOC layer, it appears the required osm_piste_58_abandoned field, that is part of the Definition Query and thus the WHERE clause, is not listed in the field list of the BINARY CURSOR. The other required fields for evaluating the WHERE clause (osm_piste_58_type and osm_piste_58_difficulty) are listed:

The SQL statement thus shouldn't even be valid, yet ArcGIS Pro opens up happily without error and the layer seems OK once I inspect the attributes?...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

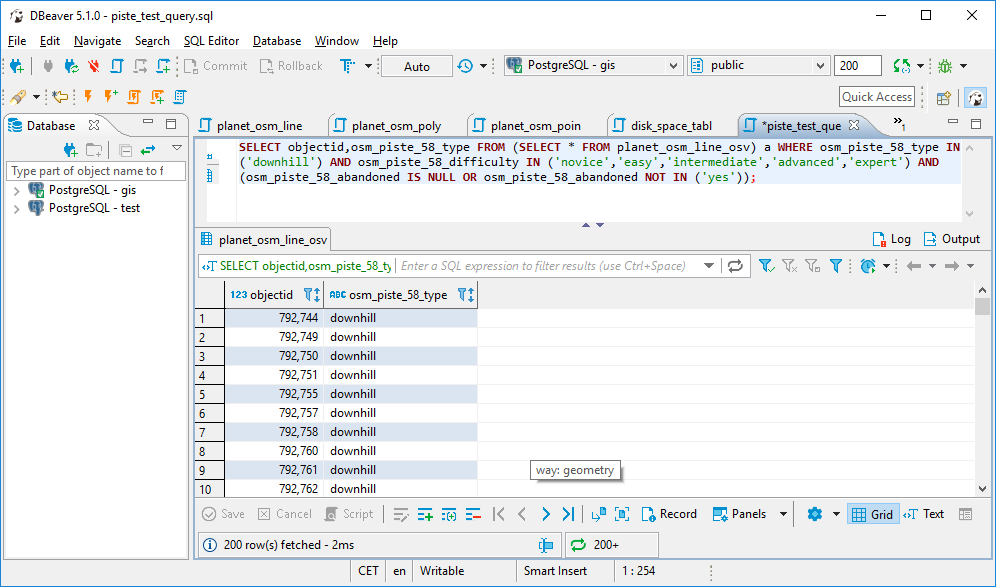

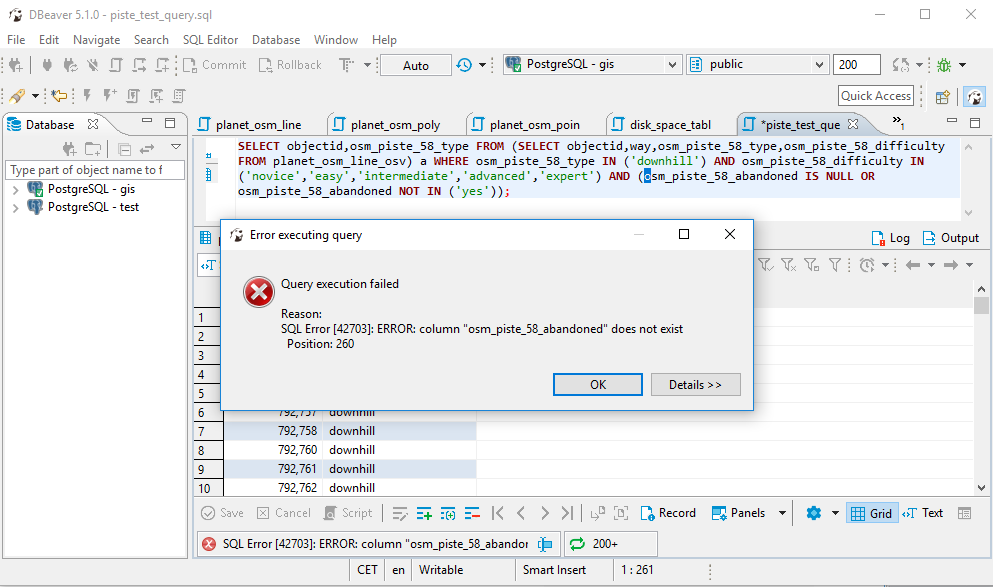

OK, this particular issue with the apparently missing osm_piste_58_abandoned field seems (partly) false alarm. I don't know if this is actually part of the SQL standards, but it seems PostgreSQL is fault tolerant in this respect, and silently adds the required field to satisfy the WHERE condition. I conclude this based on creating a similar statement in DBeaver, that does return results. Nonetheless, it would be nicer to actually see such field explicitly included in the SQL send to the database, also for consistency reasons, as the osm_piste_58_type and osm_piste_58_difficulty fields that are also part of the WHERE statement, are included as I already stated in the previous post:

Only when I exclude the osm_piste_58_abandoned from the inner SELECT, does it fail:

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

I am having difficulties with query layers myself in both Arcmap 10.5.1 and Pro 2.1.3. Using wireshark I see order by being dropped by Arcmap when sending a request to an Oracle database. This is different from your issue, but points to weaknesses of query layers. I have 2 separate bugs created for query layers used in Arcmap 10.5.1.

The order by portion of the sql statement is also being dropped in Pro 2.1.2 and 2.1.3 so it seems the issue is similar to yours. It is BUG-000113998 for the sorting issue, but I think the ESRI support analyst only applied the bug to ArcMap and not to Pro.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Hi Marco - I think that this would need to be reviewed by a Support analyst on the Geodata team to investigate in more detail. I unfortunately do not have a Postgres instance readily available to just sneak a peak at.

If I remember correctly, ArcGIS clients treat tables in non-geodatabases as query layers. I am not sure when using query layers if the index is used or not. Maybe someone else can chime in on that one.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

@George Thompson:

To clear up a few points:

- Yes, this issue I detected relates to a non-geodatabase spatial database, and the layers added to the TOC are ArcGIS Query Layers.

- This issue has little to do with indexes. The basic problem is no WHERE clause being submitted. You can have a fully indexed database, but if Pro, despite the Query Layer having a Definition Query set, does not submit a WHERE clause to PostgreSQL when opening the attribute table of a Query Layer, then indexes are of no use and the only result of the SQL statement being submitted to PostgreSQL can be a full table scan... Actually, I find it somewhat of a mystery that ArcGIS Pro, after the full table scan, does come up with the correct attribute table result (restricted to the WHERE clause that the Definition Query represents). Clearly Pro must be evaluating the records returned by the FETCH FORWARD 1000 statements on the fly, as otherwise it should return the entire table.

- Yes, please do forward this to a support analyst on the Geodata team.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Marco:

Does your shop use Oracle databases as that is where the issue occurs for me on a non-geodatabase spatially enabled feature class. It might exist in Postgre, but my org does not use that type of database.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

No, this is just PostgreSQL I am working with. I could attempt to replicate your ORDER BY issue though in PostgreSQL if you are interested to know such result, although it likely adds little value for an already logged bug.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

I think it would be helpful as it would display that there are a multitude of issues that ESRI needs to deal with in terms of query layers, as ESRI very narrowly word their bugs so my issue might get fixed but yours would not as we are working with different databases.

I would be curious to know if this is expected behavior, because then ESRI would not fix the issue(s). This is important to my org as query layers was the way we were going to distribute our enterprise data to internal GIS users but I am encountering fundamental issues with the way ArcMap works with query layers just like you did.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

@Marco:

I'm not sure if any of your end goals with the data is to use in a portal where it can be added back into Pro (use the data thru a service instead of directly), but when I passed my Oracle based query layer thru AGOL (a portal) as a feature layer from my AGS, the sorting issue magically went away (At least for a simple feature class). It seems that somehow AGOL (a portal) cleanses the SQL query so it works as expected.

Would you consider the behavior in the below thread another fundamental flaw with a query layer going back to database for a full table scan:

https://community.esri.com/thread/216276-available-values-for-a-query-layer-subset-selection