Slope Percent is off after "Merging" several DEMs

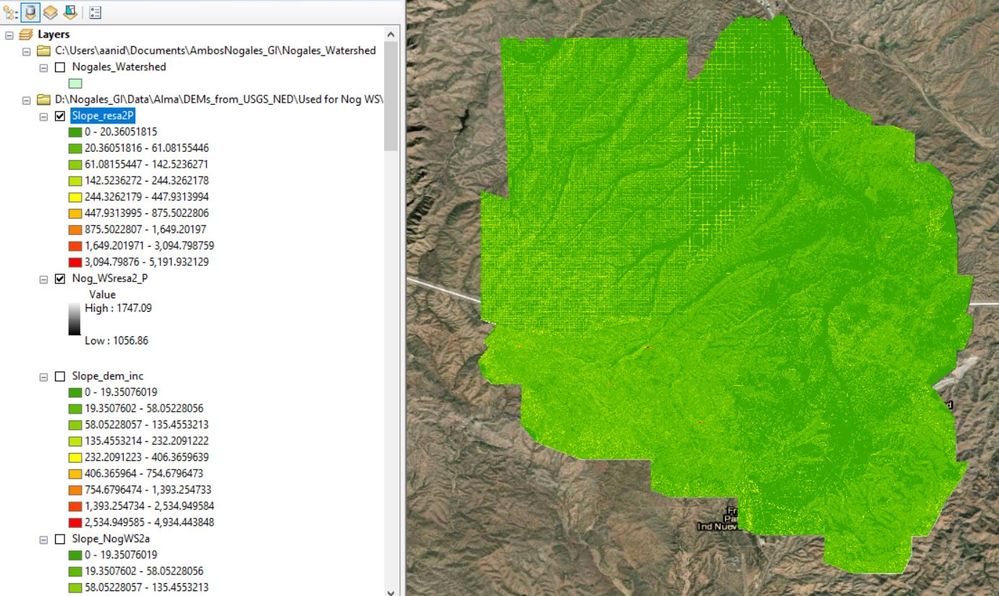

To cover my entire area of interest, I had to "merge" several DEMs with three different resolutions (0.6 m, 3 m, 10 m) using the Mosaic To New Raster tool. I then resampled the merged raster using the Nearest technique.

It all looked great until I calculated slope percent from the resampled raster. The ranges are crazy high (in the thousands), and it is obvious which areas came from the different resolutions.

I've tried playing around with the parameters in the environment, but nothing has changed. Any ideas??

Try slope in degrees first, to identify where your high values occur.

It doesn't take much to get a high percent slope (e.g., a 2 m rise over a 1 m run = 200%), so you might have cliff-like features in the image.
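To make the rise-over-run arithmetic above concrete, here is a minimal sketch of how percent slope relates to slope in degrees. The function names are made up for illustration and are not part of any ArcGIS API; the only thing assumed is the standard definition, percent slope = rise / run × 100.

```python
import math

def percent_slope(rise, run):
    """Percent slope from a rise and a run in the same units."""
    return rise / run * 100.0

def degrees_to_percent(deg):
    """Convert a slope angle in degrees to percent slope."""
    return math.tan(math.radians(deg)) * 100.0

def percent_to_degrees(pct):
    """Convert percent slope to a slope angle in degrees."""
    return math.degrees(math.atan(pct / 100.0))

print(percent_slope(2, 1))                 # 200.0
print(round(percent_to_degrees(200), 1))   # 63.4
print(round(degrees_to_percent(89)))       # 5729
```

Percent slope grows without bound as the angle approaches 90 degrees, so values in the thousands correspond to near-vertical faces, which is why checking the degrees output first helps locate them.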

Also see this link regarding resampling and surface derivatives: performing-analysis/cell-size-and-resampling-in-analysis

PS: I have moved this post to the Spatial Analyst Questions section: arcgis-spatial-analyst-questions

... sort of retired...

Thanks Dan!

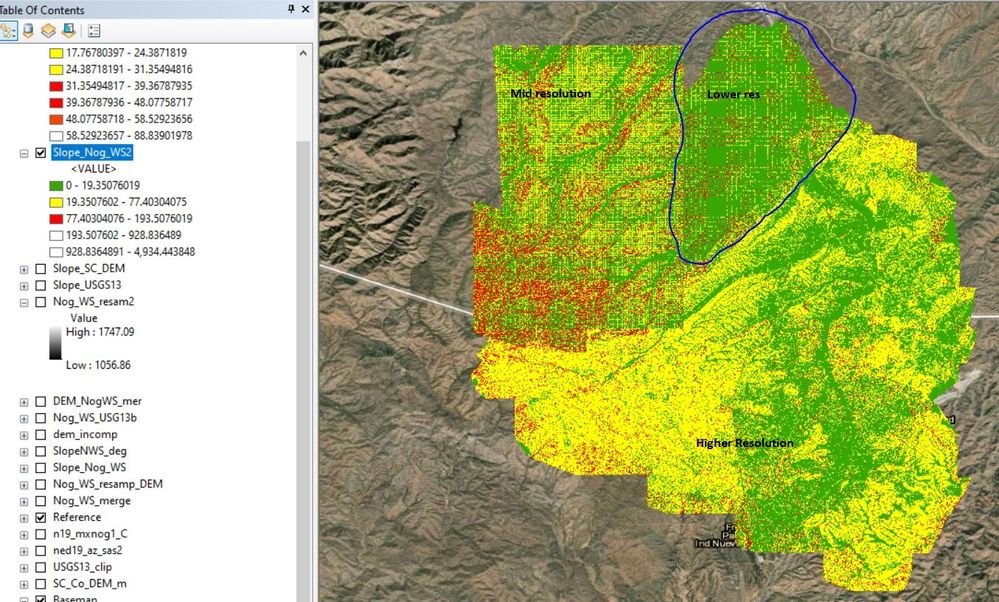

I played around with the resampling and tried the Cubic technique instead of Nearest, but it didn't seem to make any difference in the output raster. Also, I reclassified my slope percent, and most of my area falls within the 0-193% range, which agrees with the degrees visualization you suggested.

I am still unsure of these results, though, because of the gridded appearance in the areas where the lowest-resolution DEM was "stitched" in. I have circled it in the picture. Do you think this is a cause for concern, or am I overthinking this?

PS: Thanks for moving my question; this is my first time posting here!
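One plausible explanation for the gridded look and the very high values, offered as a sketch and not a diagnosis of this particular dataset: nearest-neighbor upsampling copies each coarse cell into a block of identical fine cells, so the surface turns into flat steps with abrupt jumps at the block edges. The example below uses a hypothetical 1D elevation profile and simple adjacent-cell differences (an assumption for illustration; the Slope tool itself works on a 3 x 3 neighborhood).

```python
def nearest_upsample(coarse, factor):
    """Repeat each coarse cell `factor` times (1D nearest-neighbor upsampling)."""
    return [z for z in coarse for _ in range(factor)]

def percent_slopes(profile, cell_size):
    """Percent slope between each pair of adjacent cells along a profile."""
    return [abs(b - a) / cell_size * 100.0 for a, b in zip(profile, profile[1:])]

# Hypothetical elevations (m) on 10 m cells: a steady 50% grade.
coarse = [100.0, 105.0, 110.0]
coarse_slopes = percent_slopes(coarse, 10.0)

# Upsample to 1 m cells: 30 cells made of three flat 10-cell blocks.
fine = nearest_upsample(coarse, 10)
fine_slopes = percent_slopes(fine, 1.0)

print(max(coarse_slopes))        # 50.0
print(max(fine_slopes))          # 500.0
print(fine_slopes.count(0.0))    # 27
```

In this toy profile the same 5 m elevation change is spread over 10 m at the original cell size but compressed into a single 1 m cell after upsampling, so the slope at each block edge is ten times larger while everything inside a block is flat.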

I don't know what to suggest at this point, but I am curious why you have three different resolutions to begin with. Is it not possible to go back and re-interpolate the whole area, assuming you have the original data? Or are you using products that are only available at those cell sizes?

You might want to resample/aggregate your finer-resolution rasters to the coarsest resolution, because the reverse doesn't appear to be working very well. Making something coarse appear fine usually doesn't work.
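As a rough illustration of what aggregating to a coarser cell size means, the sketch below averages non-overlapping blocks of a small, made-up elevation grid. It is plain Python for clarity, and `aggregate_mean` is a hypothetical helper; in ArcGIS you would presumably use a geoprocessing tool such as Aggregate or Resample instead.

```python
def aggregate_mean(grid, factor):
    """Average non-overlapping factor x factor blocks of a 2D list of numbers."""
    rows, cols = len(grid), len(grid[0])
    if rows % factor or cols % factor:
        raise ValueError("grid dimensions must be multiples of factor")
    out = []
    for r in range(0, rows, factor):
        out_row = []
        for c in range(0, cols, factor):
            # Collect every fine cell that falls inside this coarse cell.
            block = [grid[i][j]
                     for i in range(r, r + factor)
                     for j in range(c, c + factor)]
            out_row.append(sum(block) / len(block))
        out.append(out_row)
    return out

# Made-up 4 x 4 fine grid, aggregated by a factor of 2 to a 2 x 2 grid.
fine = [
    [1.0, 2.0, 5.0, 6.0],
    [3.0, 4.0, 7.0, 8.0],
    [9.0, 10.0, 13.0, 14.0],
    [11.0, 12.0, 15.0, 16.0],
]
print(aggregate_mean(fine, 2))   # [[2.5, 6.5], [10.5, 14.5]]
```

Going in this direction discards detail from the finer DEMs, but every output cell is backed by real measurements, so slope computed afterward is not dominated by artificial steps.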
