Union tool error in ArcGIS Pro 3.2.0

I think I've noticed a major bug with ArcGIS Pro 3.2.0.

I've attached the project and its data (Test.zip).

There are three feature classes in the geodatabase:

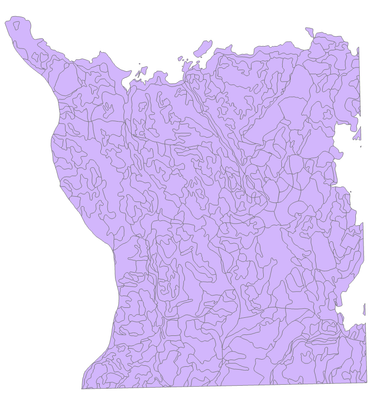

- standsCD, a polygon feature class;

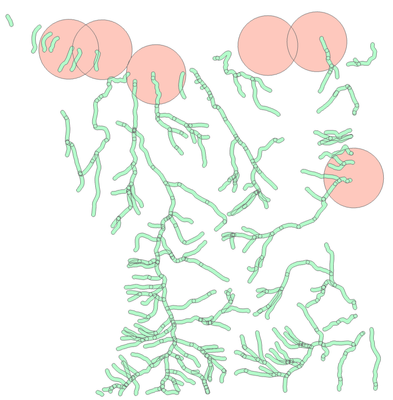

- StreamsCD_Buffer_Union, the stream buffers, symbolized by unique values of the S_Distance attribute;

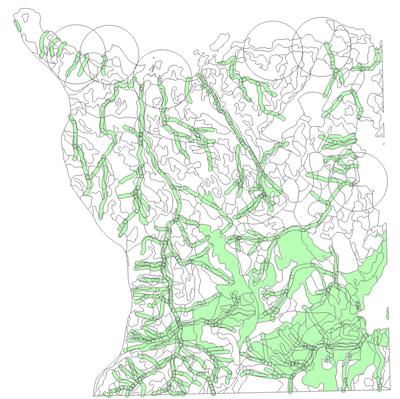

- the output feature class from the Union geoprocessing tool.

The Union tool cannot handle a simple union of the first two feature classes: some output features are assigned wrong values for the S_Distance attribute. For the unioned polygons located outside the surface stream buffers, the S_Distance value should be 0, but for many of these features the tool incorrectly assigns a value of 50.

This seems to me a very serious bug in the application, one that makes it virtually impossible to use in analyses.
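A minimal sketch of how the symptom above could be checked programmatically. It assumes Union's FID_<input> naming, where a value of -1 means the output feature does not overlap that input, so the field name FID_StreamsCD_Buffer_Union is an assumption, as is treating NULL the same as 0. The rows are plain tuples so the sketch runs without ArcGIS; in Pro they could be read with arcpy.da.SearchCursor.

```python
from typing import Iterable, List, Optional, Tuple

# Hypothetical row layout: (OBJECTID, FID_StreamsCD_Buffer_Union, S_Distance).
# Plain tuples are used so this runs without ArcGIS installed.
Row = Tuple[int, int, Optional[float]]


def find_bad_s_distance(rows: Iterable[Row]) -> List[int]:
    """Return OBJECTIDs of union output features that lie outside every
    buffer (buffer FID == -1) but still carry a nonzero S_Distance."""
    bad = []
    for oid, buffer_fid, s_distance in rows:
        outside_buffers = buffer_fid == -1
        # Treat NULL like 0: both mean "no buffer distance assigned".
        if outside_buffers and (s_distance or 0) != 0:
            bad.append(oid)
    return bad


sample = [
    (1, 7, 50.0),   # inside a buffer: 50 is legitimate
    (2, -1, 0.0),   # outside all buffers: correct
    (3, -1, 50.0),  # outside all buffers: the symptom described above
    (4, -1, None),  # outside all buffers, NULL value: treated as correct
]
print(find_bad_s_distance(sample))  # [3]
```

An empty result would mean every feature outside the buffers carries 0 (or NULL) for S_Distance.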

Tomasz Bartuś

I just ran into a very similar issue with the Union tool. I was performing a simple union of two polygon feature classes, and the result should have been a new feature class with about 600 features. Instead, it produced a feature class with 6,000+ records. It turned out that each unique geometry had 10+ records associated with it, with many erroneous values in the attribute fields.

Ultimately, this post led me to repair the geometry of my input feature classes. One of the inputs had 12 or so errors related to ring ordering. After repairing the geometry and rerunning the tool, it worked properly and I got the correct output with ~600 features.
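Ring-ordering errors relate to Esri's storage convention, in which exterior rings run clockwise and interior rings (holes) run counterclockwise. As a simplified illustration only (Check Geometry and Repair Geometry are the real tools, and they test far more than this), the orientation of a ring can be read from the sign of its shoelace area. The function names here are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def signed_area(ring: Sequence[Point]) -> float:
    """Shoelace formula with the y axis pointing up: positive for
    counterclockwise rings, negative for clockwise rings. A repeated closing
    vertex adds a zero term, so closed and open rings give the same result."""
    n = len(ring)
    total = 0.0
    for i in range(n):
        x1, y1 = ring[i]
        x2, y2 = ring[(i + 1) % n]
        total += x1 * y2 - x2 * y1
    return total / 2.0


def ring_order_problems(exterior: Sequence[Point],
                        holes: List[Sequence[Point]]) -> List[str]:
    """Flag rings that break the convention assumed here:
    exterior ring clockwise, holes counterclockwise."""
    problems = []
    if signed_area(exterior) > 0:
        problems.append("exterior ring is counterclockwise")
    for i, hole in enumerate(holes):
        if signed_area(hole) < 0:
            problems.append(f"hole {i} is clockwise")
    return problems


# A 10 x 10 square with a 6 x 6 hole, first in the expected orientation...
good_exterior = [(0, 0), (0, 10), (10, 10), (10, 0), (0, 0)]
good_hole = [(2, 2), (8, 2), (8, 8), (2, 8), (2, 2)]
# ...then with both rings reversed.
bad_exterior = list(reversed(good_exterior))
bad_hole = list(reversed(good_hole))

print(ring_order_problems(good_exterior, [good_hole]))  # []
print(ring_order_problems(bad_exterior, [bad_hole]))
# ['exterior ring is counterclockwise', 'hole 0 is clockwise']
```

The reversed rings enclose exactly the same area, which is why this kind of error is easy to miss visually while still confusing an overlay operation.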

I frequently use public datasets from states and counties for geospatial analysis, and I have never run into this before. The data I am using is exactly the same data I used a year ago for the same process; I'm vetting last year's lab exercise before assigning it to this year's class. Last year I was using ArcGIS Pro 3.1 or 3.1.1 and did not experience any issues.

I have a few questions for an Esri representative:

- Are there higher data integrity requirements built into 3.2?

- Have ring ordering errors always affected the Union tool?

- The operation was completed with no warnings or error messages. Isn't there some sort of QA function that detects when several records with identical geometry are created?

-Dan

Hi Dan.

There are no new requirements regarding the correctness of input geometry. The same high standards apply.

Bad geometry can always affect data processing, regardless of the tool.

For your third question, it's not clear to me what you are asking. Identical geometry can be a valid output of an overlay operation, and there are tools (Find Identical/Delete Identical) that can detect and remove identical geometry if it is not wanted. If you are asking whether we have code in place to detect when bad geometry is being used and could potentially cause an issue... we do not. Checking and repairing all input geometry during analysis would hurt performance, and many issues may require manual intervention. In particular, bad spatial reference properties can make 'good' geometry 'bad', and 'bad' geometry worse, once they are applied during analysis, and repairing a spatial reference requires recreating the feature class (see https://www.esri.com/arcgis-blog/products/arcgis-pro/analytics/geoprocessing-resolution-tolerance-an... ). If errors are thrown while using 'bad' data, we catch and report them. From the doc linked below:

The onus is on the data's consumer to ensure that the feature class contains valid geometries before the data is used for projects or analysis.

Please see https://pro.arcgis.com/en/pro-app/3.0/tool-reference/data-management/checking-and-repairing-geometri... for the documentation on checking and repairing geometry in preparation for analysis.

If you have a copy of the data from before the repair that I could take a look at, I'd appreciate it. It would let me confirm a few things.

Thx. Ken

Hi Ken,

I appreciate the response. Attached are the two inputs I used for the union operation (no repair performed). I am curious to see if you're able to recreate the issue. Also, I would love to hear your thoughts afterwards.

To clarify my third question: I was wondering why Pro would not recognize an erroneous output. I assumed the application would flag something when a union operation produces a feature class with 10+ records for each unique polygon.
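A rough sketch of the kind of QA check described here, in plain Python. It is a simplified stand-in for the Find Identical tool, not how that tool works: single-ring polygons only, coordinates rounded instead of compared within an XY tolerance, and a hypothetical `threshold` parameter. Since identical geometry can be a legitimate overlay result, a hit is better treated as a warning to inspect than as an error.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Sequence, Tuple

Point = Tuple[float, float]
Key = Tuple[Point, ...]


def geometry_key(ring: Sequence[Point], decimals: int = 6) -> Key:
    """Hashable key for a closed, single-ring polygon. Coordinates are rounded
    (a crude stand-in for an XY tolerance) and the ring is rotated to start at
    its smallest vertex, so the same ring stored from a different starting
    vertex gets the same key. Vertex direction is not normalized."""
    pts = [(round(x, decimals), round(y, decimals)) for x, y in ring[:-1]]
    start = pts.index(min(pts))
    return tuple(pts[start:] + pts[:start])


def flag_duplicates(features: Iterable[Tuple[int, Sequence[Point]]],
                    threshold: int = 2) -> Dict[Key, List[int]]:
    """Group feature IDs by geometry key and return only the groups with at
    least `threshold` members."""
    groups: Dict[Key, List[int]] = defaultdict(list)
    for oid, ring in features:
        groups[geometry_key(ring)].append(oid)
    return {key: oids for key, oids in groups.items() if len(oids) >= threshold}


square = [(0, 0), (0, 1), (1, 1), (1, 0), (0, 0)]
same_square_other_start = [(1, 1), (1, 0), (0, 0), (0, 1), (1, 1)]
other = [(5, 5), (5, 6), (6, 6), (6, 5), (5, 5)]
features = [(1, square), (2, same_square_other_start), (3, square), (4, other)]

for key, oids in flag_duplicates(features, threshold=3).items():
    print(f"{len(oids)} records share one geometry: OIDs {oids}")
# 3 records share one geometry: OIDs [1, 2, 3]
```

With the output described above (10+ records per unique polygon), a threshold of 10 would flag nearly every geometry.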

Regards,

Dan

Thanks Dan.

Sorry for the delayed response.

I was able to reproduce the bad output with the bad inputs... I see the same bad output in 3.1, 3.1.1, 3.2, and the soon-to-be-released 3.3.

We'll do some due diligence to make sure there were no internal errors that we missed. We will not be repairing bad geometries each time we encounter an input geometry. All we can do is raise any errors that occur if the bad geometry causes a failure.

To reiterate what I've already posted above: always make sure the input data is good before using it in analysis. Checking the inputs for issues should be a step in every workflow prior to analysis, and part of every data provider's workflow as well.

Bad input = errors, crashes or worst case scenario bad output with no error.
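To make the "check inputs first" step concrete, here is a minimal pre-analysis gate that refuses to proceed when an input has obviously broken rings. The three checks and the `InvalidInputError` name are hypothetical, cover only a small subset of what Check Geometry reports, and compare area to exactly zero where real data would need a tolerance. In Pro, the actual step would be the Check Geometry and Repair Geometry tools (`arcpy.management.CheckGeometry` and `arcpy.management.RepairGeometry`).

```python
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]


class InvalidInputError(Exception):
    """Raised when an input fails the pre-analysis checks."""


def ring_issues(ring: Sequence[Point]) -> List[str]:
    """A few basic structural checks on one closed polygon ring."""
    if len(ring) < 4:
        # A closed ring needs at least 3 distinct vertices plus the closing vertex.
        return ["fewer than 4 vertices"]
    if ring[0] != ring[-1]:
        return ["unclosed ring"]
    # Twice the shoelace area; the ring is closed, so consecutive pairs suffice.
    twice_area = sum(x1 * y2 - x2 * y1
                     for (x1, y1), (x2, y2) in zip(ring, ring[1:]))
    return ["zero-area ring"] if twice_area == 0 else []


def require_valid(inputs: Dict[str, List[Sequence[Point]]]) -> None:
    """Stop before analysis if any ring in any input has an issue."""
    report = [f"{name}[{i}]: {issue}"
              for name, rings in inputs.items()
              for i, ring in enumerate(rings)
              for issue in ring_issues(ring)]
    if report:
        raise InvalidInputError("; ".join(report))


good = [(0, 0), (0, 1), (1, 1), (1, 0), (0, 0)]
unclosed = [(0, 0), (0, 1), (1, 1), (1, 0)]
flat = [(0, 0), (1, 0), (2, 0), (0, 0)]

require_valid({"stands": [good]})  # passes silently
try:
    require_valid({"stands": [good, unclosed], "buffers": [flat]})
except InvalidInputError as err:
    print(err)  # stands[1]: unclosed ring; buffers[0]: zero-area ring
```

Raising instead of warning is the design choice here: it turns "bad output with no error" into an explicit failure before the overlay runs.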

Thx. Ken
