Has anyone automated updates to GIS data?

The situation is that we have a county-wide GIS provider who makes authoritative edits to all types of data (road centerlines, address points, easements, etc.), and we want to make sure that we capture all of those edits in our data.

Doing these edits can be very time-consuming, so I am trying to figure out whether there is a way to automate all of this.

I know this may be a reach, but has anyone been able to do any sort of automation in a similar situation? Any tips, tricks, or other information will be super useful to me!

Thank you in advance,

Michael

Good afternoon,

I do the exact opposite. I work for a smaller county and am the only GIS person, so I make all the edits to the various data layers and then send them to the vendor.

The first thing that comes to mind is that the provider could send you the edits on an interval you both agree upon. At each interval you would then have a fair notion of which edits took place since the last delivery.

As you have noticed, duplicating that amount of work takes a lot of time, and there does not seem to be a good reason to do so.

Thanks

Joe

There are several ways to accomplish this. Without knowing your setup, it is difficult to say which one would be best, but these may provide some streamlining.

- If your data is versioned, the version indicates which edits have been made by recording the date and time at which that version was edited.

- More complicated but equally streamlined: use Python scripts to detect any changes made to the database or to individual layers, and have those edits either copied into each layer or overwrite its existing values.

- Create a custom tool (for either ArcMap or ArcGIS Pro) to transfer attributes based on edit dates and other required attributes.
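
To illustrate the edit-date idea from the list above, here is a minimal sketch that picks out the records edited since the last sync. It assumes the provider's data carries a unique ID and an editor-tracking date field; the field names `GlobalID` and `last_edited_date` and the helper names are assumptions for illustration, not something from this thread. The arcpy import sits inside the reader function so the comparison logic can be tried without ArcGIS installed.

```python
from datetime import datetime


def edited_since(rows, last_sync):
    """Return the IDs of rows edited after last_sync.

    rows is an iterable of (record_id, edit_timestamp) pairs.
    Rows without a timestamp are skipped.
    """
    return [rid for rid, edited in rows if edited is not None and edited > last_sync]


def read_edit_dates(feature_class, id_field="GlobalID", date_field="last_edited_date"):
    """Read (id, edit date) pairs from a feature class.

    Needs an ArcGIS Pro Python environment. The default field names
    are assumptions; replace them with the provider's actual fields.
    """
    import arcpy  # imported lazily so edited_since() works without ArcGIS

    with arcpy.da.SearchCursor(feature_class, [id_field, date_field]) as cursor:
        return [(row[0], row[1]) for row in cursor]
```

With a real dataset this might be called as `edited_since(read_edit_dates(provider_fc), last_run)`, where `provider_fc` and `last_run` are placeholders for your own path and the timestamp of your previous sync.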

I am intrigued by the Python script option (I am getting more into Python these days). Do you know where I could find a sample Python script?

I provided a Python script for a similar question earlier this year; maybe it can help you:

Have a great day!

Johannes

We have a similar situation, but ours is a 2-way replication. We send edits to the regional entity, and those are pushed out via sync. We were maintaining a separate layer, but that duplication of effort was not what we wanted, so we switched over to utilizing the regional dataset.

If this isn't feasible, you could track differences through a global/unique ID, perhaps combined with the geometry column. You'd write a Python-based cursor to check whether any new IDs have been added or whether any of the current IDs have had their attributes of interest changed. If their dataset has sync enabled, then you could grab their data and perform these calculations in your own environment at a scheduled time.
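
A rough sketch of that ID-based comparison, assuming both datasets share a unique ID field and the same attribute fields. The function names are made up for illustration. Geometry is compared as WKT text through the `SHAPE@WKT` cursor token, which is an exact string comparison, so small coordinate differences would be flagged as changes.

```python
def diff_by_id(ours, theirs):
    """Compare two {id: values} dictionaries.

    Returns three sorted lists: IDs only in theirs (added), IDs only
    in ours (removed), and shared IDs whose values differ (changed).
    """
    added = sorted(theirs.keys() - ours.keys())
    removed = sorted(ours.keys() - theirs.keys())
    changed = sorted(k for k in ours.keys() & theirs.keys() if ours[k] != theirs[k])
    return added, removed, changed


def load_records(feature_class, id_field, attr_fields):
    """Load {id: (attributes..., geometry as WKT)} from a feature class.

    Needs an ArcGIS Pro Python environment; the field names passed in
    are whatever your own schema uses.
    """
    import arcpy  # imported lazily so diff_by_id() works without ArcGIS

    fields = [id_field] + list(attr_fields) + ["SHAPE@WKT"]
    with arcpy.da.SearchCursor(feature_class, fields) as cursor:
        return {row[0]: tuple(row[1:]) for row in cursor}
```

Calling `diff_by_id(load_records(our_fc, ...), load_records(their_fc, ...))` from a scheduled task would give you the three lists to act on.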

Likes an array of [cats, gardening, photography]

Thank you so much for this suggestion! I will certainly consider it in my automation strategy; we do have a unique ID that could be joined. I will look into the cursor (I am getting into Python big time myself). Do you have any info on the cursor?

So generally you can find more resources on cursors here:

https://pro.arcgis.com/en/pro-app/latest/arcpy/classes/cursor.htm

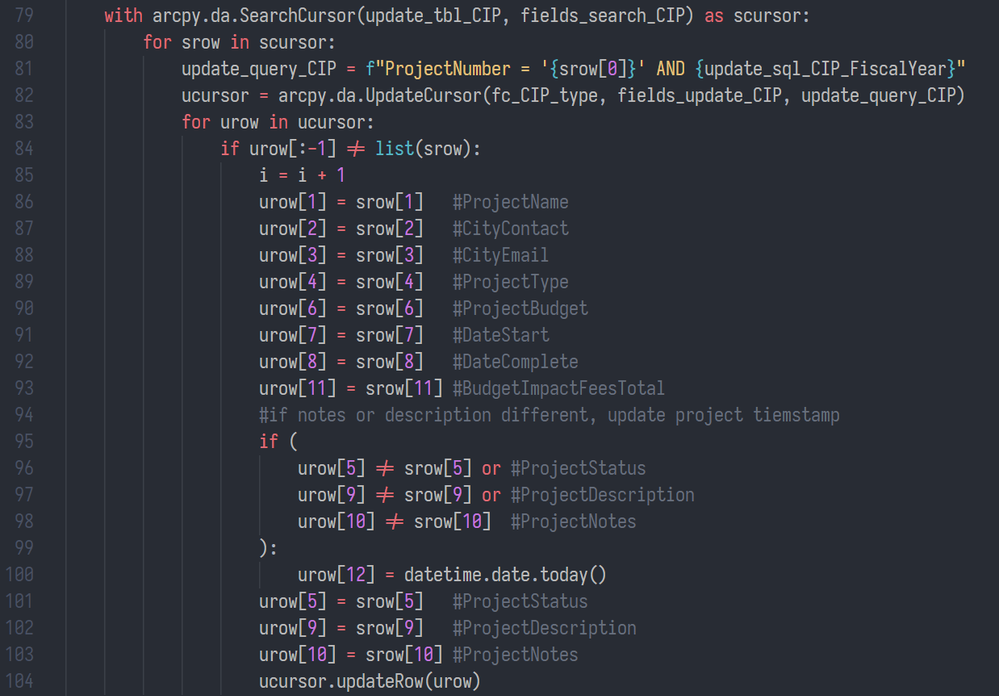

Here's a snippet from an old script. It checked an Excel table derived from a Google Sheet to see whether there were attribute updates to push to the feature class of interest. It dynamically creates a SQL filter, then writes some basic columns; there's a section in there that tests whether certain columns have changed and, if so, updates a time field and then overwrites those columns as well. You could do something similar. It is a nested cursor, which I'm less of a fan of now. Currently, I'm more inclined to roll through the dataset with a search cursor, save everything into a Python dictionary, and then iterate through that dictionary inside the update cursor as needed.
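
The dictionary approach could look roughly like the sketch below. This is not the old script from the screenshot, just an illustration of the pattern under some assumptions: the key field comes first in the field list, the time field comes last, and the source and target share the same attribute fields in the same order. The function and parameter names are hypothetical.

```python
from datetime import datetime


def push_updates(update_cursor, source_values, stamp):
    """Overwrite changed attributes and stamp the time field.

    update_cursor yields rows shaped [key, attr1, ..., attrN, time_field]
    and exposes updateRow(), as arcpy.da.UpdateCursor does.
    source_values maps key -> tuple of the N attribute values.
    Returns the number of rows written.
    """
    updated = 0
    for row in update_cursor:
        key, current = row[0], tuple(row[1:-1])
        new = source_values.get(key)
        if new is not None and new != current:
            row[1:-1] = new   # overwrite the attribute columns
            row[-1] = stamp   # record when the change was pushed
            update_cursor.updateRow(row)
            updated += 1
    return updated


def sync_attributes(source_fc, target_fc, key_field, attr_fields, time_field):
    """Read the source once into a dictionary, then update the target."""
    import arcpy  # needs an ArcGIS Pro Python environment

    fields = [key_field] + list(attr_fields)
    with arcpy.da.SearchCursor(source_fc, fields) as search:
        source_values = {row[0]: tuple(row[1:]) for row in search}
    with arcpy.da.UpdateCursor(target_fc, fields + [time_field]) as update:
        return push_updates(update, source_values, datetime.now())
```

Reading the source once and looking each key up in the dictionary avoids opening a second cursor for every row, which is the main cost of the nested version.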

Depending on how big the dataset is, it may be worthwhile having a few layers of checks:

- Is the record count the same? If yes, exit (this assumes attribute updates are not done frequently).

- Which records are not contained in your dataset? Append those records according to a field map, send an email to GIS listing the records that need to be added, or do a mixture of both.

- Which records are in your dataset that are not in their dataset? Send an email alert, or add them to a safe list.
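
Those layered checks might be strung together like this. It is only a sketch: `plan_sync` and the dictionary keys are invented names, and the early exit on equal counts inherits the assumption stated above, so it would miss a run where one record was added and another removed.

```python
def plan_sync(our_ids, their_ids):
    """Decide what to do based on the IDs in each dataset.

    Returns a dict with a status, the IDs to append to our dataset,
    and the IDs that exist only on our side and need a human review.
    """
    our_ids, their_ids = list(our_ids), list(their_ids)

    # Check 1: same record count, so assume nothing changed and exit early.
    if len(our_ids) == len(their_ids):
        return {"status": "unchanged", "append": [], "review": []}

    ours, theirs = set(our_ids), set(their_ids)
    return {
        "status": "changed",
        "append": sorted(theirs - ours),  # Check 2: missing from our dataset
        "review": sorted(ours - theirs),  # Check 3: missing from their dataset
    }
```

The `append` list could feed an append with a field map, and the `review` list could go into the email alert.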

Likes an array of [cats, gardening, photography]

This is awesome! I will certainly look into this and see how this can help us! Thanks again!

The other thing I forgot to mention (this might be an easier option if the Python option becomes too cumbersome): if you have an SDE database with versions for editing, you can create another version for that other individual to make edits in and then reconcile that version into your main version.

So you can have a working version for the individual making the edits and your own version for editing, reconciling and posting as needed between the two before reconciling and posting the edits to the uppermost parent version.

There are Python scripts that can automate this process as well, utilizing fewer scripts while preserving data integrity.
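
As one possible starting point for scripting the reconcile and post, the sketch below wraps the Reconcile Versions geoprocessing tool. The conflict settings are example choices rather than recommendations, the helper names are invented, and the keyword names should be checked against the tool documentation for your ArcGIS Pro release.

```python
def reconcile_args(workspace, target_version, edit_versions):
    """Build keyword arguments for arcpy.management.ReconcileVersions.

    workspace is the path to an .sde connection file, target_version
    is the parent version, and edit_versions lists the child versions.
    """
    return {
        "input_database": workspace,
        "reconcile_mode": "ALL_VERSIONS",
        "target_version": target_version,
        "edit_versions": list(edit_versions),
        "acquire_locks": "LOCK_ACQUIRED",
        "abort_if_conflicts": "ABORT_CONFLICTS",  # stop rather than guess
        "conflict_definition": "BY_OBJECT",
        "conflict_resolution": "FAVOR_TARGET_VERSION",
        "with_post": "POST",            # post after a clean reconcile
        "with_delete": "KEEP_VERSION",  # keep the edit version for reuse
    }


def reconcile_and_post(workspace, target_version, edit_versions):
    """Run the reconcile and post. Needs an ArcGIS Pro Python environment."""
    import arcpy  # imported lazily so reconcile_args() works without ArcGIS

    arcpy.management.ReconcileVersions(
        **reconcile_args(workspace, target_version, edit_versions)
    )
```

For the two-level setup described above, you would call `reconcile_and_post` once for the editor's version against your working version, and again for your working version against the parent.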