
Large ArcGIS Server 'Site': stability issues


We have an existing ArcGIS Server (AGS) 10.0 solution that hosts close to 1,000 map services. We have been working on upgrading this environment to 10.2.1 for a few months now, and we are having a hard time getting it stable. These services are lightly used, and our program requirement is an environment that can handle a large number of services with little use each. In AGS 10.0 we set all services to 'low' isolation with 8 threads/instance, and 90% of our services had 0 min instances/node to save memory. Below is a summary of our approaches and where we are today. I'm posting this to the community for information, and I am very interested in feedback and/or recommendations to move this forward for our organization.

Background on deployment:

- Targeted ArcGIS Server 10.2.1

- Config-store/directories are hosted on a clustered file server (active/passive) and presented as a share: \\servername\share

- Web-tier authentication

- 1 web-adaptor with anonymous access

- 1 web-adaptor with authenticated access (Integrated Windows Authentication with Kerberos and/or NTLM providers)

- 1 web-adaptor 'internally' with authenticated access and administrative access enabled (use this for publishing)

- User-store: Windows Domain

- Role-store: Built-In

We have a few ArcGIS Server deployments that look just like this, and they are all running fairly stably with decent performance.

Approach 1: Try to mirror (as close as possible) our 10.0 deployment methodology 1:1

- Build 4 AGS 10.2.1 nodes (virtual machines).

- Build 4 individual clusters & add 1 machine to each cluster

- Deploy 25% of the services to each cluster. The AGS Nodes were initially spec'd with 4 CPU cores and 16GB of RAM.

- Each ArcSOC.exe seems to consume anywhere from 100-125MB of RAM (sometimes up to 150 or as low as 70).

- Publishing 10% of the services with 1 min instance (and the other 90% with 0 min instances) would leave around 25 ArcSOC.exe processes on each server when idle.

- The 16GB of RAM could host 100-125 instances in total, leaving some room for services to start up instances when needed and scale slightly when in use.
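The sizing arithmetic above can be sketched as a rough back-of-the-envelope model (not an Esri formula). It uses the observed 100-125MB per ArcSOC.exe; the 4GB held back for Windows and other processes (`OS_RESERVE_GB`) is a hypothetical figure, chosen because it lands close to the 100-125 instances quoted above.

```python
# Back-of-the-envelope sizing for one AGS node in Approach 1.
# Assumption: ~100-125 MB per ArcSOC.exe (observed above); OS_RESERVE_GB is hypothetical.

OS_RESERVE_GB = 4.0  # hypothetical RAM kept back for Windows, the Java processes, etc.


def instance_capacity(ram_gb: float, mb_per_soc: float, reserve_gb: float = OS_RESERVE_GB) -> int:
    """Number of ArcSOC.exe processes that fit in the RAM left after the reserve."""
    return int((ram_gb - reserve_gb) * 1024 // mb_per_soc)


def idle_socs(services_on_node: int, share_with_min_one: float) -> int:
    """ArcSOC.exe processes still running when idle (services with min instances = 1)."""
    return round(services_on_node * share_with_min_one)


services_per_node = 1000 // 4  # 4 clusters with 1 node each -> 250 services per node
print("idle ArcSOC.exe per node:", idle_socs(services_per_node, 0.10))  # 25
for mb in (125, 100):
    print(f"16 GB node at {mb} MB per ArcSOC.exe:", instance_capacity(16, mb), "instances")
```

With those assumptions a 16GB node holds roughly 98-122 ArcSOC.exe processes, of which about 25 are already taken when the site is idle.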

The first problem we ran into was publishing services with 0 min instances/node. Esri confirmed 2 'bugs':

#NIM100965 GLOCK files in arcgisserver\config-store\lock folder become frozen when stop/start a service from admin with 0 minimum instances and refreshing the wsdl site

#NIM100306 : In ArcGIS Server 10.2.1, service with 'Minimum Instances' parameter set to 0 gets published with errors on a non-Default cluster

So... that required us to publish all of our services with at least 1 min instance per node. At 1,000 services, that means we needed 100-125GB of RAM just for the ArcSOC.exe processes, with no room for future growth.
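A quick site-wide check of that figure against the total RAM of four 16GB nodes and of the doubled 32GB nodes tried in Approach 2. This sketch assumes one ArcSOC.exe per service (min 1 instance, 1 node per cluster), uses 1GB = 1,000MB to match the round numbers in this post, and ignores OS overhead; `required_gb` is a hypothetical helper name.

```python
# Site-wide RAM needed when every service keeps at least one ArcSOC.exe running.
# Uses 1 GB = 1,000 MB to match the round numbers in the post; OS overhead is ignored.


def required_gb(services: int, min_instances: int, mb_per_soc: float) -> float:
    return services * min_instances * mb_per_soc / 1000


NODES = 4
low, high = required_gb(1000, 1, 100), required_gb(1000, 1, 125)
for node_gb in (16, 32):
    site_gb = NODES * node_gb
    print(f"{NODES} x {node_gb} GB = {site_gb} GB for {low:.0f}-{high:.0f} GB of ArcSOC.exe "
          f"(worst-case headroom {site_gb - high:.0f} GB)")
```

With 16GB nodes the site is short by about 61GB in the worst case; with 32GB nodes only about 3GB is left across the whole site, which is why the doubled RAM is described as tight.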

Approach 2: Double the RAM on the AGS Nodes

- We added another 16GB of RAM to each AGS node (they now have 32GB), which should host 200-250 ArcSOC.exe processes per node (tight for hosting all 1,000 services across 4 nodes).

- We published about half of the services (around 500) and started seeing some major stability issues.

- During our publishing workflow, the clustered file server would crash.

- This file server hosts the config-store/directories for about 4 different *PRODUCTION* ArcGIS Server sites.

- It also hosts our Citrix users' workspaces and about 13TB of raster data.

- During a crash, it would fail over to the passive file server, and after about 5 minutes the secondary file server would crash too.

- This is considered a major outage!

- On the last crash, part of the config-store was corrupted. When trying to log in to the 'admin' or 'manager' endpoints, we received an error reporting some sort of parsing issue (I cannot find the exact error message). We had disabled the primary site administrator account, so we went in to re-enable it, but the super.json file was EMPTY! Our backup team restored the entire config-store from the previous day, and we copied over the super.json file. I'm not sure what else was corrupted. After restoring that file, we were able to log in again with our AD accounts.


The file-server crashes were clearly caused by publishing a large number of services to this new ArcGIS Server environment: we crashed our clustered file servers 3 separate times, all during this publishing workflow. We had no choice but to move the config-store/directories to an alternate location. We moved them to a small web server to see whether we could reproduce the crashes there and keep moving forward. So far, that server has not crashed.

During boot-ups of an AGS node hosting all the services, service start-up time was consistently between 20 and 25 minutes. We found a start-up timeout setting on each service that is set to 300 seconds (5 minutes) by default, and we raised it to 1800 seconds (30 minutes) to try to get these machines to start up properly. What was happening is that the ArcSOC.exe processes would keep accumulating until, at some point, they would all start disappearing.
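Changing that timeout on ~1,000 services by hand is tedious, so a bulk edit is worth scripting. The sketch below only rewrites service definitions locally. It assumes the timeout is stored under a `maxStartupTime` key (in seconds) in the service JSON returned by the ArcGIS Server Administrator API; verify that on your version. Downloading each definition and submitting it back through the service's `edit` operation is left out, and the helper names are hypothetical.

```python
import json

# Assumption: the start-up timeout is the "maxStartupTime" property (seconds) of the
# service JSON exposed by the ArcGIS Server Administrator API. Verify before relying on it.
STARTUP_TIMEOUT_KEY = "maxStartupTime"


def with_startup_timeout(service_json: str, seconds: int = 1800) -> str:
    """Return a copy of one service definition with a longer start-up timeout."""
    service = json.loads(service_json)
    service[STARTUP_TIMEOUT_KEY] = seconds
    return json.dumps(service)


def bulk_update(definitions: dict, seconds: int = 1800) -> dict:
    """Apply the timeout to every definition, keyed by '<FOLDER>/<SERVICE>.MapServer'."""
    return {name: with_startup_timeout(text, seconds) for name, text in definitions.items()}


# Hypothetical input: definitions previously downloaded from the Administrator API.
current = {"Planning/Parcels.MapServer": json.dumps({"serviceName": "Parcels", "maxStartupTime": 300})}
updated = bulk_update(current)
print(json.loads(updated["Planning/Parcels.MapServer"])["maxStartupTime"])  # 1800
```

Keeping the edit as a pure function makes it easy to diff old and new definitions before pushing anything back to the site.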

In the meantime, we also reviewed the ArcGIS 10.2.2 Issues Addressed List which indicated:

NIM099289 Performance degradation in ArcGIS Server when the location of the configuration store is set to a network shared location (UNC).

We asked our Esri contacts for more information regarding this bug fix and basically got this:

…our product lead did provide the following as to what updates we made to address the following areas of concern listed in NIM099289:

1. The Services Directory

2. Server Manager

3. Publishing/restarting services

4. Desktop

5. Diagnostics

ArcGIS Server was slow generating a list of services in multiple places in the software. Before this change, ArcGIS Server would read from disk all services in a folder every time a list of services was needed - this happened in the services directory, the manager, ArcCatalog, etc. This is normally not that bad, but if you have many many services in a folder, and you have a high number of requests, and your UNC/network is not the fastest, then this can become very slow. Instead we remember the services in a folder and only update our memory when they have changed.
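The fix Esri describes is a per-folder cache of the service list that is refreshed only when something changes. Here is a minimal, generic Python illustration of that idea, not Esri's implementation: `ServiceListCache`, the `read_folder` callback, and the explicit `invalidate` call after a publish are hypothetical stand-ins for whatever change detection ArcGIS Server actually uses.

```python
from typing import Callable


class ServiceListCache:
    """Keep each folder's service list in memory; re-read disk only after invalidation."""

    def __init__(self, read_folder: Callable[[str], list]):
        self._read_folder = read_folder  # the expensive scan of the config-store (e.g. over UNC)
        self._cache: dict = {}

    def list_services(self, folder: str) -> list:
        if folder not in self._cache:
            self._cache[folder] = self._read_folder(folder)
        return list(self._cache[folder])  # hand out a copy so callers cannot alter the cache

    def invalidate(self, folder: str) -> None:
        """Call when a service in `folder` is published, deleted, or renamed."""
        self._cache.pop(folder, None)


# Usage with a stand-in for the slow disk read.
reads = []


def slow_scan(folder: str) -> list:
    reads.append(folder)
    return ["Parcels.MapServer", "Roads.MapServer"]


cache = ServiceListCache(slow_scan)
cache.list_services("Planning")
cache.list_services("Planning")
print(len(reads))  # 1: the second call was served from memory
cache.invalidate("Planning")  # e.g. after publishing into Planning
cache.list_services("Planning")
print(len(reads))  # 2
```

With many services per folder and many listing requests, the disk is touched once per change instead of once per request, which is where the UNC slowness came from.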

Approach 3: Upgrade to 10.2.2 and add 3 more servers

- We added 3 more servers to the 'site' (all 4 CPU, 32GB RAM) and upgraded all of them to 10.2.2. We actually rebuilt all the machines from scratch again.

- We threw away our existing 'config-store' and directories since we knew at least 1 file was corrupt. We essentially started from square 1 again.

- All AGS nodes got a fresh install of 10.2.2 (we confirmed that refreshing folders from the REST page was much faster).

- Config-store still hosted on web-server

- We mapped our config-store to a DFS location so that we could move it around later

- Published all ~1,000 services successfully across 7 separate 'clusters'.

- Changed all isolation back to 'high' for the time being.

This is the closest we have gotten. At least all services are published. Unfortunately it is not very stable. We continually receive a lot of errors, here is a brief summary:

| Level | Message | Source | Code | Process | Thread |
|---|---|---|---|---|---|
| SEVERE | Instance of the service '<FOLDER>/<SERVICE>.MapServer' crashed. Please see if an error report was generated in 'C:\arcgisserver\logs\SERVERNAME.DOMAINNAME\errorreports'. To send an error report to Esri, compose an e-mail to ArcGISErrorReport@esri.com and attach the error report file. | Server | 8252 | 440 | 1 |
| SEVERE | The primary site administrator '<PSA NAME>' exceeded the maximum number of failed login attempts allowed by ArcGIS Server and has been locked out of the system. | Admin | 7123 | 3720 | 1 |
| SEVERE | ServiceCatalog failed to process request. AutomationException: 0xc00cee3a - | Server | 8259 | 3136 | 3373 |
| SEVERE | Error while processing catalog request. AutomationException: null | Server | 7802 | 3568 | 17 |
| SEVERE | Failed to return security configuration. Another administrative operation is currently accessing the store. Please try again later. | Admin | 6618 | 3812 | 56 |
| SEVERE | Failed to compute the privilege for the user 'f7h/12VDDd0QS2ZGGBFLFmTCK1pvuUP1ezvgfUMOPgY='. Another administrative operation is currently accessing the store. Please try again later. | Admin | 6617 | 3248 | 1 |
| SEVERE | Unable to instantiate class for xml schema type: CIMDEGeographicFeatureLayer | <FOLDER>/<SERVICE>.MapServer | 50000 | 49344 | 29764 |
| SEVERE | Invalid xml registry file: c:\program files\arcgis\server\bin\XmlSupport.dat | <FOLDER>/<SERVICE>.MapServer | 50001 | 49344 | 29764 |
| SEVERE | Unable to instantiate class for xml schema type: CIMGISProject | <FOLDER>/<SERVICE>.MapServer | 50000 | 49344 | 29764 |
| SEVERE | Invalid xml registry file: c:\program files\arcgis\server\bin\XmlSupport.dat | <FOLDER>/<SERVICE>.MapServer | 50001 | 49344 | 29764 |
| SEVERE | Unable to instantiate class for xml schema type: CIMDocumentInfo | <FOLDER>/<SERVICE>.MapServer | 50000 | 49344 | 29764 |
| SEVERE | Invalid xml registry file: c:\program files\arcgis\server\bin\XmlSupport.dat | <FOLDER>/<SERVICE>.MapServer | 50001 | 49344 | 29764 |
| SEVERE | Failed to initialize server object '<FOLDER>/<SERVICE>': 0x80043007: | Server | 8003 | 30832 | 17 |

Other observations:

- Each AGS node makes 1 connection (session) to the file-server containing the config-store/directories

- During idle times, only 35-55 files are actually open from that session.

- During boot-ups (and bulk administrative operations), the number of open files consistently jumps to between 1,000 and 2,000 per session.

- The 'system' process on the file server spikes, especially during bulk administrative operations.

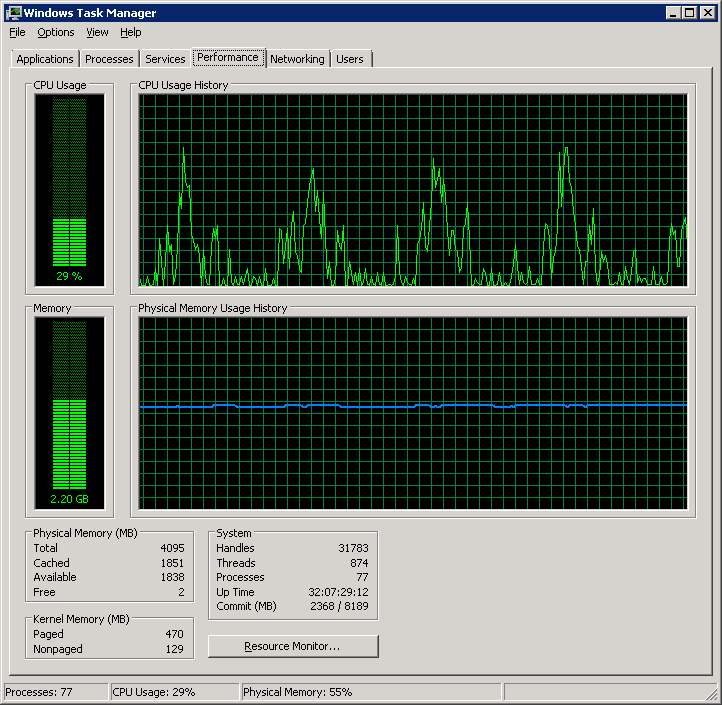

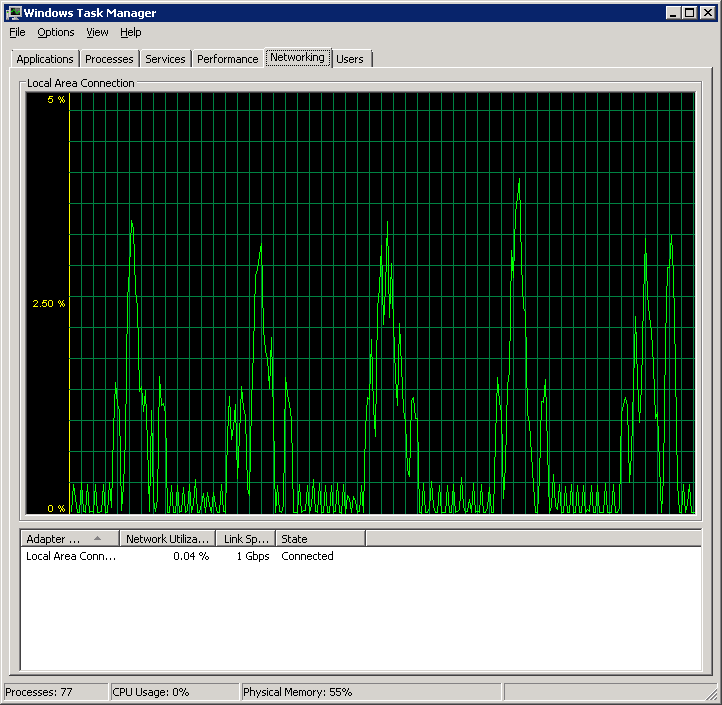

- The AGS nodes are constantly communicating with the file server (even when the site is idle). The CPU, memory, and network monitors on the file server look like this:

- The AGS nodes look similar. There seems to be a lot of 'chatter' even when the site is idle.

- Requests to a service succeed 90% of the time but 10% of the time we receive HTTP 500 errors:

Error: Error exporting map

Code: 500
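To put a firmer number on the 10% failure rate, one option is to send a batch of identical requests and tally the status codes. A minimal sketch using only the Python standard library; `probe` and `http_status` are hypothetical names, and the URL (for example an `export` request against one of the `<FOLDER>/<MapService>` services, with a `bbox` of your choosing) is yours to fill in.

```python
import urllib.error
import urllib.request
from collections import Counter
from typing import Callable


def http_status(url: str, timeout: float = 60.0) -> int:
    """GET the URL and return its status code, including error codes such as 500.

    Connection failures (urllib.error.URLError) are not caught and will propagate.
    """
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code


def probe(url: str, n: int, fetch: Callable[[str], int] = http_status) -> Counter:
    """Send the same request n times and tally the status codes returned."""
    return Counter(fetch(url) for _ in range(n))


# Example (fill in a real service and bbox first):
# counts = probe("http://www.example.com/arcgis/rest/services/<FOLDER>/<MapService>/MapServer/export?bbox=...&f=image", 200)
# print(counts[500] / sum(counts.values()))  # observed failure rate
```

The `fetch` parameter makes it easy to swap in a stub for testing or a different HTTP client; running the probe from each web adaptor separately may help show whether the 500s follow a particular path.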

Options for the future

We have an existing site with the ArcGIS SOM instance name 'arcgis'. These 1,000 services have been running on that 10.0 site for the past few years. Users access them with URLs like: http://www.example.com/arcgis/rest/services/<FOLDER>/<MapService>/MapServer

We are trying to host all these same services so that users accessing these URLs are unaffected. If we cannot, we will switch to 1 server in 1 cluster in 1 site (and instead have 7 sites). We would then re-publish all our content to the individual sites, but with different URLs:

http://www.example.com/arcgis1/rest/services/<FOLDER>/<MapService>/MapServer

http://www.example.com/arcgis2/rest/services/<FOLDER>/<MapService>/MapServer

...

...

http://www.example.com/arcgisN/rest/services/<FOLDER>/<MapService>/MapServer

We would then have an extensive amount of work to communicate all the new URLs to our end users (and update all metadata, products, documentation, and content management systems to point to them) and/or to build URL redirect (or URL rewrite) rules for all the legacy services. Neither option is ideal, but right now we seem to have exhausted all others.

Hopefully this will help other users troubleshooting their ArcGIS Server deployments. Any ideas for improving our strategy are greatly appreciated. Thanks!
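If the redirect route is taken, the rewrite logic itself is simple once each legacy folder is assigned to a site. A sketch assuming each folder moves wholesale to one `arcgisN` site; the folder-to-site table and the `rewrite` helper are hypothetical, root-level services (no folder) are not handled, and the resulting mapping would still need to be expressed in whatever redirect/rewrite mechanism your web tier uses.

```python
import re
from typing import Optional

# Matches legacy URLs such as http://www.example.com/arcgis/rest/services/<FOLDER>/<MapService>/MapServer
LEGACY_URL = re.compile(r"^(?P<host>https?://[^/]+)/arcgis/rest/services/(?P<folder>[^/]+)/(?P<rest>.+)$")


def rewrite(url: str, site_for_folder: dict) -> Optional[str]:
    """Map a legacy /arcgis/ URL to /arcgisN/, or return None if it cannot be mapped."""
    m = LEGACY_URL.match(url)
    if m is None or m["folder"] not in site_for_folder:
        return None
    n = site_for_folder[m["folder"]]
    # Everything after the folder (service name, operation, query string) is kept as-is.
    return f"{m['host']}/arcgis{n}/rest/services/{m['folder']}/{m['rest']}"


# Hypothetical folder-to-site assignment.
sites = {"Planning": 1, "Transportation": 2}
print(rewrite("http://www.example.com/arcgis/rest/services/Planning/Parcels/MapServer", sites))
```

Keeping the assignment in one table means the same data can drive both the redirect rules and the list of new URLs you communicate to users.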

---

ESRI was insistent that the page file (virtual memory) be set to system managed, with enough RAM available and then some. When we switched it from a fixed 16GB to system managed and rebooted, we saw an immediate improvement in performance and stability.


---

Interesting.

I'll relay this to IT and see if it's something that can be done easily.

I feel like we make changes every day to this thing, but we need to resolve this...

---

Thanks again for all your help!

---

Aaron:

This is very interesting.

Too bad Esri dev wouldn't give out more info.

If the server has enough RAM it should never have to swap to Virtual Memory.

But it can be easy to use up RAM in ArcGIS.

Maybe that's what it's all about.

Even setting a big limit like 24GB, which would seem sufficient, really isn't, and with the system managing the swap file, it can grow as big as needed.

I had one question: are you using virtual servers?

---

Paul,

Yes, we are using Hyper-V. The OS is Windows Server 2012. We also try to do a weekly reboot of all our servers, as I have heard mumblings around the community of chronically hung SOC processes and memory leaks in the 2 Java Platform SE binary processes that run.

Aaron

---

That's right, I recall prior discussions about Hyper-V... funny they weren't showing up in my browser.

We also do a reboot due to those exact issues.

We also have cmd windows that are left hanging around by pskill processes.

Most of our servers are still on 10.1 and Windows Server 2008 R2.

I do an automated reboot every two weeks which is usually sufficient.

Every once in a great while, our main server will still go south on us but there's no rhyme or reason to it.

It can happen ten days out, or 2 or 3 or ? days out....

How do you reboot your servers? Do you do it manually?

I set ours up via Task Scheduler, but it was a real pain to get it working.
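For a scheduled reboot, the command-line tool for Windows Task Scheduler, `schtasks.exe`, can create the task in a single command. The Python helper below only builds that command (it is a sketch, not something from this thread); the task name, day, and time are hypothetical defaults, and actually creating the task requires an elevated prompt on the server.

```python
def reboot_task_command(task_name: str = "ArcGIS-Reboot", every_weeks: int = 2,
                        day: str = "SUN", start: str = "03:00") -> list:
    """Build a schtasks.exe command that reboots the server every `every_weeks` weeks."""
    return [
        "schtasks", "/Create", "/F",  # /F overwrites an existing task of the same name
        "/TN", task_name,
        "/TR", "shutdown /r /t 0",    # restart immediately when the task fires
        "/SC", "WEEKLY", "/MO", str(every_weeks),
        "/D", day, "/ST", start,
        "/RU", "SYSTEM",              # run whether or not anyone is logged on
    ]


# On the server, from an elevated prompt:
#   import subprocess; subprocess.run(reboot_task_command(), check=True)
print(reboot_task_command())
```

Passing the command as a list lets `subprocess` quote the `shutdown /r /t 0` action correctly; `every_weeks=1` gives the weekly schedule mentioned earlier in the thread.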

---

Actually, ESRI (the guys from the 2016 Developer Summit) say that, as a best practice, a heavily used service should have its MIN instances set equal to MAX. When min is a low number (e.g. 1) and max is larger (e.g. 5), it takes time for ArcSOC to build new instances when demand arrives; thus min = max gives the fastest possible availability.

---

As some have mentioned above, situations like this may be caused by an exhausted non-interactive desktop heap size. The following technical article speaks to this scenario and should be consulted:

Please be aware of the warning messages contained within this article, as modifying the non-interactive desktop heap size can cause other issues and should be carefully tested.
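On Windows Server, the non-interactive desktop heap is the third number (in KB) of the `SharedSection=` entry in the `Windows` value under `HKLM\SYSTEM\CurrentControlSet\Control\Session Manager\SubSystems`. The sketch below only edits that string, so the change can be reviewed before anything is written back to the registry (for example with Python's `winreg` module); the helper names are hypothetical, the sample value is abbreviated, and a reboot is needed before a new value takes effect.

```python
import re

# The third SharedSection number is the non-interactive desktop heap, in KB.
SHARED_SECTION = re.compile(r"SharedSection=(\d+),(\d+),(\d+)")


def non_interactive_heap_kb(windows_value: str) -> int:
    m = SHARED_SECTION.search(windows_value)
    if m is None:
        raise ValueError("no SharedSection=a,b,c entry found")
    return int(m.group(3))


def set_non_interactive_heap(windows_value: str, kb: int) -> str:
    """Return the value string with only the third SharedSection number replaced."""
    m = SHARED_SECTION.search(windows_value)
    if m is None:
        raise ValueError("no SharedSection=a,b,c entry found")
    replacement = f"SharedSection={m.group(1)},{m.group(2)},{kb}"
    return windows_value[:m.start()] + replacement + windows_value[m.end():]


# Abbreviated sample value; the real one contains more entries.
sample = r"%SystemRoot%\system32\csrss.exe ObjectDirectory=\Windows SharedSection=1024,20480,768 Windows=On"
print(non_interactive_heap_kb(sample))  # 768
print(set_non_interactive_heap(sample, 1024))
```

Touching only the third number leaves the system-wide and interactive heap sizes unchanged, which keeps the change as narrow as the warnings above suggest.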

---

Increasing the non-interactive desktop heap size was in fact the solution for one of our customers.

He couldn't start more than 333 processes on his single-machine site. After increasing the non-interactive desktop heap size, he was able to start more without errors.

---

Thanks, Thomas, for your link. Increasing the desktop heap size indeed solved our issue, which was similar to what the OP had. Originally, the maximum number of background processes stayed around 320, including about 300 ArcSOC.exe processes, and that caused a series of issues on the server and with publishing. After increasing the value from 768 to 1024, I saw the background processes grow to 360, and more ArcSOC.exe processes were able to start.

Just curious: is there rough math relating the desktop heap size to the number of processes it can support? E.g., does every additional 256 of heap allow X more processes?

Thanks
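Using only the two data points reported in this post, the arithmetic can be sketched as follows. This is not a general rule: it is two approximate observations from one server (heap values in KB, process counts rounded), and the proportional comparison at the end shows the relationship is not simply linear through zero.

```python
# Two approximate observations from this post: non-interactive heap (KB) -> background processes.
observed = {768: 320, 1024: 360}

(kb_lo, n_lo), (kb_hi, n_hi) = sorted(observed.items())

# Marginal gain between the two points, scaled to a 256 KB step.
per_256kb = (n_hi - n_lo) / (kb_hi - kb_lo) * 256

# Average heap per process at each point.
kb_per_process = {kb: kb / n for kb, n in observed.items()}

# What a purely proportional model (fixed heap per process) would have predicted at 1024 KB.
proportional_guess = kb_hi / kb_per_process[kb_lo]

print(per_256kb)  # 40.0
print(round(proportional_guess))  # 427
```

From these two points, each extra 256KB bought roughly 40 more processes, while a fixed-cost-per-process model would have predicted about 427 processes at 1024KB instead of the ~360 observed, so per-process heap use evidently varies.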