10.5.1 Memory Usage

All,

I was curious whether anyone else has run into odd behavior after upgrading Desktop and Server to 10.5.1.

Since upgrading, we have noticed that running the "Manage Map Server Cache Tiles" tool eventually maxes out the machine's memory, usually about 90 minutes after the tool starts. We have run this process many times on this data, and we only started seeing the problem after upgrading to 10.5.1.

Machine Specs

Intel Xeon CPU E5-4620 v2

Windows Server 2012 R2

16 GB RAM

4 Cores

thanks,

dave

1) We have roads, addresses, and other individual point and line features.

2) This update process runs weekly.

3) On average we see 20k-50k changes in total.

a. This is why we work from the delta: so little changes that we don't want to waste time rebuilding everything.

4) When we rebuild tiles, we do it in two steps:

a. Forced scales - these are levels 0-15. There is very little data in total, so we just rebuild them all (usually only 10 minutes).

b. Selective scales - these are levels 16-19. This is where we apply our area of interest based on the delta results.

1) It is within the selective scales that we see memory usage spike even faster and the process ultimately fail.

5) The sole purpose of this machine is to build tiles; nothing else runs on it. We did not want anything interrupting the process or bogging down the machine.

Note: I really wanted to separate the vector data from the raster data. I feel that the sheer size of the combined data is an issue in itself. The raster data only changes once a year, whereas the vector data changes weekly. The reason for combining them is so that we would not have to make two separate requests for the vector and raster data, which would eat into the system's bandwidth.

dave
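For anyone trying to reproduce this, the two-step rebuild described above might look roughly like the following in Python. This is only a sketch under assumptions: it assumes the cache uses the ArcGIS Online / Bing / Google tiling scheme (level 0 at 1:591,657,527.591555, halving each level), which the thread does not confirm, and the keyword names follow the tool reference as I understand it, so check them against the documentation for your version. `scales_for_levels`, `build_jobs`, and `run_jobs` are names made up for this example.

```python
# ASSUMPTION: ArcGIS Online / Bing / Google tiling scheme, where level 0 is
# 1:591,657,527.591555 and every further level halves the scale denominator.
LEVEL_0_SCALE = 591657527.591555


def scales_for_levels(first, last):
    """Return the scale denominators for cache levels first..last (inclusive)."""
    return [LEVEL_0_SCALE / (2 ** level) for level in range(first, last + 1)]


def build_jobs(service, aoi_feature_class):
    """Describe the two rebuild steps as keyword arguments for the tool."""
    # Step a: forced scales, levels 0-15, rebuilt in full.
    forced = {
        "input_service": service,
        "scales": scales_for_levels(0, 15),
        "update_mode": "RECREATE_ALL_TILES",
        "wait_for_job_completion": "WAIT",
    }
    # Step b: selective scales, levels 16-19, limited to the area of interest.
    selective = dict(
        forced,
        scales=scales_for_levels(16, 19),
        area_of_interest=aoi_feature_class,
    )
    return [forced, selective]


def run_jobs(jobs):
    """Run each job in order; requires an ArcGIS Python environment."""
    import arcpy  # imported here so the helpers above work without ArcGIS

    for job in jobs:
        arcpy.ManageMapServerCacheTiles_server(**job)
```

Calling `run_jobs(build_jobs(service, aoi))` with your own service and AOI feature class paths would run both steps back to back; running only the second job is a quick way to isolate the selective-scale behavior.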

I wanted to share more info with everyone and hopefully shed some more light on why this is very perplexing...

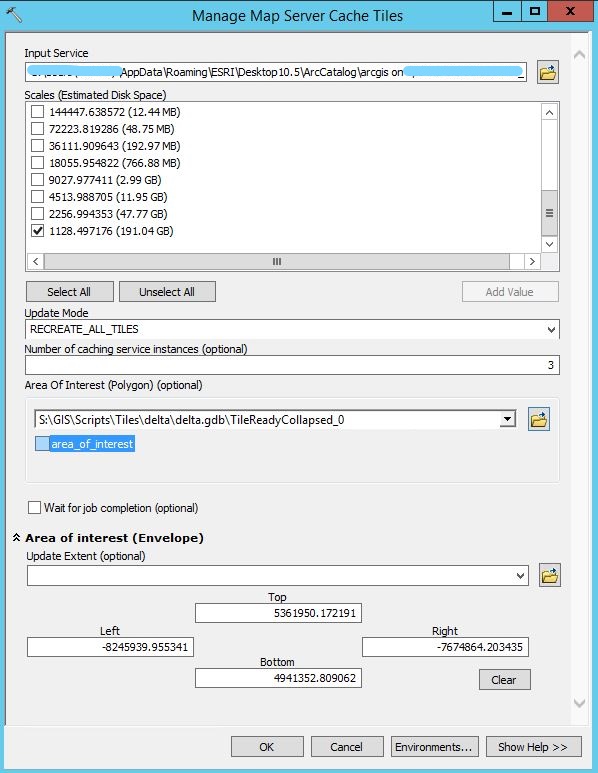

I have two images attached:

1) AOI - this is running "ManageMapServerCacheTiles" on level 19 with an area of interest polygon.

a. There is very little work to be done in recreating all tiles within the area of interest.

2) ALL - this is running "ManageMapServerCacheTiles" on levels 18 and 19.

a. There is a lot of work to be done in recreating all tiles in these two levels.

AOI - memory is climbing rapidly and has almost maxed out the machine; the job is about to fail.

a. Why would this smaller amount of work cause higher memory usage?

ALL - memory usage has stayed consistent at this level throughout the entire process. CPU usage jumps up and down, but I have always seen that behavior.

What in the world is going on?
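One way to put numbers on the difference between the two runs is to fit a straight line to memory readings taken over time (for example from Task Manager or Performance Monitor). The sketch below is only illustrative: the function names are made up for this example, and the only figure taken from this thread is the 16 GB of RAM.

```python
TOTAL_RAM_MB = 16 * 1024  # the 16 GB machine described in this thread


def growth_rate_mb_per_min(samples):
    """Least-squares slope of memory (MB) against elapsed time (minutes).

    samples: iterable of (minutes_since_start, memory_mb) pairs.
    """
    pts = sorted(samples)
    n = len(pts)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in pts) / n
    mean_m = sum(m for _, m in pts) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in pts)
    if var_t == 0:
        raise ValueError("samples must cover more than one point in time")
    cov = sum((t - mean_t) * (m - mean_m) for t, m in pts)
    return cov / var_t


def minutes_until_full(samples, total_mb=TOTAL_RAM_MB):
    """Minutes after the last sample until memory reaches total_mb.

    Returns None when memory is flat or shrinking.
    """
    pts = sorted(samples)
    rate = growth_rate_mb_per_min(pts)
    if rate <= 0:
        return None
    return (total_mb - pts[-1][1]) / rate
```

A run like ALL should give a slope near zero (so `minutes_until_full` returns `None`), while a run like AOI should give a clearly positive slope and a finite time to exhaustion.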

Can you provide a screenshot of either the tool with the parameters filled in or the python call to ManageMapServerCacheTiles_server with all of your parameters?

It's been a long time since I had this process running in 10.2, but I believe the parameters for this call changed between 10.2 and 10.5.1, which could be part of the problem you are seeing.

here is the screenshot of the tool:

Not sure why it showed up this way, but when we run the tool we leave "wait for job completion" checked; I believe that is the default. Again, I'm not sure why it is unchecked in this screenshot.

Can you take a screenshot of the results showing Inputs from a cache update that would not take that long just to get an idea of what that looks like?

Have you made sure that the AOI geometry in the delta.gdb is reasonable and your script to create the AOI is not creating an area much larger than what you are expecting?
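A rough sanity check for the AOI is to estimate how many tiles its bounding box touches at a given level. The sketch below assumes a Web Mercator cache in the ArcGIS Online / Bing / Google tiling scheme with the extent given in meters; neither is confirmed in this thread, and `tiles_in_extent` is a name made up for this example.

```python
import math

# ASSUMPTION: Web Mercator tiling scheme; half the width of the world in meters.
HALF_WORLD = math.pi * 6378137.0


def tiles_in_extent(xmin, ymin, xmax, ymax, level):
    """Count the tiles at `level` that intersect a Web Mercator bounding box."""
    per_side = 2 ** level
    span = 2 * HALF_WORLD / per_side  # ground width of one tile in meters

    def clamp(index):
        # Keep indexes inside the grid, e.g. when a box edge sits on the boundary.
        return max(0, min(per_side - 1, index))

    # Columns count from the left edge; rows count down from the top edge.
    col_min = clamp(math.floor((xmin + HALF_WORLD) / span))
    col_max = clamp(math.floor((xmax + HALF_WORLD) / span))
    row_min = clamp(math.floor((HALF_WORLD - ymax) / span))
    row_max = clamp(math.floor((HALF_WORLD - ymin) / span))
    return (col_max - col_min + 1) * (row_max - row_min + 1)
```

If the count for the AOI extent at levels 16-19 comes out far larger than the 20k-50k weekly changes would suggest, the AOI polygon is probably bigger than intended. Note this uses the bounding box, so it overestimates for irregular polygons.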

here are the inputs as seen from geoprocessing results.

All,

Would installing and using background geoprocessing help with memory usage?

I wasn't sure whether background geoprocessing is used by the ManageMapServerCacheTiles GP tool.

Thoughts?

dave
