Improving Dashboard (Classic) Performance

Hello guys,

I am currently working on a project using ArcGIS Dashboards Classic in ArcGIS Enterprise 10.9. The dashboard will use referenced data from PostgreSQL 12.4.

The dashboard will draw on three kinds of data:

1. A polygon layer with ~91000 rows. I've already set a visibility scale range to prevent all of the features from being shown at the same time.

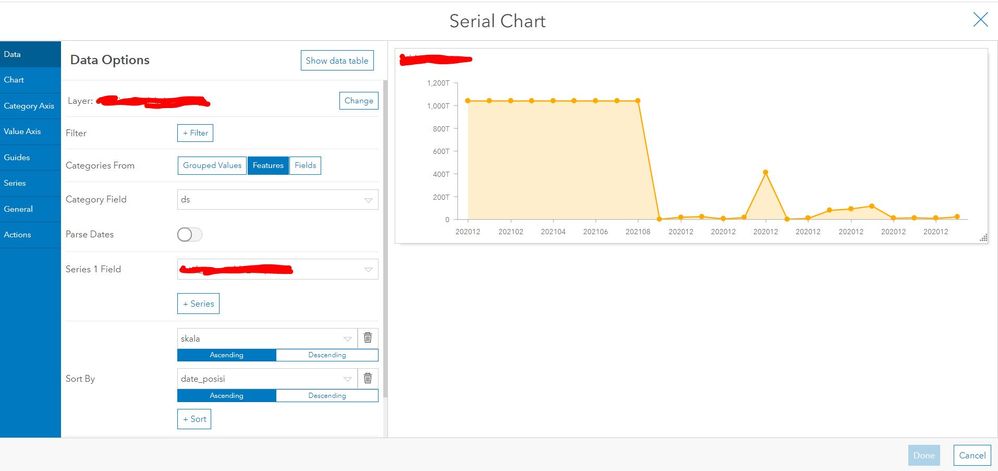

2. A table with ~70000 new rows per month (with 2 years of retention, so ~1.7 million rows), which will be used for charts. We've tried to limit the maximum number of categories and to avoid grouped values so that nothing is calculated on the fly. The table and polygon layer will be connected through filter widgets.

3. A query layer (joining the aforementioned polygon layer and table) that returns ~70000 rows. Since query layers tend to perform poorly, we've tried our best to use this one only when needed.

Is there any other way to improve the dashboard's performance, whether at the dashboard configuration level, the database settings level, or even the hardware level?

Best regards,

Naufal


If you can do any spatial or attribute aggregations at the database level and serve the results as views or query layers to power your indicators, charts, etc., I'd recommend it.
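As a rough illustration of what aggregating at the database level could look like, here is a minimal sketch. It uses Python's built-in `sqlite3` purely as a stand-in for PostgreSQL, and the table, view, and column names (`monthly_records`, `monthly_summary`, `polygon_id`, `period`, `value`) are hypothetical, not taken from the actual schema. The idea is that a chart reads a handful of pre-summarized rows from the view rather than every raw record.

```python
import sqlite3

# SQLite is only a stand-in for PostgreSQL; all table/column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE monthly_records (
        polygon_id INTEGER,
        period     TEXT,   -- e.g. '2021-01'
        value      REAL
    );

    -- Aggregate once in the database so a chart reads one row per period
    -- instead of every raw record.
    CREATE VIEW monthly_summary AS
    SELECT period,
           COUNT(*)   AS record_count,
           SUM(value) AS total_value
    FROM monthly_records
    GROUP BY period;
""")

# A few sample rows standing in for the real monthly table.
rows = [(1, "2021-01", 10.0), (2, "2021-01", 5.0), (1, "2021-02", 7.5)]
conn.executemany("INSERT INTO monthly_records VALUES (?, ?, ?)", rows)

summary = conn.execute(
    "SELECT period, record_count, total_value FROM monthly_summary ORDER BY period"
).fetchall()
print(summary)  # [('2021-01', 2, 15.0), ('2021-02', 1, 7.5)]
```

In PostgreSQL itself, a materialized view that is refreshed on a schedule may be worth considering if the aggregation is too slow to run on every request, though whether that suits this setup depends on how fresh the data needs to be.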


Hi David,

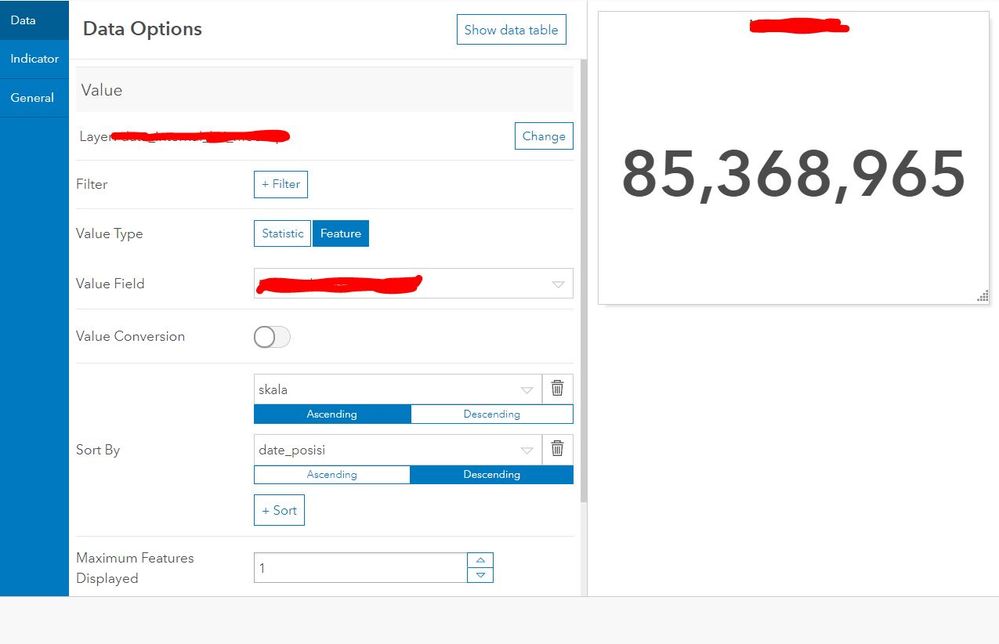

We are currently using "feature" instead of "statistic" to avoid any attribute aggregation at the dashboard level. Does that have the same effect?

Best regards,

Naufal