Esri-3D-Android App

I built this app to show some of the capabilities of the recently released ArcGIS Quartz Runtime 100.2.1 SDK for Android—specifically its 3D capabilities. The 3D and runtime teams have put in a lot of work to make 3D data and analyses run smoothly on the latest mobile devices.

What does it do?

Esri’s I3S specification covers three kinds of scene layers: 3D Objects, Integrated Meshes, and Point Clouds. Currently, 3D Objects and Integrated Meshes can be displayed in the Quartz runtime. The web scene this app loads by default shows examples of both those layer types.

The app uses a web scene ID to load a list of scene layers, background layers, and slides (3D bookmarks). You’ll find that web scene’s ID in the identifiers.xml source code file. If you want to open a web scene other than the default one, use the Open button in the toolbar to enter your ArcGIS.com credentials. The app then looks up the web scenes your account owns and lets you open one of those instead. Note that it lists only the web scenes you have created, not the web scenes that others have created and shared with you.

The Bookmarks button will show a list of slides in the web scene; tapping one will take you to the slide location. The Layers button shows a checkbox list of all scene layers defined in the web scene.

Standard navigation

First, get familiar with panning, zooming, rotating, and tilting the display. The SDK uses the device’s GPU to accelerate graphics computation and make navigation smoother. You can find more information on supported out-of-the-box gestures and touches here: https://developers.arcgis.com/android/latest/guide/navigate-a-scene-view.htm

This app’s tools can all be found under the rightmost toolbar icon; tap it and you will see a pop-up menu. Standard Navigation will disable any currently chosen tool and return the view to its standard, out-of-the-box navigation gestures as documented in the link above.

Measure tool

This tool is straightforward to use: activate it and tap a location. It calculates the distance and heading from your observation point in space to the location you tapped on the ground. The distance and heading are simple Pythagorean and trigonometric calculations; the point here was not the calculations themselves, but the use of 3D graphics and symbols to display the results.

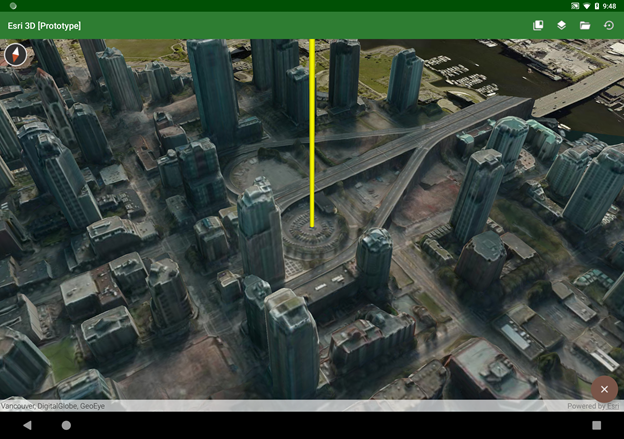
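
As a rough illustration of the math behind a measurement like this, here is a minimal sketch. It is not the app's actual code: it assumes the observer and the tapped location have already been converted to a local east/north/up frame in meters, and the class and method names are hypothetical.

```java
/** Hypothetical helper; not part of the app or the ArcGIS Runtime SDK. */
final class MeasureSketch {

    /** Straight-line distance in meters between two points given as {east, north, up}. */
    static double distance(double[] observer, double[] target) {
        double de = target[0] - observer[0];
        double dn = target[1] - observer[1];
        double du = target[2] - observer[2];
        // Pythagorean theorem extended to three dimensions
        return Math.sqrt(de * de + dn * dn + du * du);
    }

    /** Compass heading in degrees (0 = north, 90 = east) from observer to target. */
    static double heading(double[] observer, double[] target) {
        double de = target[0] - observer[0];
        double dn = target[1] - observer[1];
        // atan2(east, north) measures the angle clockwise from north
        double degrees = Math.toDegrees(Math.atan2(de, dn));
        // Normalize from (-180, 180] to [0, 360)
        return (degrees + 360.0) % 360.0;
    }
}
```

For example, an observer 400 m above the origin looking at a point 300 m due east on the ground is 500 m away at a heading of 90°.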

Line of Sight

Line of Sight and Viewshed are two new onscreen visibility analysis tools; there is detailed information on what that means here: https://developers.arcgis.com/android/latest/guide/analyze-visibility-in-a-scene-view.htm

Line of Sight is simple to implement; just set a start point and an end point, and add the analysis overlay to the scene. Updating the analysis is no more difficult than updating the end point location.

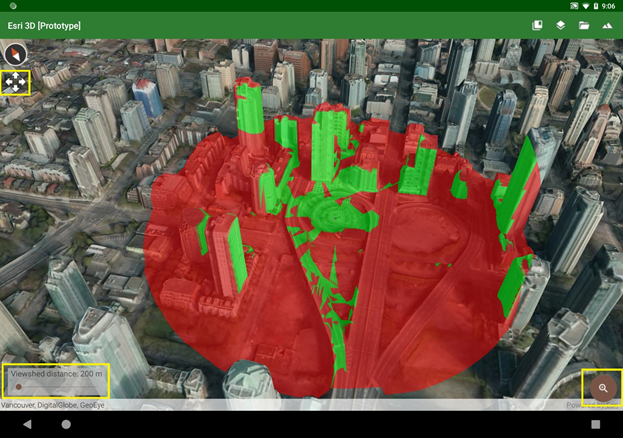

Viewshed

The Viewshed tool does some extra work beyond what the SDK provides. First, each analysis is limited to a 120° arc, so each tap invokes three analyses for complete 360° coverage. I also wanted to put the user right in the middle of the analysis, as if they were standing on the ground, and that’s what the zoom floating action button does. Once the camera moves down into the scene, the floating action button becomes a return button, which takes the camera back to its original point in space.
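
The three-sector arrangement can be sketched as follows. This only computes the heading each 120° analysis would face; creating the analyses themselves through the SDK is left out, and the class and method names are hypothetical rather than taken from the app.

```java
/** Hypothetical helper; not part of the app or the ArcGIS Runtime SDK. */
final class ViewshedSectors {

    /**
     * Returns the center heading, in degrees, of each sector needed to cover
     * a full circle when one analysis spans at most sectorAngle degrees.
     */
    static double[] sectorHeadings(double startHeading, double sectorAngle) {
        // 360 / 120 gives three sectors; ceil covers angles that do not divide evenly
        int count = (int) Math.ceil(360.0 / sectorAngle);
        double[] headings = new double[count];
        for (int i = 0; i < count; i++) {
            double raw = startHeading + i * sectorAngle;
            // Normalize to [0, 360), including for negative start headings
            headings[i] = ((raw % 360.0) + 360.0) % 360.0;
        }
        return headings;
    }
}
```

With a start heading of 0° and a 120° sector, the headings come out as 0°, 120°, and 240°.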

There’s also a slider in the lower left of the screen which lets you interactively change the viewshed distance. You can use it to explore different visibility scenarios in different scenes; you might want to use a smaller value for dense urban areas or a much larger value for unimpeded rural landscapes.

I wanted to make the analysis experience more interactive by letting you watch the analysis move as you drag your finger around the screen. This can be an interesting exercise, but it uses the same gesture that’s normally used to pan the view. You may reach a point where you want to pan the view without having to go back into standard navigation mode first. If you long-press—tap and hold a finger down without moving it for a second or so—you should see a four-arrows icon show up underneath the compass. That means the view is now in pan mode, and the display will pan (instead of re-running the viewshed) until you lift your finger.
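
One way to model the switch between analysis dragging and pan mode is a small state tracker like the sketch below. It is an assumption about how such logic could look, not the app's implementation: the class, its thresholds, and its method names are all hypothetical, and it expects the caller to feed it touch events with timestamps (on Android, a gesture detector's long-press callback could trigger the switch instead).

```java
/** Hypothetical gesture-state tracker; not taken from the app or the SDK. */
final class PanModeTracker {
    enum Mode { ANALYSIS, PAN }

    private final long longPressMillis;   // how long the finger must stay still
    private final double moveTolerancePx; // how far it may drift and still count as still
    private Mode mode = Mode.ANALYSIS;
    private long downTime;
    private double downX, downY;
    private boolean dragged;

    PanModeTracker(long longPressMillis, double moveTolerancePx) {
        this.longPressMillis = longPressMillis;
        this.moveTolerancePx = moveTolerancePx;
    }

    /** Finger touches the screen: every gesture starts in analysis mode. */
    void onDown(long timeMillis, double x, double y) {
        downTime = timeMillis;
        downX = x;
        downY = y;
        dragged = false;
        mode = Mode.ANALYSIS;
    }

    /** Returns the mode that should handle this event. */
    Mode onMove(long timeMillis, double x, double y) {
        if (mode == Mode.PAN) {
            return mode; // stay in pan mode until the finger lifts
        }
        if (Math.hypot(x - downX, y - downY) > moveTolerancePx) {
            dragged = true; // a drag began before the long press completed
        } else if (!dragged && timeMillis - downTime >= longPressMillis) {
            mode = Mode.PAN; // held still long enough: switch to panning
        }
        return mode;
    }

    /** Finger lifts: return to analysis mode. */
    void onUp() {
        mode = Mode.ANALYSIS;
    }

    Mode mode() {
        return mode;
    }
}
```

A tracker built with a one-second threshold would keep re-running the viewshed for an ordinary drag, and would hand the gesture to the pan logic only after the finger has rested in place for that second.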

Sensor Navigation mode

Once I was in the shoes of an observer in the middle of a viewshed, I thought it would be fun if I could tilt and rotate the device itself to move the view—kind of like a physical viewport into a virtual scene. And that’s what Sensor Navigation mode does. It listens to the device’s gyroscopic sensors to know when you’ve moved the device, and it moves the scene accordingly. The downside with this mode is that it can request so much scene data that the device, network connection, or scene service may not be able to keep up.

Pivot lock

If you see a building or other feature of special interest, you can use Pivot Lock to focus on that location and rotate around it. Activate the tool, then tap or drag a point, and the view will begin to rotate around it. Return to standard navigation by tapping the floating action button. You can stop the rotation by tapping anywhere on the display; then you can tap or drag a new point to start again. This tool uses the SDK’s OrbitCameraController to provide this functionality without a lot of custom code.

Technical notes

All the tools extend the https://developers.arcgis.com/android/latest/api-reference/reference/com/esri/arcgisruntime/mapping/... class. When one is selected, it’s just one line of code to set the new touch listener on the Scene View and let it take over responsibility for all touch gestures until a new tool is chosen.
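
The tool-switching pattern described here can be illustrated without the SDK. In the sketch below, `ToolListener` and `SceneViewStub` are hypothetical stand-ins for the SDK's touch listener class and the Scene View, and the two tool classes are placeholders rather than the app's real tools.

```java
/** Hypothetical stand-in for a touch listener; the real tools extend an SDK class. */
interface ToolListener {
    String onSingleTap(double x, double y);
}

/** Hypothetical stand-in for the Scene View; only the listener slot is modeled. */
final class SceneViewStub {
    private ToolListener listener;

    /** Replacing the listener hands all touch handling to the new tool. */
    void setOnTouchListener(ToolListener listener) {
        this.listener = listener;
    }

    /** Forwards a tap to whichever tool is currently installed. */
    String dispatchTap(double x, double y) {
        return listener == null ? "ignored" : listener.onSingleTap(x, y);
    }
}

/** Placeholder tool: a real one would measure to the tapped location. */
final class MeasureToolStub implements ToolListener {
    @Override
    public String onSingleTap(double x, double y) {
        return "measure";
    }
}

/** Placeholder tool: a real one would update a line-of-sight target. */
final class LineOfSightToolStub implements ToolListener {
    @Override
    public String onSingleTap(double x, double y) {
        return "line of sight";
    }
}
```

Selecting a tool is then a single call such as `view.setOnTouchListener(new MeasureToolStub())`; the view does not need to know which tool is active.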

While the manifest requires OpenGL ES 3.0 or above, that’s not a strict requirement of the runtime SDK (although that could possibly become a requirement in a future release). This will run on devices using OpenGL ES 2, but those devices are generally older and don’t have the GPU, memory, or processor power to run 3D apps smoothly anyway.

I did use a couple of open-source libraries that are licensed under the Apache 2.0 license.

Availability

The source code for this app is available in a public GitHub repo; find it at https://github.com/markdeaton/esri-3d-android

Feel free to clone or fork the repo and use it as you like. Also, I’ll probably make a one-time major update for the next release of the Esri SDK, as that release will likely make much of the app’s custom web scene parsing code obsolete.
