- Home

- :

- All Communities

- :

- Developers

- :

- Python

- :

- Python Questions

- :

- replace commas from .csv

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

replace commas from .csv

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

I have a .csv that was created using input_xls.to_csv, and everything delimits correctly. However, within some of the string records are commas (e.g. a business name like 'Widgets, Inc.'). The result is incorrect delimiting when importing the .csv into a file geodatabase using arcpy.conversion.TableToTable - values end up shifted over into other columns. I want to replace the commas with a space.

I cannot figure out how to use either something like

.apply(lambda x: x.replace(',', ' '))

or

[val if val else " " for val in row] + ([" "] * (len(first_row) - len(row))) (found here: https://community.esri.com/t5/python-questions/find-and-replace-blanks-in-csv-with-python/m-p/673632)

Any help to nudge me in the right direction would be appreciated. Thanks.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

What you can do is read your excel file into an array, where when appending data into the array, you call the replace command to get rid of the comma.

inRow = [str(row[0]),str(row[0]),str(row[2]),str(row[3]),str(row[4]),str(row[5]),str(row[6]),str(row[7]),str(row[8]),str(row[9]),str(row[10])]index = 0for i in inRow:if "," in i:i = i.replace(",","")inRow[index] = iindex += 1points.append(inRow)

AllProjects_CSV.truncate()writer = csv.writer(AllProjects_CSV,delimiter=',')writer.writerow(fields)writer.writerows(points)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

You might want to try bringing in the csv back into Excel, then try the Excel to Table tool to see if it handles the embedded commas better when bringing back into a file geodatabase

... sort of retired...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

What is your input_xls? Is it a pandas DataFrame?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Yes, I'm using pandas .read_excel and .to_csv

I was out Friday and will come back to this later today, but plan to look further at what Matt Kramer posted above. Thanks

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

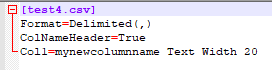

Personally I cannot recreate your probleme, but you have two options.

1. change regional settings of your machine to use different seperators (semicolon for example)

2. in the same folder as your data add file "schema.ini", where you specify how data is seperated, additionnaly you can change the name of columns and its types.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

I never followed-up with the solution I found using csv and pandas:

Creating a new .csv is not an issue, because I delete all of them at the end of the process.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

This gets me close to what I need, however everything gets written to one single column. Any thoughts? I see quotes around the full length of the row across all the columns so perhaps that is the issue. So "Column 1, Column 2, Column 3".

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

jschuckert - This was a while ago, and under a previous employer, but I believe I used the output .csv from the code above as input to arcpy.conversion.TableToTable, and specified a field map which contained all the necessary fields.

-Justin