Stream Service output keeps breaking

On one of our two GeoEvent Server machines, we have two stream services that stream the location of around 17k members in real time. One service streams the location of radio devices and the second one the location of what we call IRIS devices, which are just iPad devices.

The service that streams the location of the iPad devices breaks consistently every 24 to 48 hours. Every time it breaks we need to recreate the output connector ("Send features to Stream Service") and republish the service. We estimate a peak of around 1000 concurrent users connected to that service, but this is only an estimate.

Is there anything we can do to fine-tune the output, such as allocating more memory? Are there any logs that could help us find out why it is breaking?

We have recreated the service on a second machine and it does not break there, although that instance does not have any users subscribed.

Note: GeoEvent 10.8, Windows Server 2012 R2 in a virtual machine with 16GB of RAM and 4 CPUs. RAM and CPU utilisation is always at around 60%.

Note 2: The service was working fine until two weeks ago, when it started to behave as described above.

We could not find a reason why the service is breaking. Can someone give us a hand?

Hello Walter --

Up front, I'm going to ask that you open an incident with Esri Tech Support to get a specialist assigned who can help reproduce the issue on our end. This is for traceability in case the behavior you are seeing is a bug which needs to be addressed by the development team (as opposed to something we can configure in your deployment or environment that solves the issue for you).

A couple of things I know about stream services:

- There is only one web socket running for each stream service output. This web socket is run as part of the GeoEvent Server's JVM. When multiple clients subscribe concurrently to the web socket they all suffer a deterioration in performance.

- I do not know what the upper limit is on how many clients can concurrently subscribe to a single stream service - only that there is little you can do to scale out the stream service for many hundreds or thousands of subscribers. You cannot, for example, configure more than one web socket resource to run and support a stream service you expect to experience a high volume of subscribers.

There are four component loggers for stream services which you might try setting to log DEBUG messages to see if you can capture an error or warning around the time the stream service stops broadcasting data.

com.esri.ges.datastore.agsconnection.NewStreamServiceBuilder

com.esri.ges.framework.streamservices.client.StreamServiceClientImpl

com.esri.ges.framework.streamservices.client.AGSConnectionStatusListenerStreamServiceClient

com.esri.ges.transport.streamService.StreamServiceOutboundTransport

I do not know offhand how verbose these component loggers are relative to data ingest volume / velocity. If you request DEBUG logging, monitor your karaf.log found beneath ...\ArcGIS\Server\GeoEvent\data\log to gauge how fast the log file is growing and how often it rolls over. If the logging is too verbose you could easily miss the data you are trying to capture as the current karaf.log fills up, rolls over, and older rolled log files are deleted.
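One way to gauge how quickly karaf.log grows is to sample its size at a fixed interval. The following is only a rough sketch: the function names are made up for illustration, and `ASSUMED_MAX_BYTES` is a placeholder rather than the actual rollover threshold, which depends on how logging is configured in your deployment.

```python
import os
import time

# Placeholder only: the real rollover size comes from your logging
# configuration and is NOT known here.
ASSUMED_MAX_BYTES = 16 * 1024 * 1024


def growth_rate_bytes_per_sec(size_before, size_after, elapsed_sec):
    """Return log growth in bytes per second over one sampling interval."""
    if elapsed_sec <= 0:
        raise ValueError("elapsed_sec must be positive")
    if size_after < size_before:
        # The file shrank, so it rolled over during the interval.
        # Only the bytes written to the new file are known.
        return size_after / elapsed_sec
    return (size_after - size_before) / elapsed_sec


def seconds_until_rollover(current_size, max_size, rate):
    """Estimate how long until the file reaches max_size at the given rate."""
    if rate <= 0:
        return float("inf")
    return max(max_size - current_size, 0) / rate


def watch(path, interval_sec=60, max_size=ASSUMED_MAX_BYTES):
    """Print the growth rate of the file at `path` until interrupted."""
    previous = os.path.getsize(path)
    while True:
        time.sleep(interval_sec)
        current = os.path.getsize(path)
        rate = growth_rate_bytes_per_sec(previous, current, interval_sec)
        eta = seconds_until_rollover(current, max_size, rate)
        stamp = time.strftime("%H:%M:%S")
        print(f"{stamp} size={current} B rate={rate:.1f} B/s rollover in ~{eta:.0f} s")
        previous = current
```

Calling `watch()` with the full path to your karaf.log for a few minutes after enabling DEBUG should show whether the rolled files are likely to survive long enough to cover a 24 to 48 hour failure window.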

As one stream service appears to be working reliably and the other seems to be failing every day or so, is there anything obviously different between the two? Are both stream services broadcasting roughly the same number of Point (my assumption?) feature records per second? Do both stream services have roughly the same number of concurrent subscribers? You indicate that the problem does not manifest when the same stream service is run on a second machine, but then, there isn't anyone subscribing to that instance of the stream service.

I know that Esri Tech Support has a similar case / incident they are helping the development team reproduce -- not specifically stream service related, but related to event processing stopping after a variable length of time (reportedly 2 - 5 days). To briefly describe some underlying GeoEvent Server architecture for you, there are two Kafka topic queues working with each GeoEvent Service. The first routes event records from an input (the producer) to a GeoEvent Service for processing. The second routes processed event records from the GeoEvent Service to an output (a consumer). If something were to go wrong with the Kafka event broker I might expect an input would continue to ingest and adapt data to create event records, but those event records would not be processed by a GeoEvent Service -- you would see the event count of the input increase as data was received, but the 'In' / 'Out' event count of the GeoEvent Service in which that input was incorporated would not increment. Or, if the second Kafka topic had failed, you might see event data being processed and the 'Out' event count for a GeoEvent Service incrementing, but the stream service output's event count would not increment. Knowing which of these you are observing (if either applies) will help Esri Technical Support better address an incident you submit with them.
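The reasoning about the two Kafka topics can be written down as a small decision helper. This is a sketch under stated assumptions: the four counts are read by hand from GeoEvent Manager at two points in time, the `Counts` class and `classify_stall` function are hypothetical names, and the result is only a hint about where to look, not a diagnosis.

```python
from dataclasses import dataclass


@dataclass
class Counts:
    """Event counts noted by hand from GeoEvent Manager at one moment."""
    input_count: int    # event count shown on the input
    service_in: int     # 'In' count of the GeoEvent Service
    service_out: int    # 'Out' count of the GeoEvent Service
    output_count: int   # event count shown on the stream service output


def classify_stall(before: Counts, after: Counts) -> str:
    """Compare two snapshots and suggest which stage stopped moving."""
    d_input = after.input_count - before.input_count
    d_in = after.service_in - before.service_in
    d_out = after.service_out - before.service_out
    d_output = after.output_count - before.output_count

    if d_input <= 0:
        return "no new data arriving at the input"
    if d_in == 0:
        # Input keeps ingesting but the service sees nothing:
        # matches the first (input to service) topic scenario.
        return "suspect input-to-service topic"
    if d_out > 0 and d_output == 0:
        # Service keeps processing but the output sees nothing:
        # matches the second (service to output) topic scenario.
        return "suspect service-to-output topic"
    if d_output > 0:
        return "events are flowing end to end"
    return "inconclusive"
```

For example, if the input count rises between two snapshots while the service 'In' and 'Out' counts stay flat, `classify_stall` returns "suspect input-to-service topic", which is the observation worth including in the support incident.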

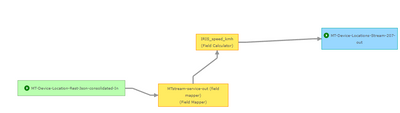

To help Esri Technical Support we will need you to capture a sample of the radio device or iPad device data being sent to GeoEvent Server. It doesn't look like there is anything "stateful" in your GeoEvent Service (e.g. I don't see an Incident Detector or any particular filtering logic) which might prevent us from running the same few hundred event records in a loop through GeoEvent Server for 48 hours. If you have reason to believe that we need more temporally consistent / contiguous data, we'll need your help to get a snapshot of that so that we can run the system for 48 hours to see if we can recreate the failure in-house. Please also create an XML snapshot of your configuration using the 'selective' export option to export just the GeoEvent Service whose stream service output is having a problem. This will simplify the XML snapshot by not including inputs, outputs, GeoEvent Definitions, or other configurable elements not shown in the relatively simple GeoEvent Service you illustrated above.

Hope this information helps --

RJ

Many thanks for the response @RJSunderman

The approach we are following is to isolate the failing service on a brand new GeoEvent 10.9.1 server. The new server is not federated with our Portal, to keep the installation as simple as possible.

We have done that and we are seeing other issues. I'll create separate posts and, if you don't mind, I'll cc you.