
Large Dataset - Performance Issues

@Anonymous User

Hello All:

My organization is having performance issues with Field Maps:

Our field crews are editing a large work order dataset using Field Maps version 22.3.0. They edit a hosted feature layer that was published from ArcServer/ArcMap 10.6.1 (Advanced license). When the feature layer was originally published in 2016, it was empty. It now has over 735 points and is 1.758 MB, with 1,619.908 MB of attachments. The feature layer is configured with conditional visibility, and until last week I had the same feature layer in 3 different maps, each with its own filter (it is a work order layer, and different users need to see different "status" fields).
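A quick back-of-the-envelope check on the figures above suggests where the weight is: in the attachments rather than in the points themselves. The sketch below is a minimal Python illustration that uses only the numbers quoted in this post; the function name is hypothetical.

```python
def average_attachment_mb(total_attachment_mb: float, feature_count: int) -> float:
    """Average attachment payload per feature, in MB."""
    if feature_count <= 0:
        raise ValueError("feature_count must be positive")
    return total_attachment_mb / feature_count


# Figures quoted in the post above
FEATURES = 735
LAYER_MB = 1.758
ATTACHMENTS_MB = 1619.908

per_feature = average_attachment_mb(ATTACHMENTS_MB, FEATURES)
ratio = ATTACHMENTS_MB / LAYER_MB

print(f"~{per_feature:.2f} MB of attachments per point")  # ~2.20 MB
print(f"attachments are ~{ratio:.0f}x the feature data")  # ~921x
```

Because the count is "over 735", the per-point figure is an upper bound on the true average.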

They sporadically report "lost data" when trying to submit edits and new points. Submitting a new point, or editing the layer and adding attachments, can take a very long time. In some cases, crews say they submitted a point, but when the edits are reviewed at the end of the day, the points are missing.
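One way to pin down whether points are really being lost, rather than only delayed, is to reconcile at the end of the day: compare the IDs the crews believe they submitted against the IDs actually on the layer. A minimal sketch, assuming crews record some identifier (the "WO-101"-style work order numbers here are hypothetical) and that you can export the server's list of IDs, for example from a layer query; neither of those steps is shown.

```python
from collections.abc import Iterable


def find_missing(submitted_ids: Iterable[str], server_ids: Iterable[str]) -> list[str]:
    """Return IDs the crew logged as submitted that are not on the server, sorted."""
    return sorted(set(submitted_ids) - set(server_ids))


if __name__ == "__main__":
    crew_log = ["WO-101", "WO-102", "WO-103"]  # hypothetical IDs from a crew log
    on_server = ["WO-101", "WO-103"]           # hypothetical export from the layer
    print(find_missing(crew_log, on_server))   # ['WO-102']
```

A set difference ignores order and duplicates, which is what you want when the crew log and the server export come from different sources.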

I originally suspected the different editing account types, but after much testing that was not the case. I then opened a customer support ticket with ESRI because I thought our service definition settings were wrong, specifically "maxRecordCount". That theory was debunked because our feature count is below the threshold, and support recommended working offline, which is not an option for us. I called ESRI customer support a second time, but they did not help beyond pointing me to known bugs and enhancements.
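The "maxRecordCount" theory can be checked directly against the ArcGIS REST API: a layer's JSON description (requested with f=json) includes maxRecordCount, and a query with returnCountOnly=true returns the feature count. A minimal sketch using only the Python standard library; the layer URL in the usage comments is hypothetical, and a secured layer would also need a token, which is not handled here.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def layer_info_url(layer_url: str) -> str:
    """URL for the layer's JSON description (includes maxRecordCount)."""
    return f"{layer_url}?{urlencode({'f': 'json'})}"


def count_url(layer_url: str) -> str:
    """URL for a count-only query over all features."""
    params = {"where": "1=1", "returnCountOnly": "true", "f": "json"}
    return f"{layer_url}/query?{urlencode(params)}"


def fetch_json(url: str) -> dict:
    """GET a URL and parse the JSON body (no token or error handling)."""
    with urlopen(url, timeout=30) as resp:
        return json.load(resp)


def fits_in_one_request(feature_count: int, max_record_count: int) -> bool:
    """True if a single query page can return every feature."""
    return feature_count <= max_record_count


# Usage (hypothetical URL; requires network access):
#   layer = "https://example.com/arcgis/rest/services/WorkOrders/FeatureServer/0"
#   max_rc = fetch_json(layer_info_url(layer))["maxRecordCount"]
#   count = fetch_json(count_url(layer))["count"]
#   print(count, max_rc, fits_in_one_request(count, max_rc))
```

If the server returns an error object instead of the expected keys, the lookups above raise KeyError; a real script would check for that.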

I then suspected bad cell service in our city; however, the issue only occurs in Field Maps, and no other app on the crews' devices runs poorly. Our I.T. department said we do not have an unlimited high-speed data plan, which might be a factor.

I eventually noticed how large the dataset the field crews were editing had become, and optimized it: I disabled auto-sync, lowered the attachment size collected in Field Maps from "LARGE" to "MEDIUM", rebuilt the spatial indices, and created hosted feature views instead of filters. This works because the layer in question is a work order layer, so a filter on each hosted feature view narrows down the points each group sees.
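The attachment-size change matters because, on a limited data plan, upload time grows roughly linearly with payload: a rough estimate is payload in megabits divided by uplink rate. In the sketch below, the per-photo sizes for "LARGE" and "MEDIUM", the photos per submit, and the 1 Mbps uplink are hypothetical placeholders, not Field Maps' actual values; substitute sizes measured from your own attachments.

```python
def upload_seconds(payload_mb: float, uplink_mbps: float) -> float:
    """Idealized transfer time: MB -> megabits, divided by link rate.

    Ignores protocol overhead, retries, and signal drop-outs, so real times will be longer.
    """
    if uplink_mbps <= 0:
        raise ValueError("uplink_mbps must be positive")
    return payload_mb * 8 / uplink_mbps


# Hypothetical per-photo sizes in MB; measure your own attachments instead.
PHOTO_MB = {"LARGE": 3.0, "MEDIUM": 0.8}
PHOTOS_PER_SUBMIT = 3  # assumed
UPLINK_MBPS = 1.0      # assumed weak cellular uplink

if __name__ == "__main__":
    for label, mb in PHOTO_MB.items():
        secs = upload_seconds(mb * PHOTOS_PER_SUBMIT, UPLINK_MBPS)
        print(f"{label}: ~{secs:.0f} s per submit")
```

With these placeholder numbers, the estimate drops from about 72 s to about 19 s per submit, which is the kind of difference a crew notices while waiting on a weak signal.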

**My question is this: we still have 5 other asset inventory layers whose attachments range from 1-5 GB and are continuously growing. If ESRI promotes its software as able to handle inventory data collection, what is the best way to manage a large dataset so that the layers draw correctly and, most importantly, so that we stop losing data?** Our field crews have said on multiple occasions that submitting edits to existing assets or adding new assets to these inventory layers takes an incredibly long time (admittedly, this is sporadic). I cannot create hosted feature views for these inventory layers because all assets need to be visible.

Any insight with managing large inventory datasets is extremely appreciated as I cannot find any proper documentation online, even in "Big Data" forums. Thank you for your time.

I am working on a ticket now. Over the last 5 years I have found that once a service gets to about 10 GB, it starts having lots of issues. I cannot export those services at all, even with my backup script.
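If the roughly 10 GB point described above holds for your organization too (it is one user's experience, not a documented Esri limit), a simple periodic check can warn before a layer gets there. A minimal sketch; the layer names and sizes are hypothetical, and how you collect the sizes is left out.

```python
ANECDOTAL_LIMIT_GB = 10.0  # from one user's experience in this thread, not an Esri limit


def flag_services(
    sizes_gb: dict[str, float],
    limit_gb: float = ANECDOTAL_LIMIT_GB,
    warn_fraction: float = 0.8,
) -> dict[str, str]:
    """Label each service "ok", "warning" (>= warn_fraction of limit), or "over"."""
    status: dict[str, str] = {}
    for name, size in sizes_gb.items():
        if size >= limit_gb:
            status[name] = "over"
        elif size >= warn_fraction * limit_gb:
            status[name] = "warning"
        else:
            status[name] = "ok"
    return status


if __name__ == "__main__":
    # Hypothetical inventory layers and sizes in GB
    sizes = {"Hydrants": 4.8, "Valves": 8.5, "Signs": 12.0}
    print(flag_services(sizes))
```

The warning threshold leaves time to archive old attachments or split a layer before it reaches the size where, in this respondent's experience, exports start failing.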

We usually turn off attachment downloads to the tablet, since we mostly collect and push. I have some services well over 20 GB, with 15 feature classes and tables and 100,000+ records in some of them by the end of the season, and they are working fine. But again, we add; we do not edit. Getting the data out of AGOL has been a major pain, though.

I am not sure how much you are downloading at one time. Are you adding items, or just adding inspections to an existing item? In the end, these are consumer-grade tablets, so they do hit a limit. But I agree that Esri's testing limits are too low.

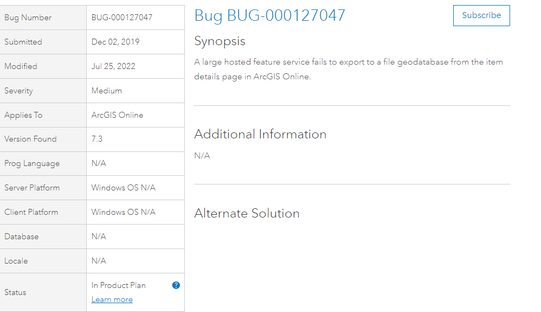

Edit: here is the official bug. It's been 3 years, though.

Hope that helps some

Similar issue: Last spring I created an asset inventory with Survey123, consisting of a feature class (locations) and a linked table (inspections). I envisioned the workflow to be collecting locations and initial inspections with Survey123, then using Collector or Field Maps to add inspection records. Each inspection record typically included 3 attached photos. Because cell service was very spotty, I expected to work entirely offline.

When I started, I downloaded a map of my entire area (1.6 million acres) to Collector Classic with no problem.

Since my Survey123 feature class and table were also included in my Collector map, once I sent the survey records and synced the Collector map, my survey records appeared on the map.

As I added more and more records, I started having problems. First, the Android tablet I was using ran out of space. I switched to an iPad, but had very limited success even getting an offline area to download in Field Maps. Most map area downloads ended with "download failed", even for areas under 200 MB (after 15-30 minutes of downloading). Although I had been able to download the entire 1.6-million-acre area at the start of the project, by the time I had more than 3,500 asset records, the download size forced me to break the area into 9 separate pieces, which is much less convenient for field workers (which area am I in?).
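How many pieces an offline area needs can be estimated rather than found by trial and error. If data were spread evenly across the area (a strong assumption, since real assets cluster), an n x n grid divides the download roughly by n squared, so the smallest workable n is ceil(sqrt(total / cap)). A minimal sketch; the total size and extent used in the usage block are hypothetical, and the 200 MB cap simply echoes the figure above.

```python
import math
from typing import NamedTuple


class Extent(NamedTuple):
    xmin: float
    ymin: float
    xmax: float
    ymax: float


def grid_size(total_mb: float, cap_mb: float) -> int:
    """Smallest n so an n x n grid keeps each tile at or under cap, assuming even density."""
    if total_mb <= 0 or cap_mb <= 0:
        raise ValueError("sizes must be positive")
    return max(1, math.ceil(math.sqrt(total_mb / cap_mb)))


def split_extent(ext: Extent, n: int) -> list[Extent]:
    """Split an extent into n x n equal tiles, row by row from the lower-left corner."""
    if n < 1:
        raise ValueError("n must be at least 1")
    dx = (ext.xmax - ext.xmin) / n
    dy = (ext.ymax - ext.ymin) / n
    return [
        Extent(ext.xmin + i * dx, ext.ymin + j * dy,
               ext.xmin + (i + 1) * dx, ext.ymin + (j + 1) * dy)
        for j in range(n)
        for i in range(n)
    ]


if __name__ == "__main__":
    n = grid_size(total_mb=1600, cap_mb=200)  # hypothetical total size
    tiles = split_extent(Extent(0, 0, 90_000, 90_000), n)  # hypothetical extent in map units
    print(n, len(tiles))
```

Because assets cluster in practice, a denser tile can still exceed the cap; sizing tiles by record count per tile, rather than by area, would be the next refinement.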

The asset inventory had over 3,500 records, each with 1-5 (average 3) photo attachments. Field workers need to be able to add inspection records in the field, and offline, because they don't always have cell service.

I considered sideloading an MMPK, but field workers need to be able to add inspection records in the field, and I understand MMPKs are read-only.

One option might be to use Survey123 exclusively. I enabled the inbox, so once all 3,500 records are downloaded to a device, they could be updated offline.

I am very disappointed that Field Maps does not handle big data sets well. It is marketed for data collection, but if you can only collect a little bit of data before it has performance lags and download errors, or if you have to break your area into very many tiny pieces because of download size, why use it?

A few similar threads on this topic that I found:

https://community.esri.com/t5/arcgis-field-maps-questions/field-maps-cumulative-lag/m-p/1046667

My organization is in the same boat as the first thread you posted regarding "cumulative lag".

I reached out to ESRI Customer Support, and they told me this performance issue "is to be expected". It is very disappointing that this exists, because we were very excited to implement Field Maps. Regarding your recommendation to use Survey123 exclusively, we have two hesitations:

1.) We just spent the first part of last year implementing Field Maps, and our field users are not always welcoming of change. I would hate to go to them and say "here is another change to your workflow". This is an internal conflict, and if it must be done to improve performance, then we might have to.

2.) My organization has considered going offline, but we are apprehensive because of threads describing a whole different set of issues, such as trouble syncing and losing even more data. I do not want to take 1 step forward and 3 steps back, and I would rather endure performance issues than bulk data loss.

Since we make our edits online, our issue is not really downloading the data so much as data loss and long lag times when editing existing points or submitting new ones.

Thank you both for your input.