- Home

- :

- All Communities

- :

- Products

- :

- ArcGIS Enterprise

- :

- ArcGIS Enterprise Questions

- :

- Feature Classes QUADRUPLES in size when imported t...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Feature Classes QUADRUPLES in size when imported to SDE

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

We have SQL Express and Enterprise workgroup. When I import a feature class from a file geodatabase into a new or an existing enterprise geodatabase the file triples in size - on import alone. I'm connection with Esri support but so far they have only been able to replicate my problem, not give me any answers.

The workflow is so basic, all I am doing is moving data from a file gdb to an SDE. Nothing else is happening and my data goes from 118 MB to 585 MB. Compressing has no effect.

sde problem #file size increase

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Hi Meghan,

What type of geometry (i.e. GEOMETRY, GEOGRAPHY, SDEBINARY) are you storing the feature class in the Enterprise Geodatabase? You can check by right-clicking on the feature class > Properties > General tab. It should state this there.

Also, which version of SQL Server Express are you running?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Thank your for replying, sorry for the delay in getting back to you. My feature class says Storage: High Precision using Geomoetry spatial type (SRID 6434) and we're using Microsoft SQL Server Express Edition (64-bit) 11.0.2100.60

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

I'm sorry, we are on SQL Server Express 2012, but the version number is correct

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

How are you checking the size of the Feature Class?

Are you just looking at the total size of the SQL Server database? Did you consider the log file size as well?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Hi there-

Thanks for the feedback. Yes, we checked the log file and its only 25MB. Our IT manager was able to shrink it, but it had no effect the size of our database instance.

I saw another suggestion to remove the geoprocessing history but that didn't have any impact either.

It's really frustrating because we have a workgroup license so our enterprise geodatabases are already limited to 10 gigs. So having data blow up to 4 times the size once it's stored in enterprise isn't manageable.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

You can find out the size of the table in SQL Server with this query: You will need to update for the tablename

***********************************************************************

SELECT

t.NAME AS TableName,

s.Name AS SchemaName,

p.rows AS RowCounts,

SUM(a.total_pages) * 8 AS TotalSpaceKB,

CAST(ROUND(((SUM(a.total_pages) * 😎 / 1024.00), 2) AS NUMERIC(36, 2)) AS TotalSpaceMB,

SUM(a.used_pages) * 8 AS UsedSpaceKB,

CAST(ROUND(((SUM(a.used_pages) * 😎 / 1024.00), 2) AS NUMERIC(36, 2)) AS UsedSpaceMB,

(SUM(a.total_pages) - SUM(a.used_pages)) * 8 AS UnusedSpaceKB,

CAST(ROUND(((SUM(a.total_pages) - SUM(a.used_pages)) * 😎 / 1024.00, 2) AS NUMERIC(36, 2)) AS UnusedSpaceMB

FROM

sys.tables t

INNER JOIN

sys.indexes i ON t.OBJECT_ID = i.object_id

INNER JOIN

sys.partitions p ON i.object_id = p.OBJECT_ID AND i.index_id = p.index_id

INNER JOIN

sys.allocation_units a ON p.partition_id = a.container_id

LEFT OUTER JOIN

sys.schemas s ON t.schema_id = s.schema_id

WHERE

t.NAME NOT LIKE 'dt%'

AND t.is_ms_shipped = 0

AND i.OBJECT_ID > 255

GROUP BY

t.Name, s.Name, p.Rows

ORDER BY

t.Name

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

One thing to note, at least in my experience, that data storage (spatial data) does not always "equal" the same between storage types: shapefile, file geodatabase, Enterprise geodatabase, etc.

As Jake mentioned there are many different storage types and each type has different characteristics based on the data and the RDBMS being used. You also have to remember that there are very different tables within a file geodatabase (which is Esri's developed format) and SQL Server (MS developed).

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

Any discussion on data "size" has to start with describing the data structure. What are the columns and data types? How many rows? What parameters/arguments are being used when copying data from the file geodatabase to an enterprise geodatabase?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

EIDT: a little grammar

Hi Thanks for responding.

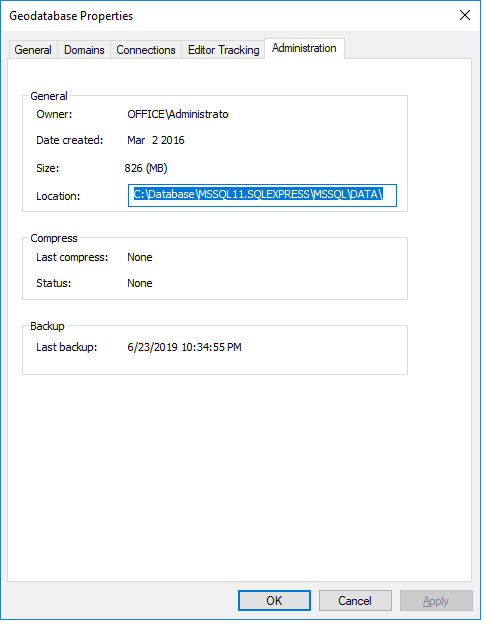

When I came aboard my company there were some pre-existing SDE databases. I'm not sure how these were created. When I right click on an SDE database and go to the Administration Tab, I am getting my size measurements there. In this particular case, I have 825 MB of data for over 100 different feature classes comsprised of tens of thousands of features. This SDE database was created in 10.3. In looking at some of the feature datasets the geoprocessing history tells me that much of the data was brought in using the Feature Class to Geodatabase tool in the Conversion.tbx. The data in here contains, census tracks, municipal parcels, annotation layers, sewer and water infrastructure and some iterative analysis results among the feaure classes covering at least 4 or 5 towns in Massachusetts. There are points, lines, polygons, tables etc.

After I came aboard we upgraded from ArcServer 10.5 (workgroup) to ArcGIS Enterprise 10.6 using the same SQLServer express instances and same machines.

To illustrate my specs I'll outline the steps I took to demonstrate my problem to Esri Support.

In my workflow I'm in ArcCatalog 10.6.1. I have an Advanced license. I expand the Database Servers icon. Under my arcserver_sqlexpress.gds I right click and select "New Geodatabase". I name it "Test_Support3" I right clicked on the name of the database an imported a feature class of contour lines. These are 5 foot intervals covering a town that's 15 sq miles, highest elevation is 400 ft, When they are in a file geodatabase the size (in Catalog) is listed as 72.92 MB. There are 7 fields in the attribute table: Object ID, a text field (254 characters), 2 doubles, a long integer, a short integer and a geometry (polyline).

I brought this same data in a second by opening ArcGIS Pro, loading the same source feature class into a new map and using the right-click menu on feature class in the table of content to export to a geodatabase.

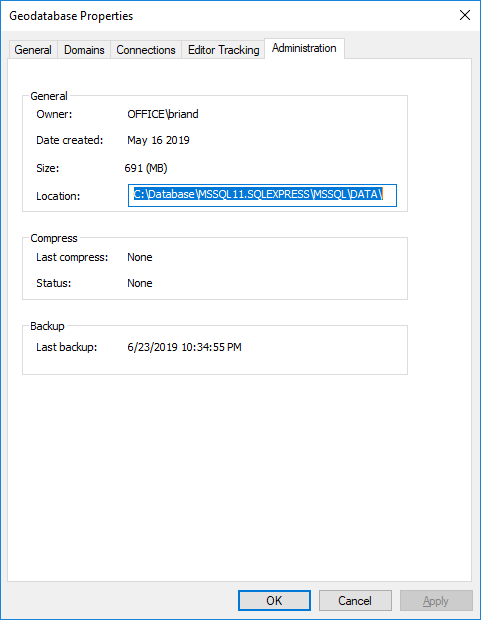

My third feature class is a polygon feature class of my state's town boundaries with about 10-15 fields in the attribute table. In a file geodatabase this is 412 kb . In ArcCatalog, I right clicked on Test_Suppport3 and made new, empty feature class using the schema of the data I wanted to load, and then I loaded the data. Now I have 3 feature classes in Test_Support3. When I look at the Administration properties it says this is 691 MB.

This database has never been edited or versioned. I did try removing the geoprocessing history but it had no impact. For the sake of accuracy I just ran a compression to no avail:

If you have any suggestion I would very much welcome them! Thank you for the assistance!