
GeoEvent: Debug Update Features Output


This article discusses a targeted way to debug erroneous records that GeoEvent writes to a hosted feature layer. For more general details on configuring and using the GeoEvent logging system, see RJ Sunderman's series of blog posts on the topic: https://community.esri.com/community/gis/enterprise-gis/geoevent/blog/2019/06/15/debug-techniques-co...

The Situation

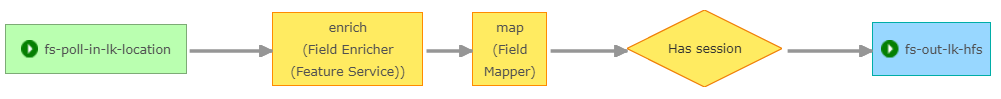

We have a GeoEvent Service that reads events from a hosted feature layer, attempts to enrich them, filters out any events that did not get enriched, and writes the rest to another hosted feature layer:

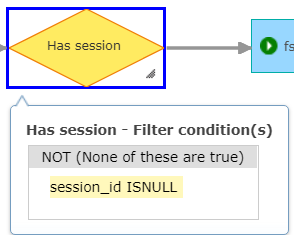

The filter is intended to pass only events that have a valid session_id.

We therefore expect that the data table in the target hosted feature layer will never contain features with a NULL session_id. Yet, when we check, we occasionally find such records in it: something is getting through the filter.

Debugging

Follow the steps below to debug this issue:

- In the configuration file C:\Program Files\ArcGIS\Server\GeoEvent\etc\org.ops4j.pax.logging.cfg:

    - Set log4j2.appender.rolling.strategy.max to a large value, such as 200, so that older log files are not rolled over and deleted before you get a chance to collect them.

- In GeoEvent Manager:

    - Create a new GeoEvent Input using the Poll an ArcGIS Server for Features connector that reads records from your target hosted feature layer (the feature layer that is receiving the records with null values you don't want to see).

        - Be sure to set the where clause to monitor for the error condition, for example session_id is null.

    - Create a new GeoEvent Output using the Send an Email connector that notifies you when an erroneous record has been stored in the hosted feature layer.

        - Add your email address to the Sender and Recipient lists.

        - Set the message format to HTML.

        - In the body, consider including the important fields from the failing event, for example SessionID: ${session_id}

        - Include a link to the REST endpoint query operation so you can quickly evaluate the invalid record(s), for example:

            - `<a href="https://<YourServerName>/arcgis/rest/services/Hosted/Last_Known_Locations_HFS/FeatureServer/0/query?... Here To See Errors</a>`

    - Create a new GeoEvent Service called "Monitor for Errors".

        - Add the Feature Service poll input and the email output from above, then connect them.

        - Press the Publish button.

    - Create a new GeoEvent Output using the Write to a JSON File connector to write events to disk.

        - Name it file-json-out-before.

        - Set the Filename Prefix: before

        - Modify other settings as needed.

    - Create a new GeoEvent Output using the Write to a JSON File connector to write events to disk.

        - Name it file-json-out-after.

        - Set the Filename Prefix: after

        - Modify other settings as needed.

    - In your existing GeoEvent Service that is currently processing data:

        - Add file-json-out-before and connect it before your final filter, so it records the events entering the filter.

        - Add file-json-out-after and connect it after your final filter, so it records the events the filter passes.

        - Press the Publish button.

    - Set the log level of the following loggers to TRACE:

        - com.esri.ges.transport.featureService.FeatureServiceOutboundTransport

        - com.esri.ges.httpclient.http

- Wait for an email.

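
Both the where clause on the poll input and the link in the email rely on the hosted layer's REST Query operation. The sketch below builds the same kind of query URL with the Python standard library and, optionally, runs it. It assumes a hypothetical host name (gis.example.com in place of your server name), the Last_Known_Locations_HFS layer path from the example link, a session_id field, and a layer that accepts anonymous requests; a secured layer would also need a token, which is not shown here.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical layer URL: replace the host (and path, if needed) with your own.
LAYER_URL = (
    "https://gis.example.com/arcgis/rest/services/Hosted/"
    "Last_Known_Locations_HFS/FeatureServer/0"
)


def build_query_url(layer_url, where, out_fields="*"):
    """Return a REST Query URL that asks the layer for matching features as JSON."""
    params = {
        "where": where,             # SQL where clause describing the error condition
        "outFields": out_fields,    # "*" returns every attribute field
        "returnGeometry": "false",  # attributes are enough to inspect the bad rows
        "f": "json",                # JSON response instead of the HTML page
    }
    return f"{layer_url}/query?{urllib.parse.urlencode(params)}"


def fetch_bad_records(layer_url, where="session_id IS NULL"):
    """Run the query and return the attribute dictionaries of the matching features."""
    with urllib.request.urlopen(build_query_url(layer_url, where), timeout=30) as response:
        payload = json.load(response)
    if "error" in payload:
        # ArcGIS REST services can report errors in the JSON body of an HTTP 200 response.
        raise RuntimeError(payload["error"])
    return [feature["attributes"] for feature in payload.get("features", [])]


if __name__ == "__main__":
    print(build_query_url(LAYER_URL, "session_id IS NULL"))
```

Running the script only prints the query URL; calling fetch_bad_records(LAYER_URL) returns the attributes of the offending rows, which are the same records the email link is meant to show. Setting returnGeometry to false keeps the response small, since only the attribute values matter here.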

While You Wait...

While you are waiting for an issue to occur, keep an eye on the disk space used by the files being written:

- Periodically delete old karaf#.log files in your C:\Program Files\ArcGIS\Server\GeoEvent\data\log directory.

- Periodically delete old before#.json and after#.json files in the output directory you specified.
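
If you would rather not delete these files by hand, a small script run on a schedule (for example, from Windows Task Scheduler) can do it. This is a minimal sketch that assumes age-based retention is acceptable. The JSON output directory and the two-day retention are hypothetical, and the file name patterns follow the karaf#.log, before#.json, and after#.json names above, so adjust them if your file names differ. It also assumes karaf.log is the file currently being written and always keeps it.

```python
import time
from pathlib import Path

# Hypothetical locations and retention: adjust all three to your environment.
LOG_DIR = Path(r"C:\Program Files\ArcGIS\Server\GeoEvent\data\log")
JSON_DIR = Path(r"D:\geoevent-json-out")  # the directory set on the JSON file outputs
MAX_AGE_SECONDS = 2 * 24 * 60 * 60        # keep roughly two days of files


def delete_old_files(directory, patterns, max_age_seconds, keep=(), now=None):
    """Delete files in `directory` that match any glob pattern and were last
    modified more than `max_age_seconds` ago. File names listed in `keep` are
    never deleted. Returns the sorted names of the deleted files."""
    now = time.time() if now is None else now
    deleted = []
    for pattern in patterns:
        # Materialize the matches before unlinking anything.
        for path in list(Path(directory).glob(pattern)):
            if not path.is_file() or path.name in keep:
                continue
            if now - path.stat().st_mtime > max_age_seconds:
                path.unlink()
                deleted.append(path.name)
    return sorted(deleted)


if __name__ == "__main__":
    # Assumption: karaf.log is the log currently being written, so it is always kept.
    removed = delete_old_files(LOG_DIR, ["karaf*.log"], MAX_AGE_SECONDS, keep={"karaf.log"})
    removed += delete_old_files(JSON_DIR, ["before*.json", "after*.json"], MAX_AGE_SECONDS)
    print("Deleted:", ", ".join(removed) if removed else "nothing")
```

Keep the retention window longer than the time it may take you to notice the email and collect the files; otherwise the cleanup could remove the very records you need.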

You've Got Mail!

Once you get an email indicating that an error has occurred:

- Stop all GeoEvent Inputs, Outputs, and Services, if you can, to make sorting out the logs easier (or stop the GeoEvent Windows service while you collect the files).

- Collect the files from the following locations. You are looking for files that contain data from just before and just after the timestamp on the email.

    - C:\Program Files\ArcGIS\Server\GeoEvent\data\log\karaf#.log

    - <Your JSON Output File Directory>\before#.json

    - <Your JSON Output File Directory>\after#.json

- Collect the ArcGIS Server logs for the same time period as well.

- If the failing record contains any identifiable information, use it to locate the event in the files above.

- If not, you may have to work through the timestamps until you find the exact time the event passed through the system.

- See RJ's blogs mentioned at the top of this page for tips and tricks on analyzing the log files.
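
Because file-json-out-before and file-json-out-after bracket the final filter, one way to read the two sets of files is: if an after#.json file contains an event with a missing session_id, the filter let it through; if the after files look clean for that time window, the value was most likely lost later, between the output and the feature layer, which is what the TRACE logs help trace. The sketch below scans the files for such events. It assumes each file holds either a single JSON document (an array of events or one event object) or one JSON object per line, with attribute names such as session_id as top-level keys; check a sample of your own output files and adjust load_events if the layout differs. The output directory is hypothetical.

```python
import json
from pathlib import Path


def load_events(path):
    """Return the event dictionaries stored in one JSON output file.

    Assumption: the file holds either a single JSON document (an array of
    events or one event object) or one JSON object per line.
    """
    text = Path(path).read_text(encoding="utf-8")
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        # Fall back to newline-delimited JSON (one event object per line).
        return [json.loads(line) for line in text.splitlines() if line.strip()]
    return data if isinstance(data, list) else [data]


def find_events(directory, prefix, predicate):
    """Yield (file name, event) for every event in <prefix>*.json that matches."""
    for path in sorted(Path(directory).glob(f"{prefix}*.json")):
        for event in load_events(path):
            if predicate(event):
                yield path.name, event


def missing_session_id(event):
    """Treat an absent key, JSON null, or an empty string as 'no session_id'."""
    return event.get("session_id") in (None, "")


if __name__ == "__main__":
    out_dir = Path(r"D:\geoevent-json-out")  # hypothetical JSON output directory
    for prefix in ("before", "after"):
        for name, event in find_events(out_dir, prefix, missing_session_id):
            print(f"{name}: {event}")
```

To locate a specific event instead, pass a different predicate, for example lambda e: e.get("track_id") == "ABC123", where track_id and ABC123 are hypothetical placeholders for whatever identifier your failing record carries.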

