Update polygon with the average value of the points that are within it?

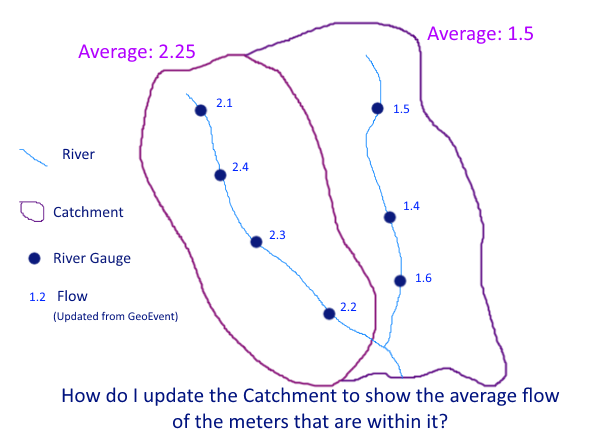

- I have a static polygon (catchments) layer.

- I have a number of points (river gauges) that have a fixed location, but GeoEvent is updating their flow values every few minutes via an 'Update a feature' output connector.

- Many river gauges can fall within each catchment.

- Each catchment has a unique ID.

- Each river gauge has a unique ID.

- The river gauge has no attribute telling me which catchment it falls within.

I want to update an 'Average Flow' attribute in the catchment layer to reflect the current average reading of the river gauges that fall within it.

I am sure this should be a simple one to solve, but would like to do this as elegantly as possible.

- I assume that I need to import the catchment layer as a geofence (a one-off import, as it never changes).

- Should I be making use of either an incident detector or a spatial processor to find out the underlying gauges within each catchment?

- A field calculator to calculate the average value for these values for each catchment

- An update a feature output connector to send the update to the catchments layer

The bit I am missing is how to summarise the information. How can I turn the 4 events in the left catchment (in the figure) into one average value to send on to the catchment layer, and likewise the 3 events in the right catchment?

RJ Sunderman, care to put me out of my misery? How would you achieve this?

Accepted Solutions

Hey,

GeoEvent is not designed to share information across multiple events. There are a few processors out there that might cache a previous event (enters, exits, event joiner, etc.) but these are usually the exception to the norm.

Rather than using GeoEvent to aggregate your records, I would suggest using the platform that is storing your data:

- RDBMS - If you are putting the data into a relational database, create a SQL View to join and aggregate your data.

- ArcGIS Online - In ArcGIS Online you can take advantage of the Join Features functionality to create a join between two tables (e.g. county polygons and your Survey123 data table) that will update with the data. See the following blog for a discussion and example.

Visualizing related data with Join Features in ArcGIS Online
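
As a rough illustration of the RDBMS option, the sketch below uses Python's built-in `sqlite3` module as a stand-in for whatever database actually holds the feature tables. The table and column names (`catchments`, `gauges`, `catchment_id`, `flow`, `avg_flow`) are assumptions made up for the example, and it presumes each gauge row already carries its catchment ID (for example, from a one-off spatial join). A spatially enabled database could join on geometry inside the view instead.

```python
import sqlite3

# In-memory SQLite database standing in for the real RDBMS (assumption).
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE catchments (catchment_id TEXT PRIMARY KEY, name TEXT);
CREATE TABLE gauges (
    gauge_id     TEXT PRIMARY KEY,
    catchment_id TEXT REFERENCES catchments(catchment_id),
    flow         REAL
);
-- The view joins gauges to catchments and aggregates on every read,
-- so it always reflects the latest flow values written to 'gauges'.
CREATE VIEW catchment_avg_flow AS
SELECT c.catchment_id,
       c.name,
       AVG(g.flow)       AS avg_flow,
       COUNT(g.gauge_id) AS gauge_count
FROM catchments AS c
LEFT JOIN gauges AS g ON g.catchment_id = c.catchment_id
GROUP BY c.catchment_id, c.name;
""")

# Hypothetical sample data: 4 gauges in the left catchment, 3 in the right.
conn.executemany("INSERT INTO catchments VALUES (?, ?)",
                 [("C1", "Left"), ("C2", "Right")])
conn.executemany("INSERT INTO gauges VALUES (?, ?, ?)", [
    ("G1", "C1", 10.0), ("G2", "C1", 20.0), ("G3", "C1", 30.0), ("G4", "C1", 40.0),
    ("G5", "C2", 5.0), ("G6", "C2", 10.0), ("G7", "C2", 15.0),
])


def average_flows(connection):
    """Return {catchment_id: avg_flow} as currently reported by the view."""
    rows = connection.execute(
        "SELECT catchment_id, avg_flow FROM catchment_avg_flow ORDER BY catchment_id"
    )
    return dict(rows.fetchall())
```

With this arrangement GeoEvent only keeps updating the gauge rows; the averaging happens whenever the view is queried, so nothing needs to be pushed back to the catchment layer.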

I have this same kind of problem right now, working at a state EMA in COVID-19 support.

In ArcGIS Online I have a Survey123 for ArcGIS generated Feature Service that collects (and updates) cost numbers in two different fields, as well as a "County" field. There are many entries for each county. I need to sum the data into a County (polygon) Feature Service, with a summed $ for the two fields, for each county.

The end result will be an Operations Dashboard for ArcGIS showing (among other elements) a map of counties colored by one of the two summed costs.

Am I missing something basic in GeoEvent that will complete this operation? I would imagine a Dissolve (by county) with a sum for both fields would do the trick, but there isn’t a dissolve processor. I am imagining that it might be done through a Regular Expression Calculation, which I don't know where to start with.

Thank you for any help,

--Adam

I agree with Eric Ironside: the problem described isn't a good fit for GeoEvent Server because every event record is generally considered atomic. I can't compute a sum or average value across several events without developing a custom processor to collect event records over a period of time and periodically dump a cache to compute a statistic.

Taking a closer look at Simon Jackson's initial ideas, we could import the catchment polygons as geofences and then ingest and process the river gauge point features to get the name of the catchment AOI the points fall within (using a GeoTagger). But that only sets us up for some sort of post-processing operation external to GeoEvent Server; we've only used GeoEvent Server to enrich a feature record set of river gauges with an attribute identifying a catchment. Assuming neither the location nor the geometry of the catchment polygons / river gauge points changes frequently, this becomes an infrequent batch operation, not a recurring real-time operation.
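
To make that "infrequent batch operation" concrete, here is a minimal Python sketch that tags each gauge with the catchment it falls inside, using a plain ray-casting point-in-polygon test. It is not GeoEvent or GeoTagger code; the function names and the simple data layout (coordinate tuples, one outer ring per catchment, no holes) are assumptions for illustration only.

```python
def point_in_polygon(x, y, ring):
    """Ray-casting test: True if (x, y) lies inside the polygon ring.

    'ring' is a list of (x, y) vertices; it is closed implicitly.
    Points exactly on an edge are not handled specially.
    """
    inside = False
    n = len(ring)
    for i in range(n):
        x1, y1 = ring[i]
        x2, y2 = ring[(i + 1) % n]
        # Does this edge straddle the horizontal line through y?
        if (y1 > y) != (y2 > y):
            # x coordinate where the edge crosses that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def tag_gauges(gauges, catchments):
    """Map each gauge ID to the first catchment ID containing it (or None).

    gauges:     {gauge_id: (x, y)}
    catchments: {catchment_id: [(x, y), ...]}
    """
    tags = {}
    for gauge_id, (x, y) in gauges.items():
        tags[gauge_id] = next(
            (cid for cid, ring in catchments.items()
             if point_in_polygon(x, y, ring)),
            None,
        )
    return tags
```

Because the gauges and catchments are static, the resulting gauge-to-catchment mapping only needs to be recomputed when either layer is edited.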

Turning the problem inside out, we could import the river gauge points as geofences, then ingest and process the catchment polygons. A GeoTagger could be used to enrich each polygon with a comma-separated list of sensor identifiers. But again, we only get identifying names from GeoTagger – if we split the delimited list in order to use a Field Enricher to pull actual flow rates into each record, we're no better off than having ingested the point records in the first place ... we cannot rejoin the split delimited list to obtain metrics from several event records to compute an average.

I suppose we could use a Field Calculator to append each river gauge's value to the gauge's name and use a stream service to broadcast these so that a geofence synchronization rule could actively update a set of geofences whose name provides both a sensorID and an observedValue ... then, as part of a different GeoEvent Service, ingest and process the catchment polygons using a GeoTagger to collect all the "enhanced" geofence names into a comma-delimited list. A regular expression, like Adam Repsher suggests, could then (maybe) pull apart the delimited list, extract each sensor's observed value from the "geofence name" and compute an average ...

But this is hardly the elegant solution Simon is looking for. It's forced, brutish, and fragile as we now have two independent GeoEvent Services polling catchments and river gauges, updating geofences, and presenting us with inherent race conditions.
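
For readers wondering what the final step of that workaround would involve, the Python sketch below pulls values out of a delimited list of "enhanced" geofence names and averages them. The `sensorID:observedValue` naming format is purely an assumption (the thread does not define one), and inside GeoEvent Server this logic would have to be expressed with its own processors rather than Python.

```python
import re

# Assumed name format: "<sensorID>:<observedValue>", joined by commas,
# e.g. "G1:10.0,G2:20.0". This format is hypothetical.
PAIR = re.compile(r"(?P<sensor>[^,:]+):(?P<value>-?\d+(?:\.\d+)?)")


def average_from_tagged_names(tagged):
    """Average the observed values embedded in a delimited name list.

    Returns None when no 'sensor:value' pair can be found.
    """
    values = [float(m.group("value")) for m in PAIR.finditer(tagged)]
    if not values:
        return None
    return sum(values) / len(values)
```

Even in this simplified form the parsing depends entirely on the naming convention staying intact, which is part of why the approach is fragile.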

If you really wanted to do this using GeoEvent Server, you would want to develop a custom processor that incorporated a timer. The processor would catch and hold a series of GeoTagged points as long as its timer had not expired. As the processor was collecting data, it could enter metrics from each river gauge into some sort of key/value structure in memory using the catchment identifier as the key. The processor's timer would reset as new gauge event records are received and its in-memory data structure updated. If the timer ever expired ... say, 30 seconds after not seeing any new data ... it would compute averages for all the catchments it had key values for and write out updates to the catchment feature records.
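
The collect-then-flush logic described above can be sketched in a few lines of Python. This is only an illustration of the idea, not GeoEvent SDK code: a real processor would be written in Java against the SDK, and the class name, method names, and polling approach here are all assumptions. The clock is injectable so the quiet-period behaviour can be exercised without waiting.

```python
import time
from statistics import mean


class QuietPeriodAverager:
    """Cache gauge readings per catchment; flush averages after a quiet period."""

    def __init__(self, quiet_seconds=30.0, clock=time.monotonic):
        self.quiet_seconds = quiet_seconds
        self.clock = clock
        self.latest = {}       # catchment_id -> {gauge_id: latest flow}
        self.last_seen = None  # time of the most recent event, if any

    def on_event(self, catchment_id, gauge_id, flow):
        """Record a GeoTagged gauge reading and reset the quiet-period timer."""
        self.latest.setdefault(catchment_id, {})[gauge_id] = flow
        self.last_seen = self.clock()

    def poll(self):
        """Return {catchment_id: average} once the quiet period has elapsed.

        Returns None while data is still arriving or nothing is cached.
        The cache is emptied after a flush.
        """
        if self.last_seen is None:
            return None
        if self.clock() - self.last_seen < self.quiet_seconds:
            return None
        averages = {cid: mean(flows.values())
                    for cid, flows in self.latest.items()}
        self.latest.clear()
        self.last_seen = None
        return averages
```

In use, something would call `poll()` on a schedule and, whenever it returns a dictionary, write those averages to the catchment feature records. Keeping only the latest reading per gauge means a gauge that reports twice within one window does not count double.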

The burden here is that you have to use the GeoEvent Server's Java SDK to create a custom processor. I don't see anything elegant you can do with GeoEvent Server out-of-the-box to solve this problem.

Hope this information is helpful –

RJ