
Code Free Web Integration, With a Touch of ArcPy


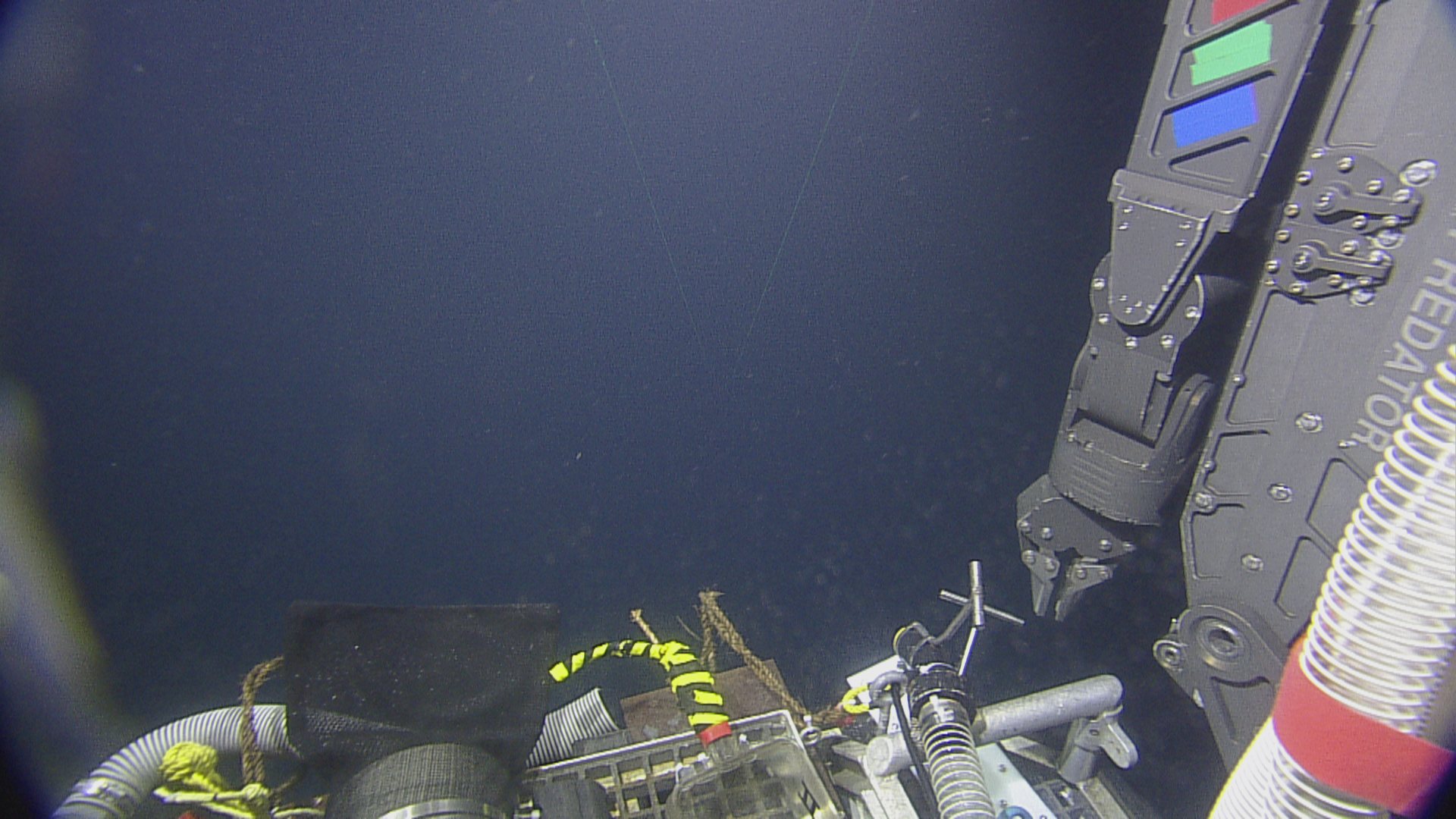

We're going on a journey to the bottom of the sea, but the real message here is the ability of ArcGIS Data Interoperability to reach out to the web (or anywhere) and get feature and media data into a geodatabase feature class with attachments, without having to code. Well, just a tiny bit, but you don't have to sweat the details like a coder.

A colleague came to me asking whether ArcGIS Data Interoperability could bring together CSV position and time data from a submersible's missions with related media content, and get it all into a geodatabase. In production, all data sources will be on the web. No problem. Data Interoperability isn't just about formats and transformations; it is also about integrations, and building them without coding.

Python comes into the picture as a final step that avoids a lot of tricky ETL work. The combination of an ETL tool and a little ArcPy is a huge productivity multiplier for all you interoperators out there. Explore the post download to see how the CSV and media sources are brought together (very simply). Below is the whole 'program':

ArcGIS Data Interoperability has a great 'selling point': you can avoid coded workflows and use the visual programming paradigm of Workbench to get data from A to B, in the shape you want. I often show colleagues how to solve integration problems efficiently with Data Interoperability, and it's always pleasing to see them 'get it' that challenges don't have to be tackled with code.

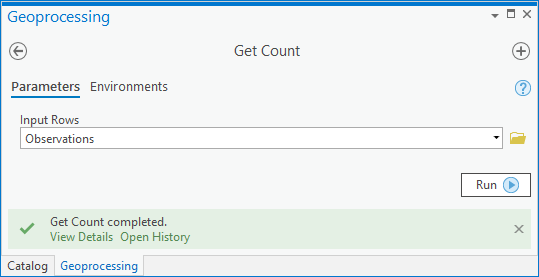

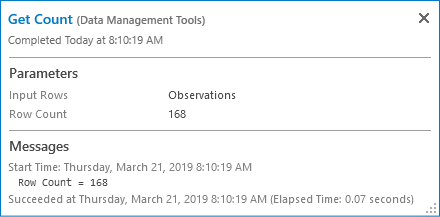

Low-level coding is the thing we're avoiding. ArcGIS geoprocessing tools are also accessible as Python functions; calling a tool this way is just a command-line invocation of what you already have in the Geoprocessing pane, the Analysis ribbon tools gallery and so on. If this is news to you, run the Get Count tool from the system toolbox first, then use the result in the Python window.

Here is the tool experience:

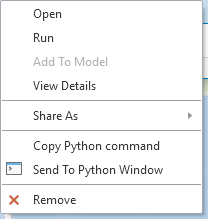

Now, in the Catalog pane History view, right-click the result and send it to the Python window:

You'll see the Python expression equivalent of the tool:

Note I haven't written any code...
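The exact expression depends on the dataset you ran the tool on, so it isn't repeated here. For reference, a hedged sketch of the same tool called from Python is below; `arcpy.management.GetCount` returns a Result object whose first output is the row count as a string. The wrapper function name is hypothetical, added only so the call can be reused.

```python
def get_row_count(in_rows):
    """Return the number of rows in a table or feature class using the Get Count tool."""
    import arcpy  # available in the ArcGIS Pro Python environment

    # Same tool as Data Management Tools > Get Count in the Geoprocessing pane.
    result = arcpy.management.GetCount(in_rows)
    # The first output of the Result object is the count, returned as a string.
    return int(result[0])
```

In the Python window you would call something like `get_row_count(r"C:\Data\Dives.gdb\DivePositions")`, where the path is purely illustrative.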

Where am I going with this? ArcGIS Data Interoperability concepts differ a little from core geoprocessing in that input and output parameters tend to be parent workspaces, not the feature types or tables within them. For example, when you write to a geodatabase, the output parameter of the ETL tool is the geodatabase itself, not its feature classes and tables, although these are configured inside the ETL tool.

What if you need to do something before, during, or after your ETL process for which there is a powerful ArcGIS geoprocessing tool available but which would be really hard to do in Workbench?

You use high-level ArcGIS Python functions (geoprocessing tools) to do this work in Workbench.

I'll give a simple, powerful example momentarily, but first some Workbench Python tips.

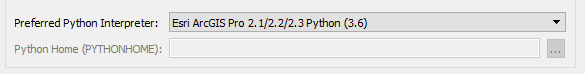

Workbench lets you configure your Python environment. To avoid any clash with Pro's package management, just go with the default and use these settings:

In Tools > FME Options > Translation, check that Pro's Python environment is preferred:

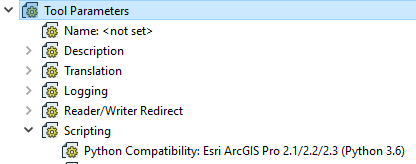

In your workspace, check that Python Compatibility is set to use this preference.

Now you know the ArcGIS Python environment can be used.
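If you'd like to confirm this from inside a workspace, a minimal sketch like the one below could be pasted into Tool Parameters > Scripting > Startup Python Script. It only reports which interpreter is running and whether `arcpy` imports; the placement is a suggestion rather than part of the author's setup, and the printed lines should show up in the translation log.

```python
import sys


def arcpy_available():
    """Return True if the current interpreter can import (and initialize) arcpy."""
    try:
        import arcpy  # noqa: F401  (imported only to prove it loads)
    except (ImportError, RuntimeError):
        # ImportError: not the Pro environment; RuntimeError: arcpy found but not licensed.
        return False
    return True


# Report the interpreter in use and whether ArcPy is reachable from it.
print("Python:", sys.executable, sys.version.split()[0])
print("arcpy importable:", arcpy_available())
```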

For my use case I'll provide a real example (attached below) where I need to create and load geodatabase attachments. We cannot do this entirely in Workbench (except in the way I'll show you) because Workbench cannot create the attachments relationship class. You could create it manually before loading data, but you would still have to turn images into blob data, manage linking identifiers and other things that make your head hurt, so let's use ArcPy. Manual steps also rule out creating new geodatabases with the ETL tool, which I want to support.

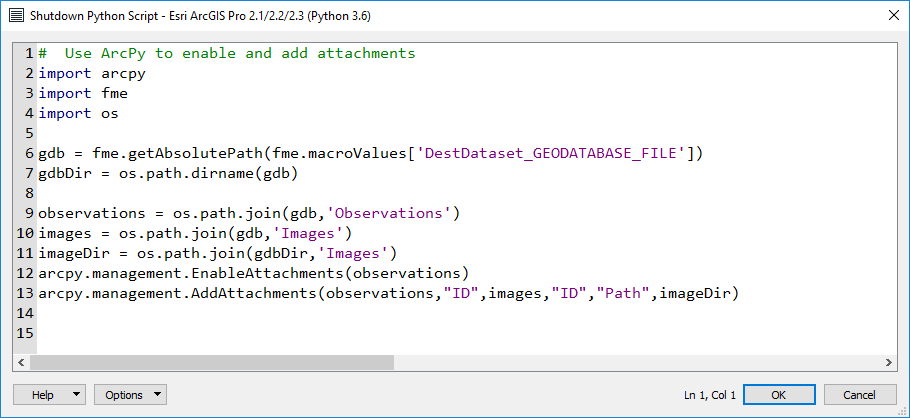

The example workspace writes a feature class destined to have attachments, and a table that can be used to load them. You can explore the processing yourself, but the key element to inspect is the shutdown script that runs after completion; see Tool Parameters > Scripting > Shutdown Python Script.

Here is the embedded script:

This isn't a lot of code: a few imports, access to the dictionary available in every Workbench session to get the output path values used at run-time, then just two lines calling geoprocessing tools as functions to load the attachments.
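The embedded script isn't reproduced in this text, so here is a hedged sketch of a shutdown script with the same shape, not the author's code. It assumes the two geoprocessing calls are Enable Attachments (which creates the attachment table and relationship class) and Add Attachments (which loads files listed in a match table), and that `fme.macroValues` holds the output geodatabase path. The macro name `DestDataset_GEODATABASE_FILE`, the dataset names and the field names are all hypothetical; check your own published parameter names in the Navigator.

```python
import os

# Hypothetical names: adjust to match your workspace.
GDB_MACRO = "DestDataset_GEODATABASE_FILE"  # published output geodatabase parameter
FEATURE_CLASS = "Dives"                      # feature class that receives attachments
MATCH_TABLE = "DiveMedia"                    # table written by the workspace
KEY_FIELD = "DiveID"                         # key shared by feature class and table
PATH_FIELD = "MediaPath"                     # full path to each downloaded media file


def output_paths(macro_values):
    """Build full paths to the written feature class and match table."""
    gdb = macro_values[GDB_MACRO]
    return os.path.join(gdb, FEATURE_CLASS), os.path.join(gdb, MATCH_TABLE)


def load_attachments(fc, match_table):
    """Enable attachments on the feature class, then load the files in the match table."""
    import arcpy  # resolved from the ArcGIS Pro Python environment

    arcpy.management.EnableAttachments(fc)
    arcpy.management.AddAttachments(fc, KEY_FIELD, match_table, KEY_FIELD, PATH_FIELD)


try:
    import fme  # only importable inside a Workbench session
except ImportError:
    fme = None

# fme.status is True only when the translation succeeded.
if fme is not None and fme.status:
    load_attachments(*output_paths(fme.macroValues))
```

Keeping the paths and the ArcPy calls in small functions means the geoprocessing step only runs after a successful translation, and the path logic can be checked outside Workbench.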

This is a great way to bring ArcGIS' powerful Python environment into ETL tools. Sharp-eyed readers will also notice a scripted parameter in the workspace. It doesn't use ArcPy, so I can't claim it as avoiding low-level coding; it is just a way to flexibly create a folder for downloading images at run-time. There are a number of other ways to use Python in Workbench, but covering them would detract from my message here: you can start with the simple and powerful one, using ArcGIS geoprocessing where it saves work. Enjoy!
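As an illustration of the kind of logic such a scripted parameter might hold (a sketch under assumptions, not the script in the download), the function below creates a fresh, uniquely named folder and returns its path. The function name, the `dive_media_` prefix and the default of the system temp directory are hypothetical choices.

```python
import os
import tempfile


def make_download_folder(base_dir=None, prefix="dive_media_"):
    """Create a new, empty, uniquely named folder for downloaded images and return its path."""
    # Fall back to the system temp directory when no base folder is supplied.
    base_dir = base_dir or tempfile.gettempdir()
    os.makedirs(base_dir, exist_ok=True)
    # mkdtemp creates the folder atomically, so concurrent runs never share one.
    return tempfile.mkdtemp(prefix=prefix, dir=base_dir)
```

A scripted parameter takes the value its script returns, so the script would end by returning `make_download_folder()`, and the transformers that save downloaded images can then reference that parameter as their output folder.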
