Debugging the Add a Feature / Update a Feature Output Connectors

This blog has been updated as part of a new series describing debugging techniques you can use when working to identify the root cause of an issue with a GeoEvent Server deployment or configuration. The original blog's text is included below; however, please consider the new blogs, which can be accessed by clicking any of the quick links below:

- Debug Techniques - Configuring the application logger

- Debug Techniques - Add/Update Feature Outputs

- Debug Techniques - Application logging tips and tricks

- Debug Techniques - Geofence synchronization deep dive

How to debug the Add a Feature and Update a Feature Output Connectors is probably the question I have been asked the most over the last couple of years working on the GeoEvent Extension development team, so it’s appropriate that my inaugural blog on GeoNet addresses it.

The scenario:

- An input appears to be successfully receiving and adapting the data from the stream and creating GeoEvents.

- The filters and/or processors incorporated in a GeoEvent Service are handling the GeoEvents as expected.

- The event count on an Add a Feature or Update a Feature Output Connector is incrementing, but no features are being added or updated in the targeted feature layer.

So, how do you start debugging to determine what the problem might be?

My advice is to enable DEBUG logging on the feature service outbound transport to see if we can capture the JSON request being sent to ArcGIS Server and the response GeoEvent Extension receives from ArcGIS Server.

I’ve attached an image below (FeatureServiceUpdate.png) of a karaf.log I created a while ago, which shows the transactions taking place when an Output Connector performs an HTTP POST to update features in a feature service. Don’t be concerned that the illustration identifies the 10.2.2 version; the concepts and workflows are the same for the GeoEvent 10.3 and 10.4 product releases when using a traditional RDBMS.

To enable DEBUG logging on a single component in GeoEvent Manager:

- Navigate to the Logs page and click the Settings button.

- Enter the name of the logging component in the Logger text field and select the DEBUG log level.

- Click Save.

In this case you want to log DEBUG messages for the com.esri.ges.transport.featureService.FeatureServiceOutboundTransport component only. Setting a logging level of DEBUG on the ROOT component is not recommended. Doing this will produce a very verbose set of log messages and can cause the Logs page in the GeoEvent Manager to 'hang' as it tries to refresh the rapidly updating logs.

With DEBUG logging enabled for the specified component, the GeoEvent Extension will produce more detailed logs when the feature service outbound transport handles event data. The DEBUG logging statements will include the JSON being sent and the ArcGIS Server’s response. I prefer looking at the log in a text editor, rather than using the log manager in GeoEvent Manager. You can find the karaf.log in the default folder C:\Program Files\ArcGIS\Server\GeoEventProcessor\data\log.
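If you would rather script the search than scroll through the file, a minimal Python sketch along these lines can pull out just the relevant messages. It assumes each log line contains the level `DEBUG` and the short class name `FeatureServiceOutboundTransport` somewhere in its text; the function names are hypothetical, and you may need to adjust the match if your log layout differs.

```python
from pathlib import Path

# Short class name of the component named in the text above.
COMPONENT = "FeatureServiceOutboundTransport"


def debug_lines(lines, component=COMPONENT):
    """Yield only the DEBUG lines that mention the given component.

    Assumption: the level and the component name both appear as plain
    text on each log line.
    """
    for line in lines:
        if "DEBUG" in line and component in line:
            yield line.rstrip("\n")


def read_debug_lines(log_path):
    """Read a karaf.log file and return the matching lines as a list."""
    with Path(log_path).open(encoding="utf-8", errors="replace") as handle:
        return list(debug_lines(handle))
```

Calling `read_debug_lines()` with the full path to your karaf.log returns the matching lines, which you can then print or write to a smaller file.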

In the attached FeatureServiceUpdate.png, find the two messages time stamped 2014-03-28 14:06:09,074. The “querying for missing track id XXXX” messages indicate the GeoEvent Extension has discovered that it has not cached any information on features with the TRACK_ID “SWA1568” or “SWA510”. The third message in the series shows the SQL WHERE clause used to query the …/FeatureServer/0/query REST endpoint to discover the necessary OBJECTID values.
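To check by hand whether the feature service can find those features, you can send a similar query yourself. The sketch below only builds the query parameters. The `IN (...)` form of the WHERE clause is my assumption for illustration (the exact clause GeoEvent sends is the one shown in your own log), and `build_track_query` is a hypothetical helper. The `where`, `outFields`, and `f` parameters belong to the feature layer query operation.

```python
from urllib.parse import urlencode


def build_track_query(id_field, track_ids):
    """Return query parameters that look up features by their track ids.

    Assumption: an IN (...) clause on the unique identifier field; the
    clause GeoEvent actually sends may be written differently.
    """
    # Double any single quotes so the ids are safe inside SQL string literals.
    quoted = ", ".join("'" + tid.replace("'", "''") + "'" for tid in track_ids)
    return {
        "where": f"{id_field} IN ({quoted})",
        "outFields": f"OBJECTID,{id_field}",
        "f": "json",
    }


params = build_track_query("flightNumber", ["SWA1568", "SWA510"])
# URL-encoded string to append after "?" on your layer's query endpoint.
print(urlencode(params))
```

Paste the encoded string after the `?` on your own layer's …/FeatureServer/0/query endpoint in a browser and compare what comes back with what the log shows.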

The response from ArcGIS Server includes a JSON array features[ ] with the geometry, OBJECTID, and unique identifier field (flightNumber in this example) for the features with the flight identifiers “SWA1568” and “SWA510”. Notice that it took 176 milliseconds for the GeoEvent Extension to receive the ArcGIS Server response:

- 14:06:09,250 - 14:06:09,074 = 176 ms

You might find this query/response latency information valuable when profiling / debugging your GeoEvent Services which are adding or updating features through a feature service.
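If you are comparing many request/response pairs, the subtraction is easy to script. This is a small sketch that assumes the timestamp layout seen in the log excerpt (date, time, then a comma and milliseconds); `elapsed_ms` is a hypothetical helper name.

```python
from datetime import datetime, timedelta

# Timestamp layout assumed from the log excerpt, e.g. "2014-03-28 14:06:09,074".
LOG_TIME_FORMAT = "%Y-%m-%d %H:%M:%S,%f"


def elapsed_ms(start, end):
    """Return the whole milliseconds between two karaf.log timestamps."""
    started = datetime.strptime(start, LOG_TIME_FORMAT)
    ended = datetime.strptime(end, LOG_TIME_FORMAT)
    # Integer division by a 1 ms timedelta avoids floating-point rounding.
    return (ended - started) // timedelta(milliseconds=1)


# Query sent at ...09,074 and response logged at ...09,250.
print(elapsed_ms("2014-03-28 14:06:09,074", "2014-03-28 14:06:09,250"))
```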

Once the GeoEvent Extension has the necessary OBJECTID values for the features it wants to update, it posts a block of JSON to the …/FeatureServer/0/updateFeatures REST endpoint. ArcGIS Server responds with “success”:true 325 milliseconds later:

(577 - 252 = 325).
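When the response does not say “success”:true, it helps to pick out exactly which features were rejected. The sketch below assumes you have copied the response JSON out of the log as a string and that it carries an `updateResults` array with one `success` flag per feature. The sample response, including its error code and description, is made up for illustration, and `failed_updates` is a hypothetical helper.

```python
import json


def failed_updates(response_text):
    """Return the entries in updateResults whose success flag is not true."""
    payload = json.loads(response_text)
    return [
        result
        for result in payload.get("updateResults", [])
        if not result.get("success")
    ]


# Made-up response for illustration; real responses come from your own log.
sample = """
{"updateResults": [
    {"objectId": 101, "success": true},
    {"objectId": 102, "success": false,
     "error": {"code": 1000, "description": "example failure"}}
]}
"""
for result in failed_updates(sample):
    print(result["objectId"], result.get("error"))
```

An empty list means every feature in that transaction was accepted, so the problem lies elsewhere.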

If you find too many JSON event records being included in the transactions, making the log file difficult to read, you can try configuring your Update a Feature Output Connector so that ‘Maximum Features Per Transaction’ is limited to 1 (the default is 500). This is obviously not something you would do in a production environment, but while debugging it can make the log file much easier to read.

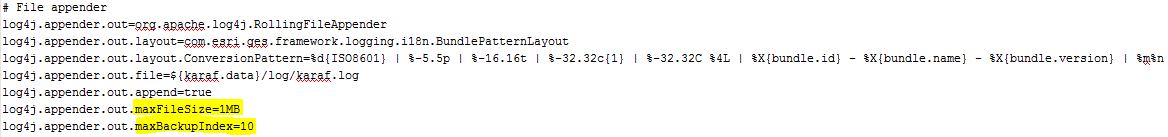

If you find that the log is rolling over too frequently, you can edit settings in the following configuration file to allow the karaf.log to grow larger than 1MB and to keep more than 10 archival log files:

- ...\Program Files\ArcGIS\Server\GeoEvent\etc\org.ops4j.pax.logging.cfg


The logging package changed with the 10.6.0 release to use version 2.x of Log4J.

Settings applicable for the log4j2.appender are illustrated in the attached ops4j.pax.logging.cfg.png file.

You can also edit the message formatting specified by the layout.ConversionPattern in this configuration file to reformat the messages being written to the karaf.log - more information on that can be found here: http://www.codejava.net/coding/common-conversion-patterns-for-log4js-patternlayout

Hope this information helps –

RJ
