Table to table - field cache?

I used ArcPy to import a csv file as a table into a GDB. Fine, it worked as usual.

Then I figured I had to add some columns, so I changed the CSV file and ran the Python script again. In the result, some columns were imported incorrectly. So I retried from within ArcGIS Desktop, but got the same result.

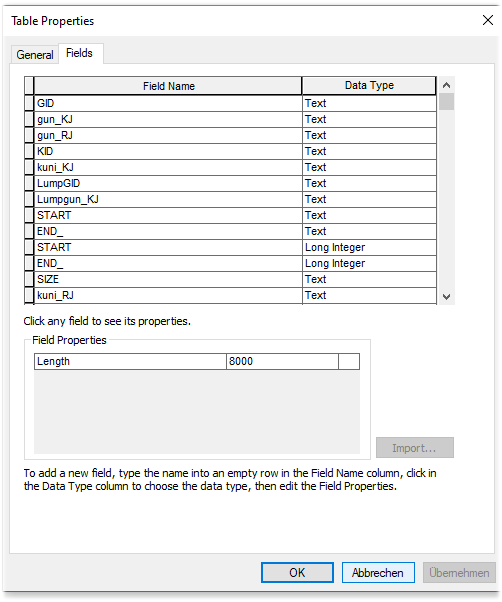

So I right-clicked the CSV in the Catalog and saw a messed-up field list that didn't correspond to the actual fields in the CSV. Two fields were even duplicated, because when running the tool the second time I had manually changed the data type of two fields.

When I rename the CSV, the fields just show up as they should.

I figure there has to be a cache for those properties, but I don't know how to reset that cache.

I am asking because just renaming won't help. My tables have specific naming conventions for iterations.

When you say you changed the data types of the fields manually, you have to ensure that the whole column contains data of that type. Any value that differs from the declared data type will change the type of the whole field.

Also, can you identify what was different in the two tables, so people don't have to scroll up and down looking for differences?
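A quick way to check that before importing is to scan the CSV outside ArcGIS. The following is only a sketch in plain Python (no arcpy). The function names `classify` and `mixed_columns` are made up for illustration, and the rule "numeric values mixed with text" is an assumption about what counts as a problem, not ArcGIS's actual type detection.

```python
import csv


def classify(value):
    """Roughly classify one cell as 'empty', 'int', 'float' or 'text'."""
    value = value.strip()
    if value == "":
        return "empty"
    try:
        int(value)
        return "int"
    except ValueError:
        pass
    try:
        float(value)
        return "float"
    except ValueError:
        return "text"


def mixed_columns(csv_path, delimiter=","):
    """Return {field: kinds} for columns that mix numeric and text values."""
    kinds = {}
    with open(csv_path, "r") as f:
        for row in csv.DictReader(f, delimiter=delimiter):
            for field, value in row.items():
                if field is None:
                    # Row has more cells than the header; ignore the extras.
                    continue
                kinds.setdefault(field, set()).add(classify(value or ""))
    numeric = {"int", "float"}
    return {field: found for field, found in kinds.items()
            if "text" in found and found & numeric}
```

Any column that shows up in the result holds at least one non-numeric value next to numeric ones, so forcing it to a numeric type is likely to go wrong.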

... sort of retired...

Yes, but that doesn't touch the issue. I mentioned that just to explain why, according to the first field table, there were two "START" and "END_" fields. The main point is: the fields in the field table of ArcCatalog do not match the fields the CSV actually has, unless I rename the .csv.

If field names contained spaces, they would be replaced by underscores.

If a CSV field name was a reserved word, it would also be changed.

Duplicate field names are not allowed.

Two tables of the same name wouldn't be permitted in a gdb.

Those are the only basic rules I can remember offhand.
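To see whether a CSV header runs into any of these rules, something like the sketch below could be run before the import. It is plain Python and only reports what it finds; it does not reproduce how ArcGIS actually renames fields. The function name `header_issues` is hypothetical, and the default reserved-word list containing only `END` is an assumption based on the "END_" field mentioned in this thread.

```python
import csv
from collections import Counter


def header_issues(csv_path, reserved=("END",), delimiter=","):
    """Return a list of (field_name, problem) tuples for a CSV header."""
    with open(csv_path, "r") as f:
        header = next(csv.reader(f, delimiter=delimiter), [])

    reserved_upper = set(word.upper() for word in reserved)
    # Count names case-insensitively, since field names differing only
    # in case are treated here as duplicates (an assumption).
    counts = Counter(name.strip().upper() for name in header)

    issues = []
    for name in header:
        clean = name.strip()
        if " " in clean:
            issues.append((name, "contains spaces"))
        if clean.upper() in reserved_upper:
            issues.append((name, "reserved word"))
    for upper_name, count in counts.items():
        if count > 1:
            issues.append((upper_name, "duplicate"))
    return issues
```

An empty list means none of the rules above were hit, so naming is probably not the cause of a wrong field list.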

... sort of retired...

"I used ArcPy to import a csv file as a table into a GDB."

What is your workflow and which tools, specifically, are you using to import the CSV into the GDB?

It's really simple: I used this code to import the CSV into my gdb:

    # Process: Table to Table
    print("1/3: Import table")
    arcpy.TableToTable_conversion(Dump_Domain_csv, the_geom_gdb, "Dump_Domain", "")

When I ran this script for the first time, Dump_Domain_csv had the structure/fields as in the first screenshot. It worked fine.

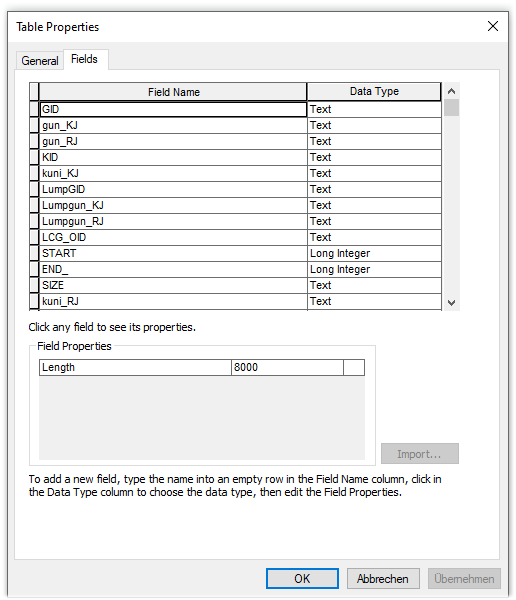

Then I changed my Dump_Domain_csv outside of ArcGIS (in Excel). Now it has the fields shown in the second screenshot.

HOWEVER, when rerunning the same Python code as above, it still applies the old fields.

Then I opened ArcCatalog to check the properties and it said it still had the original structure (screenshot 1).

Then I checked whether my CSV was somehow corrupted or messed up, but it was fine.

Then I simply renamed the csv file from Dump_Domain_csv to DumpDomain_csv and ... tada, it works and the fields show up as they should (screenshot 2).

But as I have said, my solution can't be just not to use the original file name...
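Nobody in this thread confirms where such a cache lives. One candidate worth checking, stated here as an assumption: the text-file driver ArcGIS uses can write a `schema.ini` file into the folder that holds the CSV, with one `[file name]` section of column definitions per text file. If that file exists and still has a section for the old version of the CSV, that would match the symptom that renaming the file helps. The sketch below (plain Python, hypothetical function name `drop_schema_section`) removes only the section for one CSV and leaves the rest of `schema.ini` untouched.

```python
import os


def drop_schema_section(csv_path):
    """Remove the [<csv file name>] section from a schema.ini next to csv_path.

    Returns True if a section was removed, False if there was nothing to do.
    """
    folder, name = os.path.split(os.path.abspath(csv_path))
    ini_path = os.path.join(folder, "schema.ini")
    if not os.path.isfile(ini_path):
        return False

    with open(ini_path, "r") as f:
        lines = f.readlines()

    header = "[{}]".format(name).lower()
    kept = []
    skipping = False
    removed = False
    for line in lines:
        stripped = line.strip()
        if stripped.startswith("[") and stripped.endswith("]"):
            # A new section starts; skip it only if it belongs to our CSV.
            skipping = stripped.lower() == header
            removed = removed or skipping
        if not skipping:
            kept.append(line)

    if removed:
        with open(ini_path, "w") as f:
            f.writelines(kept)
    return removed
```

Calling this on the CSV path right before `arcpy.TableToTable_conversion` would keep the original file name. It is worth backing up `schema.ini` first, since other tools may rely on it.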

I would add the following to your workflow if you are having table and field naming issues:

1) Remove the table from the table of contents, if it was added.

2) Delete the table from the gdb in catalog.

3) Refresh the gdb in catalog.

4) Save the project.
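In a script, the "delete the table from the gdb" step could look roughly like the sketch below. `arcpy.Exists`, `arcpy.Delete_management` and `arcpy.TableToTable_conversion` are existing arcpy functions; the wrapper `reimport_table` is hypothetical, and arcpy is passed in as a parameter only so the sketch can be read and exercised without an ArcGIS install. It does not cover the table-of-contents or save-project steps.

```python
import os


def reimport_table(arcpy, csv_path, gdb_path, table_name):
    """Delete an existing gdb table of the same name, then import the CSV again.

    `arcpy` is the arcpy module (or a stand-in with the same three functions).
    """
    target = os.path.join(gdb_path, table_name)
    if arcpy.Exists(target):
        # Remove the stale table so the import starts from a clean slate.
        arcpy.Delete_management(target)
    arcpy.TableToTable_conversion(csv_path, gdb_path, table_name)
    return target
```

Inside ArcGIS this would be called as `reimport_table(arcpy, Dump_Domain_csv, the_geom_gdb, "Dump_Domain")` after `import arcpy`.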

... sort of retired...

Hello

I have the same issue as Werner Stangl.

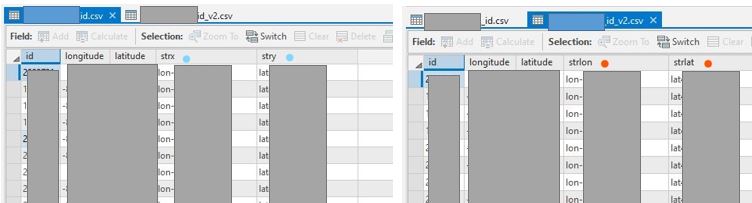

Here are the structures of my original (left) and updated (right) CSV files. There are no spaces or special characters in the field names.

I originally had strx and stry in my csv file. I deleted strx and stry since I do not use them and reimported the same csv in ArcGIS. For some reason, ArcGIS overwrites the variable names, strlon and strlat, with strx and stry (left one in the image below).

The correct names are imported only when I save the CSV file under a different name (right one in the image).

I could change the name this time, but I do not want to do that every time I update my data in the future.

I have tried what is written in this thread such as:

1) Use TableToTable_conversion

2) Delete all the csv files from ArcGIS, save the project, create the csv file again, refresh the gdb, and import the csv again.

3) (I also tried) Reboot my computer to refresh everything

Unfortunately, none of them worked, since ArcGIS imports the CSV with the wrong field names in the first place. Like Werner, I suspect this issue has to do with a cache for field names, but I am not sure.

Exactly the same issue here. ArcGIS remembers the fields of a CSV created months ago, so I gave up on using a single name and now create a very long random name on every run. B-i-i-g pain. Every script that uses a CSV is much longer now.
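For anyone stuck with this workaround, the random-name step can at least be kept to one helper. The sketch below is plain Python with a hypothetical function name `copy_with_unique_name`; it copies the CSV into a fresh temporary folder under a unique name, so the original file and the name of the output table in the gdb can stay the same.

```python
import os
import shutil
import tempfile
import uuid


def copy_with_unique_name(csv_path, work_dir=None):
    """Copy csv_path to a uniquely named file and return the new path.

    If work_dir is not given, a new temporary folder is created.
    """
    work_dir = work_dir or tempfile.mkdtemp(prefix="csv_import_")
    base = os.path.splitext(os.path.basename(csv_path))[0]
    unique_name = "{}_{}.csv".format(base, uuid.uuid4().hex[:8])
    target = os.path.join(work_dir, unique_name)
    shutil.copyfile(csv_path, target)
    return target
```

The returned path would then be used as the input of `arcpy.TableToTable_conversion`, with the output table name (for example "Dump_Domain") left unchanged. The temporary copies are not cleaned up by this sketch.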