- Why won't ArcGIS Pro symbolize a total range?

I have a hex grid (polygons) containing a Point_Count field (an output of Summarize Within).

The FC contains around 163,000 rows.

The Point_Count field contains values between 1 and 1200.

However, whenever I apply graduated symbology, the Maximum is set to 661. The statistics on the Symbology panel also incorrectly list the Maximum as 661. Even if I manually set the histogram maximum to 1200, it "snaps" back to 661. Consequently, it will not symbolize the roughly 200 polygons with values over 661.

I tested this error by doing the following:

- Created a new feature class containing the same content and loaded it from scratch (the previous layer was loaded and symbolized via script). No difference.

- Took the same data (both original and copied) and loaded it into QGIS 3.4. It picked up the correct min/max values without issue on both datasets.

My guess is that Pro is trying to be "helpful" somewhere but durned if I know how or why.

Crossposted from https://gis.stackexchange.com/questions/319517/why-is-arcgis-pro-not-symbolizing-my-entire-range-of-...

Note: no specific data is required to recreate this. Anyone can take a random cluster of points across a grid of this size, run Summarize Within, and obtain a similar output. My cells have a 50 m radius (circle inside).

Accepted Solutions

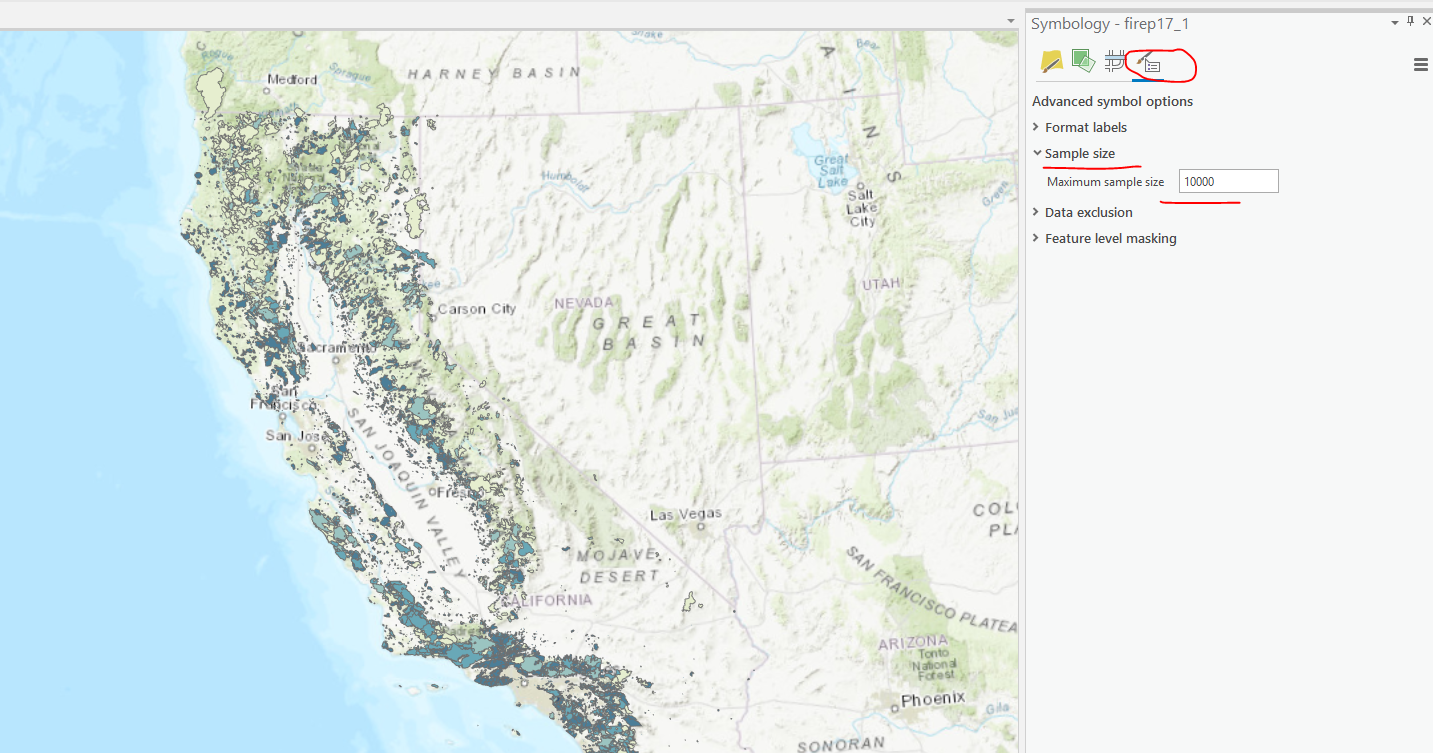

Have you tried upping the Maximum sample size?

Hey Kory, that did the trick. Because of the distribution I had to push it up to 163000 but it did work. Thanks!
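For anyone wondering why the sample size matters here: if class-break statistics are computed from a capped sample of rows rather than from the whole table, rare high values can simply be absent from the sample, and the reported Maximum comes out too low. The sketch below illustrates that effect in plain Python on synthetic data. It does not use arcpy, and everything in it is an assumption made for illustration: the `observed_max` helper is hypothetical, the counts are randomly generated stand-ins for `Point_Count`, and random sampling is used only as a stand-in for however Pro actually picks its sample.

```python
import random


def observed_max(values, max_sample_size, seed=0):
    """Return the maximum seen when only a capped sample of rows is inspected.

    Hypothetical helper: models a statistics pass that looks at no more than
    max_sample_size rows. Random sampling is an assumption, not Pro's method.
    """
    if max_sample_size >= len(values):
        # Sample covers every row, so the true maximum is found.
        return max(values)
    rng = random.Random(seed)
    return max(rng.sample(values, max_sample_size))


# Synthetic stand-in for Point_Count: 163,000 cells, 200 of them above 661.
rng = random.Random(42)
counts = [rng.randint(1, 661) for _ in range(162_800)]
counts += [rng.randint(662, 1200) for _ in range(200)]
counts[-1] = 1200  # make sure the true maximum is exactly 1200
rng.shuffle(counts)

print("true max:             ", max(counts))
print("max from 10,000 rows: ", observed_max(counts, 10_000))
print("max from all rows:    ", observed_max(counts, len(counts)))
```

A capped sample can never report more than the true maximum and will usually report less when the high values are rare. Raising the cap to the full row count, as was done above with 163000, is the only setting that guarantees the real maximum is seen.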

Great. You might want to go over here and vote up if you haven't: https://community.esri.com/ideas/14414-no-sample-sized-reached-error-in-arcgis-pro