
edit_features null values becoming 0s


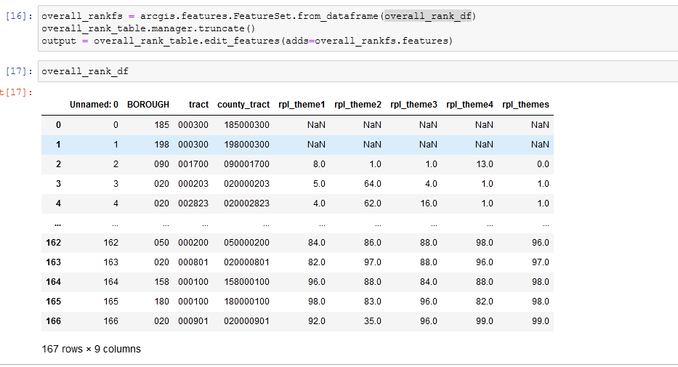

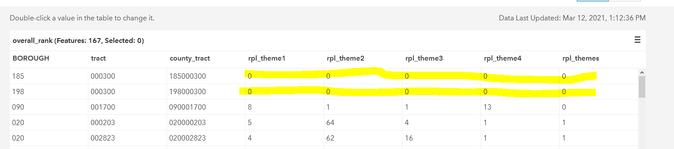

I am trying to use edit_features to update a hosted table with data from a pandas DataFrame. The process runs without error, but the null values in the DataFrame are automatically converted to 0s in the table, as shown in the screenshots. Is there a way to prevent this? A 0 in these fields has an inherent meaning, so these values should be missing, not 0.

Thanks!


Strange, but the second table looks like the values are a text representation of integers (left-justified, with the floats converted to integers).

NaN only applies to floats; there is no NaN for integers. The normal procedure is to assign an integer that can't exist in the data set (the most negative or most positive representable integer is often used).
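A minimal sketch of the sentinel idea in plain Python, assuming the target is a 32-bit signed integer field (so -2**31 is the most negative value it can hold). `to_int_with_sentinel` is a hypothetical helper, not part of any ArcGIS API. It also shows why a stand-in is needed: in plain Python, converting a float NaN with `int()` raises `ValueError` instead of producing a missing value.

```python
import math

# Assumption: the field is a 32-bit signed integer, so -2**31 is its minimum.
# Pick any value your data can never contain.
INT32_MIN = -(2**31)


def to_int_with_sentinel(value, sentinel=INT32_MIN):
    """Convert a float to int, substituting a sentinel for NaN or None."""
    if value is None or (isinstance(value, float) and math.isnan(value)):
        return sentinel
    return int(value)


# NaN is a float-only concept; there is no integer NaN to convert it to.
try:
    int(float("nan"))
except ValueError as exc:
    print("no integer NaN:", exc)

column = [12.0, float("nan"), 0.0, 7.0]
print([to_int_with_sentinel(v) for v in column])  # [12, -2147483648, 0, 7]
```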

So, in short, if a conversion to integer was done (inadvertently or not), 0 is the substitute in the absence of other rules.

... sort of retired...


This is the output I am trying to get. When I append data manually using a CSV file, I am able to get the nulls. These values are stored as integers in the hosted table.


As indicated, there is no "null" for integers; they are converted to 0 by default. So my suggestion stands: use a value that can't possibly exist in your data set (e.g. -9999999 or whatever), then deal with the null replicants as you see fit.
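A small round-trip sketch of the -9999999 suggestion: encode missing values as the sentinel before storing them as integers, then decode the sentinel back to `None` when reading the data. The `encode`/`decode` helpers and the `NULL_SENTINEL` name are hypothetical, and the sketch assumes -9999999 never occurs as a real value.

```python
import math

# Hypothetical sentinel; it must never occur in the real data.
NULL_SENTINEL = -9999999


def encode(values):
    """Replace NaN/None with the sentinel so the column can be stored as integers."""
    return [
        NULL_SENTINEL if v is None or (isinstance(v, float) and math.isnan(v)) else int(v)
        for v in values
    ]


def decode(values):
    """Turn sentinels back into None (missing) after reading the data back."""
    return [None if v == NULL_SENTINEL else v for v in values]


stored = encode([3.0, float("nan"), 0.0])
print(stored)          # [3, -9999999, 0]
print(decode(stored))  # [3, None, 0]
```

The catch is that anything consuming the stored table (a map renderer, for example) sees -9999999 as an ordinary number unless it is told to treat it specially.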


How, then, does the current hosted table have nulls in integer fields?


They are handled internally (masking, if you will), just like they are in ArcGIS Pro. There are real NaNs for floats, just not for integers. Your first table has the columns as floats in the DataFrame, hence the NaNs; your second table has integers, hence no NaNs (there is no NaInt, "not an integer").

So your options are pretty much: make sure the values are floats on transfer, deal with the zeros, or exclude the records that are NaN.
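The three options can be sketched on a toy list of row dicts in plain Python. The field names `tract` and `count` are hypothetical, and a pandas DataFrame would need the equivalent column operations rather than these list comprehensions.

```python
import math


def is_missing(v):
    """True for None or a float NaN."""
    return v is None or (isinstance(v, float) and math.isnan(v))


rows = [
    {"tract": "A", "count": 5.0},
    {"tract": "B", "count": float("nan")},
    {"tract": "C", "count": 0.0},
]

# Option 1: keep the column as floats; NaN survives as a real missing value.
as_floats = [r["count"] for r in rows]

# Option 2: cast to int and accept zeros for missing values
# (loses the distinction between "no data" and a genuine 0).
as_ints_with_zeros = [0 if is_missing(r["count"]) else int(r["count"]) for r in rows]

# Option 3: exclude the records whose value is missing.
complete_rows = [r for r in rows if not is_missing(r["count"])]

print(as_ints_with_zeros)                   # [5, 0, 0]
print([r["tract"] for r in complete_rows])  # ['A', 'C']
```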


Gotcha. I will try to figure out how to have the Python script mask them the way they are handled when updating manually, then. The maps that point to this data use the missing values for a specific symbology (census tracts with no data), and I'm trying not to have to mess with the map too much.
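One way the script might do that masking is a sketch like the one below, which assumes the edits are sent as JSON, where a missing value is `null` and NaN has no representation. Before building the features, it replaces NaN with `None`, which Python's `json` module writes as `null`. The records could come from pandas via `df.to_dict("records")`. `build_update_features` and the field names `OBJECTID`/`households` are hypothetical. Whether `edit_features` then stores a true null in this particular hosted table is an assumption worth checking on a copy first.

```python
import json
import math


def nan_to_none(value):
    """Return None for NaN so it serializes as JSON null rather than a number."""
    if isinstance(value, float) and math.isnan(value):
        return None
    return value


def build_update_features(records, int_fields):
    """Turn row dicts into feature dicts of the form {"attributes": {...}}.

    NaN becomes None; other values in int_fields are cast to int so
    whole-number floats like 12.0 go in as 12.
    """
    features = []
    for rec in records:
        attrs = {}
        for key, value in rec.items():
            value = nan_to_none(value)
            if key in int_fields and value is not None:
                value = int(value)
            attrs[key] = value
        features.append({"attributes": attrs})
    return features


records = [
    {"OBJECTID": 1, "households": 12.0},
    {"OBJECTID": 2, "households": float("nan")},
]
features = build_update_features(records, int_fields={"households"})
print(json.dumps(features))
# [{"attributes": {"OBJECTID": 1, "households": 12}}, {"attributes": {"OBJECTID": 2, "households": null}}]

# Hypothetical call; `table` would be the hosted table object from the ArcGIS API for Python:
# result = table.edit_features(updates=features)
```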