Frequency tool creating duplicate entries?

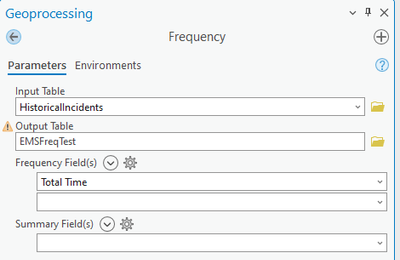

I am working with fire department incident data, and I am trying to determine the frequency of each response time. Response time is stored in a double field and represents seconds (whole numbers). There are over 100k records, and for some reason when I run the Frequency tool, it splits some response times into multiple entries.

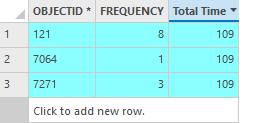

This is creating 1,485 duplicate entries (15,113 unique times, but 16,598 entries), with 1,409 records creating duplicates. For example, in my incident data I have 12 incidents that have a response time of 109 seconds. Running the Frequency tool creates 3 separate records for 109 seconds.

I cannot figure out why it is grouping them this way.

I was able to use the Summary Statistics tool to create a new table that consolidated the duplicates, but I was wondering if anyone knows exactly what went wrong here, or if anyone else is having the same issue.

Do you maybe have some significant-figure display formatting set on that field? E.g., the stored values are actually 109.1, 109.2, 109.4, but they all display as 109.
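
If that is what is happening, a quick sketch in plain Python (no arcpy) shows the effect. The values below are made up for illustration, not taken from your data, and I am assuming the tool groups on the stored double rather than on the displayed number:

```python
from collections import Counter

# Hypothetical stored values for 12 incidents. With a display format of
# zero decimal places, every one of these would appear as 109 in the table.
stored = [109.0] * 5 + [109.2] * 4 + [109.4] * 3

# Grouping on the raw stored doubles: each distinct value gets its own row.
raw_counts = Counter(stored)

# Grouping on whole seconds: round first, then count.
rounded_counts = Counter(round(value) for value in stored)

print(len(raw_counts), "groups on raw values:", dict(raw_counts))
print(len(rounded_counts), "group on rounded values:", dict(rounded_counts))
```

The raw grouping gives three rows that would all look like 109, while the rounded grouping gives a single row with a count of 12. If your field behaves this way, calculating a rounded integer field and running Frequency on that should collapse the duplicates.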