
Why does ArcGIS Pro have to be so slow???


Why is ArcGIS Pro so slow? To select assets, field calculate, display layers, change symbology... the easiest of tasks that are commonly utilized within ArcMap are a drag on the software.

When will ArcGIS Pro become faster than ArcMap? That will be the day it can replace it as the go-to product for GIS professionals.

---

I just did a Pro training course. For simplicity, I took our (admittedly elderly) dual 2.2GHz, 4GB Windows 10 laptop. It has run Desktop (many versions) perfectly fine for years, along with a suite of other GIS/DB tools, and Pro seemed to work okay in some basic testing after installation.

Once I loaded up some tutorial data for the exercises, it was agonising: 99+% CPU usage, most of the GPU, and perilously close to thrashing the page file (I shut down everything in Windows that could be shut down), with a consistent 1.5-2GB of RAM in use. It was basically unusable, with 1-2 minute waits to do anything in Windows, and I could see Pro literally rendering every object, second by second.

Sometimes it just gave up and said "meh, close enough for government work".

This was with a few tiny tutorial datasets and the web basemap/hillshade.

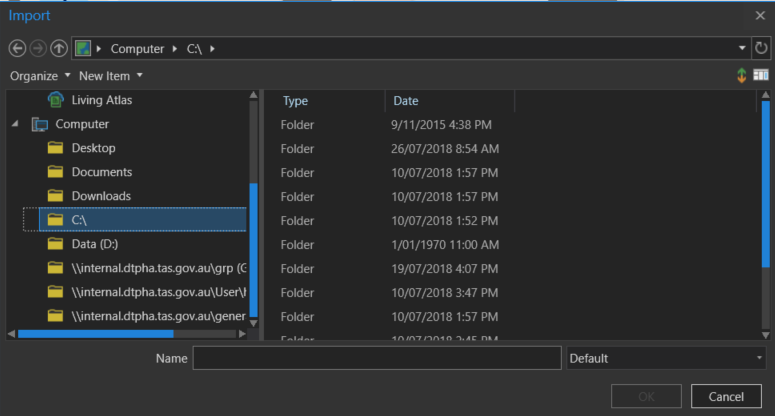

I didn't even dare try 3D, seeing as Pro couldn't even handle a file explorer dialog. After falling further and further behind the class simply because Pro does not run on a Desktop-capable computer, I gave up and switched to my workstation (2014 quad 3.4GHz, 16GB), on which it was fine, although the NVIDIA Quadro struggled a bit with frame rates in dynamic 3D.

Looking at what it was doing, I think Pro spins up a large number of background processes/services that eat up resources (geoprocessing handler, job handler, etc.). I could also see it dynamically building displays, panes and elements, sometimes rejigging them in real time as things opened and changed to get the desired layout, so there is a lot of work going on under the hood that is much more demanding and sophisticated than in Desktop. Every time you click on something, it runs through a whole set of rendering/workflow/data management dependencies.

Take something as simple as opening an attribute table. In Desktop: bang, open a pane with the first X lines, done. In Pro it was doing some kind of indexing, analysis, and calculation of font sizes, column widths, etc. to make it all 'look nice' before opening the table, and then, depending on docking, it had to resize and re-render multiple other objects as well.

Short story: unless you have a fairly new computer, expect to have to upgrade it to run Pro.

---

"For example even something simple like opening an attribute table. In Desktop, bang, open pane with first X lines, done. In Pro it was doing some kind of indexing, analysis, calculating different font sizes, column width etc to make it all 'look nice' before opening the table, and then of course depending on docking it had to resize multiple other objects and re-render them as well."

This is precisely my experience as well. But it's not only because you have a slow computer.

Even on a very fast computer, it runs slowly. As you mentioned, Pro seems to need to re-render and reprocess everything any time you click. I am running an Intel i7 2.8GHz with 16GB RAM and an NVMe SSD, and I get the spinning wheel any time I click something in Pro (and this is the second computer I've run Pro on). This is not acceptable.

---

Are you able to submit that very obvious performance issue to tech support? I see pretty much the same thing.

---

Will do

---

I'll just add that both computers mentioned above have standard (not premium) SATA SSDs, and all the data, the OS and the temp files were on them, not on a spinning platter.

We're looking to go to M.2 NVMe for our next hardware upgrade, based on where high-end gaming is going. How do you find this works in a GIS environment?

---

It doesn't. Having to buy a gaming computer to run GIS software that previously didn't need one is "unsustainable", to quote the folks I had to ask for the money to buy the computers. To your specific question, I think you'll get more ROI from a faster processor/more cores than from a high-end NVMe drive.

---

Oh, of course it's CPU-bound; I could see that easily. I was interested in how you found the technology compared to contemporary storage.

We've had the same issues with cost. Senior managers have difficulty understanding that a bog-standard micro desktop suitable for government form-fillers with light Excel, Word and Outlook use is completely useless for GIS/geoprocessing. We've actually had replacement requests knocked back because we "don't need a gaming/multimedia laptop", or even "you don't need a laptop, you can use a desktop" (for field work?), and had to escalate back up the chain to get the hardware needed for the job.

Even ICT doesn't get GIS and big data/processing requirements. In the last PC rollout they dodged the question of backups, and when I complained (while they were decommissioning machines) that we had sixty 1TB drives to copy, they said "there isn't enough network capacity for that, just back up your data to USB thumb drives". *snort* Sure....

---

Replying to myself, how narcissistic!

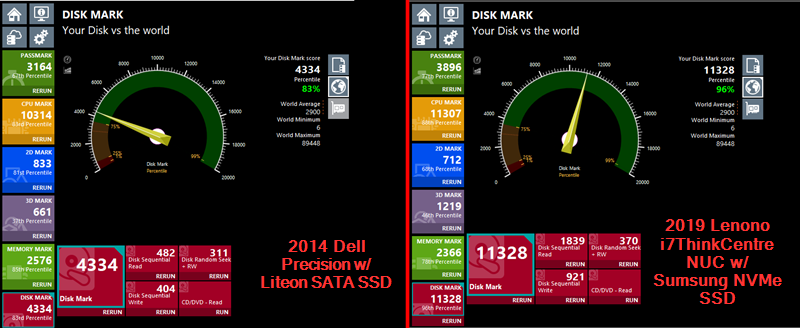

Ego aside, here's an update for those interested in the hardware side of Pro requirements. We're about to replace our Dell Precision quad-3.4GHz 16GB with a Lenovo ThinkCentre hex-2.4GHz 16GB (don't ask why we are 'sidegrading'; public service).

Of particular interest is the comparison between the 2014-era consumer Liteon 256GB SSD on SATA and the 2019 prosumer Samsung 500GB SSD on NVMe.

I won't go into the other benchmarks, but they are roughly comparable, as shown. As you can see above, even though Passmark is just a synthetic benchmark, the performance difference between the two SSD models is dramatic, and it stays the same regardless of the other hardware configurations (all the Lenovos had the same class of drive), as shown below.

This results in a smoother and more responsive experience in Pro, making up for some of the system's possible disadvantages. Based on this and initial testing, I would highly recommend adopting M.2 NVMe in your next scheduled upgrade. I still haven't rebuilt my gaming rig (shame, all I can run is Warframe. Badly), but I'll update you with the results, although that example won't be so clear-cut, as there are more variables beyond the primary drive being changed in support of *ahem* real-time dynamic rendered entertainment suites.
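If you want a rough do-it-yourself sanity check of your own drives alongside Passmark, here is a minimal Python sketch that times one sequential write to a temporary file and reports MB/s. This is my own illustration, not how Passmark measures anything: the function name `sequential_write_mbps` and its defaults are hypothetical, it only covers sequential writes (not the random small-block access that also matters for GIS work), and the result includes filesystem overhead.

```python
import os
import tempfile
import time


def sequential_write_mbps(directory: str, size_mb: int = 256, block_kb: int = 1024) -> float:
    """Rough sequential-write throughput (MB/s) of the drive holding `directory`.

    Hypothetical helper for illustration only; not a Passmark equivalent.
    """
    block = os.urandom(block_kb * 1024)  # random bytes so compression can't flatter the result
    n_blocks = (size_mb * 1024) // block_kb
    fd, path = tempfile.mkstemp(dir=directory)
    try:
        start = time.perf_counter()
        with os.fdopen(fd, "wb") as f:
            for _ in range(n_blocks):
                f.write(block)
            f.flush()
            os.fsync(f.fileno())  # force data to the device so we don't just time the OS cache
        elapsed = time.perf_counter() - start
    finally:
        os.remove(path)  # clean up the temporary test file
    return (n_blocks * block_kb / 1024) / elapsed


if __name__ == "__main__":
    # Point this at a folder on the drive you want to test (defaults to the temp folder).
    print(f"{sequential_write_mbps(tempfile.gettempdir()):.0f} MB/s")
```

Run it a few times per drive and compare the spread rather than a single number; caches, drive temperature and (for QLC drives) how full the drive is can all shift the result.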

Finally, if you don't have the opportunity to move to NVMe and must stay with SATA (for example, because your computer has no M.2 support), be aware that not all 'standard' SSDs are the same. Samsung's (and others') drives are built with different technology; the big difference seems to be that the QLC NAND flash in the Samsung QVO is cheaper but less durable than the TLC NAND flash in the Samsung EVO, and maximum speed also drops.

Ref. "MLC vs TLC vs QLC: Why QLC Matters"

tl;dr: Replace your old SSDs with TLC SSDs for maximum performance and durability. For Samsung, this is the EVO gamer-spec model, not the QVO desktop model.

This article was not sponsored or endorsed by any of the manufacturers mentioned and remains my subjective and probably worthless opinion only.

Edit: Added a world benchmark link for the Liteon in case you thought I'd accidentally benched my optical drive (yes, the Dell Precision has one). Who knew Liteon SSDs were even a thing? Surprise!

---

ESRI, it's a shame that we have to have discussions like this four years after the product was introduced! Not all of us users have the time, opportunity, clout or privilege to test and choose hardware. Most of us work with what's available. With ArcMap, all we had to ask our IT for was "Give me enough RAM" (if that). I have stronger words to express my feelings, but...

---

This forum thread is like an echo chamber. But it would be really interesting to know how, or if, ESRI is going to address this problem. I wonder whether anyone will address it at the User Conference.