
Specify extents in Python for the Export Training Data for Deep Learning command


I have written Python code to run the "Export Training Data for Deep Learning" tool several times, with a different extent each time. I am not used to changing environment settings and am also a beginner with Python, but I am very familiar with the "Export Training ..." tool. I have not been able to get the tool to run with the extent I specify; it always runs with the default extent. When I ran the tool through the geoprocessing tab instead of in Python, it processed only the extent I wanted.

I have tried two ways to set the extent. Before this block of code I have already defined Xmin, Ymin, Xmax, and Ymax, along with all my variables for the "Export Training ..." tool. Here are the two lines of code for the first method I tried:

```python
arcpy.env.extent = arcpy.Extent(Xmin, Ymin, Xmax, Ymax)

arcpy.ia.ExportTrainingDataForDeepLearning(in_raster, [...] )
```

Here is the code for the second method:

```python
with arcpy.EnvManager(extent=str(Xmin)+" "+str(Ymin)+" "+str(Xmax)+" "+str(Ymax)):

    # Below I print the extent string to check that it is in the format I expect
    # It prints the way I expect it to
    print("extent="+str(Xmin)+" "+str(Ymin)+" "+str(Xmax)+" "+str(Ymax))

    arcpy.ia.ExportTrainingDataForDeepLearning(in_raster, [...] )
```
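Before digging deeper, it may help to confirm that Xmin, Ymin, Xmax and Ymax are in the right order and actually overlap the raster, in the raster's own coordinate system. The sketch below is a hypothetical pure-Python check: `build_extent_string` and `overlaps` are illustrative names, not arcpy functions, and the raster bounds in the demo are made up. Inside ArcGIS Pro you could read the real bounds from `arcpy.Describe(in_raster).extent` (its `XMin`, `YMin`, `XMax`, `YMax` properties).

```python
def build_extent_string(xmin, ymin, xmax, ymax):
    """Return "xmin ymin xmax ymax", the space-separated form passed to arcpy.EnvManager(extent=...)."""
    if xmin >= xmax or ymin >= ymax:
        raise ValueError(f"Invalid extent: ({xmin}, {ymin}) must be below and left of ({xmax}, {ymax})")
    return " ".join(str(v) for v in (xmin, ymin, xmax, ymax))


def overlaps(inner, outer):
    """Return True if two (xmin, ymin, xmax, ymax) boxes share any area.

    Both boxes are assumed to be in the same coordinate system;
    this helper does not project anything.
    """
    ixmin, iymin, ixmax, iymax = inner
    oxmin, oymin, oxmax, oymax = outer
    return ixmin < oxmax and ixmax > oxmin and iymin < oymax and iymax > oymin


if __name__ == "__main__":
    requested = (186250, 1556250, 302500, 1767500)
    # Made-up raster bounds for illustration. In ArcGIS Pro you might use:
    #   ext = arcpy.Describe(in_raster).extent
    #   raster_bounds = (ext.XMin, ext.YMin, ext.XMax, ext.YMax)
    raster_bounds = (100000, 1500000, 400000, 1800000)
    print(build_extent_string(*requested))
    print("Overlaps raster:", overlaps(requested, raster_bounds))
```

If the check fails, the extent values are the first thing to fix, regardless of which of the two methods above is used to set them.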

Here is the code from the run that worked when I used the geoprocessing window:

```python
with arcpy.EnvManager(extent="186250 1556250 302500 1767500"):
    arcpy.ia.ExportTrainingDataForDeepLearning(
        "Extract Bands_Gr1_03E", r"D:\LHD_GIS\ResearchAttempt4\TestExports\Dam58_03",
        "LHD_Poly_all_TileName", "TIFF", 1024, 1024, 128, 128,
        "ONLY_TILES_WITH_FEATURES", "PASCAL_VOC_rectangles", 0, None, 0, None, 45,
        "MAP_SPACE", "PROCESS_AS_MOSAICKED_IMAGE", "NO_BLACKEN", "FIXED_SIZE")
```
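Since the `arcpy.EnvManager` form above is the one that worked, one way to run the tool several times is to loop over a list of extents and open a new `EnvManager` for each run. This is a minimal sketch, assuming the extents are already in the raster's coordinate system; `export_for_extents`, its parameters and the `extent_01`, `extent_02`, ... folder names are hypothetical. The remaining tool parameters are passed positionally in the same order as the working call, and `arcpy` is imported inside the function only so the helper can be loaded outside ArcGIS Pro.

```python
import os


def export_for_extents(in_raster, in_class_data, out_root, extents, tool_args):
    """Run Export Training Data for Deep Learning once per extent.

    extents   -- list of (xmin, ymin, xmax, ymax) tuples in the raster's coordinate system
    tool_args -- the remaining positional tool parameters, starting at the
                 image chip format ("TIFF", 1024, 1024, ...)
    Returns the list of output folders passed to the tool.
    """
    # arcpy is only importable inside ArcGIS Pro's Python environment.
    import arcpy

    out_folders = []
    for i, (xmin, ymin, xmax, ymax) in enumerate(extents, start=1):
        extent = f"{xmin} {ymin} {xmax} {ymax}"
        # Hypothetical naming scheme: one sub-folder per extent.
        out_folder = os.path.join(out_root, f"extent_{i:02d}")
        # EnvManager applies the extent only to code inside the with block,
        # so the tool call must be indented under it.
        with arcpy.EnvManager(extent=extent):
            arcpy.ia.ExportTrainingDataForDeepLearning(in_raster, out_folder, in_class_data, *tool_args)
        out_folders.append(out_folder)
    return out_folders


# Example call inside ArcGIS Pro, reusing the parameters from the working run:
# tool_args = ("TIFF", 1024, 1024, 128, 128, "ONLY_TILES_WITH_FEATURES",
#              "PASCAL_VOC_rectangles", 0, None, 0, None, 45, "MAP_SPACE",
#              "PROCESS_AS_MOSAICKED_IMAGE", "NO_BLACKEN", "FIXED_SIZE")
# export_for_extents("Extract Bands_Gr1_03E", "LHD_Poly_all_TileName",
#                    r"D:\LHD_GIS\ResearchAttempt4\TestExports",
#                    [(186250, 1556250, 302500, 1767500)], tool_args)
```

Writing each run to its own folder keeps the outputs of different extents from mixing, which also makes it easy to check that each run only covered its own area.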

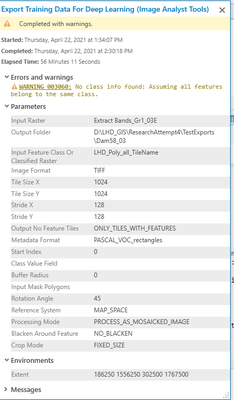

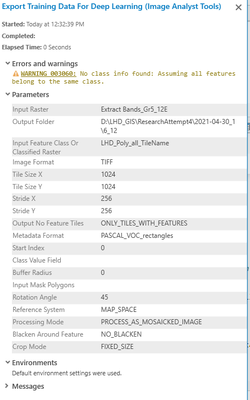

The tool runs perfectly except that it still uses the default extent. So far I haven't let the loop run the command a second time, because it takes 4 hours to run on the entire raster rather than on the extent I want. Any ideas would be much appreciated! I have ArcGIS Pro 2.7.3. Here are some screenshots.

Here is the report from the history window for the run that worked from the geoprocessing window:

This is my most recent run (currently being processed), which should have the extent set:


Accepted Solutions


The two examples below work for me with my data:

```python
import arcpy

arcpy.env.extent = arcpy.Extent(468500, 7864000, 469000, 7864500)

arcpy.ia.ExportTrainingDataForDeepLearning(
    "input.tif", r"C:\deep_learning\output\export1", "Training_Data", "TIFF", 256, 256, 0, 0,
    "ONLY_TILES_WITH_FEATURES", "PASCAL_VOC_rectangles", 0, "Classvalue", 0, None, 0,
    "MAP_SPACE", "PROCESS_AS_MOSAICKED_IMAGE", "NO_BLACKEN", "FIXED_SIZE")
```

```python
import arcpy

arcpy.env.extent = "468500 7864000 469000 7864500"

arcpy.ia.ExportTrainingDataForDeepLearning(
    "input.tif", r"C:\deep_learning\output\export2", "Training_Data", "TIFF", 256, 256, 0, 0,
    "ONLY_TILES_WITH_FEATURES", "PASCAL_VOC_rectangles", 0, "Classvalue", 0, None, 0,
    "MAP_SPACE", "PROCESS_AS_MOSAICKED_IMAGE", "NO_BLACKEN", "FIXED_SIZE")
```

When you have these sorts of problems, it is always good to test with the simplest possible set of inputs. I suggest creating a test feature class with just 2 polygons in opposite corners of your image. Then try to export the training data for just one of them using the extent parameters. Also try doing it exactly as above, without using variables.
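To plan the two-polygon test just described, you need a small box in each of two opposite corners of the image and an extent that contains only one of them. A minimal pure-Python sketch, assuming you supply the image bounds yourself (for example from `arcpy.Describe("input.tif").extent`); `two_corner_test` and its `size` parameter are hypothetical names. It uses the lower-left quarter of the image as the test extent rather than the box itself, so the extent is comfortably larger than the polygon.

```python
def two_corner_test(xmin, ymin, xmax, ymax, size):
    """Plan a two-polygon extent test.

    Returns (lower_left_box, upper_right_box, test_extent), each as
    (xmin, ymin, xmax, ymax). The boxes are size x size squares in
    opposite corners of the image; test_extent is the lower-left
    quarter of the image, which contains the first box but not the second.
    """
    width, height = xmax - xmin, ymax - ymin
    if size <= 0 or size >= min(width, height) / 2:
        raise ValueError("size must be positive and less than half the image width and height")
    lower_left = (xmin, ymin, xmin + size, ymin + size)
    upper_right = (xmax - size, ymax - size, xmax, ymax)
    test_extent = (xmin, ymin, xmin + width / 2, ymin + height / 2)
    return lower_left, upper_right, test_extent


if __name__ == "__main__":
    # Bounds taken from the example extent above, purely for illustration.
    box_a, box_b, extent = two_corner_test(468500, 7864000, 469000, 7864500, size=50)
    print("Draw polygons at:", box_a, box_b)
    print("Extent string:", " ".join(str(v) for v in extent))
```

If the export run with that extent only produces chips around the first polygon, the extent setting is being honoured; if chips for both polygons appear, it is not.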

Also, one reason it may be taking 4 hours is that you have set the rotation angle to 45 degrees. For each image chip it creates, the tool will create an additional 7 rotated chips (going around in 45-degree increments), which is 8 chips per location in total, so it could take up to roughly 8 times as long. This will also make your deep learning training much longer. The usual way to handle this is to tell the training step to transform your input images by rotating them randomly. That way you get the benefits of more training data without pushing huge numbers of samples through the model.
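The arithmetic behind that estimate, as a minimal sketch: it assumes the tool keeps the unrotated chip and adds one chip per further step of `rotation_angle` until it reaches 360 degrees. The function name is hypothetical. With 45 degrees that gives 8 chips per location: the unrotated one plus 7 rotated ones.

```python
import math


def chips_per_location(rotation_angle):
    """Chips produced per location for a given rotation angle (degrees).

    Assumes one unrotated chip at 0 degrees plus one chip for each further
    multiple of rotation_angle below 360 degrees; 0 means no rotation.
    """
    if rotation_angle == 0:
        return 1
    if not 0 < rotation_angle < 360:
        raise ValueError("rotation_angle must be between 0 and 360 degrees")
    return math.ceil(360 / rotation_angle)


if __name__ == "__main__":
    for angle in (0, 90, 45):
        print(angle, "degrees ->", chips_per_location(angle), "chips per location")
```

Under that assumption, switching from 45 degrees to 0 would cut the number of exported chips by a factor of 8, though actual run time also depends on I/O and tile size.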

And one more thing from that warning message: it's probably a good idea to define a class value for your data, in case leaving it empty causes other problems later. Just add a field called Classvalue (type Long) to your polygons and set it to 1. Then choose that field as the class value field in the tool parameters.
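Those two steps can be scripted with `arcpy.management.AddField` and `arcpy.management.CalculateField`. A minimal sketch, assuming the polygon layer or feature class is the one used as the training data input; `add_class_value_field` is a hypothetical helper name, and `arcpy` is imported inside it only so the function can be loaded outside ArcGIS Pro.

```python
def add_class_value_field(feature_class, field_name="Classvalue", value=1):
    """Add a LONG class-value field to a polygon feature class and set every row to `value`."""
    # arcpy is only importable inside ArcGIS Pro's Python environment.
    import arcpy

    arcpy.management.AddField(feature_class, field_name, "LONG")
    # A constant Python 3 expression sets the same value on every row.
    arcpy.management.CalculateField(feature_class, field_name, str(value), "PYTHON3")
    return feature_class


# Example inside ArcGIS Pro:
# add_class_value_field("LHD_Poly_all_TileName")
# then pass "Classvalue" as the class value field (the 12th positional
# argument, where the original call has None).
```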


Thank you @Tim_McGinnes! I will try your suggestions when I return to the office; it all sounds like good advice. I especially appreciate the suggestion to randomly rotate the images when I train the model instead of exporting them with 45-degree rotations. Could you help me find how to do that? I've never seen that option in ArcGIS Pro before.