Using ODBC data sources in ArcGIS Pro - optionally including .accdb

GeoNet Ideas contains many customer requests for ODBC connectivity from Pro to databases that are not supported ArcGIS workspaces. This blog is about implementing a read-only import of ODBC data sources into a geodatabase. See also the paragraph titled 'Update' near the end for a simpler way to move data between formats using the same underlying approach.

Thumbnail: We'll use a scripted approach, creating a Python script tool in a Pro toolbox. You could also make this a standalone script and run it as a scheduled task. The coding isn't scary. You'll need permission to create an ODBC data source on your computer, and if you need to publish this to ArcGIS Enterprise, the data source will need to be set up there too. If multiple users need to use the tool on your machine, the ODBC data source will need to be a system one rather than a user-level one. Off we go...

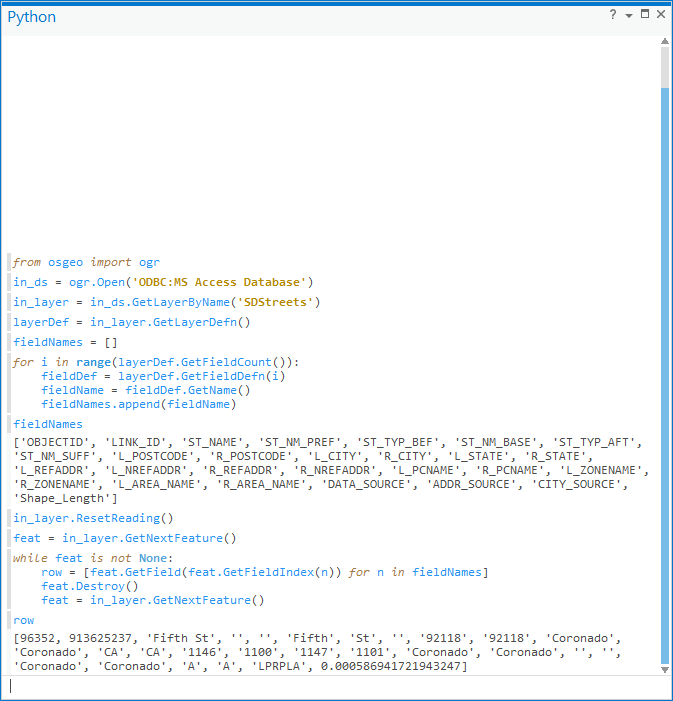

The ArcGIS Pro Python environment ships with a module named osgeo, from the OSGeo organization. This supplies the GDAL libraries, which support conversion between hundreds of geospatial formats. One of the supported sources is ODBC, which isn't a 'format' of course, but it handles moving tabular data around.

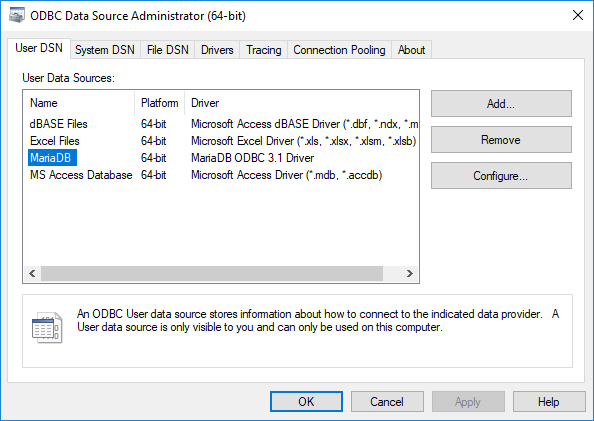

For my example source I chose MariaDB, a drop-in replacement for MySQL. After installing MariaDB and the appropriate 64-bit ODBC driver, I imported some CSV data and created a user-level ODBC data source in Windows. Here is how the admin tool looks (click on images to enlarge them):

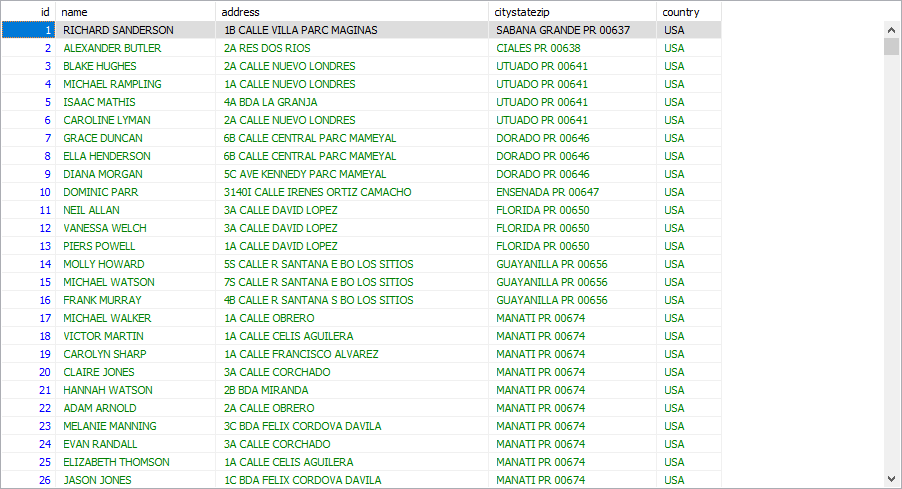

MariaDB ships with a handy administration utility - HeidiSQL - here is how the Data view of my target data looks in HeidiSQL (the names and addresses are made up):

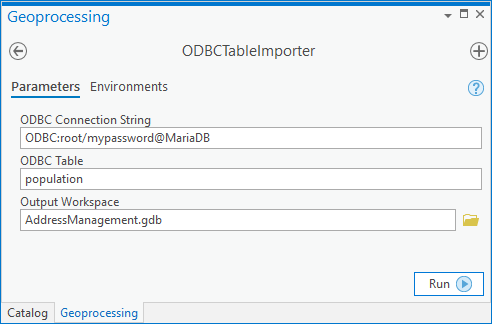

So that's my target data. Now, how to get at it? To understand what the osgeo module needs to connect to my ODBC source, I researched the relevant vector driver. So far so good. After a little more surfing through some examples and the API of the osgeo.ogr submodule, the parts became apparent. Next step - code it! Here is the result in my project:

The blog download has the tool and source, plus the CSV data I used. Disclaimer: this is a very simple example without any defensive code to handle variability in the input data. The idea is to give you confidence you can script a repeatable workflow.
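The tool's source lives in the download rather than on this page, so as a rough idea of the connection step, here is a minimal sketch. It is not the code from the download. It assumes the documented OGR ODBC datasource name form `ODBC:userid/password@dsn,table_list`; the function names, the DSN `mariadb_demo` and the table `customers` are hypothetical placeholders.

```python
def odbc_connection_string(dsn, user=None, password=None, tables=None):
    """Build an OGR ODBC datasource name: ODBC:[user[/password]@]dsn[,table,...]."""
    credentials = ""
    if user:
        credentials = user if not password else f"{user}/{password}"
        credentials += "@"
    name = f"ODBC:{credentials}{dsn}"
    if tables:
        # Listing tables limits what OGR exposes as layers.
        name += "," + ",".join(tables)
    return name


def describe_table(connection, table):
    """Return [(field_name, ogr_type_name), ...] for one table in the ODBC source."""
    # Imported here so the string helper above works without GDAL installed.
    from osgeo import ogr

    ogr.UseExceptions()
    source = ogr.Open(connection)
    if source is None:
        raise RuntimeError(f"Could not open {connection}")
    layer = source.GetLayerByName(table)
    if layer is None:
        raise ValueError(f"Table {table} not found in {connection}")
    definition = layer.GetLayerDefn()
    fields = []
    for index in range(definition.GetFieldCount()):
        field = definition.GetFieldDefn(index)
        fields.append((field.GetName(), field.GetTypeName()))
    return fields
```

With a data source set up as described above, `describe_table(odbc_connection_string("mariadb_demo"), "customers")` would list the field names and OGR type names you need to build the output table.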

How did I do? I ran the tool:

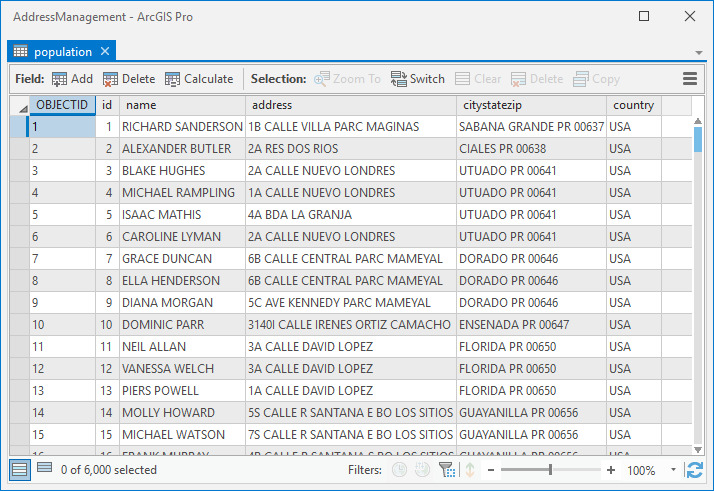

...and the output table is created in my project home geodatabase.

Success! I imported 6000 rows in about 8 seconds. So that is the pattern I wanted to show. The approach will handle more data types than just the string and integer values I used, and it is quite likely the part of my code where I map OGR field types to ArcGIS field types has issues. Please do comment in this blog space on your challenges and successes.
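To make the field type mapping step concrete, here is one possible lookup, sketched under assumptions and not taken from the tool in the download. The keys are the type names OGR reports for a field (for example from `FieldDefn.GetTypeName()`), the values are field type keywords accepted by the Add Field geoprocessing tool, and the choices for `Integer64` and for unmapped types are my own conservative guesses.

```python
# OGR field type name -> ArcGIS field type keyword (a hedged, partial mapping).
OGR_TO_ARCGIS = {
    "Integer": "LONG",
    "Integer64": "DOUBLE",  # assumption: avoids relying on big integer support
    "Real": "DOUBLE",
    "String": "TEXT",
    "Date": "DATE",
    "DateTime": "DATE",
    "Binary": "BLOB",
}


def arcgis_field_type(ogr_type_name, default="TEXT"):
    """Return an ArcGIS field type keyword, falling back to TEXT for unmapped types."""
    return OGR_TO_ARCGIS.get(ogr_type_name, default)
```

Falling back to TEXT means list types and time-only values survive as strings instead of failing the import, at the cost of losing their native type.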

Now for the optional extra - Access databases!

I have 64-bit Office on my machine, and I also have Microsoft Access Runtime 2013 installed. I'm not entirely sure whether both are needed or just one, but my ODBC data source options include .mdb and .accdb. Otherwise the pattern for reading Access databases is the same as above. I configured an ODBC MS Access Database connection in the 64-bit ODBC administrator to connect to an .accdb database on disk. I possibly should have added a new one and given it a descriptive name, but you get the idea. From there it is just like any other ODBC source, except that it depends on 64-bit Office and/or the runtime driver.

Update: I created this sample with only a rudimentary knowledge of what the OGR drivers delivered with the osgeo module can do. It is way easier to just copy an OGR layer to an OpenFileGDB layer than to create a table or feature class with ArcPy and write into it with a cursor. Re-purpose the approach I describe in the comments of the post below about the OpenFileGDB driver:
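As a rough illustration of the layer copy idea, here is a minimal sketch. It is not the code from the linked post, and it assumes the GDAL build in your Pro environment has write support in the OpenFileGDB driver (added in GDAL 3.6, so older Pro releases may not have it). The function name and its arguments are hypothetical.

```python
import os


def copy_table_to_gdb(connection, table, gdb_path, out_name=None):
    """Copy one table from an OGR source into a file geodatabase via OpenFileGDB."""
    if not gdb_path.lower().endswith(".gdb"):
        raise ValueError("gdb_path must point to a .gdb folder")

    # Imported after validation so bad paths fail fast, even without GDAL.
    from osgeo import ogr

    ogr.UseExceptions()
    source = ogr.Open(connection)
    if source is None:
        raise RuntimeError(f"Could not open {connection}")
    layer = source.GetLayerByName(table)
    if layer is None:
        raise ValueError(f"Table {table} not found in {connection}")

    driver = ogr.GetDriverByName("OpenFileGDB")
    if driver is None:
        raise RuntimeError("OpenFileGDB driver is not available in this GDAL build")
    if os.path.exists(gdb_path):
        target = driver.Open(gdb_path, 1)  # 1 = open for update
    else:
        target = driver.CreateDataSource(gdb_path)

    # CopyLayer creates the schema and writes the rows in one call.
    target.CopyLayer(layer, out_name or table)

    # Releasing the references closes the datasets and flushes the output.
    target = None
    source = None
```

Compared with the cursor approach, no field type mapping is needed here because the driver translates the OGR schema itself.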
